Luma Uni-1.1 API: Top-3 Model Hits 8 Creator Tools

Luma's Uni-1.1 API hits 8 launch partners with a top-3 model, 9-reference generation, and native non-Latin script rendering at sub-half-price economics.

Midjourney V8.1 ships April 30, 2026 on Discord and the web, with HD mode 3x faster and 3x cheaper, standard mode 50% faster, and stable style references and Moodboards.

Freepik officially rebranded as Magnific on April 28, 2026, hitting $230M ARR with no outside investment. Inside the model-agnostic aggregator bet, the no-collar economy, and what 1 million paying subscribers means for creative AI.

Picsart's GenAI CLI bundles 130+ image, video, and audio models with native MCP support, turning creative generation into an agent-callable surface.

SenseTime open-sourced SenseNova U1 on April 28, 2026, releasing three model variants under Apache 2.0. The architecture drops VAEs entirely, unifying image generation and text reasoning in one space.

On April 28, Figma added multiple new ways for designers to supply reference images when using AI to generate or edit images inside Figma.

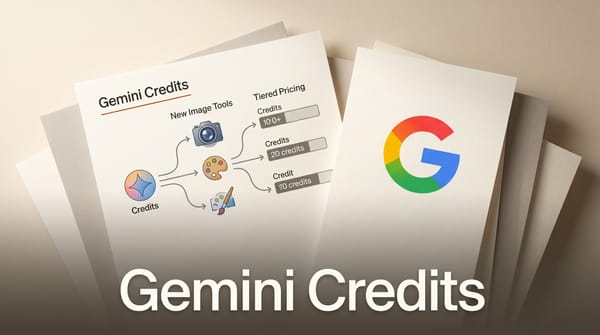

Google is testing a credit-based billing system for Gemini that replaces fixed prompt quotas, plus a new dedicated images section spotted in the Gemini web UI ahead of Google I/O.

Inclusion AI released LLaDA2.0-Uni, a 16B MoE diffusion model that unifies text-to-image generation, instruction-based editing, and image understanding in one Apache-2.0 checkpoint.

ComfyUI v0.19.4 adds OpenAI's GPT Image 2 as a Partner Node -- the first image model to reason before it generates, with pixel-stable editing and batch storyboarding.

OpenAI’s GPT Image 2, the company’s first reasoning-based image model, is now available inside ComfyUI through Partner Nodes, bringing 2K output, precise text rendering, and 8-frame batch consistency to node-based workflows.

ComfyUI added Quiver Arrow 1.1 as a Partner Node on April 20, 2026, bringing structured SVG generation to open-source visual workflows for the first time.

OpenAI has quietly begun rolling out GPT-Image-2 to paid ChatGPT subscribers -- no announcement, no blog post, just a significantly more capable image model showing up in Plus and Pro accounts.

Google launched a native Gemini app for Mac on April 16, 2026, bringing image, video, and music generation directly to the desktop for the first time.

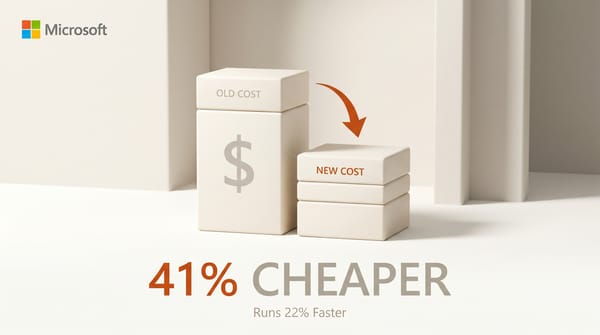

Microsoft launched MAI-Image-2-Efficient on April 14, 2026, a distilled variant of its flagship image model with 41 percent cheaper output tokens and 22 percent faster generation on H100 hardware.

NucleusAI released Nucleus-Image on April 14, a 17-billion-parameter diffusion model that activates only 2 billion parameters per image -- cutting compute without sacrificing quality.

Black Forest Labs declined an xAI partnership and is pivoting to physical AI. Analysis of the 70-person startup behind FLUX, its $300M+ enterprise deals, and what robots mean for creators.

Stability AI launched Brand Studio, an end-to-end creative production platform that trains custom AI models on brand identity and automates campaign asset creation.

Three image generation models appeared on LM Arena under codenames maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. The AI community identified them as OpenAI's unreleased GPT-Image-2.

Meta confirmed it will release open-source versions of its upcoming Mango multimedia generator and Avocado LLM, extending its open-weight strategy to next-gen frontier models.

Microsoft launched three in-house AI models on April 2, reducing its dependence on OpenAI. MAI-Image-2, MAI-Voice-1, and MAI-Transcribe-1 cover image generation, text-to-speech, and speech recognition.

Midjourney, FLUX Pro, and GPT Image 1.5 lead AI image generation in 2026, but the gap between the top tools has narrowed to the point where price, speed, and workflow fit matter more than raw quality.

The most downloaded text-to-image model on HuggingFace is not from OpenAI, Google, or Midjourney. We tracked 50 models, arena ELO rankings, and pricing to find who really leads AI image generation in 2026.

Midjourney has enabled relax mode for V8 Alpha and rolled out a significantly faster style reference system. The update gives Standard, Pro, and Mega subscribers access to unlimited V8 generations through relax queues, alongside a new SREF pipeline that runs 4x faster.

Adobe opened Firefly Custom Models to public beta, letting any Creative Cloud subscriber train a private AI model on their own images for consistent style output.