SenseTime open-sourced SenseNova U1 on April 28, 2026, releasing model weights and inference code under Apache 2.0 across three variants. The release is unusual on two counts. First, it drops the variational autoencoder (VAE) that virtually every diffusion-based image model has used as the bridge between pixel space and the latent space the model operates in. Second, it ships as fully permissive open weights from a major Chinese lab that has historically gated its strongest models behind enterprise licenses.

Background

SenseNova is SenseTime's flagship multimodal model family. Until April 28, the strongest publicly accessible variants were closed APIs aimed at enterprise customers in surveillance, automotive, and retail vision. The U1 release shifts that posture for the image-generation tier: weights, inference code, and a free public playground all dropped on the same day. A distilled 8-step inference checkpoint was added two days later on April 30, cutting generation latency for self-hosted deployment.

The release sits inside a wider 2026 trend of unified image-text models from non-US labs. Earlier in April, Ant Group open-sourced LLaDA 2.0-Uni, a discrete diffusion model that handles text and images in one architecture, and Alibaba launched Qwen-Image 2.0 Pro through its API. SenseNova U1 is the third major Chinese unified image model to reach the open-weights tier in six weeks.

Deep Analysis

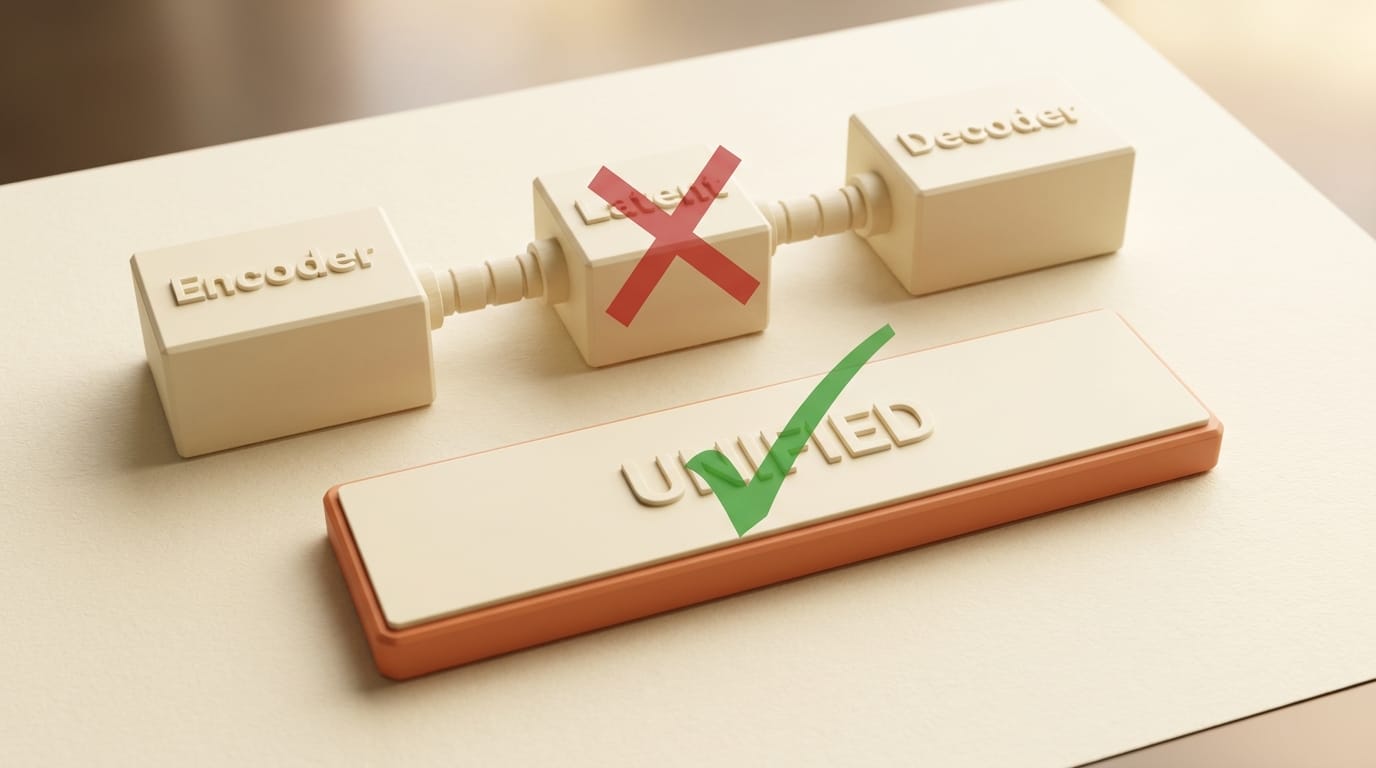

The architectural shift: why dropping the VAE matters

Almost every production image model in wide use today, including Stable Diffusion, FLUX, and SDXL, uses a VAE to compress pixel data into a lower-dimensional latent space. The diffusion model operates in that latent space, then the VAE decodes the final latent back into pixels. The arrangement is computationally efficient but introduces three persistent problems: reconstruction artifacts on fine detail, weak alignment between visual structure and language tokens, and a hard separation between image generation and image understanding that forces models to switch between components when reasoning across modes.

SenseNova U1 is built on what SenseTime calls the NEO-Unify architecture, which removes both the visual encoder and the VAE. Pixels and language tokens share a single representation space from the first training step. The model can generate an image, describe it, edit it, or interleave images and text in a single output sequence without component switching. SenseTime reports a 31.56 PSNR reconstruction quality on standard image benchmarks, comparable to Qwen-Image 2.0 Pro and Seedream 4.5, while preserving the unified text-image reasoning that VAE-bridged models cannot do natively.

The practical consequence shows up in tasks that have historically broken in diffusion pipelines. High-density layout output, including posters, infographics, resumes, and comics, requires the model to place dozens of small text elements with correct spelling and alignment inside a coherent visual composition. VAE-mediated models tend to produce mangled glyphs, misaligned columns, and hallucinated layout relationships because the latent compression discards the fine spatial-textual structure. Unified-space models can plan layout directly in the same representation that produces the pixels.

Three variants and the open-weights bet

The April 28 release ships three variants under Apache 2.0:

- SenseNova-U1-8B-MoT-SFT: 8B dense parameters, instruction-tuned, recommended for end-user image generation

- SenseNova-U1-8B-MoT: 8B base model, intended for fine-tuning and downstream research

- SenseNova-U1-A3B-MoT: 3B active-parameter mixture-of-experts variant for resource-constrained deployment

All three are on HuggingFace with full model cards. The Apache 2.0 license permits commercial use, redistribution, and modification without per-deployment licensing fees, which puts U1 in the same legal tier as other 2026 open-weights image models rather than the more restrictive non-commercial licenses that previously dominated Chinese model releases.

The bet behind the open-weights drop is straightforward. SenseTime's enterprise vision business is mature, and the unified image-generation segment is now too crowded for a closed API to win on capability alone. Open weights let the company seed the architecture inside research labs, hobbyist communities, and downstream products that will fine-tune U1 for vertical applications. The strategy mirrors the path Alibaba took with the early Qwen-Image releases and Mistral took with its open weights: trade short-term API revenue for long-term ecosystem position.

Benchmark positioning against Qwen-Image and Seedream

SenseTime's official benchmarks place U1 within striking distance of Qwen-Image 2.0 Pro and Seedream 4.5 on standard image-generation evaluations, with the unified-architecture variants pulling ahead on text-rendering and layout-density benchmarks where VAE-mediated models historically struggle. An independent analysis at Startup Fortune covers the comparison in depth, including side-by-side outputs on resume layouts, infographic generation, and multi-line poster text where U1's unified representation visibly outperforms VAE-bridged baselines.

The number worth flagging is reconstruction PSNR at 31.56, which is the upper band for current open-weights image models and roughly matches what the strongest closed APIs report. The implication is that the architectural shift does not cost reconstruction quality, which had been the standing objection to VAE-free designs since the early 2024 attempts. SenseNova U1 is the first open-weights demonstration that the unified approach is competitive at production-grade image fidelity.

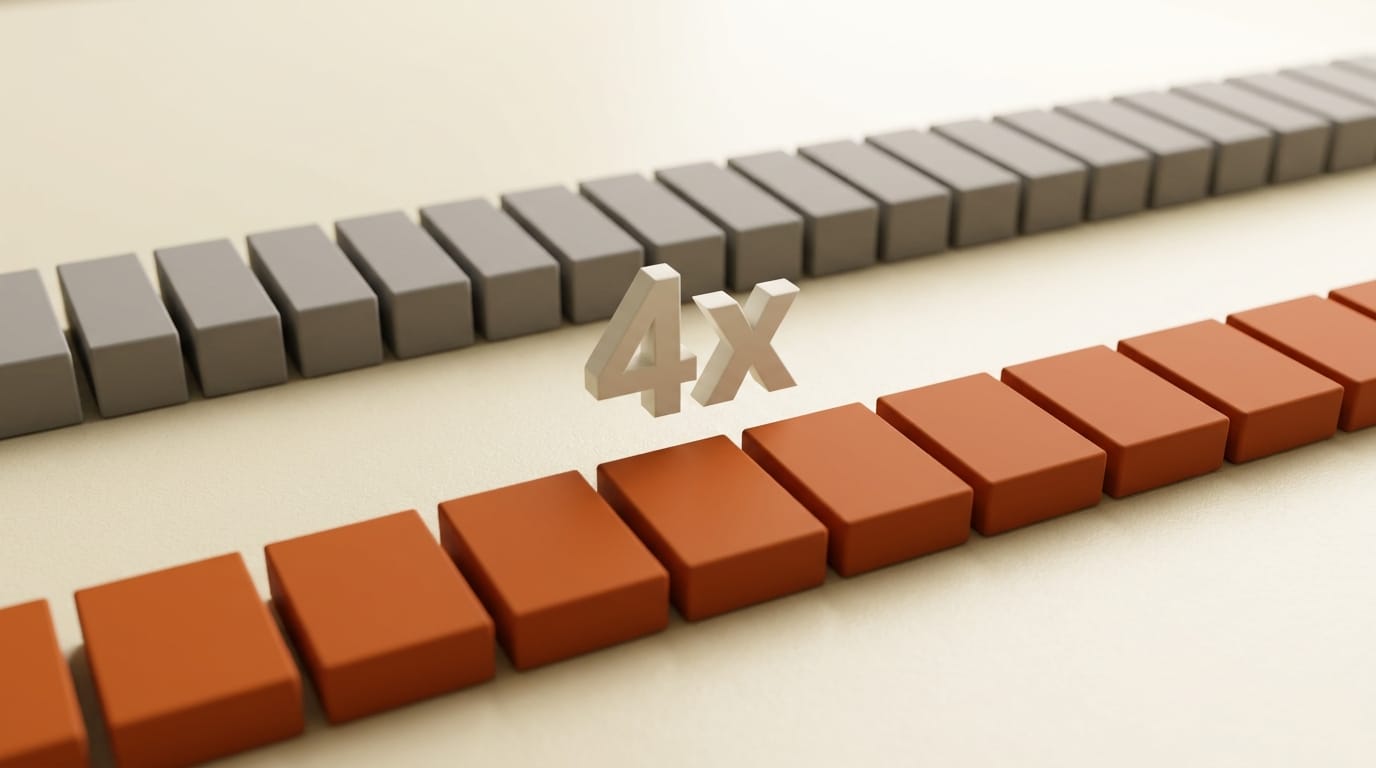

Distillation and the deployment-cost story

The April 30 distilled 8-step inference release is the second half of the strategy. A standard diffusion pipeline runs 25 to 50 denoising steps per image, which sets the compute floor for self-hosted deployment. The 8-step distillation cuts that floor by roughly 4x at minimal quality cost, putting single-GPU consumer-tier deployment within reach for the 8B and 3B-MoE variants.

For creators and small studios running local image generation, this matters more than the headline architecture story. A model that requires an A100 or H100 to run at acceptable latency is effectively a cloud API regardless of license. A model that runs on a consumer 4090 or 5090 at 8 steps per image is a self-hosted tool. SenseNova U1's distilled checkpoint moves it into the second category, alongside the smaller FLUX variants and distilled Stable Diffusion forks that anchor the current self-hosted creative AI stack.

Impact on Creators

For creators producing high-density layout content, SenseNova U1 is the first open-weights model that can credibly handle multi-line text in posters, comic panels, and infographics without the manual touch-up cycle that VAE-bridged models require. The unified image-text reasoning also closes the gap on iterative editing workflows, where a model has to read the current image, understand the requested change, and produce a coherent edit. VAE-free architectures handle that loop in one pass instead of decoding, re-encoding, and reasoning across separate components.

For solo developers and small studios, the Apache 2.0 license eliminates the deployment-licensing friction that has slowed Chinese open-weights adoption in Western markets. U1 can ship inside a commercial product without per-seat fees, region restrictions, or attribution requirements beyond standard Apache notice. The free SenseNova Studio playground at unify.light-ai.top provides a no-setup test path for evaluating the model before committing to local deployment.

The trade-off is the usual one for non-US open-weights releases: the model is trained primarily on Chinese-language and Chinese-context data, which surfaces in subject matter, cultural references, and prompt-following on idiomatic English text. Creators producing English-language layout output should expect to fine-tune or to use U1 as a layout-and-composition base rather than a finished-image generator for Western audiences.

Key Takeaways

- SenseNova U1 is the first open-weights image model to demonstrate that VAE-free unified architectures can match VAE-bridged diffusion on reconstruction quality (31.56 PSNR) while pulling ahead on text-rendering and layout-density tasks

- Three variants under Apache 2.0 cover dense (8B), base (8B), and resource-constrained (3B-MoE) deployment scenarios

- The April 30 distilled 8-step inference checkpoint pulls deployment cost into consumer-GPU range for the 8B and 3B variants

- The release continues the 2026 wave of Chinese open-weights unified image models, after Ant Group's LLaDA 2.0-Uni and Alibaba's Qwen-Image 2.0 Pro

- Apache 2.0 licensing permits commercial use without per-seat fees, removing a long-standing barrier to Chinese open-weights adoption in Western creator products

What to Watch

The first signal to track is fine-tuning activity on the 8B base variant in the next four to six weeks. Open-weights models live or die on the strength of community fine-tunes, and the unified architecture is novel enough that early fine-tuning recipes will not transfer cleanly from FLUX or SDXL. If the HuggingFace community produces three or four strong U1 fine-tunes by mid-June, the architecture will have crossed the threshold from research curiosity to production base model. If fine-tuning stalls, U1 will end up as another well-engineered model that the community could not adapt fast enough.

The second signal is whether the unified architecture starts appearing in non-SenseTime releases. Ant Group's LLaDA 2.0-Uni and SenseNova U1 are independent attempts at the same architectural bet. If a third major lab ships a VAE-free unified model in the next quarter, the standard-architecture assumption that has held since Stable Diffusion 1.5 is genuinely shifting. The candidates worth watching are the next Qwen-Image release, any Mistral image-generation entry, and whatever Black Forest Labs does after FLUX.2.

The third signal is whether SenseTime extends the open-weights treatment to the video and 3D tiers. The U1 release is an image-only model. SenseNova has video and 3D research tracks that have stayed closed. An open-weights U1-Video or U1-3D would be the more disruptive release, and the April 28 image drop is the obvious dry run for it.