On April 14, 2026, NucleusAI released Nucleus-Image, a 17-billion-parameter diffusion model that activates only about 2 billion parameters per forward pass, cutting per-image compute while staying competitive on standard benchmarks. It is the first fully open-source mixture-of-experts (MoE) diffusion model at this performance tier, licensed under Apache 2.0.


What Happened

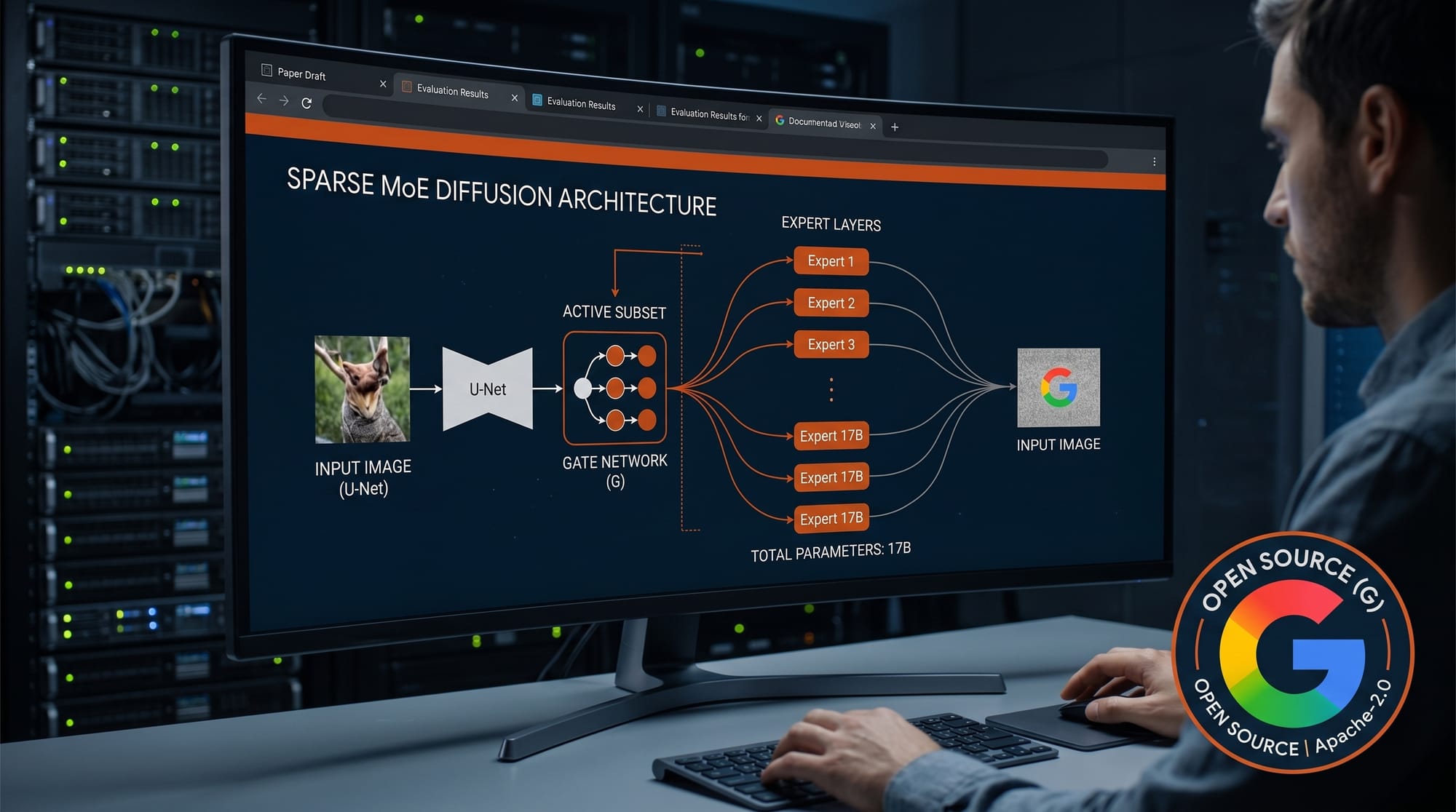

Nucleus-Image was posted to arXiv on April 14, 2026, alongside model weights on HuggingFace. The model uses a sparse mixture-of-experts transformer architecture with 64 routed experts and one shared expert per MoE layer. Each forward pass activates approximately 2 billion of the 17 billion total parameters (about 12%), reducing the per-image compute load significantly compared to dense models of similar quality.

Critically, this is a base model with no post-training fine-tuning applied -- no reinforcement learning, no direct preference optimization, no human preference alignment. The results on standard benchmarks come purely from architecture and training data.

Why It Matters

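Most high-quality open-source image generation models today are dense transformers: all parameters load into GPU memory and run on every forward pass. A 17B dense model needs roughly 34 GB for its weights alone at 16-bit precision and pays the full 17B compute cost on every step. Nucleus-Image changes the compute side of that equation: only about 2B parameters are active per forward pass, so per-step compute resembles a 2B model while output quality competes with much larger dense models. Memory is a different story. Any expert can be selected during a pass, so all 17B weights still have to be held in VRAM or offloaded to CPU memory and swapped in as needed.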
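To put rough numbers on the gap between total and active parameters, here is a back-of-envelope sketch. The parameter counts come from the figures in this article; the 2 bytes per parameter (bf16 or fp16 weights) is an assumption, and the estimate covers weights only, ignoring activations and any other pipeline components.

```python
# Back-of-envelope sizes for a sparse MoE model (weights only).
TOTAL_PARAMS = 17e9    # total parameters, from the article
ACTIVE_PARAMS = 2e9    # approximate parameters used per forward pass
BYTES_PER_PARAM = 2    # assumption: 16-bit (bf16/fp16) weights


def weight_gb(n_params: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Storage for n_params weights, in decimal gigabytes."""
    return n_params * bytes_per_param / 1e9


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

print(f"all weights:      {weight_gb(TOTAL_PARAMS):.0f} GB")
print(f"weights per pass: {weight_gb(ACTIVE_PARAMS):.0f} GB")
print(f"active fraction:  {active_fraction:.1%}")
```

Under these assumptions the script reports about 34 GB for the full weight set, 4 GB touched per pass, and an active fraction near 11.8%. How much of the full set has to sit in VRAM at once depends on how the runtime places or offloads experts.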

For creators running local workflows, this is meaningful. The ComfyUI v0.19.0 release added native diffusers integration, and Nucleus-Image uses the same diffusers pipeline; a standard quick-start is already published in the HuggingFace repo. The Apache 2.0 license permits commercial use, subject to its attribution and notice requirements.

Key Details

- Architecture: Sparse MoE diffusion transformer, 32 layers (29 with sparse MoE)

- Total parameters: 17B across 64 routed experts + 1 shared expert per layer

- Active parameters: ~2B per forward pass (Expert-Choice Routing)

- Benchmarks: Matches or exceeds leading models on GenEval (0.87), DPG-Bench (88.79), and OneIG-Bench (0.522)

- License: Apache 2.0 (commercial use allowed)

- Training: Full training recipe released, making it reproducible

- Inference: Native diffusers integration with Text KV caching for faster generation

- Aspect ratios: Multi-aspect-ratio support out of the box
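The Expert-Choice Routing named in the list above can be sketched in a few lines. This is a generic illustration of the technique (each expert selects its highest-scoring tokens, so load is balanced by construction), not Nucleus-Image's actual router; the toy sizes, the capacity factor, and the function names are all hypothetical.

```python
import math
import random


def softmax(row):
    """Numerically stable softmax over one token's expert logits."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def expert_choice_route(logits, capacity):
    """Expert-choice routing: every expert picks its `capacity` best tokens.

    logits[t][e] is the router score of token t for expert e.
    Returns {expert: [(token_index, gate_weight), ...]}.
    """
    n_experts = len(logits[0])
    probs = [softmax(row) for row in logits]  # normalise over experts, per token
    assignment = {}
    for e in range(n_experts):
        # Rank all tokens by their affinity for this expert, keep the top ones.
        ranked = sorted(range(len(probs)), key=lambda t: probs[t][e], reverse=True)
        assignment[e] = [(t, probs[t][e]) for t in ranked[:capacity]]
    return assignment


# Toy sizes (hypothetical): 16 tokens, 4 routed experts, capacity factor 1.0.
random.seed(0)
n_tokens, n_experts, capacity_factor = 16, 4, 1.0
logits = [[random.gauss(0.0, 1.0) for _ in range(n_experts)] for _ in range(n_tokens)]
capacity = int(n_tokens * capacity_factor / n_experts)  # tokens per expert
routes = expert_choice_route(logits, capacity)

for expert, picks in routes.items():
    print(expert, [t for t, _ in picks])
```

Because each expert fills a fixed quota, a given token may be picked by several experts or by none. In designs with a shared expert, such as the one described here, the shared path would typically still process every token.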

What to Do Next

The model is available now on HuggingFace under Apache 2.0. Load it via the standard diffusers pipeline: DiffusionPipeline.from_pretrained("NucleusAI/Nucleus-Image"). Enable Text KV caching with TextKVCacheConfig() for faster inference. The full paper and training methodology are at arXiv 2604.12163. If you are already running ComfyUI workflows locally, this is a drop-in candidate to benchmark against your current base model. Expect lower compute per denoising step at comparable quality, but measure VRAM on your own hardware: all 17B weights still have to be resident or offloaded, so peak memory will not shrink to 2B-model levels.
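A minimal loading sketch based on the call quoted above. The repo id and DiffusionPipeline.from_pretrained come from this article; the bf16 dtype, the CUDA device, the output filename, the helper name generate_image, and the assumption that the pipeline returns a standard .images list are illustrative and should be checked against the quick-start in the HuggingFace repo. Text KV caching is left out because the article does not show how TextKVCacheConfig is imported or passed.

```python
def generate_image(prompt: str, out_path: str = "nucleus_sample.png") -> str:
    """Generate one image with Nucleus-Image and save it to out_path."""
    # Heavy dependencies are imported lazily so this module loads without them.
    import torch
    from diffusers import DiffusionPipeline

    # Repo id as given in the article; bf16 is an assumption to keep weights 16-bit.
    pipe = DiffusionPipeline.from_pretrained(
        "NucleusAI/Nucleus-Image",
        torch_dtype=torch.bfloat16,
    )
    pipe = pipe.to("cuda")  # assumption: a CUDA GPU with enough memory

    # Assumption: the pipeline follows the usual text-to-image convention of
    # returning an object whose .images list holds PIL images.
    image = pipe(prompt=prompt).images[0]
    image.save(out_path)
    return out_path
```

Calling generate_image("a red bicycle leaning against a brick wall") would download the weights on first use, so expect a long initial run and plan disk space accordingly.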