Three image generation models appeared on LM Arena over the weekend under the codenames maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. Within hours, the AI community identified them as OpenAI's unreleased GPT-Image-2, a next-generation image model built on an entirely new architecture separate from GPT-4o.

What Happened

OpenAI deployed the three model variants into LM Arena's blind testing environment, where users evaluate image quality without knowing which model produced each output. The models were pulled within hours of being identified, but not before testers documented significant improvements over the current GPT-Image-1 model. Some ChatGPT users also reported gaining direct access to the new model through A/B testing. This mirrors OpenAI's approach in December 2025, when the codenames Chestnut and Hazelnut preceded the GPT-Image-1.5 release.

Why It Matters

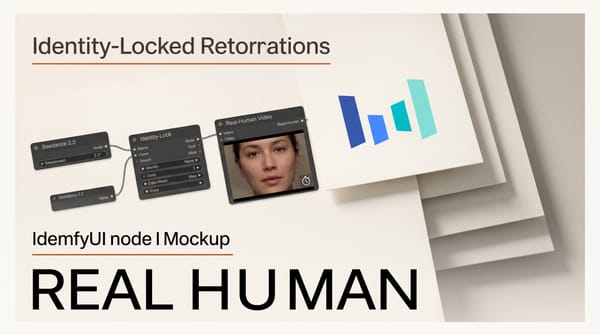

Early testers highlighted three breakthroughs that address longstanding weaknesses in AI image generation. Text rendering now works accurately within scenes, with correctly spelled button text, labels, and signage sitting naturally inside compositions. The persistent yellow color cast that plagued GPT-Image-1 outputs appears to be eliminated. World knowledge has improved substantially, meaning the model generates contextually accurate scenes without exhaustive prompt engineering.

The timing is notable. OpenAI shut down Sora on March 24, freeing significant compute resources. GPT-Image-2 is not based on the 4o architecture, suggesting OpenAI invested in a purpose-built image generation system rather than extending its multimodal language model.

Key Details

- Architecture: Independent image generation model, not based on GPT-4o

- Codenames: maskingtape-alpha, gaffertape-alpha, packingtape-alpha

- Improvements: Near-perfect text rendering, eliminated yellow tint, enhanced world knowledge, photorealistic output

- Competitive positioning: Early comparisons suggest GPT-Image-2 rivals Google's Nano Banana Pro at text rendering and world knowledge

- Release timeline: Based on OpenAI's previous cadence, 2 to 4 weeks typically separate Arena testing from official API release

What to Do Next

Creators who rely on AI image generation for UI mockups, marketing assets, or product visualization should watch for the official release. The text rendering improvements alone could eliminate a major pain point that currently requires manual correction. Until the official launch, early testers' detailed prompt examples and output comparisons are available for review.