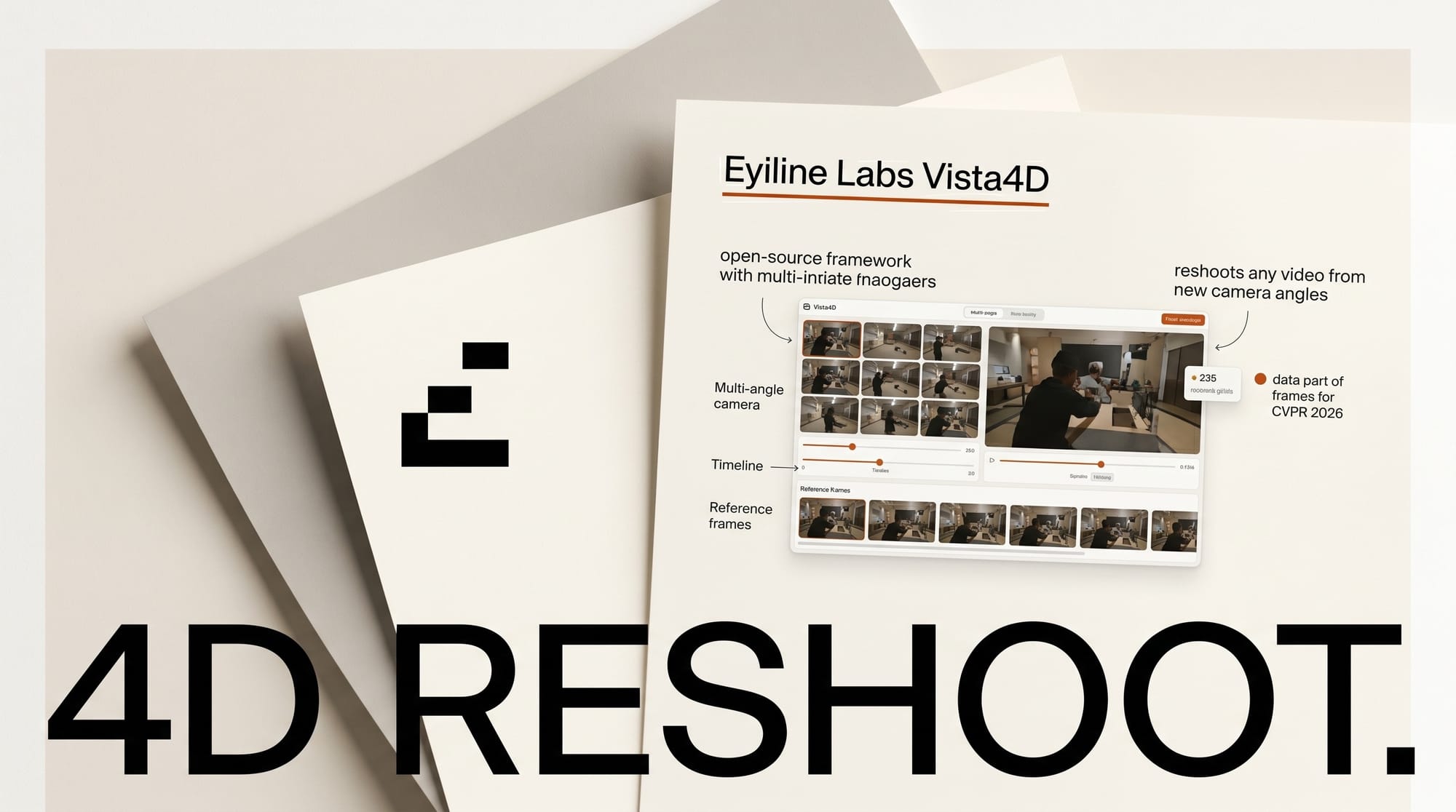

Eyeline Studios research arm, Eyeline Labs, released Vista4D on April 23 2026, an open-source framework that reshoots existing video footage from new camera angles using a 4D point cloud representation. The work is a CVPR 2026 highlight, and code, models, and paper are all live on the project page.

For the broader landscape, see our complete guide to AI video generation in 2026.

What Happened

Vista4D takes a single input video and re-synthesizes the scene from camera trajectories and viewpoints the creator did not originally capture. The method builds a 4D point cloud of the scene, applies static pixel segmentation to preserve visual content, and drives a video diffusion model to render novel views while maintaining temporal consistency. The team published the work on arXiv with the reference implementation on GitHub under a Creative Commons BY 4.0 license.

Why It Matters

Virtual reshooting has been a research goal for years, but prior 4D Gaussian splatting approaches required multi-view capture rigs or locked cameras. Vista4D generalizes to casual single-camera footage, which means a filmmaker can shoot once and later generate push-ins, tracking moves, or reverse angles in post. The same technology expands the frame beyond the original composition, letting editors recover content that was cropped or occluded during the shoot.

For creators already using AI video generators, Vista4D fills a gap those tools do not cover: consistent 4D reshoots of real captured footage, not synthesized clips. The Netflix-backed Eyeline Studios lineage also signals the work is aimed squarely at production pipelines rather than academic benchmarks.

Key Details

- Use cases: dynamic scene expansion with casual video as background reference, 4D scene recomposition through point cloud editing, and long video inference with memory.

- Architecture: 4D-grounded point cloud plus static pixel segmentation, paired with a video diffusion model for novel view synthesis.

- Authors: Kuan Heng Lin, Zhizheng Liu, Pablo Salamanca, Yash Kant, Ryan Burgert, Yuancheng Xu, Koichi Namekata, Yiwei Zhao, Bolei Zhou, Micah Goldblum, Paul Debevec, and Ning Yu.

- Venue: CVPR 2026 highlight paper, 24 pages with 20 figures.

- Reported results: the authors report 67 to 77 percent user preference over prior 4D reshooting methods in their study.

- License: code and weights released under CC BY 4.0 for research and attribution-based reuse.

What to Do Next

Start with the project page, which hosts the paper, code, and pretrained models alongside qualitative result galleries. Clone the GitHub repo to run the pipeline on your own footage. Expect substantial GPU memory usage because the point cloud plus diffusion stack is not lightweight, and plan for multi-minute per-clip inference on longer takes. The license is research-friendly, but commercial work should review the repository notes before shipping output in a paid production.