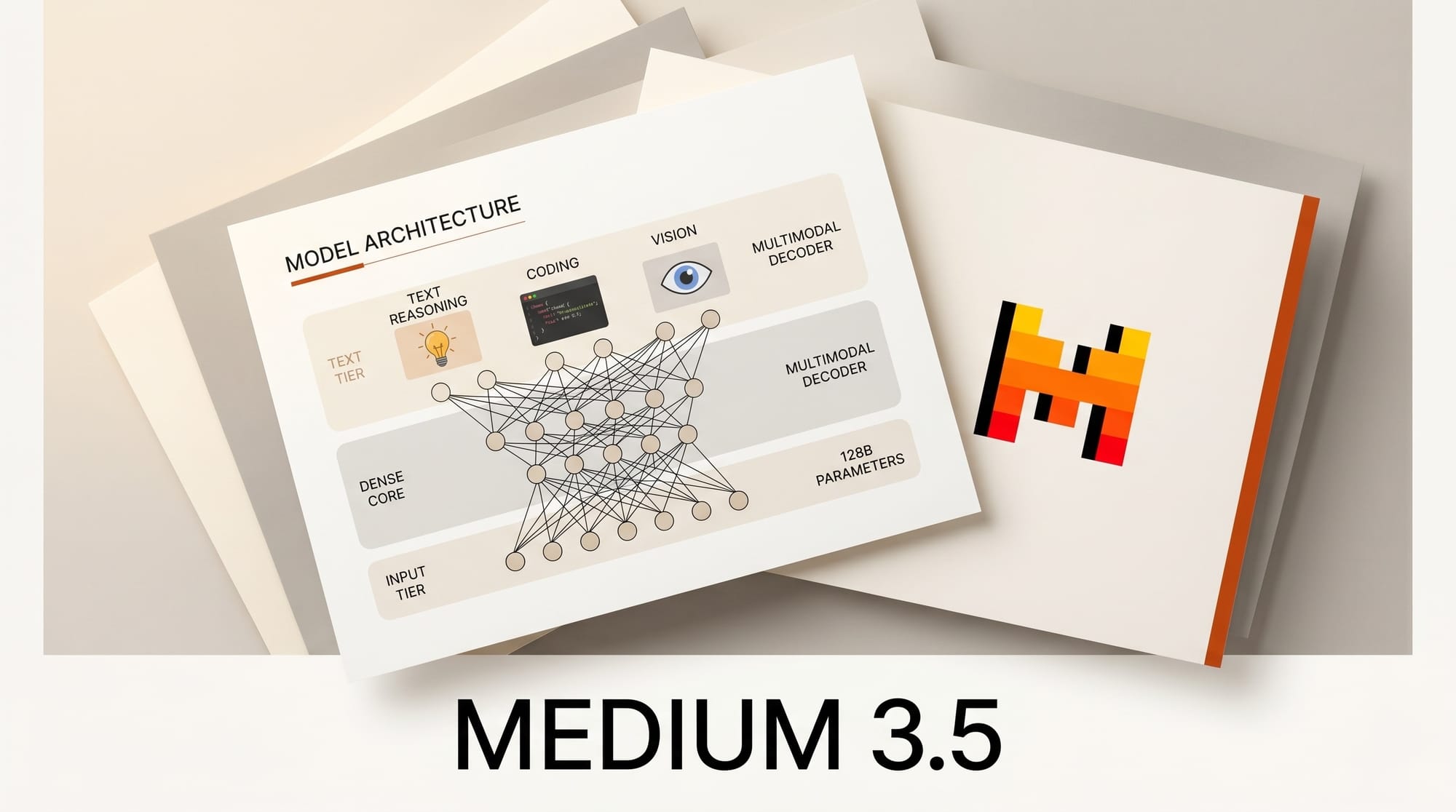

Mistral released Medium 3.5 on April 29, 2026, a 128 billion parameter dense multimodal model with a 256K context window, distributed under a modified MIT open-weights license. The release ships alongside remote cloud agents in Mistral Vibe and a new Work mode in Le Chat, both running on the same checkpoint. The combination is unusual. Most labs ship a hosted model first and an open variant later, often a smaller distillation. Mistral is shipping the flagship model, the agentic runtime, and the consumer chat surface on the same day, with the weights public from minute one.

Background

Mistral Medium 3.5 is the company's first attempt to merge what used to be three separate product lines. The Medium tier covers general instruction following and reasoning. Devstral covered coding. Pixtral covered vision. Medium 3.5 is a single dense checkpoint that handles all three, with a vision encoder trained from scratch to handle variable image sizes and aspect ratios, and a configurable reasoning effort exposed per request. The model posts 77.6% on SWE-Bench Verified and 91.4 on the τ³-Telecom agentic benchmark, scores Mistral claims put it ahead of Devstral 2 and Qwen3.5 397B on coding. Pricing through the Mistral API is $1.50 per million input tokens and $7.50 per million output tokens, undercutting most hosted frontier models on the input side and matching them on the output side.

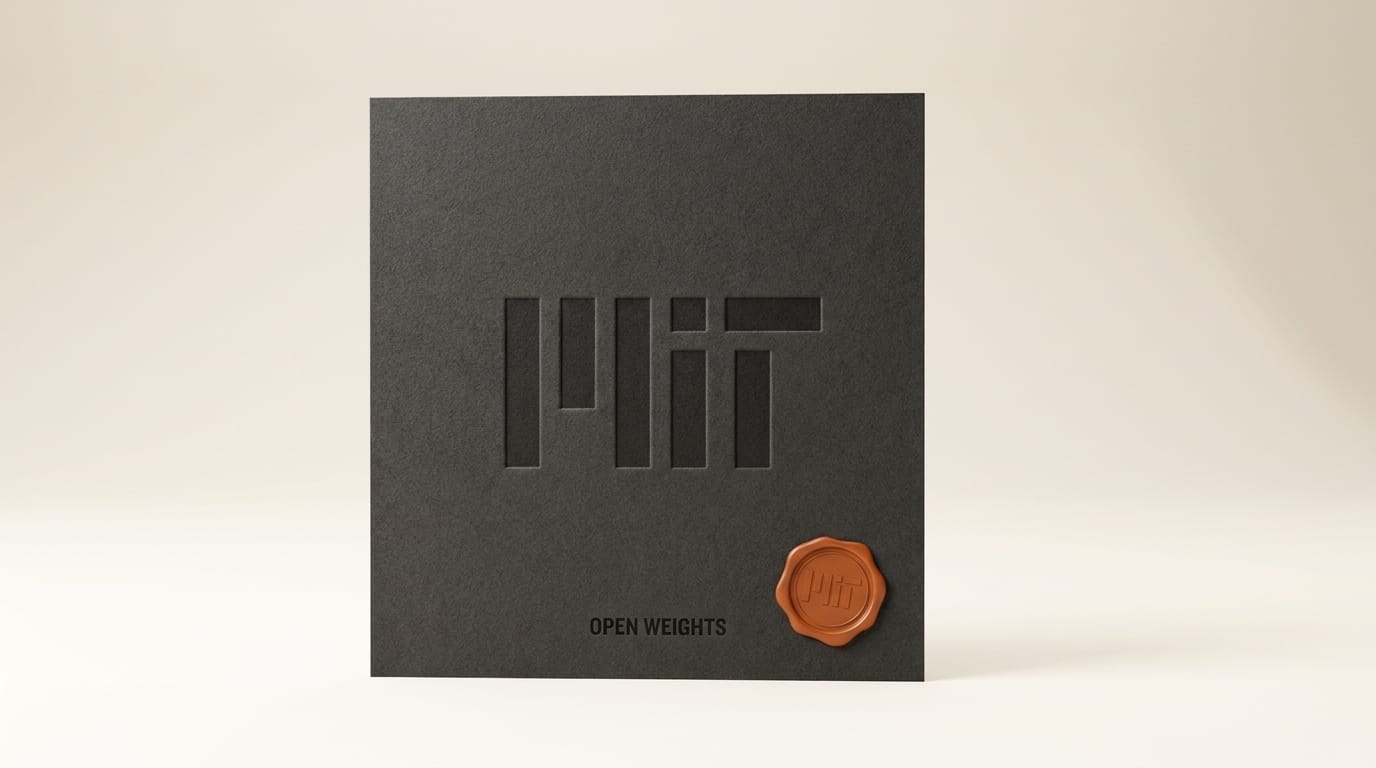

The open weights matter. Recent open-weight releases from competitors have drifted toward Apache 2.0 with use restrictions, custom commercial licenses, or research-only terms. Mistral's modified MIT is closer to permissive in the original sense. The weights are published on Hugging Face, with a separate EAGLE speculative decoding head for inference acceleration. For studios that need to self-host on their own GPUs, Mistral says the model runs on as few as four cards, and hosted inference is available through NVIDIA NIM endpoints for teams that want a managed deployment without leaving the open-weights track.

Deep Analysis

The dense bet against the MoE consensus

Most of the recent flagship open-weight releases have been sparse. DeepSeek V4 Preview ships as a 671B mixture-of-experts with roughly 37B active parameters per token. Kimi K2.6 went the same direction. The bet behind MoE is that you can scale total parameters without scaling per-token compute, so inference stays cheap. The bet behind a 128B dense model is that the routing overhead, training instability, and serving complexity of MoE are not worth the savings for a model that creators will actually run themselves on small clusters or studio workstations.

For self-hosted setups, dense is easier to reason about. Memory is predictable, latency is predictable, fine-tuning works without the expert-collapse failure modes that have hit MoE finetunes, and the model fits within the constraints of two to four high-end GPUs. The trade is that frontier capability per dollar of training compute is lower than a well-tuned MoE. Mistral is calling that trade. The 77.6% SWE-Bench Verified score is the test of whether a 128B dense model can keep up with sparse competitors on agentic coding without exotic hardware.

Cloud teleport changes the agentic coding loop

The bigger product change is the remote agent in Mistral Vibe. Sessions started in the local CLI can be teleported to a cloud sandbox where an isolated runtime executes broad edits, installs dependencies, runs tests, and opens a pull request on GitHub asynchronously. The creator can kick off a refactor from a laptop, close it, and pick up the finished branch later. Connectors for Linear, Jira, Sentry, Slack, and Teams give the agent a way to read tickets, log errors, and post status without manual context switching.

This mirrors how Poolside shipped Laguna XS.2 and M.1 the day before with a similar long-horizon coding posture, and how Lovable's mobile app moved vibe coding off the desktop entirely. The pattern is consistent across labs: code, walk away, review the PR. The local IDE is becoming a control surface for cloud agents, not the place where the work happens. For solo creators and small studios, that is the difference between treating an AI coding tool as autocomplete and treating it as a junior developer who picks up tasks from a queue.

Le Chat Work mode and the agent-permission problem

Le Chat's new Work mode is the consumer-surface analogue of Vibe's remote agents. It runs multi-step flows that span email, calendar, internal docs, and external connectors, with parallel tool calls and explicit approval prompts for sensitive actions. This is where the operational risk lives. An agent that can teleport into a cloud sandbox to refactor code is contained. An agent that can read your inbox, draft replies, schedule meetings, and update Jira tickets is not contained, and the question of which actions get implicit approval and which get explicit approval is what determines whether the product is useful or terrifying.

Mistral's choice to require explicit approval for sensitive calls is conservative in a way that Anthropic and OpenAI's similar agent surfaces have been less consistent about. For studios bringing this into a creative production workflow, the approval gate matters more than the benchmark score. A coding agent that opens a wrong PR is annoying. A work agent that sends a wrong email to a client is a contract problem.

Pricing pressure on the hosted frontier

API pricing of $1.50 input and $7.50 output is interesting more for what it signals than for what it costs. Most hosted frontier coding models price input at $2.50 to $3 and output at $10 to $15. Mistral is positioning Medium 3.5 as a substitute that costs roughly half on input, the dimension that matters most for agentic coding because agents read far more code than they write. For a studio running an agentic refactor across a 200K-line repo, the input bill dominates, and a 50% cut compounds across long sessions.

The pricing is also a hedge against the open-weights option. If a studio decides hosted is the right deployment, Mistral wants to be cheaper than the alternatives. If the studio decides self-hosting is the right deployment, the same model is available on Hugging Face under MIT terms. There is no version-skew penalty for switching between the two, which has been a recurring complaint with other labs that maintain closed flagships and smaller open variants.

Impact on Creators

For solo creators and small studios building apps, sites, and creative pipelines through vibe-coding tools, Medium 3.5 closes the gap between hosted frontier coding models and what runs on a workstation. The 256K context handles long monorepos and multi-file refactors in a single session, which is where shorter-context models break. The MIT-style license is more permissive than the Apache-with-restrictions terms on most recent open weights, which matters for studios shipping commercial workflows and for clients who want a clean licensing story.

The remote-agent loop is where the day-to-day change shows up. A creator who uses Vibe CLI today gets the upgrade automatically when they pass the --remote flag, and a session that would have tied up a local terminal for forty minutes now runs in the background while the creator works on something else. For small teams, that is the operational equivalent of hiring a part-time engineer who only does refactors and dependency upgrades. The cost is not zero, because cloud sessions consume API tokens, but the time-to-shipped-code is dramatically shorter than running the same agent locally.

The flip side is the discipline cost. Cloud agents that open pull requests asynchronously need a code review process to match. A creator who merges agent-generated PRs without reading them will ship bugs at scale. The 2026 AI coding tools guide covers the review workflows that scale with agent throughput, and they are the limiting factor, not the model capability.

Key Takeaways

- Architecture: 128B dense, 256K context, multimodal (text plus vision). Bet against the MoE consensus driving most recent open-weight releases.

- License: Modified MIT open weights, more permissive than Apache-with-restrictions or research-only terms common in the category.

- Benchmarks: 77.6% SWE-Bench Verified, 91.4 τ³-Telecom agentic. Mistral claims this beats Devstral 2 and Qwen3.5 397B on coding.

- API pricing: $1.50 / $7.50 per million input / output tokens. Roughly half the input cost of hosted frontier coding APIs.

- Vibe remote agents: Cloud sandbox sessions with GitHub PR creation, Linear / Jira / Sentry / Slack / Teams integrations, and CLI-to-cloud session teleport via the

--remoteflag. - Le Chat Work mode (preview): Multi-step agentic flows across email, calendar, web, and internal docs with explicit approval gates for sensitive tool calls.

- Replaces: Devstral 2 inside Vibe CLI; new default model in Le Chat. Self-hosting on as few as four GPUs, hosted on Mistral API and NVIDIA NIM.

What to Watch

The open question is whether the dense bet pays off. If Medium 3.5 holds the SWE-Bench Verified lead through community fine-tuning and real-world studio use, dense flagships will become a credible alternative to MoE for the open-weights tier. If MoE-based competitors close the gap on per-token cost and outperform on raw capability, this release will look like a transitional step. The next two open-weight flagship drops will tell. Watch for community fine-tunes on Hugging Face, especially long-context coding finetunes that push the 256K window into specific framework or repo territory.

The remote-agent product also has a watching brief. Whether creators trust the cloud sandbox enough to point it at production repos is a separate question from whether the model can do the work. Mistral's choice to require explicit approval for sensitive Le Chat Work actions is the right default. Whether competing agentic surfaces follow that lead, or keep optimizing for fewer interruptions, is the policy fight that will determine which agent products end up safe to deploy in client work. New users testing the loop should connect a side repo first via the Mistral API docs, watch a few PR cycles, and only then point the agent at production code.