IBM released the Granite 4.1 family on April 29, 2026, under Apache 2.0. Three sizes ship today: 3B, 8B, and 30B dense decoder-only LLMs, each with 128K production context and a Phase V long-context extension to 512K. The full stack includes Granite Vision 4.1 for document and chart understanding, a 2B Granite Speech 4.1 model at 5.33% word error rate, Granite Guardian 4.1 for safety classification, and the Embedding Multilingual R2. The most interesting story is not the launch itself but the architectural reversal underneath it.

Granite 4.0 shipped in October 2025 as a hybrid Mamba-2 and transformer model with a Mixture-of-Experts variant called 4.0-H-Small at 32B total parameters and 9B active. IBM bet on hybrid plus MoE to push throughput. Roughly half a year later, with Granite 4.1, IBM walked the bet back. The 4.1 family is dense, decoder-only, and homogeneous across sizes. The reason is in the published numbers: the 4.1 8B-instruct dense model meets or beats 4.0-H-Small on instruction following, AlpacaEval, MMLU-Pro, BBH, math, EvalPlus, ArenaHard, and tool calling. Dense at 8B matches or beats hybrid-MoE at 32B-A9B on most metrics that matter for production. That is the news.

What shipped on April 29

The launch is broader than a single model card. IBM published a coordinated stack from the same architecture pivot: three instruct LLMs at 3B, 8B, and 30B with matched FP8 variants for vLLM, a Vision 4.1 model for document and chart understanding paired with a million-scale chart dataset called ChartNet, a 2B Granite Speech 4.1 multilingual ASR and translation model with three variants including a non-autoregressive higher-throughput build, a Granite Guardian 4.1 safety classifier fine-tuned on the 4.1 8B base, and the Embedding Multilingual R2 model at 97M parameters covering 200-plus languages.

The license is Apache 2.0 across the family, unchanged from Granite 3.x and 4.0. The lineage carries cryptographic signing for authenticity and ISO 42001 certification, the first open language model family to hold that ISO designation. None of those guarantees were loosened in 4.1. License consistency on a more capable model is the practical takeaway for paid client work.

Inference partners named in the launch include Hugging Face as the primary host, Ollama, LM Studio, vLLM with FP8 tuning, Unsloth for fine-tuning recipes, OpenRouter, Replicate, AnythingLLM, Artificial Analysis, Weights and Biases, and IBM's own watsonx. NVIDIA NIM was a Granite 4.0 partner but is not named in the 4.1 announcement; expect a follow-on rather than treating NIM as live today.

The dense pivot, with numbers

IBM's published benchmark table is unambiguous on size scaling. The 30B-instruct lands at MMLU 80.16, HumanEval pass@1 88.41 to 89.63, HumanEval+ 85.37, MBPP 83 to 85, GSM8K 94.16, Minerva Math 81.32, DeepMind Math 81.93, BFCL v3 73.68 for tool calling, and IFEval 89.65 for instruction following. These are headline numbers for an Apache 2.0 dense model.

The 8B-instruct posts MMLU 73.84, HumanEval 85 to 87, MBPP 82 to 87, GSM8K 92.49, BFCL v3 68.27, IFEval 87.06. The 3B-instruct hits MMLU 67.02, HumanEval 79 to 82, MBPP 61 to 71, GSM8K 86.88, BFCL v3 60.80, IFEval 82.30. The point of citing all three sizes is that the family scales cleanly: dense 3B is a real coding assistant, dense 8B is a serious local agent, and dense 30B is enterprise-grade.

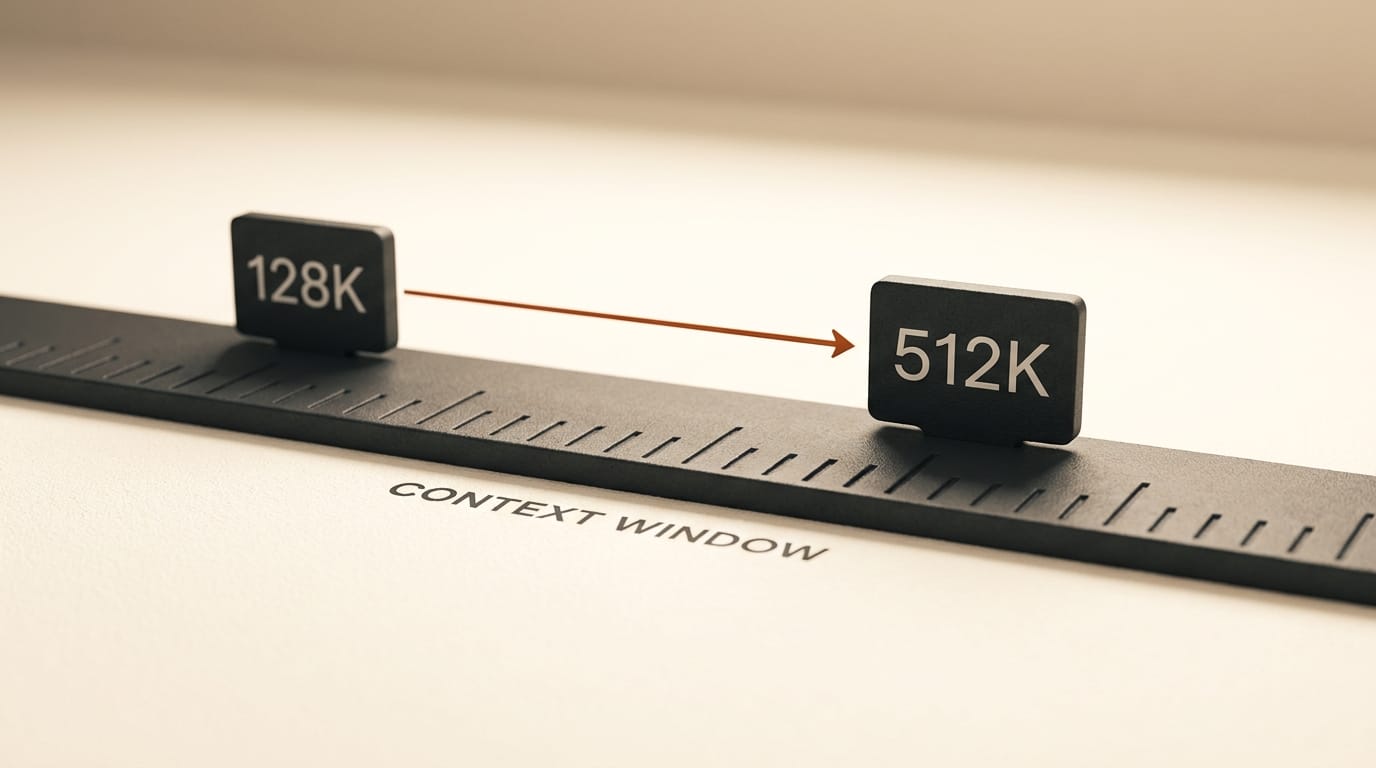

The long-context numbers are reported honestly. RULER scores at 128K are 73.0 for the 8B and 76.7 for the 30B. The 512K Phase V extension is a real capability, not a marketing claim, but production deployments should plan around the 128K figure, where retrieval quality is verified.

What IBM did not publish: head-to-head tables against Llama 3.1 or 3.3, Qwen 2.5, DeepSeek-V3, or Mistral Small. The launch material compares 4.1 only against IBM's own Granite 4.0-H-Small and characterises the family verbally as competitive with Gemma and Qwen. The HumanEval 88 to 90 and MBPP 83 to 85 figures at 30B are in the same league as Qwen 2.5-Coder at the same size, and well above Llama 3.x non-coder variants. The safest reading is to take the absolute numbers as IBM's own published benchmarks and run competitor comparisons yourself.

Architecture and training, briefly

All three sizes use the same building blocks: decoder-only transformer with grouped-query attention at 8 KV heads, RoPE positional encoding, SwiGLU activation, RMSNorm, shared input and output embeddings, BF16 native precision. The 3B uses 40 layers at 2,560 embedding dimension. The 8B uses 40 layers at 4,096. The 30B uses 64 layers at 4,096 embedding with a much larger MLP hidden width.
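Grouped-query attention matters most for long-context memory, because the KV cache scales with the number of KV heads rather than the number of query heads. The sketch below estimates KV cache size from the layer count and the 8 KV heads quoted above. The head dimension of 128 is an assumption (the article does not state it), as are the names GraniteShape and kv_cache_bytes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GraniteShape:
    name: str
    layers: int
    kv_heads: int
    head_dim: int  # ASSUMPTION: not stated in the article; check the model config


def kv_cache_bytes(shape: GraniteShape, context_tokens: int, bytes_per_value: int = 2) -> int:
    """Estimate KV cache size for one sequence.

    The leading 2 counts one key tensor plus one value tensor per layer.
    bytes_per_value=2 corresponds to BF16.
    """
    return 2 * shape.layers * shape.kv_heads * shape.head_dim * context_tokens * bytes_per_value


# 8B: 40 layers and 8 KV heads are from the article; head_dim=128 is assumed.
SHAPE_8B = GraniteShape("8B", layers=40, kv_heads=8, head_dim=128)

if __name__ == "__main__":
    gib = kv_cache_bytes(SHAPE_8B, 128 * 1024) / 2**30
    print(f"8B KV cache at 128K context: {gib:.1f} GiB")
```

Under that assumed head dimension, a single full 128K-token sequence needs about 20 GiB of BF16 KV cache on top of the weights, so it is worth checking the real config before promising full-context runs on a consumer card.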

Training is a five-phase pipeline totaling roughly 15 trillion tokens. Phase I is general pretraining at 10T tokens with 59 percent CommonCrawl, 20 percent code, and 7 percent math. Phase II is a 2T-token math and code pivot. Phase III is 2T tokens of high-quality data with chain-of-thought traces. Phase IV is 500B tokens of refinement on CommonCrawl-HQ, code, and math. Phase V is the 396B-token long-context extension that ramps from 4K through 32K, 128K, and 512K. The training cluster is NVIDIA GB200 NVL72 on CoreWeave with NVLink intra-rack and 400 Gb/s InfiniBand between racks.
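The "roughly 15 trillion" total can be checked directly from the per-phase figures above. This is only arithmetic on the numbers quoted in this article; the dictionary keys are shorthand labels, not IBM's naming.

```python
# Token counts per training phase, as quoted in the paragraph above.
PHASE_TOKENS = {
    "I: general pretraining": 10_000_000_000_000,
    "II: math and code pivot": 2_000_000_000_000,
    "III: high-quality data with CoT traces": 2_000_000_000_000,
    "IV: refinement": 500_000_000_000,
    "V: long-context extension": 396_000_000_000,
}

total_tokens = sum(PHASE_TOKENS.values())

# Phase I mix as quoted; the remainder is not broken down in the article.
PHASE_I_MIX_PERCENT = {"CommonCrawl": 59, "code": 20, "math": 7}
unspecified_percent = 100 - sum(PHASE_I_MIX_PERCENT.values())

if __name__ == "__main__":
    print(f"Total: {total_tokens / 1e12:.3f}T tokens")
    print(f"Phase I share not itemised: {unspecified_percent}%")
```

The phases sum to 14.896T tokens, which rounds to the quoted 15T, and 14 percent of the Phase I mix is left unspecified by the figures given here.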

Post-training combines supervised fine-tuning with a multi-stage RL pipeline. Supervised fine-tuning runs on 4.1 million curated samples with a six-dimensional LLM-as-Judge rubric covering instruction following, correctness, completeness, conciseness, naturalness, and calibration. Reinforcement learning is on-policy GRPO with DAPO loss on the SkyRL stack. The RL pipeline runs multi-domain RL across eight domains, then RLHF with a multilingual reward, then identity and knowledge calibration, then a math RL recovery pass. The math recovery alone is reported to lift GSM8K by 3.8 points and DeepMind Math by 23.48 points.
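For readers unfamiliar with GRPO, its core idea is that each sampled response is scored relative to the other responses drawn for the same prompt, rather than against a learned value function. The sketch below shows only that group-relative advantage step as GRPO is generally described. It is not IBM's SkyRL configuration, it omits the DAPO-specific loss details, and the function name and the choice of population standard deviation are assumptions.

```python
from statistics import fmean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Normalise rewards within one prompt's group of sampled responses.

    Each advantage is (reward - group mean) / (group std + eps).
    eps avoids division by zero when every response got the same reward.
    """
    mean = fmean(rewards)
    std = pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mean) / (std + eps) for r in rewards]


if __name__ == "__main__":
    # Four sampled answers to one math prompt: two correct (1.0), two wrong (0.0).
    print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
```

Correct answers end up with positive advantages and wrong ones with negative advantages, and a group where every answer scores the same contributes no learning signal.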

One deliberate omission: there is no extended chain-of-thought in 4.1 instruct. IBM chose predictable latency over reasoning-style benchmarks. If you need o-series or Claude-tier extended reasoning, this is not the model. If you need an instruction-following agent that returns in seconds and supports tool calling, it is.

Why creators should care

The Creative AI News audience runs creative pipelines in image, video, audio, 3D, and code. A permissive, locally-runnable, dense 8B that lands at 85 to 87 on HumanEval and 82 to 87 on MBPP is exactly the model class missing from most of those pipelines today. Five concrete openings.

Local code copilot for creative tooling. The 8B variant runs on a single 24 GB consumer GPU like an RTX 4090, or on a 32 GB RTX 5090 with headroom to spare, and will autocomplete code for ComfyUI custom nodes, Blender add-ons, TouchDesigner shaders, p5.js sketches, and Houdini VEX without sending any code to a third party. The 3B variant runs on 6 to 8 GB of GPU memory or a modern MacBook through Ollama or LM Studio. For a freelancer who pays $20 a month for Cursor, a local Granite 4.1 8B is a real alternative for the Python-and-shader two-thirds of the work.

RAG over portfolio and brand documents. Production-grade 128K context is enough to drop an entire client brief, brand guideline, episode transcript, or shot list into the prompt without chunking. The 512K extension is enough to load a year's worth of brand guidelines plus competitive research. Running this locally on Apache 2.0 weights means an internal asset search has zero per-token API spend.
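Before dropping a whole brief into the prompt, it helps to check whether it actually fits. The sketch below is a rough budget check, assuming about 4 characters per token for English text and treating 128K as 131,072 tokens. Both are assumptions, the function names are hypothetical, and counting with the model's real tokenizer will be more accurate.

```python
CONTEXT_128K = 128 * 1024  # ASSUMPTION: 128K taken as 131,072 tokens


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Crude token estimate; ~4 chars/token is a heuristic, not a Granite figure."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(
    documents: list[str],
    context_tokens: int = CONTEXT_128K,
    reserve_for_output: int = 4_000,
    chars_per_token: float = 4.0,
) -> tuple[bool, int, int]:
    """Return (fits, estimated_tokens_used, token_budget) for a set of documents."""
    used = sum(estimate_tokens(doc, chars_per_token) for doc in documents)
    budget = context_tokens - reserve_for_output  # leave room for the reply
    return used <= budget, used, budget


if __name__ == "__main__":
    brief = "Brand voice: warm, direct, no jargon. " * 5_000
    ok, used, budget = fits_in_context([brief])
    print(f"fits={ok} used~{used} budget={budget}")
```

If the check fails, that is the point at which chunking or retrieval is worth the extra complexity.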

Tool-calling for agentic creative pipelines. BFCL v3 of 73.68 at 30B is enterprise-grade tool routing. Pair the model with MCP servers for Photoshop, Premiere, Figma, Houdini, Blender, or the new Anthropic-blessed Claude Blender connector and the result is a local agent that can fire render queues, tag stock footage, batch-rename assets, or dispatch to ElevenLabs and Runway APIs without OpenAI or Anthropic in the loop.
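On the application side, tool calling comes down to two pieces: a schema that tells the model which functions exist, and a dispatcher that runs whatever call the model returns. The sketch below uses the widely adopted OpenAI-style function schema. The batch_rename tool is hypothetical, and the exact JSON shape Granite emits depends on its chat template and the serving runtime, so treat the parsed format here as an assumption.

```python
import json


def batch_rename(names: list[str], prefix: str) -> list[str]:
    """Hypothetical tool: compute new asset names (a real tool would rename files)."""
    return [f"{prefix}_{i:03d}_{name}" for i, name in enumerate(names, start=1)]


# Registry of callable tools, keyed by the name advertised to the model.
TOOLS = {"batch_rename": batch_rename}

# OpenAI-style schema passed to the model alongside the conversation.
TOOL_SCHEMAS = [
    {
        "type": "function",
        "function": {
            "name": "batch_rename",
            "description": "Prefix and number a list of asset file names.",
            "parameters": {
                "type": "object",
                "properties": {
                    "names": {"type": "array", "items": {"type": "string"}},
                    "prefix": {"type": "string"},
                },
                "required": ["names", "prefix"],
            },
        },
    }
]


def dispatch(tool_call_json: str):
    """Parse one tool call of the assumed form {"name": ..., "arguments": ...} and run it."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    args = call.get("arguments", {})
    if isinstance(args, str):  # some runtimes send arguments as a JSON string
        args = json.loads(args)
    return TOOLS[name](**args)


if __name__ == "__main__":
    call = '{"name": "batch_rename", "arguments": {"names": ["a.png", "b.png"], "prefix": "shot"}}'
    print(dispatch(call))
```

Rejecting unknown tool names before execution is the important design choice: a local agent should only ever run functions you registered.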

Document and chart understanding in the same family. Granite Vision 4.1 plus the ChartNet dataset means creators handling pitch decks, revenue dashboards, or research papers get a same-family vision model with the same Apache 2.0 license. VFX bid sheets, music royalty statements, and stock-photo metadata are all in scope.

Speech is part of the bundle. Granite Speech 4.1 at 2B parameters and 5.33 percent word error rate lives next to the LLM. Interview transcription, podcast indexing, and voice-driven editor commands all stay local. The non-autoregressive variant trades a small accuracy hit for higher throughput, which matters when you batch a hundred episodes.
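To put the 5.33 percent figure in context, word error rate is the word-level edit distance between the transcript and a reference, divided by the number of reference words. The sketch below is a minimal standard WER calculation with a hypothetical function name. It does no text normalisation, so it is not a reproduction of IBM's evaluation setup.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(
                min(
                    prev[j] + 1,         # deletion: reference word missing
                    curr[j - 1] + 1,     # insertion: extra hypothesis word
                    prev[j - 1] + cost,  # match or substitution
                )
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    wer = word_error_rate("the cat sat on the mat", "the cat sat on a mat")
    print(f"WER: {wer:.2%}")
```

A 5.33 percent WER works out to a little over five word errors per hundred reference words, which is a useful number to keep in mind when deciding how much manual cleanup a transcript will need.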

Deployment paths today

The fastest path to a working setup is Hugging Face plus Transformers: pip install torch torchvision torchaudio accelerate transformers, then load ibm-granite/granite-4.1-8b-instruct directly. For lower-friction local use, Ollama and LM Studio both ship Granite 4.1 in their catalogues; the standard ollama pull path that worked for 4.0 carries over. vLLM is the production-tier path with explicit FP8 tuning for the 8B variant, which IBM reports cuts disk and GPU memory by roughly half versus BF16. Unsloth ships fine-tuning recipes. OpenRouter and Replicate host the family for serverless inference. AnythingLLM is a drop-in agent and RAG UI.

Hardware planning. The 3B at BF16 is roughly 6 GB on disk and runs on 6 to 8 GB of VRAM. The 8B at BF16 is roughly 16 GB on disk and needs about 16 GB of VRAM; the FP8 variant halves both. The 30B at BF16 is roughly 60 GB on disk; IBM's model card recommends 64 GB-plus of VRAM, which means a single 80 GB H100 or a pair of 80 GB A100s over NVLink, plus 128 GB of system RAM.
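The disk figures above follow from a simple rule of thumb: parameter count times bytes per parameter, with BF16 at 2 bytes and FP8 at 1 byte. The sketch below reproduces that arithmetic for weights only. It uses nominal parameter counts, ignores activations and KV cache, and the function name is hypothetical.

```python
# Bytes used to store one parameter at each precision.
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}


def weight_gb(params_billion: float, precision: str = "bf16") -> float:
    """Approximate weight size in GB (1e9 params * bytes per param / 1e9 bytes per GB)."""
    return params_billion * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    for size in (3, 8, 30):
        print(f"{size}B: bf16 ~{weight_gb(size):.0f} GB, fp8 ~{weight_gb(size, 'fp8'):.0f} GB")
```

Real VRAM use is higher than the weight size once context and batch size grow, which is why the 30B recommendation sits above its 60 GB weight footprint.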

Documented limits and gotchas

Five things worth flagging before committing the model to a production pipeline.

- No extended chain-of-thought. If your workflow needs Claude-tier or o-series reasoning, Granite 4.1 instruct is not the right tool.

- NVIDIA NIM packaging is not confirmed for 4.1 yet. NIM was a 4.0 partner and will likely follow, but treat the 4.0 NIM containers as the reference point for now.

- No technical paper or arXiv preprint is linked from the launch. The HF blog and model cards are the canonical sources today.

- The 512K context is a Phase V extension, not the production-tested figure. Plan around 128K, where RULER quality is verified, and treat 512K as a reach.

- watsonx hosted-inference price points are not public. Local self-hosting is the cheapest path; cloud-hosted pricing requires a watsonx contract.

What to do this week

If you have a 24 GB GPU or a modern MacBook, pull the 8B instruct model (ibm-granite/granite-4.1-8b-instruct on Hugging Face) tonight via Ollama or LM Studio and run a real workload against it. Start with code generation for whichever creative tool you use daily; that is where the published benchmarks are strongest and where the value-per-token math is most favourable against a Cursor or GitHub Copilot subscription.
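Once the model is pulled, both Ollama and LM Studio expose an OpenAI-compatible chat endpoint on localhost, so a first test needs nothing beyond the standard library. The sketch below targets Ollama's default port. The model tag is an assumption (run ollama list to see what your install actually calls it), and the helper names are hypothetical.

```python
import json
import urllib.request

# Ollama's OpenAI-compatible endpoint; LM Studio defaults to port 1234 instead.
OLLAMA_URL = "http://localhost:11434/v1/chat/completions"
MODEL_TAG = "granite4.1:8b"  # ASSUMPTION: check `ollama list` for the real tag


def build_request(prompt: str, model: str = MODEL_TAG, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a chat-completions POST request for a local OpenAI-compatible server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0,  # low-variance output makes side-by-side comparisons easier
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, timeout: float = 120.0) -> str:
    """Send one prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


# Example call (requires a running local server with the model pulled):
# print(ask("Write a ComfyUI custom node that inverts an image."))
```

Running the same handful of prompts you would normally send to a hosted assistant is the quickest way to judge whether the local model covers your daily work.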

If you are evaluating the 3B for embedded or constrained-device use, the 3B model card documents the exact quantization and prompt templates. The size is small enough for laptop-class deployment and the benchmarks are good enough for daily code assistance.

If you are an agency or studio standardising on a permissive open model for client work, the Apache 2.0 license plus ISO 42001 lineage is the contractual story. Granite 4.1 is the rare open model where derivatives can be shipped to clients without a license review.

Canonical sources to bookmark: the Hugging Face launch blog, the IBM Research announcement, the HF model collection, the 8B model card with full benchmark tables and prompt templates, and the 30B model card for the largest variant. The launch coverage will compound over the next week as community fine-tunes and quantizations land. The dense pivot story is the angle that survives the news cycle.