Deep Dive

Apr 13, 2026

ByteDance's Seedance 2.0 is now a native node in ComfyUI, bringing multimodal audio-video generation with 12 simultaneous reference inputs directly into the most popular open-source creative AI pipeline.

ComfyUI

Apr 13, 2026

ComfyUI v0.19.0 lands with Ace Step 1.5 XL music generation, Qwen 3.5 text generation, Seedance 2.0 video nodes, and a faster Flux 2 decoder.

Open Source

Apr 12, 2026

llama.cpp release b8769 adds audio multimodal support for Qwen3-Omni and Qwen3-ASR models, bringing local speech recognition and audio understanding to consumer hardware.

audio

Apr 12, 2026

OpenBMB released VoxCPM2, a 2-billion-parameter text-to-speech model that runs on 8GB of VRAM, generates 48kHz audio in 30 languages, and can design voices from natural-language descriptions.

ai-music

Apr 10, 2026

MiniMax released Music 2.6 on April 10, adding a Cover feature that extracts a song's melodic skeleton and lets creators rebuild everything around it.

AI

Apr 9, 2026

llama.cpp b8738 adds backend-agnostic tensor parallelism that enables large AI models to run across multiple GPUs without vendor-specific code.

ai-3d

Apr 9, 2026

Researchers released GaussiAnimate, a framework that automatically rigs and animates 3D Gaussian Splatting assets, reporting a 17.3% quality improvement.

ai-image

Apr 9, 2026

Researchers from Tongji University, Tencent, and five other institutions released MegaStyle, a 1.4-million-image style-transfer dataset, alongside a FLUX-based model.

AI Video

Apr 9, 2026

A team of 23 researchers released LPM 1.0, a 17-billion-parameter Diffusion Transformer that generates real-time character video from audio input.

AI

Apr 8, 2026

Hugging Face contributes Safetensors to the PyTorch Foundation, making the de facto AI model storage format a vendor-neutral, community-governed project.

AI

Apr 7, 2026

Google Cloud Platform has open-sourced Scion, an experimental orchestration testbed that runs multiple AI coding agents as isolated, concurrent container processes.

AI Models

Apr 7, 2026

Z.AI released GLM-5.1, a 754-billion-parameter open-weight model that claims the top spot on SWE-Bench Pro with a score of 58.4, surpassing Claude Opus 4.6 and GPT-5.4.

AI Models

Apr 6, 2026

Meta confirmed it will release open-source versions of its upcoming Mango multimedia generator and Avocado LLM, extending its open-weight strategy to next-gen frontier models.

Deep Dive

Apr 5, 2026

In April 2026, a creator with a $1,200 desktop PC and a 12GB graphics card can generate publication-quality images in under 10 seconds, produce video clips, run a conversational AI, and compose music without sending a single byte to the cloud.

video

Apr 4, 2026

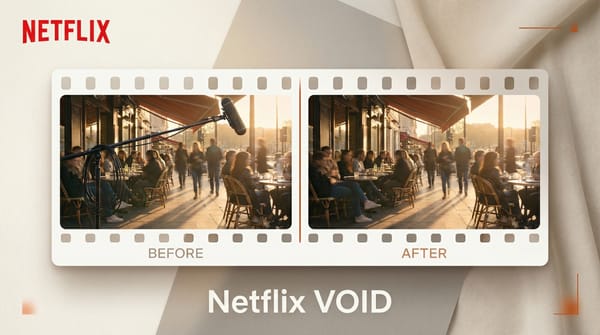

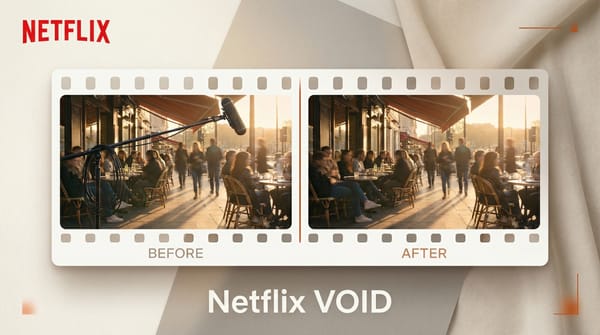

Netflix has released VOID (Video Object and Interaction Deletion), its first public AI model. The open-source tool removes objects from video while preserving physically plausible interactions.

News

Apr 3, 2026

DeepSeek V4 will run exclusively on Huawei Ascend 950PR processors, marking a milestone in China's push to build advanced AI without American chip technology.

AI

Apr 3, 2026

Tencent has released OmniWeaving, a unified video generation model that handles seven distinct tasks from text-to-video to reasoning-augmented generation, with publicly available weights and code.

AI Video

Apr 3, 2026

Alibaba's Wan2.7 video generation model is now available directly in ComfyUI through Partner Nodes, bringing its video creation capabilities to the popular node-based workflow tool.

AI Tools

Apr 2, 2026

Sony AI has released Woosh, an open-source sound-effects foundation model supporting text-to-audio and video-to-audio generation.

Deep Dive

Apr 2, 2026

The first week of April 2026 delivered three significant open-source audio AI models that directly challenge paid alternatives in voice synthesis, sound effects, and multilingual speech.

Deep Dive

Apr 2, 2026

Google released Gemma 4, a family of four open multimodal models under Apache 2.0. The 31B dense model scores 89.2% on AIME 2026, quadrupling Gemma 3's score. For creators and developers building on open models, this changes everything.

Open Source

Apr 1, 2026

Liquid AI releases LFM2.5-350M, a 350M-parameter open-weight model that runs AI agents on mobile GPUs in just 81MB with a 32K context window.

AI Tools

Apr 1, 2026

OmniVoice is a zero-shot text-to-speech model supporting 600+ languages with voice cloning, released under an Apache 2.0 license, with inference 40x faster than real time.

ai-image

Apr 1, 2026

Alibaba released Wan2.7-Image, a unified AI model that handles both image generation and editing in one system, with fine-grained personalization, color control, and a Pro variant capable of 4K output.