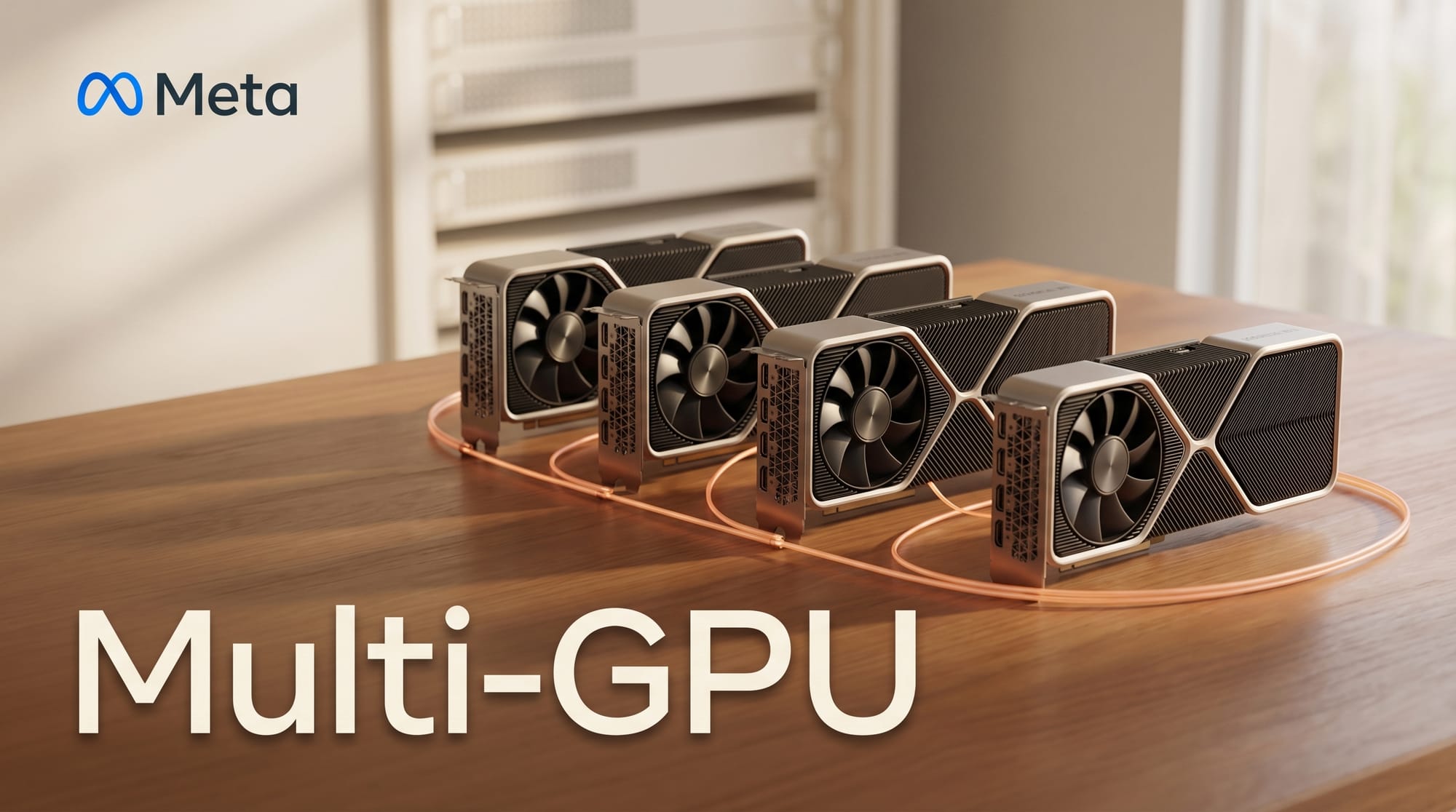

The llama.cpp project released version b8738 on April 9, 2026, adding backend-agnostic tensor parallelism that lets large AI models run across multiple GPUs without vendor-specific code. The feature scales to 4, 8, or more GPUs, works with both NVIDIA's NCCL and AMD's RCCL communication libraries, and handles uneven tensor splits across devices.

What Happened

The b8738 release introduces experimental multi-GPU tensor parallelism as a core feature. Previous versions of llama.cpp supported multi-GPU setups, but the new implementation is backend-agnostic, meaning the same code works across different GPU vendors without modification. Key additions include unconditional peer access and buffer reuse across GPU contexts, BF16 allreduce operations, and KV cache serialization improvements.
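
To make the idea concrete, here is a minimal Python sketch of how an uneven tensor split and an allreduce fit together. It is not llama.cpp's code: plain lists stand in for GPU tensors, and the function names (`split_sizes`, `tensor_parallel_matvec`) and the VRAM-proportional split policy are assumptions made for illustration.

```python
# Illustrative sketch only: plain Python lists stand in for GPU tensors.
# All names and the VRAM-proportional policy are hypothetical, not llama.cpp's API.

def split_sizes(total, weights):
    """Divide `total` rows among devices in proportion to `weights` (e.g. VRAM in GB)."""
    scale = sum(weights)
    sizes = [total * w // scale for w in weights]
    # Hand out rows lost to integer rounding, one at a time, starting with device 0.
    for i in range(total - sum(sizes)):
        sizes[i % len(sizes)] += 1
    return sizes

def matvec(x, w):
    """Compute x @ w for a vector x (length k) and a matrix w (k rows, n columns)."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]

def tensor_parallel_matvec(x, w, weights):
    """Shard w's rows unevenly, compute one partial product per device, then sum them."""
    partials, start = [], 0
    for size in split_sizes(len(w), weights):
        if size == 0:
            continue  # a device that received no rows contributes nothing
        end = start + size
        partials.append(matvec(x[start:end], w[start:end]))
        start = end
    # "Allreduce": element-wise sum of every device's partial result.
    return [sum(vals) for vals in zip(*partials)]

x = [1, 2, 3, 4, 5]
w = [[1, 2], [3, 4], [5, 6], [7, 8], [9, 10]]
print(split_sizes(len(w), [24, 8]))            # [4, 1]
print(tensor_parallel_matvec(x, w, [24, 8]))   # [95, 110], same as matvec(x, w)
```

Each device holds only its own slice of the weights. The summing step at the end is the kind of work a communication library such as NCCL or RCCL would perform across real GPUs.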

The release also adds support for newer model architectures, including the Qwen 3 and Qwen 3.5 MoE variants and Gemma 4 MoE configurations. A follow-up release (b8739) added support for AMD Instinct MI350X/MI355X accelerators, which use the latest CDNA4 architecture, and b8744 introduced a reasoning budget sampler for Gemma 4 models.

Why It Matters for Creators

For anyone running AI models locally, multi-GPU tensor parallelism removes a significant constraint: a model too large for any one card can be split across several smaller ones. A creator with two mid-range GPUs, for example, can run models that would otherwise need a single high-end card with double the VRAM.
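
As a rough back-of-the-envelope check, the Python sketch below estimates whether a model's weights fit in the combined VRAM of several cards. The function name `fits_in_vram`, the 1.5 GB per-card overhead, and the example sizes are all assumptions for illustration. The estimate ignores the KV cache and activation memory, so treat a "fits" result as optimistic.

```python
def fits_in_vram(model_gb, gpu_vram_gb, overhead_gb=1.5):
    """Return True if the weights fit in the combined usable VRAM.

    overhead_gb is an assumed per-card reserve for the driver and runtime;
    real usage varies and also depends on context length.
    """
    usable = sum(max(vram - overhead_gb, 0) for vram in gpu_vram_gb)
    return model_gb <= usable

# Hypothetical example: a 20 GB model on one 12 GB card versus two.
print(fits_in_vram(20, [12]))      # False
print(fits_in_vram(20, [12, 12]))  # True
```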

The backend-agnostic design matters because AMD GPU users get the same multi-GPU capabilities as NVIDIA users. This broadens hardware options for creators who want to run image generation, video, or language models without being locked into NVIDIA's ecosystem. For a practical guide to local AI workflows, see our Creator's Guide to Running AI Locally.

What to Do Next

If you run models locally with multiple GPUs, pull the latest llama.cpp from GitHub and test tensor parallelism on your existing setup. The feature is marked experimental, so expect some rough edges. Check the release notes for configuration details and supported model architectures.

This story was covered by Creative AI News.
