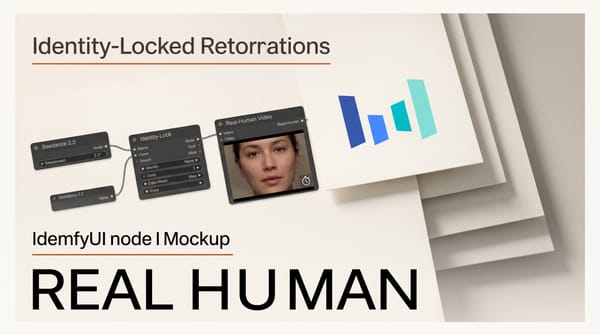

ByteDance's Seedance 2.0, the video generation model that was suspended over Disney copyright concerns just weeks ago, is now a native node in ComfyUI. The integration brings multimodal audio-video generation with up to 12 simultaneous reference inputs directly into the most widely used open-source creative AI workflow tool, marking ByteDance's most significant push into the open creative tooling ecosystem.

Background

Seedance 2.0 first launched on ByteDance's CapCut platform in March 2026 as a unified multimodal audio-video generation model. The initial rollout was brief. ByteDance suspended the model after users generated Disney character content that went viral, triggering copyright takedown demands. The model returned weeks later on CapCut with new IP protection guardrails, content filtering, and usage restrictions.

The ComfyUI integration, announced April 13, represents a different distribution strategy entirely. Rather than limiting access to ByteDance's own platforms, the company is making Seedance 2.0 available inside the node-based workflow environment where many professional video creators already work. ComfyUI's open architecture means Seedance 2.0 can be combined with any other node in the ecosystem, from upscalers to face restoration to audio processing.

Deep Analysis

The Dual-Branch Architecture: Audio and Video in One Pass

Most video generation models treat audio as an afterthought. You generate a video clip, then find or generate audio separately, then synchronize them manually. Seedance 2.0's Dual-Branch Diffusion Transformer changes this by generating both streams simultaneously from a shared latent representation.

The architecture processes visual and audio information through parallel diffusion branches that share cross-attention layers. This means the model does not just produce a video and then guess what sounds should accompany it. The audio and video are generated together, with each informing the other throughout the denoising process. Lip movements match speech at the phoneme level across multiple languages and dialects. Sound effects align with on-screen actions.
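The coupling described above can be illustrated with a toy, pure-Python sketch. This is not Seedance 2.0's actual architecture. It omits self-attention, learned query/key/value projections, noise prediction, and the noise schedule, and it uses the other stream's tokens directly as keys and values. What it shows is the idea of shared cross-attention: at every step, video tokens attend to audio tokens and audio tokens attend to video tokens, so each stream's update depends on the other. All function names are hypothetical.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(queries: List[Vector], keys_values: List[Vector]) -> List[Vector]:
    """Scaled dot-product attention from one stream's tokens to the other's.

    Simplification: the other stream's tokens act as both keys and values
    (no learned projection matrices).
    """
    dim = len(queries[0])
    scale = 1.0 / math.sqrt(dim)
    out = []
    for q in queries:
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys_values]
        weights = softmax(scores)
        mixed = [sum(w * kv[d] for w, kv in zip(weights, keys_values)) for d in range(dim)]
        out.append(mixed)
    return out


def dual_branch_step(
    video: List[Vector], audio: List[Vector], mix: float = 0.5
) -> Tuple[List[Vector], List[Vector]]:
    """One toy joint update: each branch residually adds what it attends to in the other.

    Both contexts are computed from the *current* tokens before either stream
    is updated, so the exchange is symmetric.
    """
    video_ctx = cross_attend(video, audio)  # video tokens look at audio tokens
    audio_ctx = cross_attend(audio, video)  # audio tokens look at video tokens
    new_video = [[v + mix * c for v, c in zip(vt, ct)] for vt, ct in zip(video, video_ctx)]
    new_audio = [[a + mix * c for a, c in zip(at, ct)] for at, ct in zip(audio, audio_ctx)]
    return new_video, new_audio


video = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # 3 toy video tokens
audio = [[0.0, 1.0], [1.0, 0.0]]              # 2 toy audio tokens
for _ in range(4):                            # stand-in for repeated denoising steps
    video, audio = dual_branch_step(video, audio)
print(len(video), len(audio))                 # 3 2
```

The two streams keep their own token counts. Only information flows between them, which is the property that lets audio and video stay aligned as generation proceeds.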

For creators, single-pass audio-video generation eliminates one of the most tedious steps in AI video workflows. Current pipelines typically require generating video with one model, generating or sourcing audio with another, and then spending significant time on manual alignment. Seedance 2.0 collapses these three steps into one. The output still needs refinement for professional work, but the starting point is dramatically closer to final.

12 Reference Inputs: The Multimodal Control System

Seedance 2.0 accepts up to nine reference images, up to three reference videos with a combined length of no more than 15 seconds, and up to three audio files, with a cap of 12 reference files in total per generation. This multimodal reference system is the most extensive input pipeline available in any video generation model currently integrated with ComfyUI.
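The per-type limits add up to 15 files, so the 12-input figure only makes sense as an overall cap applied on top of them. A minimal pre-flight check under that reading might look like the sketch below. `ReferenceSet` and `validate_references` are hypothetical names, and the limits come from this article rather than from an official API schema.

```python
from dataclasses import dataclass, field
from typing import List

# Limits as described in this article. The 12-file overall cap is an
# interpretation of the "up to 12 reference inputs" figure, not an API contract.
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_VIDEO_SECONDS = 15.0
MAX_AUDIO = 3
MAX_TOTAL = 12


@dataclass
class ReferenceSet:
    """Hypothetical container for the references attached to one request."""
    image_paths: List[str] = field(default_factory=list)
    video_durations: List[float] = field(default_factory=list)  # seconds per clip
    audio_paths: List[str] = field(default_factory=list)


def validate_references(refs: ReferenceSet) -> List[str]:
    """Return human-readable limit violations (an empty list means the set fits)."""
    problems = []
    if len(refs.image_paths) > MAX_IMAGES:
        problems.append(f"{len(refs.image_paths)} images > {MAX_IMAGES}")
    if len(refs.video_durations) > MAX_VIDEOS:
        problems.append(f"{len(refs.video_durations)} videos > {MAX_VIDEOS}")
    total_video = sum(refs.video_durations)
    if total_video > MAX_VIDEO_SECONDS:
        problems.append(f"{total_video:g}s of video > {MAX_VIDEO_SECONDS:g}s")
    if len(refs.audio_paths) > MAX_AUDIO:
        problems.append(f"{len(refs.audio_paths)} audio files > {MAX_AUDIO}")
    total = len(refs.image_paths) + len(refs.video_durations) + len(refs.audio_paths)
    if total > MAX_TOTAL:
        problems.append(f"{total} references > {MAX_TOTAL} overall")
    return problems


full = ReferenceSet(
    image_paths=[f"char_{i}.png" for i in range(9)],
    video_durations=[5.0, 5.0, 5.0],  # exactly 15 s combined: allowed
    audio_paths=["dialogue.wav"],
)
print(validate_references(full))  # ['13 references > 12 overall']
```

In this example, each per-type limit is satisfied on its own, but the combination still exceeds the overall cap.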

The reference images serve multiple purposes. Character reference images lock in visual identity: facial features, clothing, body proportions, and style persist across every generated frame. Product reference images maintain brand consistency for commercial content. Style reference images transfer artistic direction without requiring lengthy text descriptions.

Video references add motion control. Upload a clip demonstrating a specific camera movement, action choreography, or editing rhythm, and Seedance 2.0 replicates those motion patterns in the generated output. This is particularly useful for creators who need to match existing footage styles or produce content that fits within an established visual language.

Audio references enable phoneme-level synchronization. Upload dialogue, and the model generates lip movements that match the speech precisely. Upload music, and the generated video responds to tempo and intensity. The multi-language support means this works equally well for English, Mandarin, Japanese, and other languages, opening up international content production without language-specific workarounds.
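To make "phoneme-level" concrete, assume a frame rate of 24 fps (the article does not state Seedance 2.0's frame rate). At that rate each frame lasts about 41.7 ms, so a single phoneme may cover only a few frames. The hypothetical helper below maps phoneme timestamps, such as those produced by a forced aligner, to the frames they overlap. It illustrates the timing granularity involved, not how Seedance 2.0 aligns audio internally.

```python
import math
from typing import List, Tuple

Phoneme = Tuple[str, float, float]  # (label, start_seconds, end_seconds)


def phonemes_to_frames(phonemes: List[Phoneme], fps: float = 24.0) -> List[Tuple[str, int, int]]:
    """Map each phoneme's time span to the inclusive range of frames it overlaps.

    Frame k covers the half-open interval [k / fps, (k + 1) / fps).
    """
    spans = []
    for label, start, end in phonemes:
        first = math.floor(start * fps)
        # Last frame that starts before the phoneme ends (at least the first frame).
        last = max(first, math.ceil(end * fps) - 1)
        spans.append((label, first, last))
    return spans


hello = [("HH", 0.00, 0.08), ("EH", 0.08, 0.20), ("L", 0.20, 0.26), ("OW", 0.26, 0.45)]
print(phonemes_to_frames(hello))
# [('HH', 0, 1), ('EH', 1, 4), ('L', 4, 6), ('OW', 6, 10)]
```

Neighbouring phonemes can share a boundary frame. This is one reason mouth shapes have to blend smoothly rather than switch on whole-frame boundaries.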

ComfyUI as Distribution: Why Open Pipelines Win

The decision to bring Seedance 2.0 to ComfyUI rather than keeping it locked to CapCut reflects a broader industry shift. Closed platforms like Runway and Pika control the entire experience but limit composability. ComfyUI's node graph lets creators build custom pipelines that combine the best capabilities from multiple models.

A practical example: a creator could use Seedance 2.0 for initial video generation with audio, pipe the output through Wan2.7 nodes for style transfer, apply Topaz Starlight upscaling for resolution enhancement, and use a dedicated face restoration node for close-up shots. This kind of multi-model pipeline cannot be assembled inside any single closed platform.
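The chain above can be sketched as a graph in the style of ComfyUI's API-format workflow. In that format, a dictionary maps node ids to a `class_type` and `inputs`, and a link to an upstream node is written as `[node_id, output_index]`. Every `class_type` string and input name below is a hypothetical placeholder, not a real node name. ComfyUI schedules execution itself; the topological sort (Python 3.9+ `graphlib`) only demonstrates that each stage runs after the stage feeding it.

```python
from graphlib import TopologicalSorter
from typing import Any, Dict, List

# All class_type values and input names are HYPOTHETICAL placeholders.
workflow: Dict[str, Dict[str, Any]] = {
    "1": {"class_type": "SeedanceTextToVideo",
          "inputs": {"prompt": "a chef plating dessert, narrating in Japanese"}},
    "2": {"class_type": "WanStyleTransfer",
          "inputs": {"video": ["1", 0], "style": "watercolor"}},
    "3": {"class_type": "TopazUpscale",
          "inputs": {"video": ["2", 0], "scale": 2}},
    "4": {"class_type": "FaceRestore",
          "inputs": {"video": ["3", 0]}},
}


def upstream_ids(node: Dict[str, Any]) -> List[str]:
    """Collect the ids of nodes this node reads from (links look like [node_id, output_index])."""
    return [
        value[0]
        for value in node["inputs"].values()
        if isinstance(value, list) and len(value) == 2 and isinstance(value[0], str)
    ]


def execution_order(graph: Dict[str, Dict[str, Any]]) -> List[str]:
    """Order node ids so that every node comes after the nodes feeding it."""
    deps = {node_id: upstream_ids(node) for node_id, node in graph.items()}
    return list(TopologicalSorter(deps).static_order())


print([workflow[n]["class_type"] for n in execution_order(workflow)])
# ['SeedanceTextToVideo', 'WanStyleTransfer', 'TopazUpscale', 'FaceRestore']
```

To swap one stage for another model, you only change that node's `class_type` and inputs. The links to its neighbours stay the same, which is the composability the paragraph describes.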

The integration includes three generation modes available now: Text-to-Video, Reference-to-Video, and First-Last-Frame-to-Video. A Realism character reference mode is listed as coming soon through an early access program. For creators who do not run ComfyUI locally, the model is also available through Comfy Cloud for immediate browser-based access, and through the fal.ai API for programmatic integration.
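The three modes differ mainly in what they condition on. The sketch below records that as a required-input check before a request is sent. The field names, and the assumption that every mode also takes a text prompt, are hypothetical. Check the actual node or API schema before relying on them.

```python
from enum import Enum
from typing import Dict, FrozenSet, Set


class Mode(Enum):
    TEXT_TO_VIDEO = "text_to_video"
    REFERENCE_TO_VIDEO = "reference_to_video"
    FIRST_LAST_FRAME_TO_VIDEO = "first_last_frame_to_video"


# HYPOTHETICAL field names chosen for illustration only.
REQUIRED_FIELDS: Dict[Mode, FrozenSet[str]] = {
    Mode.TEXT_TO_VIDEO: frozenset({"prompt"}),
    Mode.REFERENCE_TO_VIDEO: frozenset({"prompt", "references"}),
    Mode.FIRST_LAST_FRAME_TO_VIDEO: frozenset({"prompt", "first_frame", "last_frame"}),
}


def missing_fields(mode: Mode, provided: Set[str]) -> Set[str]:
    """Return the required fields for `mode` that the request does not supply."""
    return set(REQUIRED_FIELDS[mode] - provided)


print(missing_fields(Mode.FIRST_LAST_FRAME_TO_VIDEO, {"prompt", "first_frame"}))  # {'last_frame'}
```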

ByteDance's strategy here mirrors what Alibaba did with Wan2.7 and what Tencent did with OmniWeaving. Chinese AI companies are increasingly using open distribution channels to build developer loyalty and establish their models as infrastructure that other tools depend on. This holds even when, as with Seedance 2.0, the model itself runs only through the company's API. For creators, this competition drives prices down and capabilities up.

Post-Suspension: The Copyright Question Remains

The Disney copyright suspension in March was not just a PR problem for ByteDance. It raised fundamental questions about how video generation models handle copyrighted characters, and those questions do not disappear with a platform switch. When Seedance 2.0 relaunched on CapCut, it came with content filters, character detection, and usage restrictions.

The ComfyUI integration complicates content moderation. On CapCut, ByteDance controls the entire pipeline and can enforce restrictions at the UI level. In ComfyUI, the model runs within a user-controlled environment where output filtering depends on implementation choices made by the ComfyUI team and individual users. ByteDance still processes generation requests through its API (local model weights are not available), so server-side filtering remains active. But the shift to an open workflow tool signals that ByteDance is prioritizing reach over control.

For commercial creators, this means exercising the same copyright judgment with Seedance 2.0 that applies to any generative tool. The model's capabilities make it easy to produce derivative content. The responsibility for ensuring that content does not infringe existing IP falls on the creator, regardless of what platform-level filters exist.

Impact on Creators

Seedance 2.0 in ComfyUI changes the competitive dynamics of AI video generation in several concrete ways:

Audio-video workflows get simpler. Creators who currently use separate tools for video generation and audio production can now prototype synchronized audio-visual content in a single node. The quality may not match dedicated audio tools for final production, but for storyboarding, previsualization, and rapid iteration, unified generation saves significant time.

Character consistency improves. The nine-image reference system is the most robust character locking mechanism available in an open-source-compatible video tool. For creators producing series content, brand videos, or multi-shot narratives, this reduces the most common complaint about AI video: inconsistent characters between clips.

The open-source video pipeline matures. With Seedance 2.0, Wan2.7, and dedicated upscaling and restoration nodes all available in ComfyUI, the open-source video creation stack now covers generation, editing, extension, and post-processing. Professional creators still need traditional editing software for final assembly, but the AI-assisted portions of the pipeline are increasingly self-contained within ComfyUI.

Key Takeaways

- Seedance 2.0 is now a native ComfyUI node with three generation modes: Text-to-Video, Reference-to-Video, and First-Last-Frame-to-Video, with Realism mode coming soon.

- The Dual-Branch Diffusion Transformer generates synchronized audio and video in a single pass, largely removing the manual audio-alignment step from AI video workflows.

- Up to 12 simultaneous reference inputs (drawn from up to 9 images, 3 videos, and 3 audio files) provide the most extensive multimodal control system in any ComfyUI-compatible video model.

- ByteDance's move to open distribution mirrors Alibaba's and Tencent's strategies, intensifying competition that benefits creators through lower costs and better tools.

- Copyright concerns from the Disney suspension persist; server-side content filtering remains active, but creators bear responsibility for IP compliance.

What to Watch

The Realism character reference mode, currently in early access, could be the most impactful upcoming feature. If it delivers photorealistic human consistency across shots, it would address the single biggest limitation holding AI video back from professional live-action production workflows.

Watch whether other frontier video models follow Seedance 2.0 to ComfyUI. If Runway, Pika, or Luma bring official nodes to the platform, it would confirm the shift from closed platforms to open pipelines as the primary way professional creators interact with AI video tools. The current landscape is still split between closed and open approaches, but the momentum is clearly moving toward composability.

This analysis was produced by Creative AI News, covering AI tools for image, video, audio, and 3D creators.