Mistral launched Workflows in public preview on April 28, 2026, alongside Mistral Medium 3.5, Vibe remote coding agents, and Le Chat Work mode. Workflows is a durable orchestration platform for AI processes built on Temporal, the open-source durable-execution engine that already powers production systems at Netflix, Stripe, and Salesforce. The pitch is that AI agents need orchestration primitives a Python script in a single container cannot provide, and Mistral has wrapped Temporal in an AI-specific SDK so teams do not have to assemble the substrate themselves. Six private-preview customers (ASML, ABANCA, CMA-CGM, France Travail, La Banque Postale, and Moeve) are already running what Mistral calls millions of daily executions on the platform.

The headline launch news is real, but the structurally interesting part is what Workflows says about where AI infrastructure consolidates next. A normal LLM agent loop is brittle in production: long-running, expensive per step, probabilistic, and dependent on flaky external services. Durable execution flips that: it persists every step to an event history before it runs, and it survives worker crashes, network blips, and provider outages by replaying that history. Mistral, AWS Step Functions plus Bedrock, and the LangGraph and Inngest cohort are converging on the same answer. Workflows is the most ambitious entrant from a model lab.

What durable orchestration actually buys you

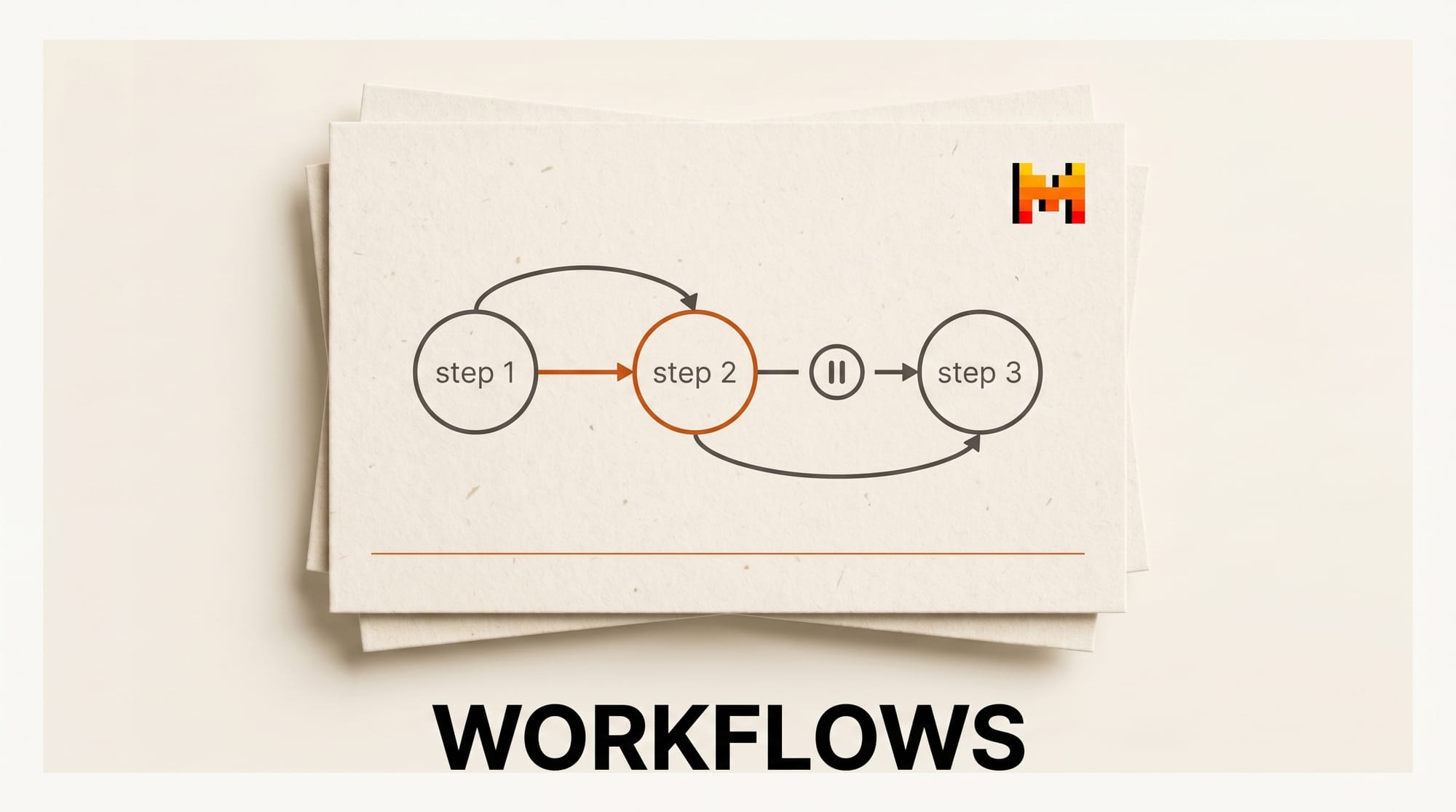

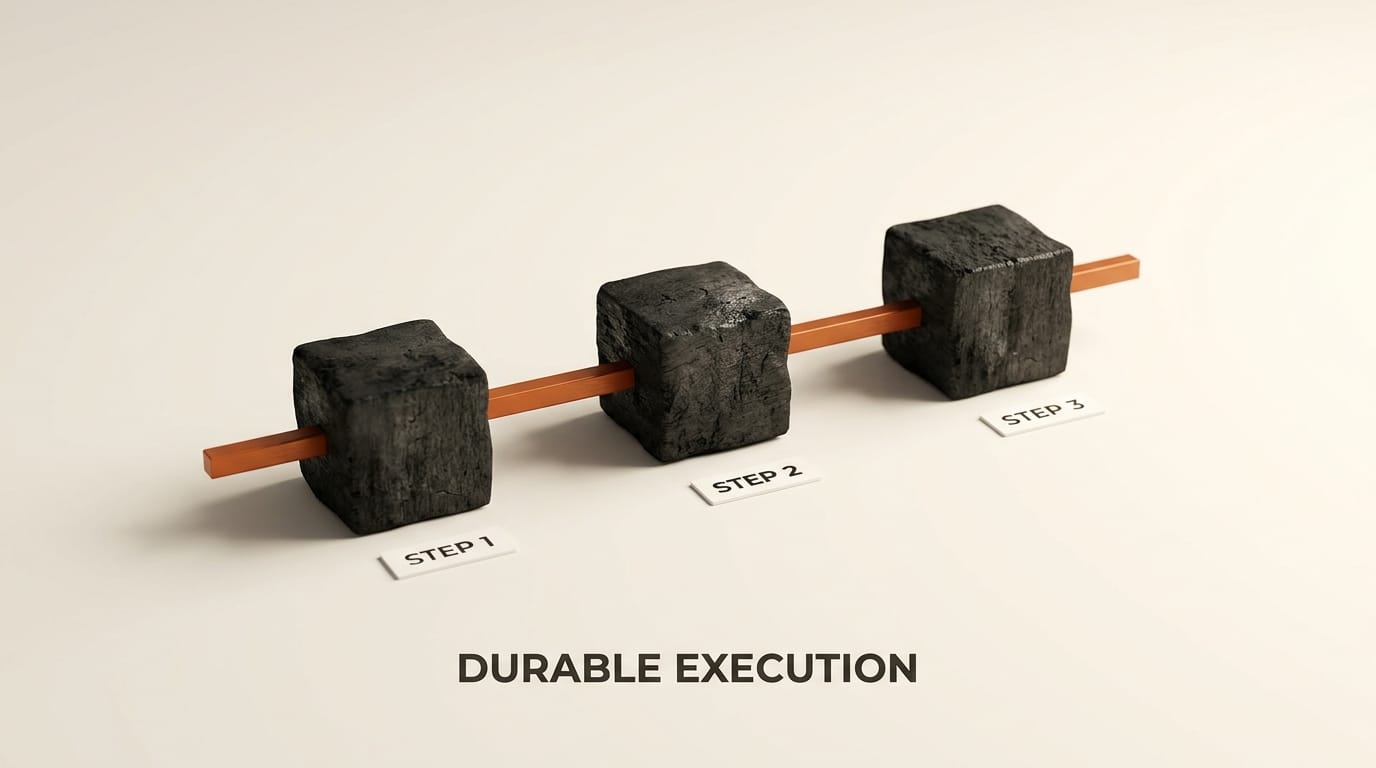

The technical thesis is concrete. A typical agent workflow is a Python script that calls an LLM, parses output, calls a tool, branches, calls another LLM. If the worker dies mid-run, the whole workflow is lost: no checkpoint, no resumability, no replay. Durable execution writes every step to a persistent event history before it executes. If the worker crashes, another worker picks up the same workflow ID, replays the event history to reconstruct state, and continues from the exact failed step. Long pauses cost nothing; the workflow state sits in storage waiting for an external signal.
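The replay mechanic is easier to see in miniature. The sketch below is a toy, not Temporal's or Mistral's implementation: `run_workflow`, `WorkerCrash`, and the three step functions are hypothetical names, the history is an in-memory list standing in for durable storage, and it records only completed step results, which is a simplification of what a real event history holds.

```python
class WorkerCrash(Exception):
    """Simulates a worker process dying mid-run (hypothetical helper)."""


def run_workflow(steps, history, crash_before=None):
    """Run steps in order, reusing any results already recorded in history."""
    state = None
    for index, (name, fn) in enumerate(steps):
        if index < len(history):
            # Replay: this step finished on an earlier worker, so reuse its
            # recorded result instead of executing it again.
            if history[index]["step"] != name:
                raise ValueError("history does not match workflow definition")
            state = history[index]["result"]
            continue
        if name == crash_before:
            raise WorkerCrash(name)
        state = fn(state)
        # Record the completed step so a later worker can skip it.
        history.append({"step": name, "result": state})
    return state


calls = []  # tracks which steps actually executed


def extract(_state):
    calls.append("extract")
    return {"text": "invoice 42"}


def classify(state):
    calls.append("classify")
    return {**state, "label": "invoice"}


def publish(state):
    calls.append("publish")
    return {**state, "published": True}


steps = [("extract", extract), ("classify", classify), ("publish", publish)]
history = []  # stand-in for a durable event store

try:
    run_workflow(steps, history, crash_before="publish")  # first worker dies
except WorkerCrash:
    pass

result = run_workflow(steps, history)  # a second worker resumes from history
print(calls, result)
```

The second call plays the role of a replacement worker: it skips the two recorded steps and executes only `publish`, so each step runs exactly once across both attempts.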

This pattern is mature outside AI. Temporal, Cadence, AWS Step Functions, and Inngest have a decade of production traffic behind them. AI workflows are a perfect fit because they are simultaneously long-running (minutes to weeks for human-in-the-loop processes), expensive per step (an LLM call costs cents to dollars; losing it stings), and inherently probabilistic (retries with different prompts, fallback models, branching by classifier output, human approval gates). A naive `for step in steps: result = llm(step)` loop has none of the safety nets that pattern needs.

Mistral's enterprise GTM lead Elisa Salamanca framed the gap directly in an interview with The Decoder: organisations are struggling to move beyond isolated proofs of concept, and the gap is operational, not model quality. Workflows is Mistral's claim that the operational gap is what matters now. The Decoder calls it more structurally important than any new model release Mistral has shipped in 2026, which is rhetorical but signals that orchestration infrastructure may matter more than the next checkpoint.

The Temporal integration, in detail

Workflows is built on Temporal's open-source durable-execution engine. Mistral did not fork Temporal or rebuild the underlying primitives; Mistral added an AI-specific layer on top.

The architecture is a control-plane plus data-plane split. Mistral hosts the Temporal cluster, the Workflows API, and Studio (the inspection UI). Customers host the workers, which are Python processes that execute activity code, on their own Kubernetes via a Helm chart Mistral ships separately. Workers connect outbound to Mistral's orchestrator; the orchestrator never initiates connections into customer networks. Business logic and customer data never leave the customer's perimeter.

What Mistral added on top of Temporal:

- Token streaming from worker activities back to clients, which raw Temporal Cloud does not surface.

- Large-payload offload at a 2 MB threshold to S3, GCS, or Azure Blob storage. AI workflows routinely shuttle multi-MB images, embeddings, or document corpora between steps; native Temporal payloads are limited to 2 MB.

- Multi-tenancy and per-tenant observability surfaced through Mistral Studio.

- Native LLM activity helpers that wrap Mistral model calls with default retry, timeout, and trace conventions.

- Native OpenTelemetry tracing and structured logging.
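Of the additions above, large-payload offload is the easiest to picture in code. The following is a minimal sketch of the general idea only, assuming a strict greater-than comparison at the 2 MB threshold; `InMemoryBlobStore`, `pack`, and `unpack` are hypothetical names, and the in-memory dictionary stands in for S3, GCS, or Azure Blob storage. How Mistral's SDK actually implements offload is not shown here.

```python
import hashlib

OFFLOAD_THRESHOLD_BYTES = 2 * 1024 * 1024  # the 2 MB figure quoted above


class InMemoryBlobStore:
    """Hypothetical stand-in for an object store such as S3 or GCS."""

    def __init__(self):
        self._blobs = {}

    def put(self, data: bytes) -> str:
        # Content-addressed key, so identical payloads are stored once.
        key = hashlib.sha256(data).hexdigest()
        self._blobs[key] = data
        return key

    def get(self, key: str) -> bytes:
        return self._blobs[key]


def pack(payload: bytes, store: InMemoryBlobStore) -> dict:
    """Return an envelope that is safe to pass between workflow steps."""
    if len(payload) > OFFLOAD_THRESHOLD_BYTES:
        # Too large to carry inline: store it and pass a small reference.
        return {"kind": "ref", "key": store.put(payload), "size": len(payload)}
    return {"kind": "inline", "data": payload}


def unpack(envelope: dict, store: InMemoryBlobStore) -> bytes:
    """Resolve an envelope back into the original bytes."""
    if envelope["kind"] == "ref":
        return store.get(envelope["key"])
    return envelope["data"]


store = InMemoryBlobStore()
small = b"x" * 1024
large = b"y" * (3 * 1024 * 1024)

small_env = pack(small, store)
large_env = pack(large, store)
print(small_env["kind"], large_env["kind"])
```

The point of the design is that the orchestrator only ever sees the small envelope, while the bulky bytes travel through object storage.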

What is hidden from the developer: the Temporal cluster, gRPC plumbing, event-history schema, and replay semantics. A developer writes `import mistralai.workflows as workflows`, decorates async functions with `@workflows.activity()`, decorates a workflow class with `@workflows.workflow.define()`, marks an entry method with `@workflows.workflow.entrypoint`, and starts a worker with `await workflows.run_worker(discovered)`. Triggering happens via the Mistral Console UI, the Python SDK, or a REST API. There are no Temporal-specific imports in user code.

The Threads commentariat dismissed Workflows as Temporal without AI. Half right, half wrong. The engine is Temporal; the AI primitives are real but thin. For an AI team that already pays Mistral for models, picking Workflows over raw Temporal Cloud plus a Mistral SDK glue layer is a real convenience trade. For a team that is provider-agnostic, raw Temporal plus LangGraph or Inngest covers similar ground at lower vendor lock-in.

Human-in-the-loop primitives and audit

The HITL pattern is a one-line workflow pause that waits for a human signal or external event. While paused, no compute is consumed; the workflow state sits in the durable store. When the signal arrives via the SDK, an HTTP call, or a Studio UI action, the workflow resumes from the exact pause point.

The canonical use case is a know-your-customer document-processing pipeline. A workflow ingests a passport scan, extracts data with a vision model, classifies confidence, and pauses on low-confidence fields for a compliance officer. The pause can last hours, days, or weeks. When the officer approves through Studio, the workflow resumes, writes the audited record, and notifies downstream systems. The same workflow execution survives reboots, deploys, region failovers, and infrastructure outages.
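The pause-and-resume shape of that pipeline can be sketched with plain `asyncio`. This is an in-process illustration only: an `asyncio` future does not survive a restart the way a durable workflow does, the 0.9 confidence cut-off is an assumption, and `kyc_workflow` and the sample field values are hypothetical. It does not use the Workflows SDK, whose signal API is not documented in this article.

```python
import asyncio

CONFIDENCE_THRESHOLD = 0.9  # assumed cut-off for sending a field to review


async def kyc_workflow(fields, approval):
    """fields maps a field name to (extracted_value, confidence)."""
    needs_review = sorted(
        name for name, (_value, conf) in fields.items()
        if conf < CONFIDENCE_THRESHOLD
    )
    decision = "auto-approved"
    if needs_review:
        # Pause point: the coroutine is suspended here and does no work
        # until someone resolves the approval future.
        decision = await approval
    return {
        "fields": {name: value for name, (value, _conf) in fields.items()},
        "reviewed": needs_review,
        "decision": decision,
    }


async def main():
    approval = asyncio.get_running_loop().create_future()
    fields = {
        "name": ("Ada Example", 0.99),
        "passport_no": ("X0000000", 0.62),  # low confidence, triggers review
    }
    task = asyncio.create_task(kyc_workflow(fields, approval))
    await asyncio.sleep(0)  # let the workflow run until it reaches the pause
    paused = not task.done()
    approval.set_result("approved by officer")  # the human signal arrives
    record = await task
    return paused, record


paused, record = asyncio.run(main())
print(paused, record["decision"])
```

In a durable engine the same wait would be backed by stored state rather than a live coroutine, which is what lets the pause last weeks and survive deploys.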

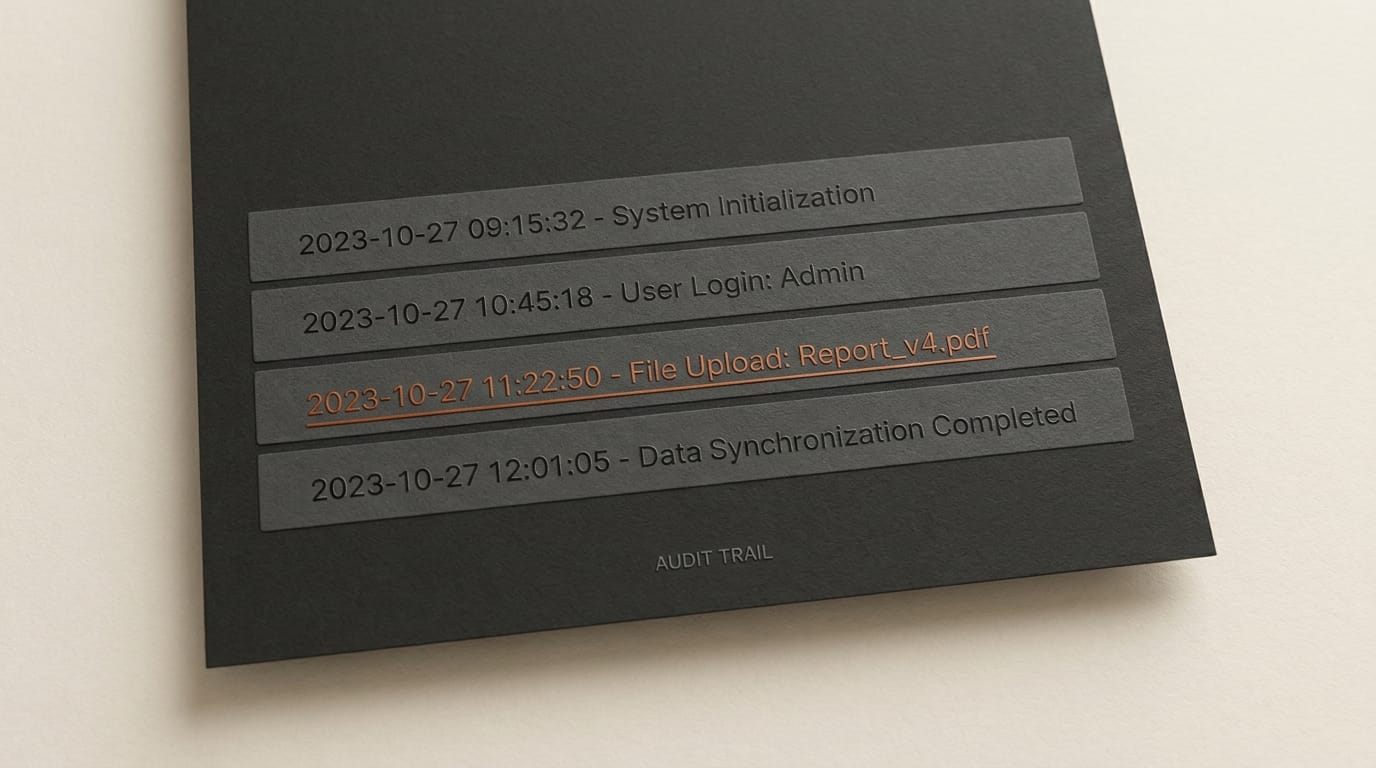

The audit surface is more complete than most agent frameworks ship. Every branch, every retry, and every state change writes to the event history Studio reads from. A workflow run is fully replayable, which means a compliance team can replay any execution to see exactly what each LLM call returned, what each tool call did, and when each human approval came in. Native OpenTelemetry traces flow into existing observability stacks. Retention is not publicly stated; Temporal Cloud's default is 30 days configurable up to a year, and Mistral has not yet documented its policy.

SDKs, deployment, and where it does not yet reach

The Python SDK is `mistralai-workflows` on PyPI, Apache-2.0 licensed, currently at version 3.3.0 as of April 23, 2026, requiring Python 3.12 to 3.15. Install with `uv add mistralai-workflows` or `pip install mistralai-workflows`. Optional extras include `[mistralai]` for native model integration and `[s3]`, `[gcs]`, or `[azure]` for payload offloading.

TypeScript and Go SDKs are not announced. Temporal itself supports Python, TypeScript, Go, Java, .NET, PHP, and Ruby, so the engineering effort to port the Mistral wrapper is bounded, but there is no public roadmap. Until the SDK ships in your language of choice, the alternatives are calling the REST API directly or running raw Temporal Cloud with a hand-written Mistral glue layer.

One discrepancy worth flagging. The PyPI metadata declares Apache-2.0 but the source repository link points to github.com/mistralai/dashboard, which is a private repo. There is a public github.com/mistralai/workflows-starter-app template, but the SDK source itself is not in a public repository as of April 29. The license declaration is binding regardless, but transparency-minded enterprises may want to wait for the source publication before committing.

Deployment options today: Mistral's cloud orchestrator with customer-hosted workers via Helm, or a fully local Python process for development. There is no fully self-hosted orchestrator option; the control plane must be Mistral's. For regulated deployments where the orchestrator location is contractually constrained, Workflows in its current form is not yet a fit. Self-hosted is the most-asked feature in early community feedback and seems likely to land for enterprise contracts before going broadly available.

How it stacks against the alternatives

The agentic-orchestration space is no longer empty. Five honest comparison points.

AWS Step Functions plus Bedrock is the closest hyperscaler analog. JSON state machines using Amazon States Language; durable; deeply integrated into the AWS ecosystem. The trade is AWS lock-in and a programming model that is less ergonomic than Python decorators. Bedrock Agents are a separate product surface; Step Functions does not give you the LLM-native primitives Mistral wraps in.

OpenAI Assistants API plus threads is a thinner persistence layer. Threads survive across calls, but there is no replay, no branching, and no durable execution. Assistants is built for conversational agents, not multi-step pipelines that need to survive hours-long human pauses.

LangGraph from the LangChain team is the obvious open-source alternative. MIT licensed, optional checkpointer for SQLite, Postgres, or Redis. Designed for LLM agents from day one. Lacks the production hardening Temporal brings; trades it for transparency and self-hosting.

Inngest, Restate, and Hatchet are the LLM-agnostic durable-execution platforms. Inngest is the closest in spirit to what Mistral built, and is available standalone if you want similar primitives without committing to Mistral's model stack. Restate is lighter-weight and runs as a single binary. Hatchet is Postgres-backed and Python-first.

Raw Temporal Cloud is the substrate Mistral built on. Picking Temporal directly buys you full SDK breadth (Python, TypeScript, Go, Java, .NET), public documentation, and a vendor with a decade of production references. The catch is bring-your-own-LLM glue and bring-your-own-observability for AI workloads.

Temporal as a company raised $300M at a $5B valuation on February 17, 2026 with 9.1 trillion lifetime action executions, 1.86 trillion of those from AI-native customers. Mistral's choice to build on Temporal rather than reinvent the engine is a vote on where the market consolidates.

Why creators should care

The Creative AI News audience runs multi-step generative pipelines daily: storyboard, shot list, image generation, upscale, VFX pass, render, review, publish. Most creators script this in Python with Replicate, Fal, or ComfyUI APIs and a try-except block; they lose work when their laptop sleeps mid-batch and have no audit trail of which prompt and seed produced a final asset. Five concrete openings Workflows or a Workflows-like platform unlocks.

Render queues that survive crashes. A 200-shot batch render checkpoints each shot. Laptop closes? VPS reboots? The workflow resumes at shot 147 the next morning. Most creators currently mitigate this with manual restarts and a lot of `except Exception: continue`. A durable workflow makes resumability the default.

HITL approval gates for client work. Generate ten thumbnail variants, pause workflow, email client, resume on click. The workflow waits days without consuming a CPU cycle. Compare to the current pattern of cron-polling a database table.

Replayable generations. Every prompt, seed, model version, and output URL is logged. Six months later, "make me another like that one" actually works without trawling through Notion or a spreadsheet of seeds.
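What gets logged matters more than where. As a rough sketch of the record such a log would hold, assuming one JSON line per generated asset: `GenerationRecord`, `append_record`, `find_record`, and every sample value below are hypothetical, and the in-memory list stands in for a file or a workflow's event history.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class GenerationRecord:
    """Everything needed to reproduce one generated asset."""
    asset_id: str
    prompt: str
    seed: int
    model_version: str
    output_url: str


def append_record(log_lines, record):
    # One JSON object per line keeps the log append-only and easy to search.
    log_lines.append(json.dumps(asdict(record), sort_keys=True))


def find_record(log_lines, asset_id):
    """Return the record for asset_id, or None if it was never logged."""
    for line in log_lines:
        data = json.loads(line)
        if data["asset_id"] == asset_id:
            return GenerationRecord(**data)
    return None


log_lines = []  # stand-in for a JSON Lines file on disk
original = GenerationRecord(
    asset_id="thumb-007",
    prompt="neon city at dusk, wide shot",
    seed=1234,
    model_version="example-image-model-v1",  # hypothetical model name
    output_url="https://example.com/thumb-007.png",
)
append_record(log_lines, original)

found = find_record(log_lines, "thumb-007")
print(found.prompt, found.seed)
```

With the prompt, seed, and model version recovered, a follow-up request can start from the same inputs instead of from memory.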

Multi-provider failover. Pixtral fails on a frame? Activity retries with Gemini. Then Flux. The workflow does not die at the first 500. Most creators currently hand-write retry logic per provider; durable execution centralizes it.
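Centralized failover is a small amount of logic once it lives in one place. A minimal sketch, assuming two attempts per provider before moving on: `generate_with_failover`, `ProviderError`, and the two provider functions are hypothetical stand-ins, and the provider names are labels only, not real client calls.

```python
class ProviderError(Exception):
    """Raised by a provider stand-in to simulate a failed call."""


def generate_with_failover(prompt, providers, attempts_per_provider=2):
    """Try each provider in order, retrying before moving to the next.

    Returns (provider_name, output, errors_seen_along_the_way).
    """
    errors = []
    for name, call in providers:
        for attempt in range(1, attempts_per_provider + 1):
            try:
                return name, call(prompt), errors
            except ProviderError as exc:
                # Keep a trail of every failed attempt for later inspection.
                errors.append(f"{name} attempt {attempt}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


def failing_provider(prompt):
    # Stand-in for a provider that keeps returning a server error.
    raise ProviderError("HTTP 500")


def working_provider(prompt):
    # Stand-in for a provider that succeeds.
    return f"image for {prompt!r}"


providers = [
    ("pixtral", failing_provider),
    ("gemini", failing_provider),
    ("flux", working_provider),
]
used, output, errors = generate_with_failover("frame 12", providers)
print(used, len(errors))
```

A durable engine adds what this sketch lacks: each attempt is recorded, so a crash between providers does not restart the chain from the top.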

Long-running research agents. A "research one tool per day for 30 days" workflow is one long-running orchestration, not 30 cron jobs and a state-tracking spreadsheet. Agentic coding pipelines already moved this way; creative pipelines are next.

The catch for indie creators: $1.5 to $7.5 per million tokens (Mistral Medium 3.5 pricing) is competitive but not free, and Workflows production deployment requires Python plus Kubernetes for the worker layer. Inngest, LangGraph plus a Postgres checkpointer, or raw Temporal Cloud are realistic alternatives for one-person studios that do not want to commit to Mistral's stack. For agencies and small studios already standardising on Mistral models, Workflows is a natural extension.

Open questions to track

Five things the launch did not yet disclose, in order of how much they matter for production planning.

- Pricing. No per-execution fee, no preview-period tier, and no Pro versus Enterprise breakdown has been published. The Apache-2.0 SDK is free to install; the cost of running real workloads is not yet quoted.

- GA date. No commitment beyond public preview. Production-critical workflows should treat preview-tier SLAs as preview-tier.

- TypeScript and Go SDKs. Not announced.

- Self-hosted orchestrator. Not available. Regulated deployments that constrain where the orchestrator runs are blocked until this lands.

- HITL signal API surface. The docs imply signals and external events but the exact decorator or method name is not yet in public docs. Worth checking before committing a HITL design.

What to do this week

If you build creative pipelines that already cross five or more steps, install mistralai-workflows, port one workflow, and time how long it takes to write the same logic with raw Temporal plus a Mistral glue layer. The convenience delta is the deciding factor. If you are provider-agnostic, install Inngest or LangGraph alongside and benchmark all three against your real pipeline.

If you are running production AI workflows on Python scripts and try-except blocks today, you are paying the cost of brittleness in lost runs and vanished context. Durable orchestration was already worth adopting before this launch. With Mistral's model pricing in line with frontier-model API rates, the model side of the bill stays competitive while the orchestration side gets the structural fix.

Canonical sources: the Mistral announcement, the Workflows getting-started docs, the PyPI package, the starter template repository, and the underlying Temporal platform for the substrate Mistral built on.