xAI quietly shipped an Imagine Agent inside Grok's open canvas workspace on April 30, 2026, giving Grok Heavy and Super Grok subscribers a way to hand entire creative projects to an autonomous AI. The feature was first observed by early testers and rolled out without a press release. It is the first xAI feature that moves Grok Imagine beyond single-shot generation and into multi-step delegated execution, which is the direction OpenAI, Adobe, Google, and the rest of the creative AI stack are also moving in 2026.

Background

Grok Imagine launched in 2025 as xAI's image and video generation surface inside the Grok app. Across the first four months of 2026, xAI shipped feature updates at a roughly biweekly cadence, including quality and speed modes in early April, a Voice Agent API in mid-April, and a lip-sync upgrade for image-to-video on April 25. The Imagine Agent is a different kind of release. Each prior update added a new capability inside the existing single-prompt generation loop. The agent replaces the loop.

The agent runs inside Grok's open canvas, a persistent multi-step workspace that xAI introduced earlier in 2026 as a place to build longer creative projects with Grok, rather than as a chat-style, turn-by-turn surface. The open canvas was the necessary substrate. Multi-step agents need a workspace to plan in, execute against, and assemble outputs inside. xAI shipped the canvas first and the agent second.

Deep Analysis

From single-shot generation to delegated execution

Single-shot creative AI tools, including the original Grok Imagine, take a prompt and return one asset. To build a one-minute short film with consistent characters, you write the script yourself, prompt for each scene individually, regenerate when characters drift, prompt separately for voiceover, and assemble the result in an editor. The model is a tool inside your workflow.

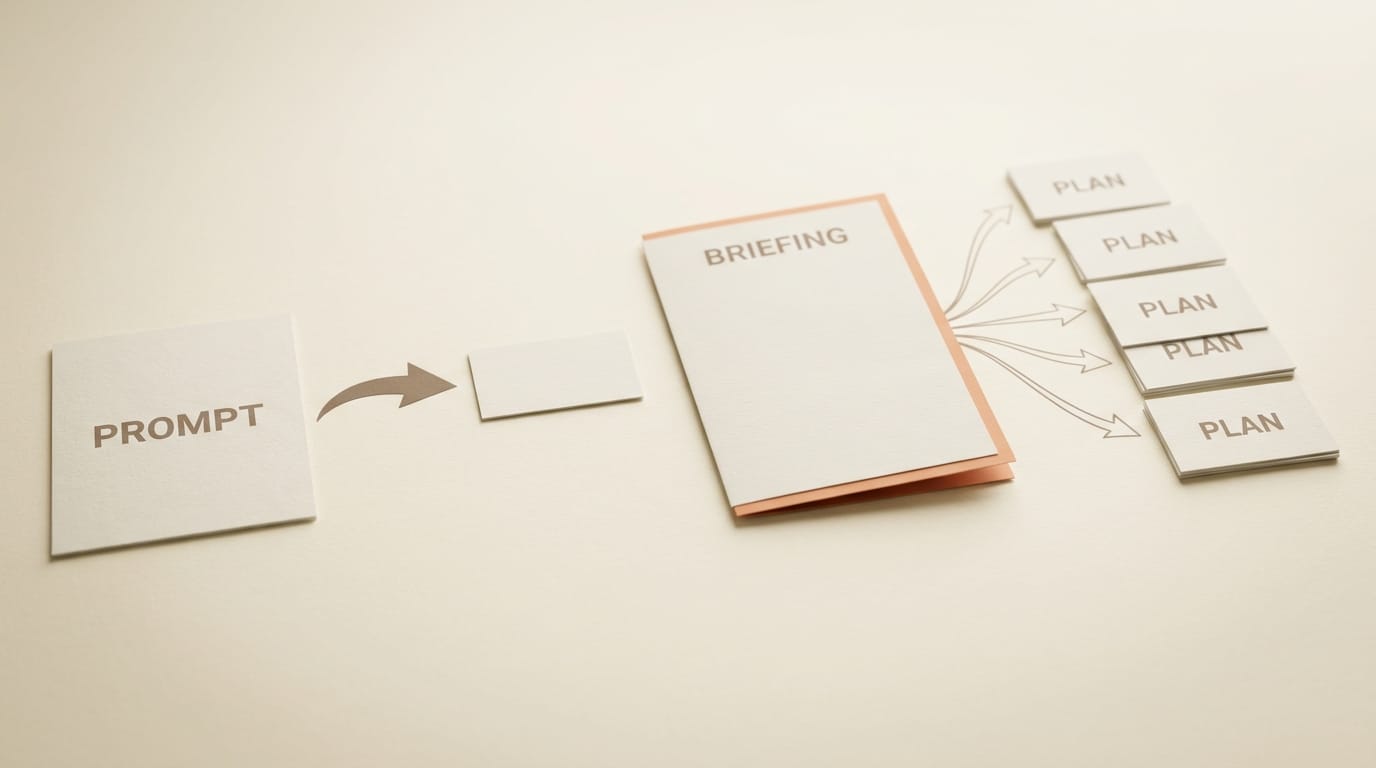

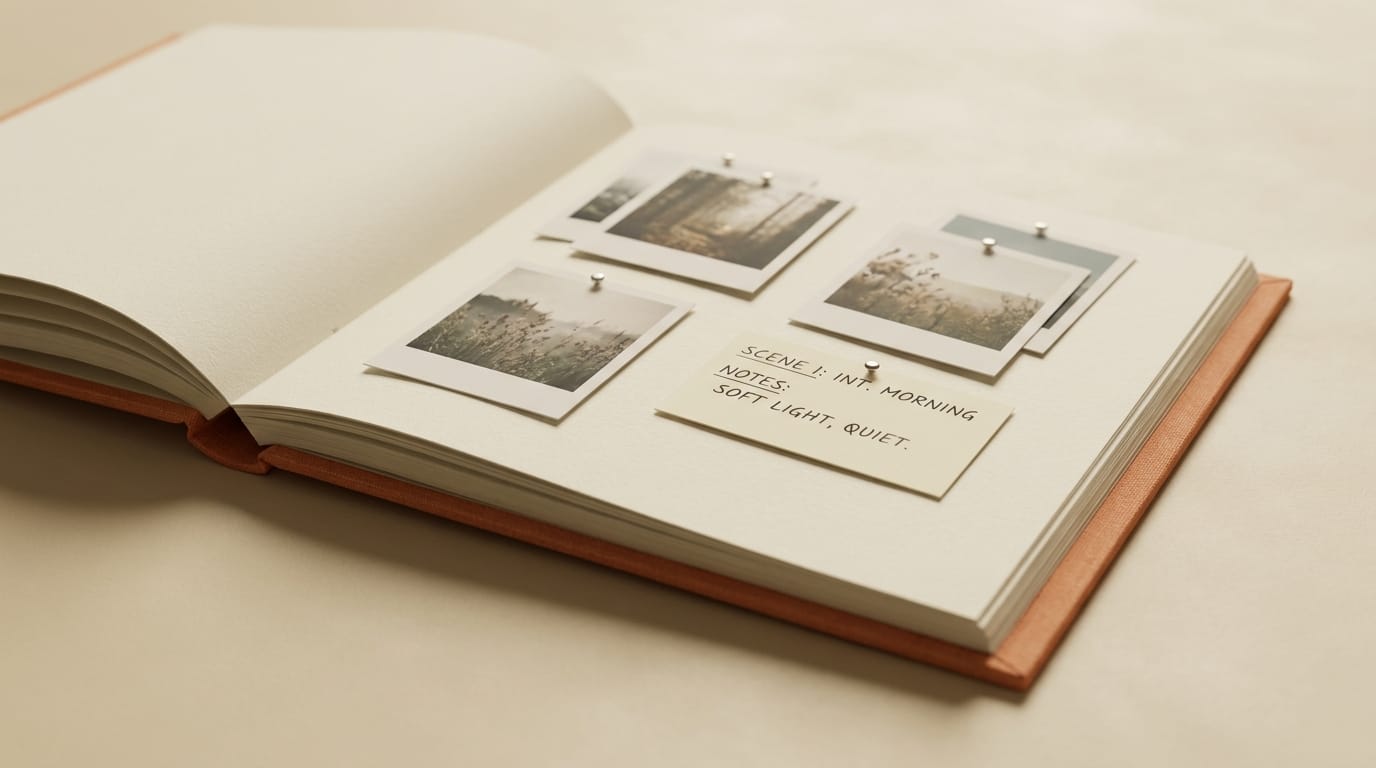

The Imagine Agent inverts the relationship. The user submits a project brief, and the agent decomposes it into a plan, generates the assets, composites the environments, stitches the sequences, and produces companion poster art. The user is the editor reviewing and approving the agent's output rather than the operator stitching individual generations together. Confirmed use cases in the early testing reports include one-minute short films, full manga sets, user-generated-content product stories, and product photoshoots across multiple SKUs.
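The brief-to-plan flow described above can be sketched in a few lines of Python. This is an illustrative model only: xAI has not published how the agent plans or executes, and `Step`, `Plan`, `decompose`, and `execute` are hypothetical names invented for this sketch, not part of any xAI API. It assumes the brief has already been broken into a scene list.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    kind: str    # "generate", "composite", "stitch", or "poster"
    target: str  # what the step is meant to produce


@dataclass
class Plan:
    brief: str
    steps: List[Step] = field(default_factory=list)


def decompose(brief: str, scenes: List[str]) -> Plan:
    """Turn a brief and a scene list into an ordered plan (hypothetical)."""
    plan = Plan(brief=brief)
    for scene in scenes:
        plan.steps.append(Step("generate", f"assets:{scene}"))
        plan.steps.append(Step("composite", f"environment:{scene}"))
    # Assembly steps run once, after every scene has its assets.
    plan.steps.append(Step("stitch", "sequence"))
    plan.steps.append(Step("poster", "poster_art"))
    return plan


def execute(plan: Plan) -> List[str]:
    """Walk the plan in order; a real agent would call generation models here."""
    return [f"{step.kind} -> {step.target}" for step in plan.steps]


plan = decompose("One-minute short film", ["opening", "chase", "ending"])
outputs = execute(plan)
for line in outputs:
    print(line)
```

The point of the sketch is the shape of the work: the user supplies the brief once, and every per-scene prompt that a single-shot workflow would require by hand becomes a step the plan generates.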

This is the same architectural shift that Anthropic, OpenAI, and Google are pursuing in their creative AI stacks, and that Mistral pushed into the coding agent space at the end of April with cloud-teleported Vibe agents. The interesting question is not whether multi-step agents will replace single-shot generation, but which surface wins. Grok Imagine Agent is xAI's bet that the answer is "inside a chat-adjacent canvas owned by the foundation model lab," not "inside a third-party creator tool that calls the lab's API."

The competitive landscape: who else is shipping creative agents

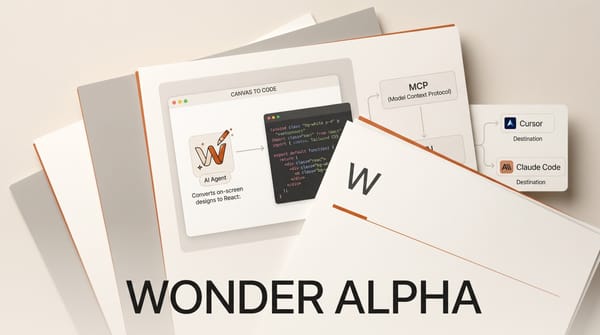

The Imagine Agent lands in a crowded April. Adobe Firefly's AI Assistant entered public beta on April 27 as Adobe's multi-step creative orchestrator across Photoshop, Premiere, and Express. Anthropic shipped Claude Creative Work Connectors on April 28, giving Claude the ability to orchestrate Figma, Adobe, and creative tool actions through MCP. Wonder's design agent went public alpha on April 30. Google's Gemini-driven creative agents continue rolling out across Workspace and Vertex.

The frame to use here is that 2026 is the year creative AI labs stop selling models and start selling delegated execution. The tools are moving up the workflow stack. xAI's distinction is that Grok Imagine Agent is the first creative agent to ship from a foundation lab whose primary model is a general-purpose chat assistant, not a specialized creative model. The agent runs Grok's image and video generation underneath, but the orchestration layer is the same Grok that handles general reasoning. The bet is that a unified agent is more useful than a specialized one because it can chain creative steps into broader workflows like research, drafting, and distribution.

Open canvas as the workspace primitive

The open canvas is a more interesting product decision than the agent itself. ChatGPT Canvas, Claude Artifacts, and Gemini's project workspaces have all converged on a similar primitive: a persistent multi-document workspace that sits next to the chat surface and accumulates the artifacts of a longer project. Grok's open canvas extends the pattern by treating image and video generations as first-class canvas objects rather than chat attachments.

That distinction matters for creative work. In a chat-attachment model, generated assets live in the message history, scrolling out of view as the conversation continues. In a canvas model, assets persist in a stable workspace that the agent can reference, edit, recombine, and assemble across sessions. For the kind of multi-asset projects the Imagine Agent targets, including manga sets and multi-SKU product photoshoots, the canvas substrate is what makes the agent usable. Without it, the agent would be generating into the void.
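The difference between the two models is easiest to see as a data-structure choice. The following is a minimal sketch under assumed names: `ChatThread` and `Canvas` are hypothetical classes written for illustration and do not reflect how Grok, or any other product, stores assets internally.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ChatThread:
    """Chat-attachment model: an asset exists only as part of a message."""
    messages: List[dict] = field(default_factory=list)

    def post(self, text: str, attachment: Optional[str] = None) -> None:
        self.messages.append({"text": text, "attachment": attachment})

    def find(self, asset_id: str) -> Optional[int]:
        # The asset has to be located by scanning the message history.
        for index, message in enumerate(self.messages):
            if message["attachment"] == asset_id:
                return index
        return None


@dataclass
class Canvas:
    """Canvas model: assets are keyed objects that persist and can be revised."""
    objects: Dict[str, dict] = field(default_factory=dict)

    def put(self, asset_id: str, kind: str, prompt: str) -> None:
        self.objects[asset_id] = {"kind": kind, "prompt": prompt, "version": 1}

    def edit(self, asset_id: str, prompt: str) -> None:
        # Revising an asset updates it in place and bumps its version.
        obj = self.objects[asset_id]
        obj["prompt"] = prompt
        obj["version"] += 1


thread = ChatThread()
thread.post("Draw the hero", attachment="hero")
thread.post("Now write the script")
thread.post("And a tagline")

canvas = Canvas()
canvas.put("hero", "image", "hero in a red coat")
canvas.edit("hero", "hero in a blue coat")

print(thread.find("hero"))
print(canvas.objects["hero"]["version"])
```

In the thread, the hero image is reachable only by walking back through the conversation. In the canvas, it is addressed directly by a stable key and carries its own revision state, which is the property a multi-asset agent relies on.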

The "no press release" signal

xAI did not ship a press release, blog post, or formal announcement for the Imagine Agent. The rollout was observed by early testers and confirmed through screenshots before any official communication. This is consistent with xAI's broader 2026 cadence, which has favored quiet feature drops over choreographed launches, but it is more notable for an agent than for a feature update.

One read is that xAI is shipping intentionally fast and quiet because the multi-step agent space is moving too quickly for set-piece launches. Adobe spent weeks building up to the Firefly AI Assistant beta. Anthropic ran a coordinated launch for Claude Creative Connectors. xAI shipped the Imagine Agent into limited beta, watched what early testers did with it, and skipped the marketing layer entirely. The other read is that the agent is not yet ready for a formal launch and the limited beta is buying time. Both can be true. What the rollout pattern signals clearly is that xAI is iterating on the agent inside production, not behind a closed beta wall, and the next several updates will probably ship the same way.

TechCrunch's ongoing coverage of Grok tracks the cadence in detail.

Impact on Creators

For creators on Grok Heavy or Super Grok plans, the Imagine Agent collapses a typical short-film or multi-asset project from a half-day of prompt-and-edit work into a single brief-and-review pass. The trade-off is the usual one for delegated agents: less granular control, more iteration on the brief instead of on individual assets, and a learning curve on writing project briefs that the agent can decompose well. Creators who already think in storyboards, shot lists, and asset breakdowns will adapt quickly. Creators who treat each generation as a standalone prompt will find the agent overshoots and underspecifies until they relearn the briefing pattern.

Pricing has not changed. The agent is included in current Grok Heavy and Super Grok plans, which puts it in the same per-month range as ChatGPT Pro and Claude Max. The bet for xAI is that the agent makes those plans stickier for creators who would otherwise churn between specialized tools. For creators evaluating where to anchor their primary AI subscription in 2026, the question shifts from "which model produces the best individual outputs" to "which agent decomposes my projects best." Grok Imagine Agent is xAI's first serious entrant in that comparison.

Access remains gated. The beta is limited even within the Grok Heavy and Super Grok cohort, and not all eligible subscribers will see the feature immediately. Sign in to grok.com and look for the open canvas workspace inside the Imagine section to check availability.

Key Takeaways

- Grok Imagine Agent is xAI's first multi-step creative orchestrator, decomposing project briefs into planned, generated, and assembled outputs inside the open canvas workspace

- The release lands in a crowded April that also includes Adobe's Firefly AI Assistant beta, Anthropic's Claude Creative Connectors, and Wonder's design agent alpha

- xAI's distinction is that the agent is built on a general-purpose chat model, not a specialized creative model, which positions Grok as a unified surface for creative and non-creative workflows

- Confirmed use cases include one-minute short films, full manga sets, UGC product stories, and product photoshoots across multiple SKUs

- Access is limited beta for Grok Heavy and Super Grok subscribers, with no pricing change

- The rollout shipped without a press release, consistent with xAI's preference for production iteration over set-piece launches

What to Watch

The first signal to track is whether xAI ships an API for the Imagine Agent. Single-shot Grok Imagine has been gated to the consumer app. An agent API would let third-party creator tools embed multi-step Grok generation inside their own workflows, which is the surface where Anthropic, OpenAI, and Google are competing hardest. If xAI keeps the agent locked inside grok.com, it positions Grok as a destination product. If it ships an API, it competes for the same orchestration substrate that Claude, GPT, and Gemini are building.

The second signal is the brief-decomposition quality on longer projects. One-minute films are the upper end of what current generation models can hold consistent across scenes. Three- and five-minute projects are the next tier, and the constraint is not generation quality but agent planning. If the next two updates lift the agent's project length and SKU count meaningfully, xAI is investing seriously in the agent layer. If updates stay focused on the underlying generation models, the agent is a wrapper rather than a roadmap.

The third signal is whether Grok Imagine Agent appears in Adobe's, Figma's, or any third-party creative tool's MCP integration list in the next quarter. The competitive frame for creative agents is increasingly about which orchestrator can call which tools. Grok Imagine Agent currently runs on xAI's own image and video stack. An expansion to call third-party generation models, asset libraries, and editing tools would put it on the same level as Claude Creative Connectors and Adobe Firefly AI Assistant. Without it, the agent stays a closed loop.