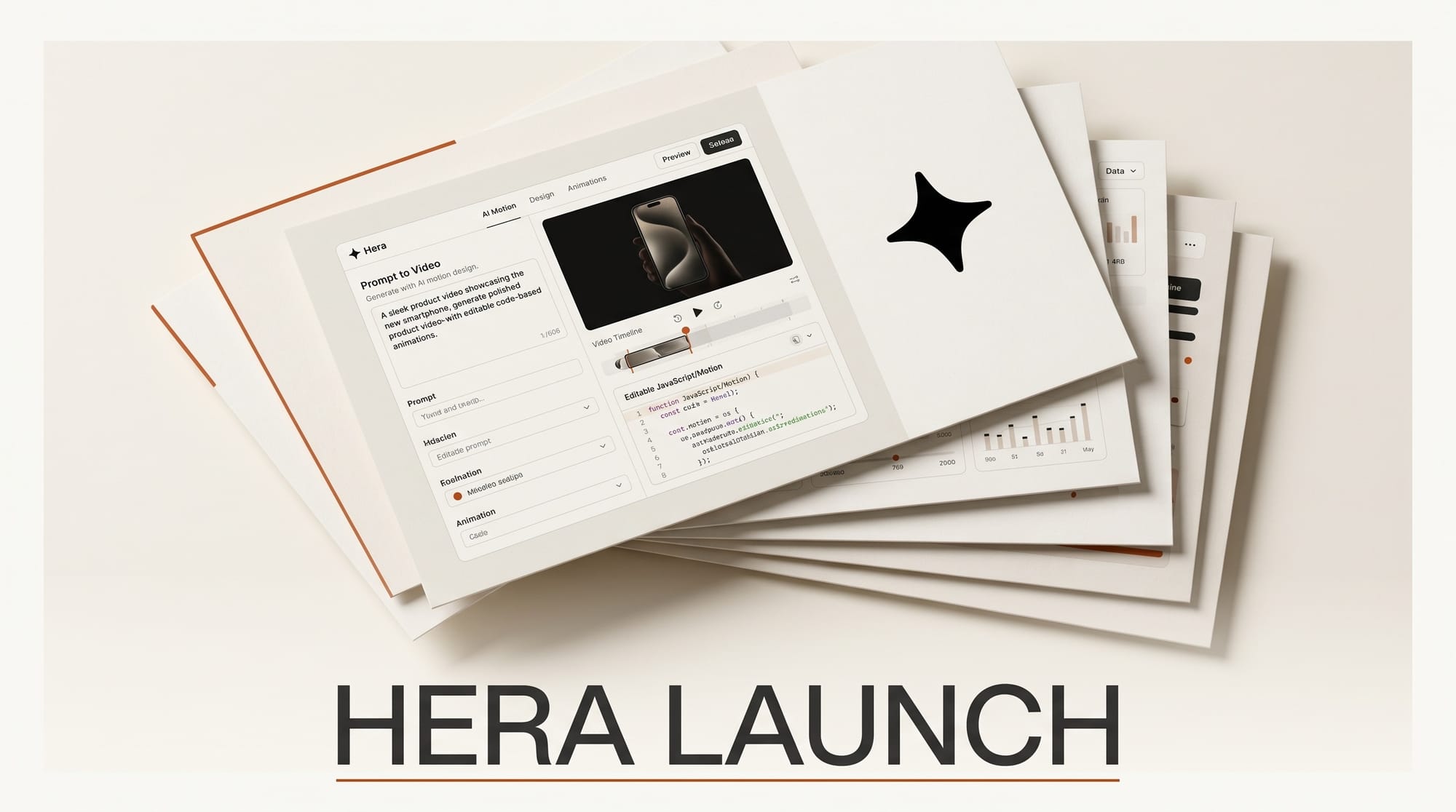

Hera, the Y Combinator-backed AI motion design tool, launched Hera Launch on April 30, 2026, a new mode that generates complete product launch videos from a single text prompt. Where a motion design studio might take weeks and thousands of dollars to produce a polished launch video, the tool targets a turnaround measured in minutes. The launch ranked third on Product Hunt on day one with 233 upvotes, signaling that the indie founder and creator audience the team is targeting is paying attention.

Background

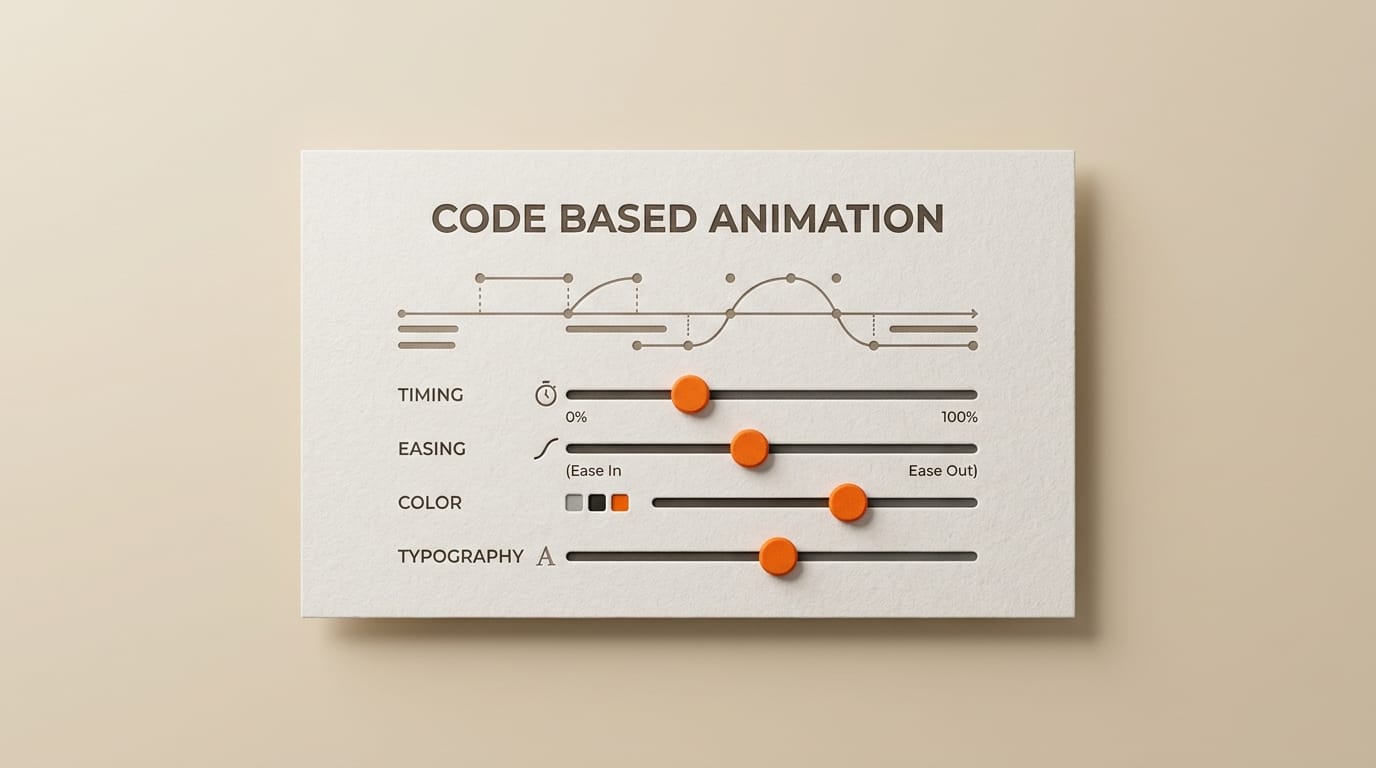

Hera debuted on Product Hunt in August 2025 as an AI animation tool that generates code-based motion graphics from prompts. Unlike text-to-video generators that produce fixed video files, Hera outputs animations where every timing, typography, and easing parameter remains editable after generation. Within weeks of that first launch, the team reported 200,000 animations created and 100,000 waitlist signups. The original tool now holds a 4.9 out of 5 rating across 127 reviews, a strong signal for an early-stage motion design product.

The April 30 follow-up, Hera Launch, is the team's first focused mode rather than a general-purpose animation generator. It targets product promotion videos specifically: the 30-to-90-second clips that creators and founders publish when shipping a new app, feature, or physical product. The AI applies opinionated decisions about pacing, motion curves, brand-appropriate typography, and visual hierarchy, drawing on the same code-based output approach so the result is not a locked render but a fully adjustable animation timeline.

How Hera Launch works

The technical distinction that separates Hera from text-to-video generators like Runway Gen-4 or OpenAI Sora is structural. Text-to-video models produce a video file: a sequence of pixels at a fixed resolution, framerate, and length. To change anything, you generate again with a new prompt. Hera produces a code-based animation: a structured representation where every element is a tracked, editable object. Want the title to fade in 200 milliseconds slower? Adjust the easing curve. Wrong font? Swap it. Brand color is off by a hex digit? Edit it. The change applies without re-running the generation.
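To make the structural difference concrete, here is a minimal sketch of a parameterized animation, as opposed to a fixed pixel sequence. Hera's actual format is not public in this article, so everything below is a hypothetical illustration: `TextElement`, its fields, the `EASINGS` table, and `opacity_at` are assumed names, not Hera's API. It shows only the general idea that, when elements are stored as parameters, an edit is a data change followed by a re-render rather than a new generation.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TextElement:
    """One editable object on the timeline (hypothetical schema, not Hera's)."""
    text: str
    font: str
    color: str        # hex color, e.g. "#1A73E8"
    fade_in_ms: int   # duration of the fade-in
    easing: str       # key into EASINGS


def ease_out_cubic(t: float) -> float:
    """Standard ease-out cubic curve: fast start, gentle finish."""
    return 1 - (1 - t) ** 3


EASINGS = {"linear": lambda t: t, "ease-out-cubic": ease_out_cubic}


def opacity_at(element: TextElement, ms: int) -> float:
    """Opacity is computed from parameters at render time, not stored as pixels."""
    t = min(max(ms / element.fade_in_ms, 0.0), 1.0)
    return EASINGS[element.easing](t)


# Stand-in for the result of a generation step (values are made up).
timeline = {
    "title": TextElement("Meet Acme", "Inter", "#1A73E9", 400, "ease-out-cubic"),
    "tagline": TextElement("Ship faster", "Inter", "#333333", 300, "linear"),
}

# The three edits from the paragraph above: a fade-in 200 ms slower,
# a different font, and a brand color corrected by one hex digit.
old_title = timeline["title"]
timeline["title"] = replace(
    old_title,
    fade_in_ms=old_title.fade_in_ms + 200,
    font="Georgia",
    color="#1A73E8",
)
```

Only the `title` entry changes; `tagline` is untouched and nothing is regenerated. A text-to-video model has no equivalent of `replace` here, because its output contains no `fade_in_ms` or `font` to address.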

For a product launch video specifically, this means a creator can iterate on the AI's first draft instead of regenerating until something matches. The opinionated decisions Hera Launch makes upfront (pacing, motion language, hierarchy) are themselves treated as starting points rather than locked constraints. The pitch is: get to "90 percent of a launch video" in minutes, then spend 20 minutes polishing rather than 3 weeks producing.

Why this matters for creators

Launch videos sit at an expensive bottleneck in the creator and indie-founder workflow. Hiring a motion designer or video editor for a 60-second product clip routinely costs several hundred to several thousand dollars and involves multiple revision rounds. Stock motion graphics templates from libraries like Storyblocks or Envato exist as the cheap alternative but produce generic output that does not adapt to brand tone or product specifics. The middle of that price-quality spectrum has been thin for years.

Hera Launch positions itself in that middle: AI-generated and opinionated about cinematic conventions, but editable at the parameter level rather than locked. For a creator shipping a side project, an indie SaaS founder announcing a new feature, or a small marketing team publishing weekly product updates, the math is different from what it was a year ago. The cost question shifts from "Can we afford to ship a launch video at all?" to "How much time do we want to spend polishing the AI's first cut?"

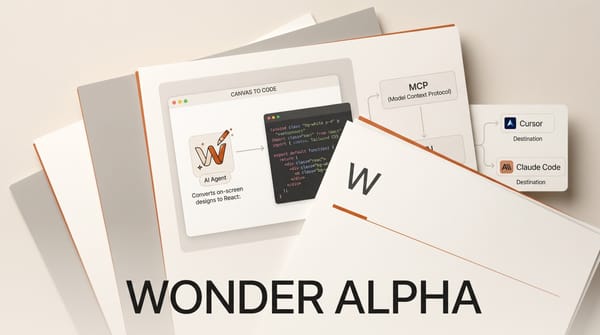

Adjacent tools point to the same trend. Runway, the video AI company, recently doubled its GPU efficiency on research compute, accelerating the cadence at which it ships new model capabilities. Tools like Synthesia, HeyGen, and Pika cover the avatar and explainer slice of the market. Hera has carved out a different lane: opinionated launch videos with code-level editability, not freeform text-to-video and not avatar lectures.

Who is behind Hera

The co-founders bring direct production experience from both sides of the launch-video problem. Peter Tribelhorn previously managed YouTube channels at Lunar X with a combined audience of more than 30 million subscribers, a scale at which weekly production budgets made the cost-quality tradeoff a daily concern. Chia-Lun Wu was the first engineer at Vyond, the video-editor company whose explainer-video tool serves around 20,000 businesses. Vyond's strength is exactly the editable-after-generation model, and Wu's experience there shows up in Hera's architectural choice to output editable timelines rather than locked renders.

Hera is a Y Combinator Summer 2025 company. The five-person team operates out of San Francisco and ships fast: roughly eight months separate the original August 2025 Product Hunt debut from the April 30 Hera Launch mode, and the metrics in between (200K animations, 100K waitlist signups) suggest the underlying motion engine was validated against real creator demand before being narrowed to the launch-video use case.

Key details

- Input: a single text prompt describing the product, target audience, and desired tone. No footage, screen recordings, or design assets required to start.

- Output: code-based animation, not a fixed video export. Every timing parameter, font choice, color, and motion curve can be adjusted in the editor after generation.

- Pricing: monthly subscription model. Specific plan tiers and prices are listed at hera.video; the free trial is the practical entry point for evaluating against a real launch.

- Track record: 200,000-plus animations created on the original Hera tool; 100,000 waitlist signups; 4.9-star rating across 127 reviews.

- Team: five people, including two co-founders with creator-economy production and explainer-video engineering backgrounds.

- Funding: Y Combinator Summer 2025 cohort. The team has not publicly disclosed seed-round figures beyond the YC investment.

- Day-one traction: third place on Product Hunt on April 30, 2026, with 233 upvotes.

What to watch

Three open questions will determine whether Hera Launch becomes a default tool for creator launch videos or stays a niche option.

First, the editing surface. Code-based animation only matters if the editor is approachable for non-technical creators. The team has not publicly demonstrated the post-generation editor in depth, and that is the make-or-break feature. If editing is harder than just regenerating with a new prompt, the architectural advantage collapses.

Second, brand consistency. Launch videos for established companies need to match brand systems: specific fonts, kerning, color tolerances, motion language. The next test is whether Hera Launch can ingest a brand kit (logos, font files, hex codes, motion conventions) and produce output that meets a brand manager's bar. Early adopters will be founders without strict brand systems; growth past that audience requires the brand-kit story.

Third, the catalog. Generic launch-video templates already exist in stock libraries. AI-generated launch videos differentiate on adaptability, but they have to actually adapt. If the model's outputs cluster around a narrow stylistic range, it competes with templates rather than with motion designers. The diversity of the next 1,000 published Hera Launch outputs is the metric that proves the differentiation, or doesn't.

The team's bet, and the bet creators are making by paying for the subscription, is that opinionated AI defaults plus parameter-level editability land closer to a hired motion designer than to a stock template. Whether that bet pays off is observable from a creator's first attempt: did the output feel custom, or did it feel templated? That answer arrives one launch at a time.