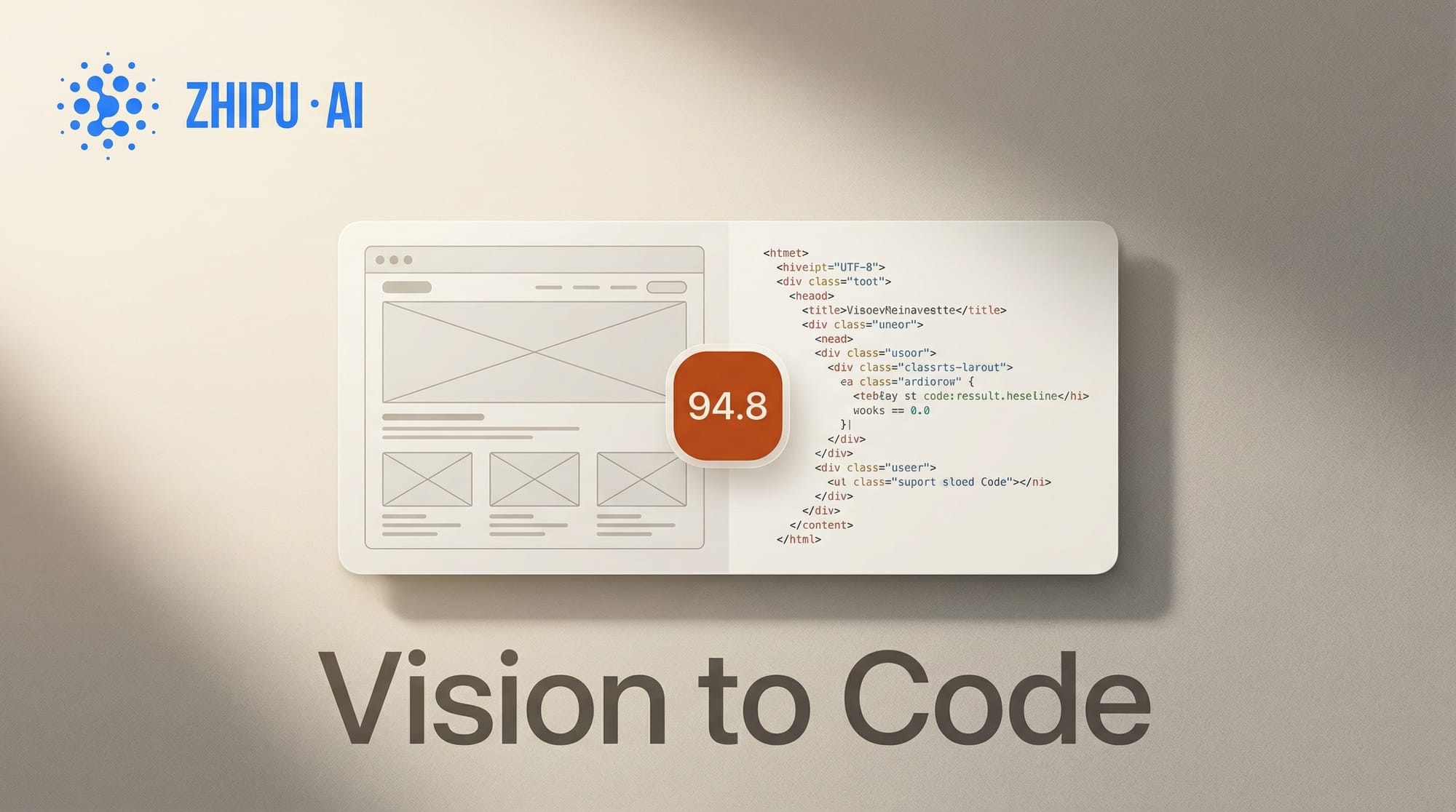

Z.ai has launched GLM-5V-Turbo, a vision-coding model that converts design mockups, screenshots, and wireframes into working frontend code. The model scored 94.8 on the Design2Code benchmark, versus 77.3 for Claude Opus 4.6 on the same test.


What Happened

GLM-5V-Turbo is Z.ai's first multimodal coding foundation model, built to bridge the gap between what a model sees and the code it writes. The model natively processes images, video, design drafts, and document layouts through its CogViT encoder, then generates code that reproduces those visual inputs as functional interfaces.

During reinforcement learning, Z.ai jointly optimized the model across 30+ task types spanning STEM, visual grounding, video understanding, GUI agents, and coding agents. The result is a model that handles design-to-code as a core capability rather than an add-on. It supports a 200K-token context window and, via the API, costs $1.20 per million input tokens and $4.00 per million output tokens.
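To make the pricing concrete, here is a minimal sketch of a per-request cost estimate. It uses only the two per-million-token prices quoted above; the function name and the token counts in the usage note are hypothetical, and it assumes billing is linear in token count, with no caching discounts or separate image surcharges.

```python
from decimal import Decimal

# Prices quoted in the article, in US dollars per one million tokens.
INPUT_USD_PER_MILLION = Decimal("1.20")
OUTPUT_USD_PER_MILLION = Decimal("4.00")

# Assumption: "200K" is read as 200,000 tokens.
CONTEXT_WINDOW_TOKENS = 200_000


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the cost of one request, assuming simple linear billing."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    million = Decimal(1_000_000)
    input_cost = Decimal(input_tokens) * INPUT_USD_PER_MILLION / million
    output_cost = Decimal(output_tokens) * OUTPUT_USD_PER_MILLION / million
    return input_cost + output_cost
```

For example, a hypothetical request with 50,000 input tokens and 8,000 output tokens would come to $0.06 + $0.032 = $0.092 under these assumptions, and filling the whole 200K-token window with input alone would cost $0.24.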

Why It Matters

Design-to-code workflows have been one of the hardest problems for AI coding tools to solve reliably. Most models treat visual input as secondary to text, leading to code that vaguely approximates a design rather than accurately reproducing it. A 94.8 Design2Code score suggests GLM-5V-Turbo can reproduce UI mockups with high fidelity, which could eliminate entire handoff cycles between designers and developers.

The model is narrowly specialized, however. On text-only coding and backend tasks, Claude still leads. But for the specific workflow of looking at a design and turning it into HTML, CSS, and JavaScript, GLM-5V-Turbo is the current benchmark leader. Teams building AI coding tools now have a model optimized specifically for visual-to-code conversion.

Key Details

- Design2Code benchmark: 94.8 (vs. Claude Opus 4.6 at 77.3)

- Native multimodal: images, video, design drafts, document layouts

- 200K-token context window for analyzing large documentation sets and codebases

- RL training across 30+ task types for robust visual grounding

- Pricing: $1.20/M input tokens, $4.00/M output tokens

- Free web interface at chat.z.ai

What to Do Next

Developers and designers can try GLM-5V-Turbo for free at chat.z.ai or call it through the API on OpenRouter. The model works best for frontend code generation from visual inputs. For teams that previously used the GLM-5 base model, GLM-5V-Turbo adds dedicated vision-coding capabilities on top of the same architecture.
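For the API route, a request might look like the sketch below. It relies on OpenRouter's OpenAI-compatible chat completions endpoint and sends the screenshot as a base64 data URL. The model slug `z-ai/glm-5v-turbo` is a guess, not a confirmed identifier, so check OpenRouter's model list before using it; the prompt text and function names are likewise illustrative.

```python
import base64
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical slug: confirm the real identifier in OpenRouter's model list.
MODEL_SLUG = "z-ai/glm-5v-turbo"

DEFAULT_PROMPT = (
    "Reproduce this design as a single HTML file with inline CSS and JavaScript."
)


def build_payload(
    image_bytes: bytes,
    mime_type: str = "image/png",
    prompt: str = DEFAULT_PROMPT,
) -> dict:
    """Build a chat completions payload with one text part and one image part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:{mime_type};base64,{encoded}"
    return {
        "model": MODEL_SLUG,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }


def request_code(payload: dict, api_key: str) -> str:
    """POST the payload to OpenRouter and return the model's text reply."""
    request = urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

In practice you would read a mockup from disk, pass its bytes to `build_payload`, and call `request_code` with an OpenRouter API key taken from an environment variable. The reply is plain text, so any HTML it contains still needs to be extracted and reviewed before use.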