The anonymous AI video model that topped the Artificial Analysis Video Arena's text-to-video and image-to-video leaderboards in April 2026 belongs to Alibaba. HappyHorse-1.0, a 15-billion-parameter model that appeared without attribution on the Video Arena leaderboard, was confirmed on April 10 as the work of engineers from the Alibaba Token Hub (ATH) AI unit, led by Zhang Di, the former Kuaishou VP who architected Kling AI. The model generates 1080p video with synchronized audio in a single pass across seven languages, using a unified transformer architecture instead of the multi-stage pipelines common among its competitors. Its Elo score of 1,415 in image-to-video leads second-place Seedance 2.0 by 57 points, the largest margin in the arena's history.

For the broader landscape, see our complete guide to AI video generation in 2026.

Background

HappyHorse-1.0 appeared on the Artificial Analysis Video Arena around April 7, 2026, with no corporate attribution. The leaderboard uses blind user voting: participants see two video outputs side by side and pick the better one without knowing which model produced which result. HappyHorse immediately climbed to first place in both text-to-video and image-to-video categories.

The anonymous entry fueled intense speculation. Previous anonymous leaderboard entries had been linked to Google, ByteDance, and other major players testing models before official launches. HappyHorse's lead was so decisive that the question of how an unknown model could outperform every known competitor by such a large margin drew as much attention as the question of who built it.

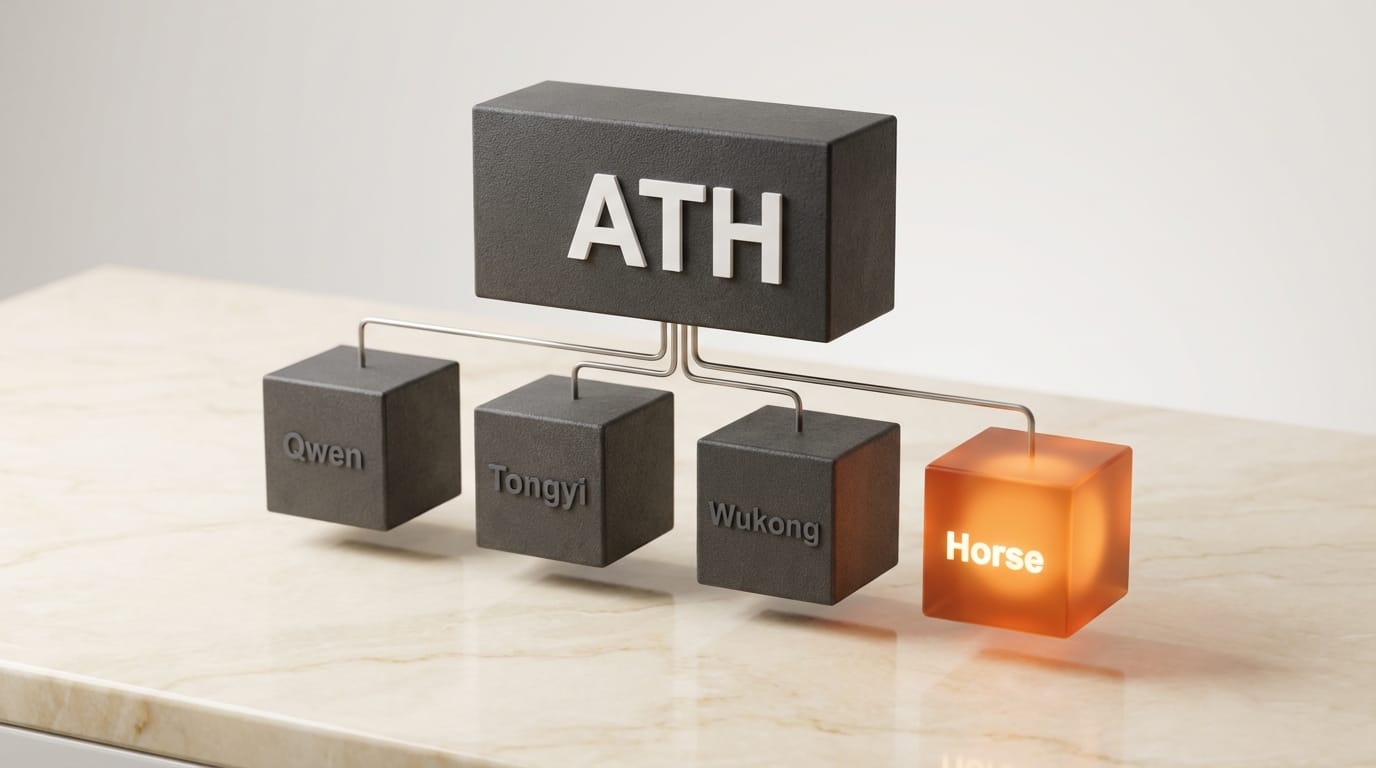

The identity reveal came on April 10 through a post from a newly created X account. TechNode reported that Alibaba confirmed the model belongs to the AI Innovation unit of ATH, a new business group established in March 2026 under CEO Eddie Wu that consolidates Alibaba's AI assets, including Tongyi Lab, the Qwen unit, and the Wukong unit. Creative AI News covered the model's initial benchmark dominance before the identity reveal.

Deep Analysis

Zhang Di's Defection: From Kling to Its Killer

The most consequential detail in the HappyHorse story is not the model itself but the person leading it. Zhang Di served as Vice President at Kuaishou and was the technical architect of Kling AI, one of the most commercially successful AI video generators. At the end of 2025, Zhang Di left Kuaishou to join Alibaba and lead multimodal AI innovation.

The result is a model that directly competes with, and now outranks, the platform Zhang Di built at his previous company. Heise reported that Kling 3.0 Omni Pro scores 1,299 on the image-to-video leaderboard, a full 116 Elo points behind HappyHorse's 1,415. The technical lead behind one of the top AI video models built a higher-ranked rival within months of switching companies.

This pattern of talent movement driving competitive advantage is becoming a defining feature of the AI video generation market. The knowledge and architectural insights that produced Kling now inform a competitor. For Kuaishou, this represents a significant talent risk. For the market, it suggests that the competitive moat in AI video generation is less about data or compute and more about the architects who design the training pipelines.

Single-Pass Audio-Video: The Architecture Advantage

Most AI video generation systems use a multi-stage pipeline: generate silent video, then add audio, then align lip movements. Each stage introduces potential errors and latency. HappyHorse uses a unified single-stream Transformer with 40 layers that processes text, image, video, and audio tokens together in one pass.
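To make "single-stream" concrete, here is a toy Python sketch that assumes the simplest reading of the description: tokens from each modality receive a modality embedding, are concatenated into one sequence, and share one self-attention, so every audio token can attend to every video token within the same layer. Sizes, token counts, and the random embeddings are illustrative assumptions. HappyHorse's actual tokenizers, layer design, and attention masking have not been published, and a real layer would use separate learned query, key, and value projections.

```python
import math
import random

# Toy single-stream attention: all modalities share ONE sequence and ONE attention.
# Dimensions, token counts, and embeddings are illustrative assumptions only.
DIM = 8
MODALITIES = ["text", "image", "video", "audio"]


def rand_vec(rng: random.Random, dim: int = DIM) -> list:
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def build_stream(token_counts: dict, rng: random.Random):
    """Concatenate per-modality tokens into one sequence, adding a per-modality
    embedding (random here, learned in a real model) so modalities stay distinguishable."""
    modality_emb = {m: rand_vec(rng) for m in MODALITIES}
    seq, labels = [], []
    for m in MODALITIES:
        for _ in range(token_counts.get(m, 0)):
            tok = rand_vec(rng)
            seq.append([t + e for t, e in zip(tok, modality_emb[m])])
            labels.append(m)
    return seq, labels


def softmax(xs: list) -> list:
    mx = max(xs)
    exps = [math.exp(x - mx) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention_weights(seq: list) -> list:
    """Single-head, unmasked scaled dot-product attention weights, using the raw
    tokens as both queries and keys for brevity: every token sees every token."""
    scale = 1.0 / math.sqrt(len(seq[0]))
    return [
        softmax([scale * sum(q * k for q, k in zip(query, key)) for key in seq])
        for query in seq
    ]


rng = random.Random(0)
seq, labels = build_stream({"text": 3, "image": 2, "video": 4, "audio": 3}, rng)
weights = self_attention_weights(seq)

# Attention mass the first audio token places on video tokens in this toy run.
first_audio = labels.index("audio")
audio_to_video = sum(w for w, lab in zip(weights[first_audio], labels) if lab == "video")
print(f"sequence length {len(seq)}, audio->video attention mass {audio_to_video:.3f}")
```

In a multi-stage pipeline, by contrast, an audio model would typically receive the finished video only as fixed conditioning and could not influence it.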

The practical advantage is native lip synchronization. Because audio and video are generated jointly, the lip movements are not retrofitted onto a silent video but produced as an integral part of the generation. The model supports seven languages (Mandarin, Cantonese, English, Japanese, Korean, German, French) with this native synchronization, compared to the typical approach of supporting one or two languages and then dubbing the rest.

The inference performance is notable: 1080p output in approximately 38 seconds on a single NVIDIA H100, using an 8-step denoising process without classifier-free guidance (CFG). For comparison, most competing models use 25 to 50 denoising steps. Dropping CFG also removes the second forward pass that guidance normally adds to every step, so the compute saving is larger than the step count alone implies. The low step count suggests aggressive distillation work, likely trading a small amount of quality for a large speed gain.
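The speed claim is easier to compare in network forward passes (function evaluations) than in steps, because classifier-free guidance typically runs the network twice per step, once with the prompt and once without. The sketch below counts forward passes in a toy Euler sampler. The flow-matching-style update, the guidance scale of 5.0, and the `CountingModel` stand-in are assumptions for illustration, not HappyHorse's sampler, and the comparison assumes the 25-to-50-step competitors use CFG.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CountingModel:
    """Hypothetical stand-in for a denoising network that counts its forward passes."""
    calls: int = 0

    def velocity(self, x: float, t: float, cond: bool) -> float:
        self.calls += 1
        # Toy velocity pulling x toward 1.0 (conditional) or 0.0 (unconditional);
        # t is unused here, but a real network would condition on it.
        target = 1.0 if cond else 0.0
        return target - x


def sample(model: CountingModel, steps: int, cfg_scale: Optional[float] = None) -> float:
    """Euler sampler over t in [0, 1): one network call per step, two with CFG."""
    x = 0.0  # toy scalar latent; a real sampler starts from random noise tensors
    dt = 1.0 / steps
    for i in range(steps):
        t = i * dt
        v_cond = model.velocity(x, t, cond=True)
        if cfg_scale is None:
            v = v_cond
        else:
            # Classifier-free guidance: an extra unconditional pass every step.
            v_uncond = model.velocity(x, t, cond=False)
            v = v_uncond + cfg_scale * (v_cond - v_uncond)
        x += dt * v  # Euler update
    return x


def nfe(steps: int, use_cfg: bool) -> int:
    """Number of network evaluations needed for one sample."""
    model = CountingModel()
    sample(model, steps, cfg_scale=5.0 if use_cfg else None)
    return model.calls


for label, steps, use_cfg in [
    ("8 steps, no CFG", 8, False),
    ("25 steps with CFG", 25, True),
    ("50 steps with CFG", 50, True),
]:
    print(f"{label}: {nfe(steps, use_cfg)} forward passes")
```

This gives 8 forward passes versus 50 to 100, roughly 6x to 12.5x fewer, before accounting for differences in per-pass cost between models. Implementations that batch the conditional and unconditional passes together reduce overhead but still perform both computations.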

However, the single-pass architecture may come with a tradeoff. On the Artificial Analysis leaderboard, Seedance 2.0 edges HappyHorse in both audio-inclusive categories (text-to-video with audio: 1,219 vs 1,205; image-to-video with audio: 1,162 vs 1,161). This suggests that while the unified architecture produces stronger base video quality, competing approaches may still hold an edge in audio-specific quality. The margins are narrow, though: the 1-point gap is effectively a tie, and future training runs could close the 14-point gap.
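Elo gaps translate into head-to-head preference rates. Assuming the arena's ratings follow the standard Elo convention (a 400-point logistic scale), the expected share of blind votes model A wins against model B is 1 / (1 + 10^((R_B - R_A) / 400)). The Seedance 2.0 image-to-video rating of 1,358 below is derived from the reported 57-point gap rather than quoted directly.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score: the share of head-to-head votes A is
    expected to win against B on a 400-point logistic scale."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Ratings as reported in the article (Seedance I2V derived from the 57-point gap).
matchups = {
    "I2V: HappyHorse vs Seedance 2.0": (1415, 1358),
    "I2V: HappyHorse vs Kling 3.0 Omni Pro": (1415, 1299),
    "T2V with audio: Seedance 2.0 vs HappyHorse": (1219, 1205),
    "I2V with audio: Seedance 2.0 vs HappyHorse": (1162, 1161),
}

for name, (a, b) in matchups.items():
    print(f"{name}: gap {a - b}, expected vote share {expected_score(a, b):.1%}")
```

Under that assumption, the 57- and 116-point image-to-video leads correspond to winning roughly 58% and 66% of pairwise votes, while Seedance's 14- and 1-point audio-category leads correspond to about 52% and 50%, close to a coin flip.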

The Open-Source Question: Claims vs Reality

Press releases circulating on April 9-10 described HappyHorse as "fully open-source with complete commercial licensing," claiming that model weights, distilled versions, a super-resolution module, and inference code were available on GitHub. These claims are premature at best.

According to WaveSpeedAI's verification, as of April 10 no weights are publicly downloadable, no official GitHub repository exists (the repositories found are unofficial fan-made projects), no Hugging Face model card or weights have been published, and no license file has been released. Alibaba's own statement describes the model as "currently in internal testing" with "API access to open soon."

The gap between press release claims and actual availability is significant because it affects how the community should evaluate HappyHorse's competitive position. A benchmark-leading model with open weights would reshape the market. A benchmark-leading model with no public access is a demonstration of capability, not a product. Creators should treat HappyHorse's open-source claims as forward-looking until weights are independently verified and downloadable.
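For creators who want to check the open-source claims themselves, the sketch below applies three basic tests to a Hugging Face model repository: is it published under a namespace you have independently confirmed as official, does it contain actual weight files, and does it declare a license. It reads the Hub's public model-info endpoint (https://huggingface.co/api/models/ followed by the repo ID); the field names `siblings`, `rfilename`, `cardData`, and `tags` reflect that JSON API as we understand it, so verify them against the Hub documentation. The repo IDs and `OFFICIAL_ORG_PLACEHOLDER` are hypothetical: Alibaba has not announced an official namespace for HappyHorse.

```python
import json
import urllib.request
from typing import Any, Dict, Iterable

HF_MODEL_API = "https://huggingface.co/api/models/"
WEIGHT_SUFFIXES = (".safetensors", ".bin", ".gguf", ".pt", ".pth")


def fetch_model_info(repo_id: str) -> Dict[str, Any]:
    """Fetch public metadata for a Hugging Face model repo (requires network)."""
    with urllib.request.urlopen(HF_MODEL_API + repo_id, timeout=10) as resp:
        return json.load(resp)


def check_release(info: Dict[str, Any], trusted_orgs: Iterable[str]) -> Dict[str, bool]:
    """Apply three basic release checks to a model-info dict."""
    repo_id = info.get("id", "")
    org = repo_id.split("/", 1)[0] if "/" in repo_id else ""
    files = [s.get("rfilename", "") for s in info.get("siblings", [])]
    card = info.get("cardData") or {}
    tags = info.get("tags", [])
    return {
        # 1. Published under a namespace you have independently confirmed as official.
        "trusted_namespace": org in set(trusted_orgs),
        # 2. Actual weight files exist, not just a README.
        "has_weight_files": any(f.endswith(WEIGHT_SUFFIXES) for f in files),
        # 3. A license is declared in the card metadata or in the tags.
        "declares_license": bool(card.get("license"))
        or any(t.startswith("license:") for t in tags),
    }


# Offline usage with made-up sample dicts (no network call).
TRUSTED = {"OFFICIAL_ORG_PLACEHOLDER"}
fan_repo = {"id": "some-fan/happyhorse-1.0", "siblings": [{"rfilename": "README.md"}]}
candidate = {
    "id": "OFFICIAL_ORG_PLACEHOLDER/HappyHorse-1.0",
    "siblings": [{"rfilename": "model.safetensors"}, {"rfilename": "LICENSE"}],
    "tags": ["license:other"],
}
print(check_release(fan_repo, TRUSTED))
print(check_release(candidate, TRUSTED))
```

To check a live repo, pass `fetch_model_info("org/name")` to `check_release`. Passing all three checks is necessary but not sufficient: a namespace can only be trusted once Alibaba links to it from an official channel.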

Alibaba also clarified that HappyHorse "does not currently have an official website," warning that multiple unauthorized sites have appeared. This mirrors a pattern seen with other Chinese AI model launches, where third-party SEO operators set up lookalike sites before the team has published anything official.

Alibaba's ATH: E-Commerce to AI Powerhouse

HappyHorse is the first major model publicly linked to Alibaba's restructured ATH (Alibaba Token Hub) organization. CEO Eddie Wu established ATH in March 2026 to consolidate Alibaba's fragmented AI efforts, merging Tongyi Lab, the Qwen large language model unit, the Wukong unit, and an AI innovation division into a single business group.

The organizational context matters because HappyHorse emerged from an unusual origin: the Future Life Laboratory within Taotian Group, Alibaba's domestic e-commerce division that oversees Taobao and Tmall. A benchmark-leading AI video model built inside an e-commerce R&D lab, not a dedicated AI research organization, suggests that Alibaba's AI capabilities are more distributed and deeper than the market has recognized.

For the competitive landscape, HappyHorse moves Alibaba, which already had a presence in video through its open-weight Wan models, into the top tier of video generation alongside ByteDance (Seedance), Kuaishou (Kling), and Google (Veo). Combined with the Qwen language model family, Alibaba now competes at the frontier of both text and video generation, the two modalities that matter most for creative AI applications.

Impact on Creators

The immediate impact for creators is limited because HappyHorse is not yet publicly available. There is no API, no web interface, and no downloadable weights. The benchmark performance demonstrates what is technically possible but does not translate into a tool creators can use today.

The longer-term implications are more significant. If HappyHorse's weights are released with a permissive commercial license as claimed, a model that generates 1080p video with native audio sync in roughly 38 seconds on a single H100 would dramatically lower the cost of AI video production. Consumer GPU versions, reportedly in development, could bring that capability to creators running models locally, making local generation a practical alternative to cloud services.
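For a rough sense of what "dramatically lower cost" means, the GPU rental cost per generation is the generation time in hours multiplied by the hourly rate. The sketch takes the reported 38-second H100 figure at face value; the hourly rates are hypothetical, and the clip duration behind the 38-second figure has not been specified, so a cost per second of finished video cannot be derived from it.

```python
SECONDS_PER_CLIP = 38  # reported H100 time for one 1080p generation


def gpu_cost_per_clip(seconds_per_clip: float, usd_per_gpu_hour: float) -> float:
    """GPU rental cost of one generation, ignoring retries, storage, and idle time."""
    return seconds_per_clip / 3600 * usd_per_gpu_hour


def clips_per_gpu_hour(seconds_per_clip: float) -> float:
    """Back-to-back generations that fit into one GPU-hour."""
    return 3600 / seconds_per_clip


# Hypothetical H100 rental rates in USD per hour; real prices vary by provider.
for rate in (2.0, 3.0, 4.0):
    print(f"${rate:.2f}/h -> ${gpu_cost_per_clip(SECONDS_PER_CLIP, rate):.3f} per clip")
print(f"{clips_per_gpu_hour(SECONDS_PER_CLIP):.1f} clips per GPU-hour")
```

At $2 to $4 per H100-hour this works out to roughly 2 to 4 US cents per generation, or about 95 generations per GPU-hour, before retries, failed generations, and any upscaling.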

For creators currently using Kling, Seedance, or Veo, HappyHorse's native audio-video generation represents a workflow simplification. Eliminating the separate audio and lip-sync steps removes two stages of potential error and reduces total production time. When and if the model becomes accessible, synchronized audio would arrive as a native output rather than a post-processing step.

The competitive pressure HappyHorse creates also benefits creators indirectly. Seedance, Kling, and Veo will need to match its quality or compete on access, pricing, or ecosystem integration. More competition in the benchmark-leading tier pushes prices down and quality up across the board, as detailed in our AI video benchmark comparison.

Key Takeaways

- HappyHorse-1.0 is a 15B-parameter AI video model from Alibaba's ATH unit, led by ex-Kling architect Zhang Di

- It tops the Artificial Analysis leaderboard at 1,415 Elo (image-to-video), 57 points above Seedance 2.0, the largest gap in arena history

- The unified single-stream Transformer generates 1080p video with native audio sync in about 38 seconds on one H100

- Open-source claims are unverified: no weights, code, or licenses are publicly available as of April 10

- The model signals Alibaba's bid for the top tier of AI video generation through its reorganized ATH business group

What to Watch

The weight release timeline is the critical variable. If Alibaba follows through on its open-source claims within weeks, HappyHorse becomes the most capable open-weight video model available and a direct threat to Seedance's and Kling's commercial positions. If the release is delayed or comes with restrictive terms, the model remains a benchmark demonstration rather than a market-moving product.

Watch for Kuaishou's response. Zhang Di architected Kling; he now leads a model that outranks it by 116 Elo points in image-to-video. Kuaishou will need to accelerate its roadmap or risk losing its competitive position to a model designed by someone who knows its architecture intimately.

The reported consumer GPU versions are worth tracking for creators who run models locally. A benchmark-leading video model that runs on accessible hardware would be a step change for independent creators. Watch Hugging Face and GitHub for official releases from Alibaba's ATH team.
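Whether a 15-billion-parameter model fits on consumer hardware is largely a memory question. The sketch below estimates the memory needed for the weights alone at common precisions and compares it with a 24 GiB consumer card. It assumes the reported 15B figure covers the whole model and ignores activations (large for 1080p video), text and audio encoders, and the VAE, so real requirements would be higher. The consumer GPU versions could also be smaller distilled models, which would change the arithmetic.

```python
PARAMS = 15e9  # reported parameter count; assumed to cover the full model
GIB = 2**30
CONSUMER_VRAM_GIB = 24.0  # assumption: a high-end 24 GiB consumer card

# Bytes per parameter for common weight formats.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16/fp16": 2.0, "int8": 1.0, "4-bit": 0.5}


def weight_gib(params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in GiB (excludes activations, encoders, VAE)."""
    return params * bytes_per_param / GIB


for fmt, bpp in BYTES_PER_PARAM.items():
    size = weight_gib(PARAMS, bpp)
    verdict = "fits" if size < CONSUMER_VRAM_GIB else "does not fit"
    print(f"{fmt:>9}: {size:5.1f} GiB of weights, {verdict} in {CONSUMER_VRAM_GIB:.0f} GiB")
```

In bf16 the weights alone take about 28 GiB, more than a 24 GiB card holds, while 8-bit (about 14 GiB) or 4-bit (about 7 GiB) quantization would fit the weights, possibly at a quality cost that would need to be measured.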

This analysis was produced by Creative AI News.
