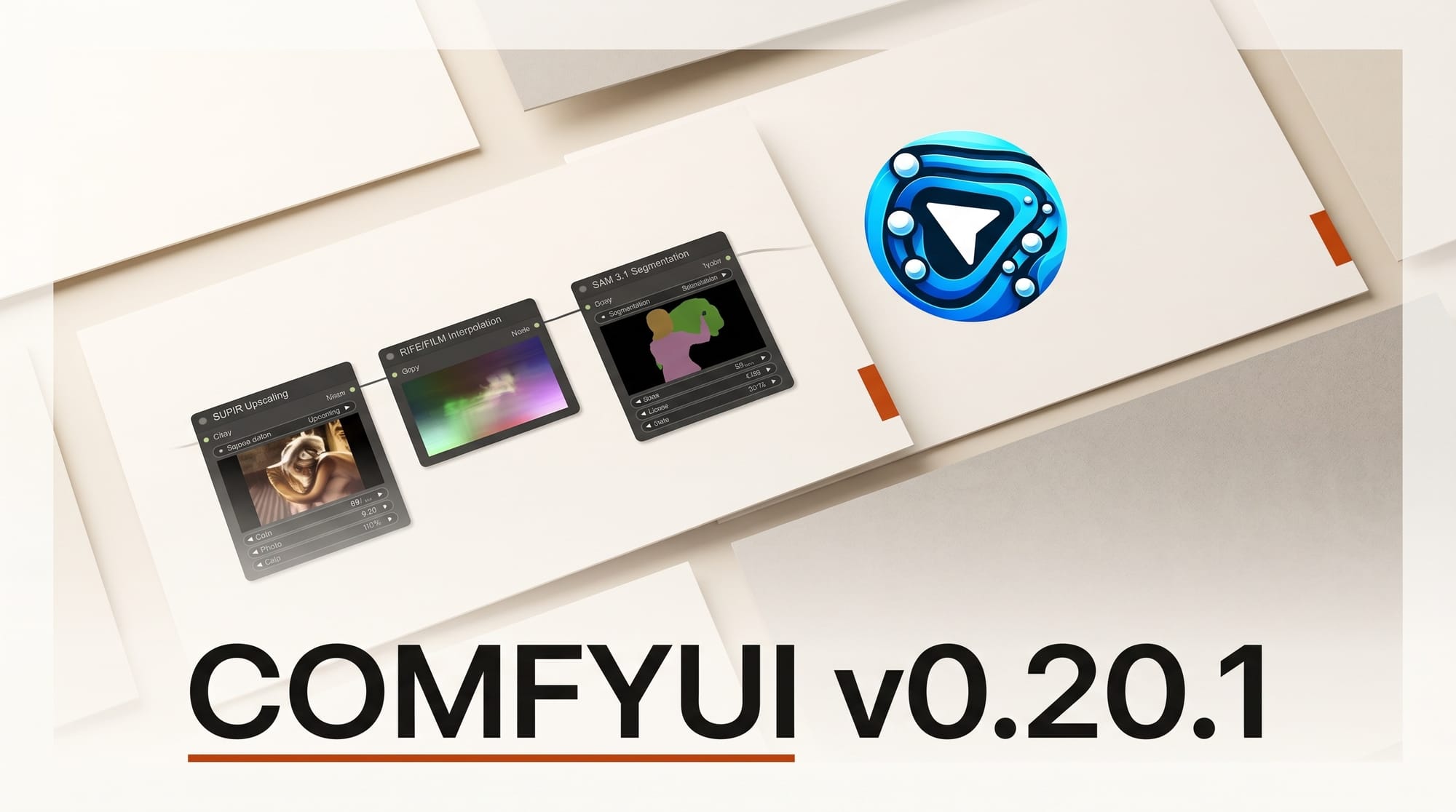

ComfyUI shipped v0.20.1 on April 27, 2026, bundling four foundational nodes that creators have been requesting for months: SUPIR upscaling, RIFE and FILM frame interpolation, and SAM 3.1 segmentation. The release also expands the partner-node catalog with Google Veo 3 Lite, native 4K output for Veo and Kling, and GPT-Image-2 versioning inside the GPTImage node. The headline is not any single node. The headline is that ComfyUI now ships post-processing parity with paid platforms inside the open-source workflow graph.

Background

The Comfy-Org team published v0.20.1 on April 27 at 21:53 UTC, hours after v0.20.0 dropped earlier the same afternoon. The two releases close out a sprint that brings a long list of post-processing capabilities into core. Until this release, finishing a ComfyUI render usually meant exporting to a separate tool: Topaz Gigapixel for upscaling, Flowframes or DAIN-App for interpolation, an external Photoshop plugin for SAM-based masks. Each handoff cost time and introduced file-format bugs, a persistent friction point for solo creators.

The v0.20.1 changeset closes that gap. SUPIR (Scaling-UP Image Restoration) lands as CORE-17, giving creators a high-fidelity upscaler that handles photorealism and faces without the hallucination problems older ESRGAN variants suffer from. RIFE and FILM ship together under CORE-29, covering both real-time and high-quality interpolation paths so video creators can slow-mo or smooth AI-generated clips entirely inside the graph. SAM 3.1 (CORE-34) replaces SAM2 for segmentation. Standalone audio VAE support for the LTXV pipeline rounds out the core additions.

The release lands one week after ComfyUI's $30M Series B at a $500M valuation, and shipping v0.20.0 and v0.20.1 hours apart is exactly what Series-B-funded velocity looks like. The partner-node catalog (Veo 3 Lite, 4K Kling, GPT-Image-2 versioning, ByteDance auto-downscaling for SeeDance 2) is the other half of that velocity: ComfyUI is moving fast on its own roadmap and just as fast on absorbing other vendors' newest releases as graph-callable nodes.

Deep Analysis

The post-processing parity thesis

What v0.20.1 settles is whether ComfyUI is an end-to-end production tool or a stage in a longer pipeline. Until April 27, it was a stage. You generated in Comfy, exported to Topaz for upscale, exported to Flowframes for interpolation, exported to a third tool for cleanup masks, then composited the result. Each export touched the filesystem, picked an encoder, hit a tool with a different UI, and risked color-space mismatches.

v0.20.1 collapses that pipeline into a single graph. Generation in Comfy, upscale via SUPIR in Comfy, interpolate via RIFE or FILM in Comfy, mask via SAM 3.1 in Comfy, finish in Comfy. For agency-grade deliverables, this matters more than any single feature. Iteration time drops because feedback rounds happen in one tool. Color-space and format mismatches drop because the graph holds the data in one tensor stack until export. Solo creators get the productivity gain that previously required three paid licenses.

The partner-node catalog as moat

The strategic move in v0.20.1 is not the open-source nodes; it is the partner catalog. Native 4K output for Veo and Kling means agency-grade video deliverables can be assembled without re-rendering through the vendor UIs. Veo 3 Lite as a versioned node sits next to Veo 3 standard. GPT-Image-2 is now a versioned option inside the GPTImage node. ByteDance auto-downscaling lands on the SeeDance 2 nodes. SD2 real-human support arrives.

For each partner, the integration is asymmetric. The vendor (Google, OpenAI, ByteDance) gets distribution into a graph that creators already use, complete with version pinning and node history. ComfyUI gets to be the place where the most expensive proprietary models are used most flexibly. That asymmetry is the moat. Once a creator's graph contains a Veo node, a GPT-Image-2 node, a Kling node, and a HappyHorse node alongside open weights, switching away from ComfyUI means rebuilding multiple proprietary integrations elsewhere. There is no equivalent runtime. A1111 does not have it. Adobe's Firefly Assistant runs in Adobe's apps, not as a generic graph. ComfyUI's Series B was funded on this asymmetry, and v0.20.1 is the deliverable that proves it.

The @kijai contribution model and what it means for open-source maintainer economics

Three of the core additions (SUPIR, the RIFE plus FILM interpolation pair, and the LTX audio VAE) were contributed by @kijai. This is not incidental. @kijai has been the most prolific community node author in ComfyUI for over a year. The fact that v0.20.1 ships @kijai's work as core, rather than as a third-party "comfyui-kijai-nodes" install, signals that Comfy-Org is moving the highest-quality community work into core to give creators a stable surface to build on.

This is the model open-source creative tools have been searching for since Blender's early days: a way to reward prolific community maintainers without absorbing them as employees who lose freedom to ship across the ecosystem. The Series B round explicitly named "supporting the contributor ecosystem" as a use of funds. v0.20.1 is the first release where that funding shows up as core integration of community work rather than a separate maintainer grant.

The 4K video pivot

The 4K Kling and Veo additions are the bigger story for video creators in the near term. Kling 3.0 and Veo 3 already supported 1080p inside Comfy, but native 4K through the partner nodes means professional broadcast and streaming deliverables can be assembled without leaving the graph. Combined with RIFE for frame interpolation and SUPIR for upscaling, ComfyUI now produces 4K video output that can be color-graded directly in DaVinci Resolve or sent to an NLE for final cut, without any quality-loss re-render in between.

For solo creators making YouTube-quality content, the impact is real but incremental: better quality at the same workflow. For agency teams producing client deliverables, the impact is structural: a junior compositor running a Comfy graph can now produce 4K output that previously required Resolve plus Nuke plus Topaz, all licensed at thousands per seat. The cost spread is large enough to move at least some agency work out of the proprietary stack.

Impact on Creators

For self-hosted Comfy users, the immediate action is to pull v0.20.1 and update Manager to 4.2.1. Custom-node maintainers should test against the new SAM 3.1 path and the LTX audio VAE before pushing breaking changes. If you publish workflows publicly on the 2026 ComfyUI workflow guide, label them with the minimum version so v0.19.x downloaders know to upgrade before SUPIR or interpolation branches resolve.
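The minimum-version labelling suggested above can be checked mechanically. The following is a minimal sketch, assuming versions follow a plain `MAJOR.MINOR.PATCH` pattern with an optional leading `v`; the helper names `parse_version` and `meets_minimum` are hypothetical and not part of ComfyUI or ComfyUI Manager.

```python
import re


def parse_version(text):
    """Turn 'v0.20.1' or '0.20.1' into a tuple of ints such as (0, 20, 1).

    Hypothetical helper: assumes a plain MAJOR.MINOR.PATCH string.
    """
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)", text.strip())
    if match is None:
        raise ValueError(f"not a MAJOR.MINOR.PATCH version: {text!r}")
    return tuple(int(part) for part in match.groups())


def meets_minimum(installed, minimum):
    """Return True when the installed version is at least the labelled minimum.

    Tuples of ints compare numerically, so 0.20.1 correctly ranks above 0.9.9,
    which a plain string comparison would get wrong.
    """
    return parse_version(installed) >= parse_version(minimum)


if __name__ == "__main__":
    # A workflow labelled "requires v0.20.1" opened on a v0.19.x install.
    if not meets_minimum("0.19.4", "v0.20.1"):
        print("Upgrade ComfyUI before loading this workflow.")
```

Comparing integer tuples rather than raw strings is the design choice that matters here: it keeps two-digit minor versions such as `0.20` ordered after `0.9`.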

For solo video creators, the slow-motion and frame-smoothing capability via RIFE plus FILM, combined with SUPIR for upscaling, means a 720p-generated clip can be polished to 4K-ready output without leaving the graph. The end-to-end test: generate 1080p in Veo or Kling, interpolate to 60fps in RIFE, upscale to 4K in SUPIR, mask the subject in SAM 3.1, output to DaVinci Resolve. That whole chain is now four nodes in one graph.
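The arithmetic behind that chain is simple enough to sketch. The example below is illustrative only: it assumes a 30 fps source clip (the frame rate is not stated above), takes 4K to mean UHD 3840x2160, and uses hypothetical helper names. It does not call any ComfyUI node; it only computes the scale and interpolation factors the nodes would need to achieve.

```python
from fractions import Fraction

# Assumed pixel dimensions; "4K" is taken here to mean UHD 3840x2160.
RESOLUTIONS = {"1080p": (1920, 1080), "4K": (3840, 2160)}


def upscale_factor(src, dst):
    """Per-axis scale factor from src to dst; rejects aspect-ratio changes."""
    (src_w, src_h), (dst_w, dst_h) = RESOLUTIONS[src], RESOLUTIONS[dst]
    factor_x, factor_y = Fraction(dst_w, src_w), Fraction(dst_h, src_h)
    if factor_x != factor_y:
        raise ValueError("source and target aspect ratios differ")
    return factor_x


def interpolation_multiplier(src_fps, dst_fps):
    """Integer frame-rate multiplier; this sketch only handles whole multiples."""
    if dst_fps % src_fps != 0:
        raise ValueError("target fps must be a whole multiple of source fps")
    return dst_fps // src_fps


def interpolated_frame_count(src_frames, multiplier):
    """Frames after inserting (multiplier - 1) new frames between each pair."""
    if src_frames < 1:
        raise ValueError("need at least one source frame")
    return (src_frames - 1) * multiplier + 1


if __name__ == "__main__":
    scale = upscale_factor("1080p", "4K")        # per-axis factor for the upscaler
    mult = interpolation_multiplier(30, 60)      # assumed 30 fps source
    frames = interpolated_frame_count(150, mult) # a 5-second clip at 30 fps
    print(scale, mult, frames)
```

Under these assumptions the upscaler has to double each axis, which means four times the pixels per frame, and the interpolator has to synthesize one new frame between every pair of source frames.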

For studios doing client photo work, SUPIR is the new default upscale path. SUPIR's photorealism mode preserves faces, fabric, and product detail at a level that Topaz Gigapixel reaches but ESRGAN variants do not. The license check matters: SUPIR is research-licensed, and creators using it commercially should review the upstream license. For agency work, though, the quality jump is real and the tool is finally first-class inside Comfy.

For ComfyUI graph designers building production workflows, the partner catalog change is the strategic shift. A graph that pins specific Veo, Kling, GPT-Image-2, and HappyHorse versions, alongside SUPIR and RIFE post-processing, is now portable across machines because the runtime knows how to resolve every node. That is what enables team production: the graph is the deliverable, not just the output.

Key Takeaways

- Pipeline collapse, not feature ship. v0.20.1 turns ComfyUI from a generation stage into an end-to-end production tool. Three separate tool exits removed in one release.

- The partner catalog is the moat. Native 4K Veo, 4K Kling, versioned GPT-Image-2, ByteDance SeeDance 2 nodes mean creators run proprietary models inside the graph and cannot easily port that elsewhere.

- @kijai's three core contributions signal a new maintainer model. Series-B-funded velocity shows up as elevating community work into core, not absorbing maintainers as employees.

- 4K video output is now native. Broadcast and streaming deliverables can be assembled in Comfy without re-rendering through vendor UIs. This moves real money out of legacy post-production.

- Action: pull v0.20.1, update Manager, test SAM 3.1 path. Workflow publishers should label minimum version so downloaders upgrade before branches resolve.

- License diligence on SUPIR matters. The quality jump is real but commercial usage should follow the upstream license terms.

What to Watch

Three signals over the next 60 days will determine whether v0.20.1 marks the inflection point or just keeps pace.

First, agency adoption of the 4K Veo plus Kling plus SUPIR chain for client deliverables. The benchmark is whether mid-size agencies start replacing their Topaz plus Flowframes plus Nuke chain with ComfyUI for at least some commercial work. Six weeks is enough to see this in industry posts and case studies. If yes, the production-stack story is real. If no, v0.20.1 is a quality-of-life release for the ComfyUI core audience.

Second, partner-node release cadence. ComfyUI's claim to be the canonical runtime for proprietary models depends on shipping new vendor versions within days of vendor release. v0.20.1 included GPT-Image-2 versioning the same week OpenAI shipped it. Watch whether the next round of model releases (Midjourney V8.2, Imagen 5, Veo 4 at Google I/O) show up in core within 7 days. If they do, the asymmetry holds.

Third, the @kijai integration model spreading to other prolific maintainers. If Comfy-Org continues elevating community work into core via funded contributor grants, the ecosystem locks in. If the next 60 days show one or two more high-impact community nodes hitting core, this is a sustained model, not a one-off. If not, the v0.20.1 contributor pattern becomes a snapshot rather than a roadmap.