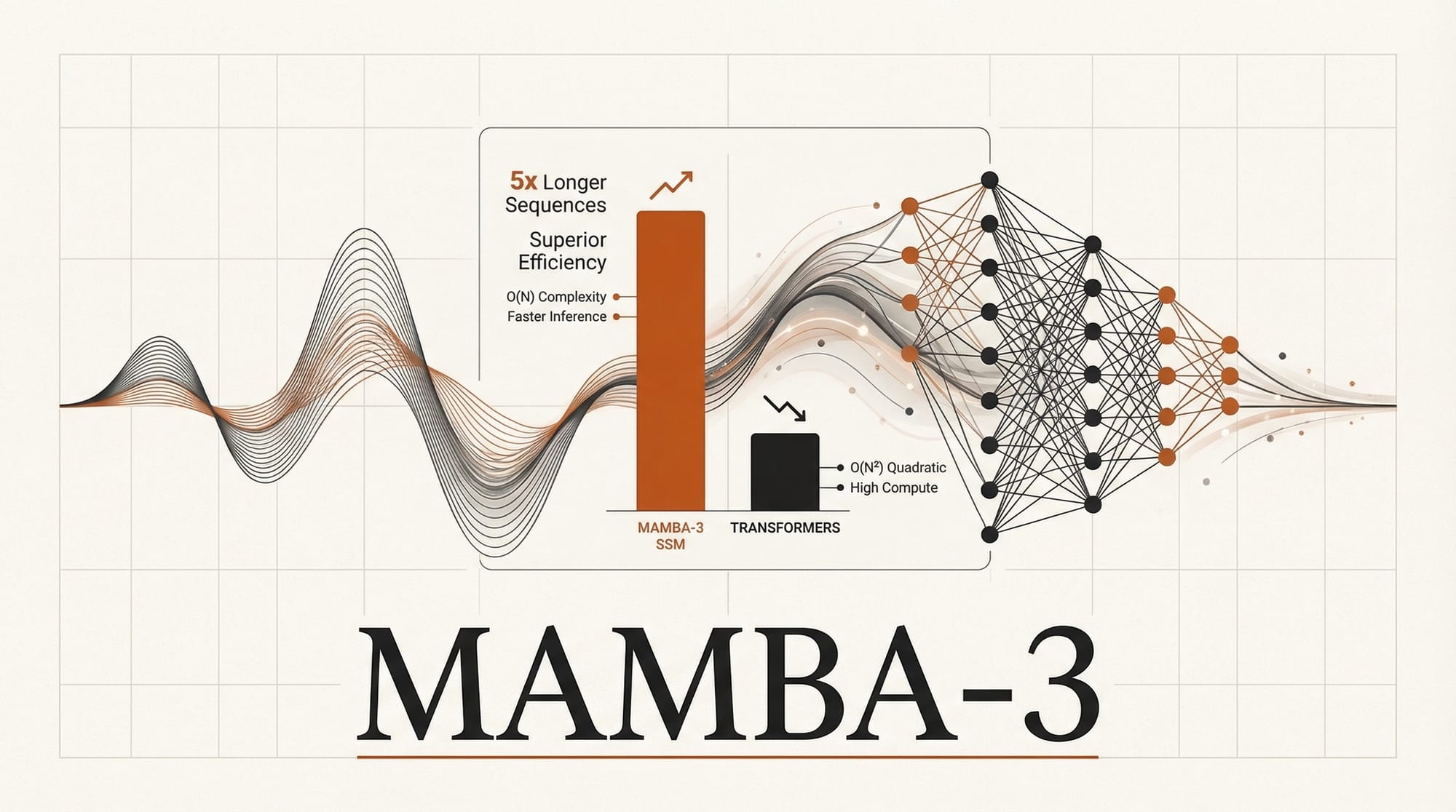

Researchers from CMU, Princeton, Together AI, and Cartesia AI published Mamba-3, a state-space model that matches a comparably sized transformer, Meta's Llama-3.2-1B, at the 1.5 billion parameter scale while running inference roughly 7x faster in the authors' benchmark. Presented at ICLR 2026 and detailed on the Together AI blog, the architecture introduces three technical innovations that close the quality gap with transformers at this scale while preserving the linear scaling that makes SSMs fundamentally cheaper to run. If the results hold at larger scales, the economics of AI inference could change substantially.

Background

The transformer architecture has dominated AI since 2017, powering every major language model from GPT to Claude to Llama. Its core mechanism, self-attention, lets every token in a sequence attend to every other token (in decoder-only language models, to every earlier token). This produces excellent results but creates a fundamental scaling problem: attention compute grows quadratically with sequence length, so doubling the context window roughly quadruples the attention cost.
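To make the quadratic growth concrete, the sketch below counts the query-key dot products that one causal (decoder-style) self-attention pass computes for a sequence of n tokens. It is a counting toy, not an attention implementation. Causal masking roughly halves the work compared with full attention, but it leaves the quadratic growth intact.

```python
def causal_attention_dot_products(n: int) -> int:
    """Count query-key dot products in one causal self-attention pass.

    Token i attends to itself and every earlier token, so it needs i + 1 scores.
    """
    total = 0
    for i in range(n):
        total += i + 1
    return total


for n in (1024, 2048, 4096):
    print(n, causal_attention_dot_products(n))

ratio = causal_attention_dot_products(2048) / causal_attention_dot_products(1024)
print(f"doubling 1024 -> 2048 tokens multiplies the work by {ratio:.3f}")
```

The ratio approaches 4 as n grows, which is the "doubling quadruples" rule applied to the attention scores.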

State-space models take a different approach. Derived from control theory, SSMs process sequences through a fixed-size latent state that compresses past information into a constant-size representation. This gives them linear scaling: doubling the sequence length only doubles the cost. The tradeoff has been capability. Until now, SSMs produced noticeably worse results than transformers on tasks requiring precise recall or complex reasoning.
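Here is a minimal sketch of the idea using the simplest possible SSM: one scalar state and fixed coefficients `a`, `b`, `c`. These are hypothetical illustrative values, not taken from any Mamba model. Each token costs one constant-time update and the state never grows, which is where the linear scaling comes from.

```python
def ssm_scan(xs, a=0.9, b=1.0, c=1.0):
    """Run a scalar linear state-space recurrence over a sequence.

    h_t = a * h_{t-1} + b * x_t   (update the fixed-size state)
    y_t = c * h_t                 (read out from the state)
    """
    h = 0.0  # everything the model remembers about the past lives here
    ys = []
    for x in xs:
        h = a * h + b * x
        ys.append(c * h)
    return ys


print(ssm_scan([1.0, 0.0, 0.0, 0.0]))
```

An impulse at the first position fades geometrically (1, 0.9, 0.81, ...). That fading is the lossy compression of past information described above. Real SSMs use vector or matrix states, and Mamba makes the coefficients depend on the input, but the per-token cost still does not depend on sequence length.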

The Mamba lineage traces through four major milestones. S4 (2022), from Albert Gu and collaborators, showed that structured state-space models could outperform transformers on long-range benchmarks such as Long Range Arena. Mamba (December 2023) added selective state spaces, allowing the model to focus on relevant parts of the input rather than processing everything uniformly. Mamba-2 (May 2024) improved efficiency with its structured state-space duality (SSD) framework. Mamba-3 now closes the remaining quality gap through three targeted innovations.

Deep Analysis

Three Technical Advances, One Goal

Mamba-3 introduces exponential-trapezoidal discretization, complex-valued state updates, and a MIMO formulation. Each targets a specific weakness in prior SSM architectures.

Standard SSMs use zero-order hold (ZOH) discretization to convert continuous dynamics into discrete steps. ZOH treats the input as constant over each step. This is computationally convenient but introduces approximation errors that accumulate over long sequences. Exponential-trapezoidal discretization replaces ZOH with a higher-order rule that, like the trapezoid rule in numerical integration, weighs the input at both ends of each step and so captures the continuous-time dynamics more accurately. The practical result is better sequence modeling accuracy without significant extra compute.
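The difference between the two rules can be checked numerically on a toy problem. The sketch below is an illustration under our own assumptions, not Mamba-3's code. It discretizes the scalar system dh/dt = -h + sin(t), whose exact solution is known, with a step size of 0.1:

* The ZOH-style update holds the current input constant over each step.
* The exponential-trapezoidal update averages the input's contribution at both ends of the step with a fixed weight of 1/2. The paper's exact weighting may differ.

Both rules propagate the state itself exactly with exp(A * dt).

```python
import math

A, B = -1.0, 1.0  # toy system dh/dt = A*h + B*x(t) with input x(t) = sin(t)


def exact(t):
    """Closed-form solution of dh/dt = -h + sin(t) with h(0) = 0."""
    return 0.5 * (math.sin(t) - math.cos(t)) + 0.5 * math.exp(-t)


def max_error(rule, dt=0.1, t_end=10.0):
    """Step the system with a discretization rule; return the worst error vs. exact."""
    decay = math.exp(A * dt)  # both rules propagate the state exactly
    h, worst = 0.0, 0.0
    for k in range(1, round(t_end / dt) + 1):
        x_prev, x_curr = math.sin((k - 1) * dt), math.sin(k * dt)
        if rule == "zoh":
            # Hold the current input constant across the whole step.
            h = decay * h + (decay - 1.0) / A * B * x_curr
        elif rule == "exp_trapezoidal":
            # Trapezoid rule on the input integral: weight both step endpoints by 1/2.
            h = decay * h + 0.5 * dt * (decay * B * x_prev + B * x_curr)
        else:
            raise ValueError(f"unknown rule: {rule}")
        worst = max(worst, abs(h - exact(k * dt)))
    return worst


for rule in ("zoh", "exp_trapezoidal"):
    print(f"{rule:16s} max error = {max_error(rule):.5f}")
```

Halving `dt` should roughly halve the ZOH error but cut the trapezoidal error by roughly four. That is the signature of a first-order versus a second-order rule.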

Complex-valued state updates let the model represent richer patterns by using complex numbers rather than real values for the state. Complex numbers encode both magnitude and phase. Multiplying a state by a real decay factor between 0 and 1 can only shrink it, whereas multiplying it by a complex factor shrinks it and also rotates it. That rotation is useful for modeling periodic patterns, oscillations, and other structured behaviors in data. The idea is not new in signal processing, but applying it systematically to the SSM state gives Mamba-3 a richer internal representation.
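The sketch below compares a real and a complex decay factor on a single scalar state. A real factor between 0 and 1 only shrinks the state. A complex factor r * e^(i*theta) shrinks it and rotates it by theta each step, so a single impulse produces a decaying oscillation. The decay 0.9 and angle pi/4 are arbitrary illustrative values, not Mamba-3 parameters.

```python
import cmath
import math


def impulse_response(decay, steps=16):
    """Feed a single impulse into h_t = decay * h_{t-1} + x_t and record Re(h_t)."""
    h = 0j
    out = []
    for t in range(steps):
        x = 1.0 if t == 0 else 0.0
        h = decay * h + x
        out.append(h.real)
    return out


real_decay = 0.9                                    # shrink only
complex_decay = 0.9 * cmath.exp(1j * math.pi / 4)   # shrink and rotate 45 degrees per step

print([round(v, 3) for v in impulse_response(real_decay)])
print([round(v, 3) for v in impulse_response(complex_decay)])
```

The real-valued response is strictly positive and decreasing. The complex-valued one changes sign with a period of eight steps while its magnitude decays at the same rate. Both cost a constant amount of work per step.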

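The MIMO (multiple-input, multiple-output) formulation lets the model feed multiple signal streams into each state update. Prior Mamba versions used SISO (single-input, single-output) formulations that fed one stream at a time into each state. MIMO helps the model capture cross-channel dependencies, loosely analogous to how multi-head attention in transformers processes multiple representation subspaces in parallel.

MIMO also changes the hardware profile. The per-step state update becomes a small matrix multiplication instead of an outer product, so each byte of state moved through memory supports more arithmetic. This matters because token-by-token decoding is typically limited by memory bandwidth rather than compute.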
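The difference between SISO and MIMO shows up in the shape of the state update. The sketch below uses a generic rank-r formulation chosen for illustration, not Mamba-3's exact parameterization. The state is an N-by-P matrix, and each step adds B @ X^T, where B is N-by-r and X is P-by-r. With r = 1 this is the SISO outer product. With larger r the state stays the same size while each update does roughly r times more multiply-adds. The byte count is a rough assumption covering only reading and writing a 16-bit state once per token.

```python
def state_update(h, a, B, X):
    """Return (a * h + B @ X^T, flop_count) using plain lists.

    h: N x P state, B: N x r input projection, X: P x r inputs.
    r == 1 is the SISO outer-product update; r > 1 is a MIMO update.
    """
    N, P, r = len(h), len(h[0]), len(B[0])
    new_h = [
        [a * h[n][p] + sum(B[n][k] * X[p][k] for k in range(r)) for p in range(P)]
        for n in range(N)
    ]
    flops = N * P * (2 * r + 1)  # per entry: 1 decay multiply + r multiplies + r adds
    return new_h, flops


def make(rows, cols, value=1.0):
    """Build a rows x cols matrix filled with `value`."""
    return [[value] * cols for _ in range(rows)]


N, P = 16, 64                 # hypothetical state dimensions
state_bytes = 2 * N * P * 2   # read + write the fp16 state once per token (rough model)

for r in (1, 4):
    new_h, flops = state_update(make(N, P, 0.0), 0.5, make(N, r), make(P, r))
    print(f"r={r}: state {len(new_h)}x{len(new_h[0])}, "
          f"{flops} FLOPs, {flops / state_bytes:.2f} FLOPs per state byte")
```

The state footprint is identical for both values of r, while the work per byte of state roughly triples here. Doing more useful work per byte is how a MIMO update can add modeling capacity without a proportional increase in decode time on bandwidth-bound hardware.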

The Benchmark Numbers and What They Mean

At the 1.5 billion parameter scale, Mamba-3 outperforms Mamba-2 and matches Meta's Llama-3.2-1B across standard language benchmarks. The speed comparison is stark. Mamba-3 SISO completes a benchmark sequence of length 16,384 in 140.61 seconds, compared with 976.50 seconds for Llama-3.2-1B, a ratio of about 6.9. That roughly 7x speedup reflects the difference between updating a constant-size state and attending over an ever-growing context, which is the linear-versus-quadratic scaling gap.

The inference cost difference is the real story. If both models run on the same hardware at the same utilization, a 7x speedup translates to roughly 7x lower cost per token at current cloud GPU pricing. In practice, batching, memory limits, and serving-stack efficiency will move that figure. For companies spending millions annually on inference compute, even partial adoption of SSM-based models could meaningfully reduce costs. The race to cheaper AI inference is already driving model architecture decisions across the industry, and Mamba-3 adds a new dimension to that competition.
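The arithmetic behind the cost claim is straightforward if both models run on the same GPU at the same hourly price and batch size, so that cost is proportional to wall-clock time. The $2.00 per GPU-hour price below is a hypothetical placeholder, not a quoted rate. Only the two benchmark times come from the reported results.

```python
MAMBA3_SISO_SECONDS = 140.61   # reported time for the length-16,384 benchmark
LLAMA_1B_SECONDS = 976.50      # reported time for Llama-3.2-1B on the same benchmark
GPU_DOLLARS_PER_HOUR = 2.00    # hypothetical price, for illustration only


def run_cost(seconds, dollars_per_hour=GPU_DOLLARS_PER_HOUR):
    """Cost of occupying one GPU for `seconds`, assuming billing is linear in time."""
    return seconds / 3600 * dollars_per_hour


speedup = LLAMA_1B_SECONDS / MAMBA3_SISO_SECONDS
print(f"speedup: {speedup:.2f}x")
print(f"Mamba-3 SISO run: ${run_cost(MAMBA3_SISO_SECONDS):.4f}")
print(f"Llama-3.2-1B run: ${run_cost(LLAMA_1B_SECONDS):.4f}")
```

Under these assumptions the cost ratio equals the speedup. In production it can differ, because per-token pricing tracks throughput, and throughput depends on how well each architecture fills the hardware at realistic batch sizes.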

The 1.5B parameter scale is both a strength and a limitation of the current results. Matching Llama-3.2-1B shows the architecture is competitive at small scale. But the largest language models operate at 70B to over 400B parameters, and there is no guarantee that SSM advantages scale proportionally. The research community is watching whether Mamba-3's improvements hold at 7B, 13B, and beyond.

The Hybrid Question

The AI architecture debate is not purely SSM versus transformer. AI21 Labs' Jamba demonstrated that hybrid architectures combining transformer attention layers with Mamba SSM layers can capture the benefits of both: the precise recall and in-context learning of attention with the efficiency of state-space processing. NVIDIA's hybrid Mamba-Transformer models, such as Nemotron-H, and other research combining attention mechanisms with SSM blocks suggest the future may be a blend rather than a replacement.
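Part of the efficiency argument for hybrids is memory during generation. Each attention layer keeps a key-value cache that grows with context length, while each SSM layer keeps a fixed-size state. The sketch below estimates that per-sequence memory for three 32-layer stacks:

* a pure transformer
* a hybrid with one attention layer in every eight (a ratio chosen for illustration)
* a pure SSM

All dimensions are hypothetical, and the attention estimate ignores grouped-query attention and other cache-shrinking techniques.

```python
def layer_schedule(n_layers, attn_every=None):
    """Layer types for a stack; attn_every=None means no attention layers at all."""
    return [
        "attn" if attn_every and (i + 1) % attn_every == 0 else "ssm"
        for i in range(n_layers)
    ]


def cache_mib(schedule, seq_len, d_model=2048, ssm_state_elems=2048 * 128, bytes_per_elem=2):
    """Approximate per-sequence inference memory for KV caches and SSM states, in MiB."""
    total = 0
    for kind in schedule:
        if kind == "attn":
            total += 2 * seq_len * d_model * bytes_per_elem   # keys + values, grows with seq_len
        else:
            total += ssm_state_elems * bytes_per_elem          # fixed-size recurrent state
    return total / 2**20


configs = {
    "pure transformer": layer_schedule(32, attn_every=1),
    "hybrid (1 in 8 attention)": layer_schedule(32, attn_every=8),
    "pure SSM": layer_schedule(32),
}
for name, schedule in configs.items():
    print(f"{name:26s} {cache_mib(schedule, seq_len=16_384):8.1f} MiB")
```

Doubling `seq_len` doubles the attention layers' share but leaves the SSM states untouched. That is the tradeoff a hybrid tunes: a few attention layers for precise recall, at a fraction of a full transformer's cache cost.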

Mamba-3's improvements make pure SSM architectures more competitive against hybrids. If the quality gap is small enough, the simplicity and efficiency of a pure SSM stack may outweigh the marginal quality gains from adding attention layers. This is an economic decision as much as a technical one: hybrid architectures are more complex to implement, optimize, and deploy.

Together AI and Cartesia AI, two of the co-authors, are both building commercial products on SSM foundations. Together AI offers inference infrastructure, while Cartesia AI builds real-time voice and language processing systems where low latency is critical. Their involvement signals that SSM architectures are moving from research curiosity to production deployment.

Implications for Creative AI

Most creative AI tools today run on transformer-based architectures. Many recent image generators use diffusion transformers. Video generation relies on temporal transformers that attend across frames. Audio synthesis increasingly uses transformer attention for long-range coherence. The compute demands of these models are a primary driver of API pricing and generation speed.

SSM-based architectures could reduce those costs substantially. For image generation pipelines that process long token sequences representing high-resolution images, linear scaling means generation time grows more slowly with resolution. For video generation, where temporal consistency across hundreds or thousands of frames creates enormous context lengths, the quadratic-versus-linear gap becomes even more consequential.
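Here is a back-of-the-envelope sketch of the resolution argument. It assumes an image is split into 16-by-16-pixel patches with one token per patch, a common vision-transformer convention. Real pipelines often tokenize a compressed latent instead, which changes the constants but not the growth rates. The 120-frame clip length is hypothetical.

```python
def image_tokens(height, width, patch=16):
    """Number of tokens if each patch x patch block of pixels becomes one token."""
    return (height // patch) * (width // patch)


def cost_growth(base_tokens, new_tokens):
    """Relative cost growth for a linear-time and a quadratic-time sequence model."""
    ratio = new_tokens / base_tokens
    return ratio, ratio ** 2


base = image_tokens(512, 512)
for label, tokens in [
    ("1024x1024 image", image_tokens(1024, 1024)),
    ("120 frames at 512x512", 120 * image_tokens(512, 512)),  # hypothetical clip length
]:
    linear, quadratic = cost_growth(base, tokens)
    print(f"{label}: {tokens} tokens, linear x{linear:g}, quadratic x{quadratic:g}")
```

Doubling the side length quadruples the token count, so a quadratic model pays roughly 16x while a linear one pays roughly 4x. Attending jointly over many frames widens the gap much further.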

Several research efforts have already explored SSM-based vision architectures, including Vision Mamba (ViM) and variants that replace the attention mechanism in vision transformers with Mamba blocks. These remain early-stage, but Mamba-3's quality improvements make the vision case more compelling. NVIDIA's push for cheaper inference hardware combined with more efficient model architectures could drive a meaningful reduction in creative AI costs over the next 12 to 18 months.
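An SSM reads a one-dimensional sequence, so SSM-based vision models first flatten the grid of image patches. With a strictly left-to-right scan, early patches never see later ones. Vision Mamba addresses this with bidirectional scanning. The sketch below illustrates that idea with a toy scalar recurrence and a raster-order flattening, both simplifications chosen for illustration rather than ViM's actual block.

```python
def raster_flatten(grid):
    """Flatten a 2D grid of patch values row by row into a 1D sequence."""
    return [value for row in grid for value in row]


def causal_scan(seq, decay=0.5):
    """Left-to-right scalar recurrence h_t = decay * h_{t-1} + x_t."""
    h, out = 0.0, []
    for x in seq:
        h = decay * h + x
        out.append(h)
    return out


def bidirectional_scan(seq, decay=0.5):
    """Sum a forward scan and a backward scan so every position sees both directions."""
    forward = causal_scan(seq, decay)
    backward = causal_scan(seq[::-1], decay)[::-1]
    return [f + b for f, b in zip(forward, backward)]


# A 2x2 patch grid whose only non-zero patch is in the bottom-right corner.
seq = raster_flatten([[0.0, 0.0], [0.0, 1.0]])
print("forward only :", causal_scan(seq))
print("bidirectional:", bidirectional_scan(seq))
```

With the forward-only scan, the top-left patch carries no information about the bottom-right one. Once the backward pass is added, it does.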

Impact on Creators

The direct impact on creative professionals today is limited. No major creative AI tool has switched from transformers to SSMs. The impact is in what comes next.

If SSM architectures reach parity with transformers at production scale, API pricing for AI image, video, and audio generation could decrease significantly. Faster inference means shorter wait times for generation. Lower compute requirements mean more capable models can run on consumer hardware, expanding the reach of local AI tools like those covered in our post on SD3.5-Flash bringing image generation to phones.

The open-source nature of the Mamba project on GitHub means the broader research community can build on these results immediately. For creators who work with open-source AI tools and run local inference, SSM-based models represent a path to running more capable models on the same hardware.

Key Takeaways

1. Mamba-3 matches transformer quality at 1.5B parameters while running inference 7x faster, closing the SSM capability gap at that scale.

2. Three innovations (exponential-trapezoidal discretization, complex-valued states, MIMO) each target a specific prior SSM weakness.

3. 7x faster inference can translate to roughly 7x cheaper per-token costs when hardware and utilization are comparable, with significant implications for AI API pricing.

4. The results are demonstrated at 1.5B parameters. Scaling to 7B+ remains unverified and is the critical next question.

5. Hybrid transformer-SSM architectures may outperform pure approaches, but Mamba-3 makes the pure SSM case stronger than ever.

What to Watch

Two factors will determine whether Mamba-3 reshapes the AI landscape or remains an academic milestone. First, scaling behavior: if the quality match holds at 7B and 13B parameters, expect rapid commercial adoption from inference-cost-sensitive providers. Second, adoption in creative AI pipelines: SSM-based vision and video architectures are still early-stage, but the combination of Mamba-3's quality improvements and the economic pressure of quadratic scaling on long-context generation creates strong incentives for exploration.

Together AI and Cartesia AI shipping commercial products on SSM foundations provides real-world validation that the research community alone cannot. If those products demonstrate SSM quality at transformer-competitive levels in production, the architecture conversation shifts from "interesting alternative" to "serious contender." The full implementation is open source. The next generation of experiments is already underway.

Deep dive by Creative AI News.
