LTX HDR beta is live. Lightricks just pushed the first piece of an HDR pipeline into its open LTX-2.3 video model: a new image-control LoRA on HuggingFace that outputs ARRI LogC3-encoded 16-bit EXR sequences, configurable up to 4000-nit peak white. Access is gated behind a terms accept for now, but the model is public and runs via ComfyUI today.

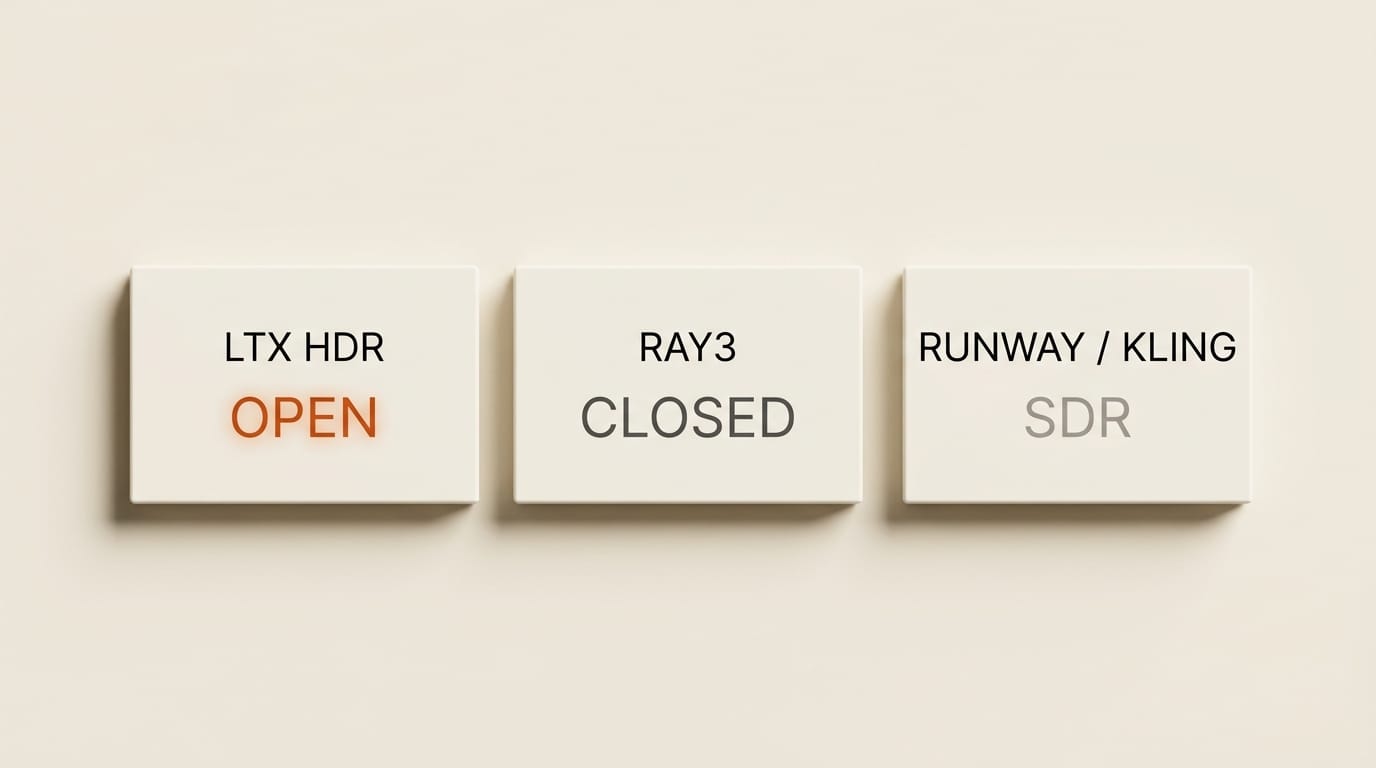

Every AI video model shipping up to this week outputs 8-bit SDR by default. Runway, Pika, Kling, Sora 2 before its late-April shutdown: all of them. Fine for social clips. The format falls apart the moment you try to grade. Highlights clip. Shadows crush. AI footage will not composite cleanly against higher-bit-depth CGI because you are mixing a 256-step tonal ladder into a 65,536-step pipeline.

Resolution was never the real issue. Dynamic range was.

What actually shipped

The model is Lightricks/LTX-2.3-22b-IC-LoRA-HDR, published April 20 as an IC-LoRA: an image-control adapter that composes with the 22B-parameter LTX-2.3 base model. It is a LoRA, not a new checkpoint, so the runtime VRAM footprint is essentially the same as vanilla LTX-2.3 inference. The HDR behavior is switched on by loading the adapter alongside your existing workflow.

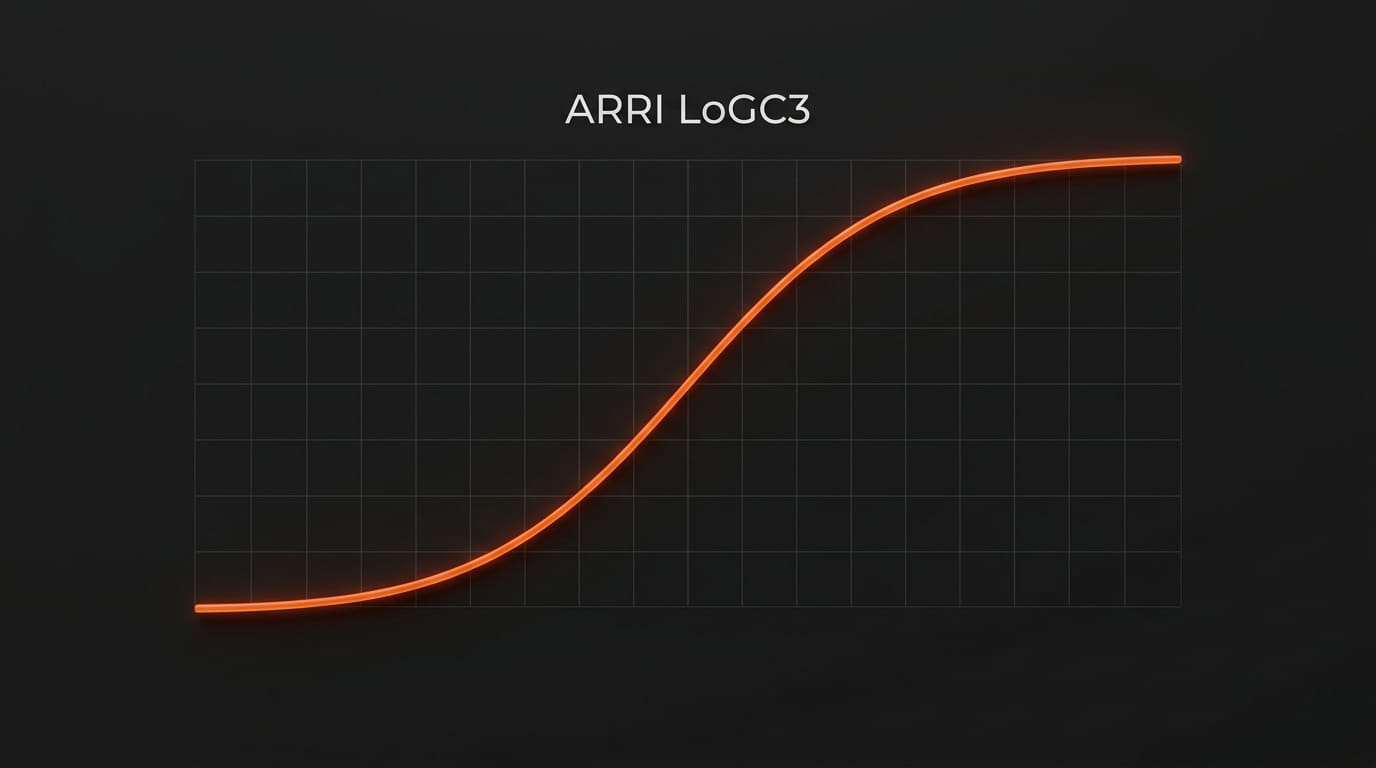

The output pipeline is the interesting part. Instead of baking a tone curve into RGB frames, the LoRA emits frames in ARRI LogC3 color space, the log encoding that Alexa cameras shoot in, with a choice of 1000-nit, 2000-nit, or 4000-nit peak white target. The ComfyUI integration ships an HDRICLoraPipeline node that writes the result as either a 16-bit or 32-bit OpenEXR image sequence plus a tone-mapped SDR proxy for preview.
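To get a feel for what the three peak-white targets mean, here is a small sketch that converts each one into stops of highlight headroom. The 100-nit SDR reference white is an assumption used for illustration (a conventional figure, not something taken from the model card), and `headroom_stops` is a hypothetical helper name.

```python
import math

# Assumption: 100 nits as the SDR reference white. Not from the model card.
SDR_REFERENCE_NITS = 100.0

def headroom_stops(peak_nits, reference_nits=SDR_REFERENCE_NITS):
    """Return how many stops (doublings of light) the peak sits above the reference."""
    return math.log2(peak_nits / reference_nits)

for peak in (1000, 2000, 4000):
    print(f"{peak} nits: {headroom_stops(peak):.2f} stops above reference")
```

Each doubling of the peak target adds exactly one stop, so the jump from 1000 to 4000 nits buys two extra stops of highlight range under this assumption.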

Why ARRI LogC3 is the right choice

Log encodings compress scene-referred linear light into a perceptually efficient 10 to 12 bit range. ARRI LogC3 specifically is the log curve that camera operators, colorists, and grading LUT vendors have collectively standardized around for the last decade. The major grading and color tools, DaVinci Resolve, FilmLight's Baselight, Lattice, have first-class LogC3 support. Every DCP master and streaming HDR delivery pipeline starts from a log negative.
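For readers who want to see the curve itself, here is a minimal sketch of the LogC3 transfer function in Python. The constants are the EI 800 values from ARRI's published LogC documentation, reproduced here from memory, so verify them against ARRI's own paper before relying on them. The function names are illustrative, and nothing here is taken from the LTX code.

```python
import math

# LogC3 (EI 800) constants per ARRI's published LogC documentation.
# Assumption: reproduced for illustration; check against ARRI's paper.
CUT = 0.010591
A, B, C, D = 5.555556, 0.052272, 0.247190, 0.385537
E, F = 5.367655, 0.092809

def logc3_encode(x):
    """Scene-linear value -> LogC3 signal (log segment above CUT, linear toe below)."""
    if x > CUT:
        return C * math.log10(A * x + B) + D
    return E * x + F

def logc3_decode(t):
    """LogC3 signal -> scene-linear value (inverse of logc3_encode)."""
    if t > E * CUT + F:
        return (10 ** ((t - D) / C) - B) / A
    return (t - F) / E

# 18% gray lands a little below 0.4 on the encoded scale.
print(f"18% gray encodes to {logc3_encode(0.18):.3f}")
```

Decoding is what a compositor or grading tool does on ingest: once the frames are back in scene-linear light, they behave like any other linear plate.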

That means an AI video clip emerging from LTX HDR drops straight into a Resolve color page with the same workflow a colorist uses for Alexa footage. Assign the clip input color space to ARRI LogC3. Grade. Export in whatever delivery container the job needs: HDR10, Dolby Vision, P3 DCI for cinema, Rec. 2020 for broadcast HDR. No custom input transform, no weird LUT massage, no special-casing AI clips in the bin.

What this unblocks for creators

Three workflows that were actively broken with 8-bit SDR AI footage:

Color grading room. An 8-bit file has 256 tonal steps per channel. Once you push saturation, lift shadows, or stretch a retime, you can see the banding. LogC3 at 16 bits gives you 65,536 steps. Your grade survives aggressive adjustment without the AI frames falling apart.

CGI compositing. Render frames out of Blender, Houdini, or Unreal and they land in your compositor as linear float (usually 32-bit). Dropping an 8-bit SDR AI plate into the same comp forced either a massive quality compromise on the CGI side or a mess of conversion nodes. LogC3 EXR slots in cleanly: same bit depth ceiling, same linear-after-decode pipeline, compositor treats it like any other EXR plate.

Broadcast and streaming HDR delivery. Apple TV Plus, Netflix, Disney Plus, Prime Video: all mandate HDR masters for original content. You cannot meet those delivery specs from an 8-bit source. Until LTX HDR, any AI-generated footage in a professional HDR deliverable required a hand-graded upscaling pass that defeats the point of using AI to move faster.
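The banding argument in the grading case can be checked with a few lines of Python. This sketch stores a smooth shadow ramp at 8 and 16 bits, applies an aggressive lift, and counts how many distinct output levels survive. It uses integer quantization as a simplification (16-bit EXR is half-float in practice), and the lift amount, ramp range, and function names are all illustrative assumptions.

```python
def quantize(value, bits):
    """Round a 0..1 value to the nearest code at the given integer bit depth."""
    levels = (1 << bits) - 1
    return round(value * levels) / levels

def distinct_levels_after_lift(bits, shadow_max=0.05, gain=8.0,
                               samples=10_000, out_bits=10):
    """Count distinct output codes after lifting the darkest part of the range."""
    out_levels = (1 << out_bits) - 1
    seen = set()
    for i in range(samples):
        scene = shadow_max * i / (samples - 1)   # smooth ramp through the shadows
        stored = quantize(scene, bits)           # precision of the source file
        graded = min(stored * gain, 1.0)         # aggressive shadow lift
        seen.add(round(graded * out_levels))     # what a 10-bit display would see
    return len(seen)

print("8-bit source: ", distinct_levels_after_lift(8), "levels")
print("16-bit source:", distinct_levels_after_lift(16), "levels")
```

With these settings the 8-bit source leaves only a dozen or so levels across the lifted shadows, which is what shows up as visible bands, while the 16-bit source leaves several hundred.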

How it compares

Luma Ray3 has supported HDR output for several months, but it is proprietary, cloud-only, and the pricing scales with production-infrastructure economics. LTX HDR is open-weight on HuggingFace. If you have a GPU capable of LTX-2.3 inference (broadly: 24GB VRAM and up for comfortable runs), you can produce HDR frames locally, for free, on your own hardware.

Runway Gen-4.5 and Kling 2.5 ship 4K but are still SDR. Pika and Sora have not announced HDR roadmaps. OpenAI's Sora 2 is winding down API access entirely in September. At the moment, the practical choice for a creator who needs HDR is LTX HDR locally or Ray3 in the cloud.

Access and cost

The LoRA is a public but gated HuggingFace repository. You request access, accept the license terms, and the weights become downloadable. No API key, no SaaS tier. Lightricks has not (as of publication) rolled the HDR LoRA into the web-based LTX Studio SaaS platform, so for now this is a ComfyUI-on-your-own-hardware play. If you already have an LTX-2.3 workflow running locally, adding HDR is installing one more LoRA file and swapping in the HDRICLoraPipeline node.
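If you prefer to script the download, a minimal sketch using the `huggingface_hub` library's `snapshot_download` might look like this. It assumes the package is installed, that you have already accepted the terms on the repository page, and that a token is available through the `HF_TOKEN` environment variable or a cached login. The target directory is a hypothetical path; point it wherever your ComfyUI install keeps LoRA files.

```python
import os

REPO_ID = "Lightricks/LTX-2.3-22b-IC-LoRA-HDR"

def download_hdr_lora(local_dir="ComfyUI/models/loras/ltx-hdr"):
    """Download the gated repo. Fails unless the license was accepted on HuggingFace."""
    # Imported lazily so this file still loads where huggingface_hub is absent.
    from huggingface_hub import snapshot_download

    return snapshot_download(
        repo_id=REPO_ID,
        local_dir=local_dir,                  # assumption: adjust to your install
        token=os.environ.get("HF_TOKEN"),     # None falls back to a cached login
    )
```

Calling `download_hdr_lora()` returns the local folder path once the files are in place. The file names inside the repository are not listed here, so check the model card for which file the workflow expects.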

Compute is the gating factor. LTX-2.3 at 22B parameters is a sizable model. Community benchmarks put clean 4K inference at roughly 30 to 60 seconds per second of video on a 4090, scaling up on A100s. The HDR LoRA does not materially change that. You are running the same base model with one additional adapter.
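As a back-of-the-envelope planning aid, the community figure quoted above turns into a render-time range like this. The function name is hypothetical and the 30 to 60 second range is the only input taken from the text; real timings will vary with resolution, sampler settings, and hardware.

```python
def render_time_range(clip_seconds, low_per_second=30.0, high_per_second=60.0):
    """Return (best, worst) render time in seconds for a clip of the given length."""
    return clip_seconds * low_per_second, clip_seconds * high_per_second

best, worst = render_time_range(10)
print(f"10 s clip: {best / 60:.0f} to {worst / 60:.0f} minutes on a 4090")
```

A 10-second clip works out to roughly 5 to 10 minutes of compute at those rates, which is worth knowing before you queue a batch of test shots.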

What is still unknown

Direct HDR10 or Dolby Vision container export is not called out in the model card. For now, the path is EXR sequence out of ComfyUI, grade in Resolve, deliver to whichever HDR profile the job needs. That is fine for production but costs a step that a native export option could remove.

The model card also does not quantify how the HDR LoRA affects motion quality, per-scene consistency, or prompt adherence versus vanilla LTX-2.3. Those trade-offs, if any, will surface in the next few weeks as colorists and VFX shops actually load the LoRA onto production work.

What to do next

If you are on LTX-2.3 already, request access to the HDR LoRA, drop the weight into your ComfyUI workflow, and generate one test clip at 1000-nit target. Pull it into DaVinci Resolve, assign ARRI LogC3 input, and see how your existing grading chain handles it. If the answer is clean, and it should be, your AI video pipeline just gained a dynamic range ceiling it did not have 48 hours ago.

If you are not on LTX yet and you care about HDR, this is the moment to evaluate it. The open-weight angle matters. Every competing HDR option is cloud-only, metered, and someone else's infrastructure. LTX HDR running locally is the first time AI-generated HDR has the cost structure of traditional CGI: fixed hardware, unlimited runs.

Dynamic range, not resolution, was the gap that kept AI video off professional HDR masters. That gap just closed.