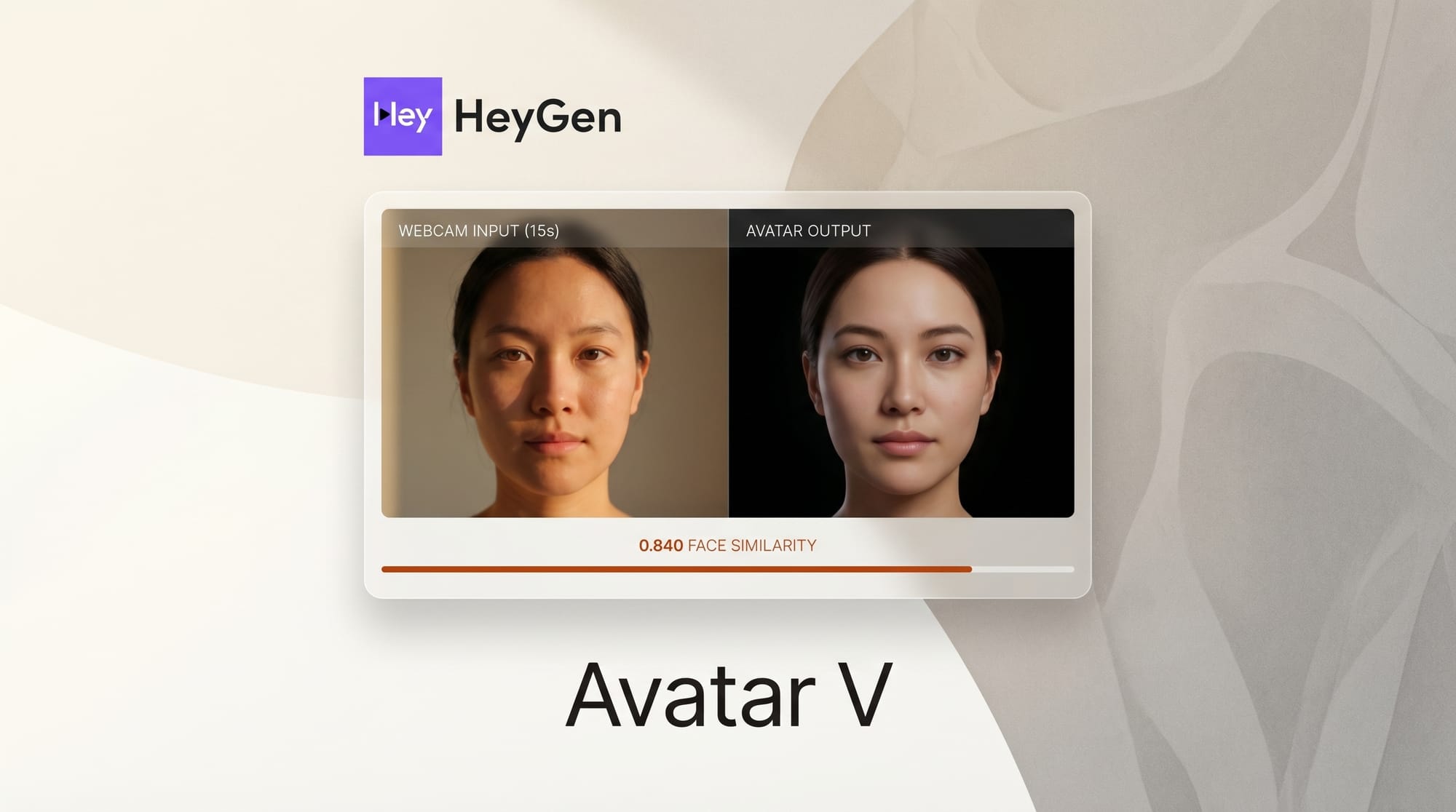

HeyGen's Avatar V, launched April 8, 2026, creates a realistic AI video clone from a single 15-second webcam recording. The model scores 0.840 on Face Similarity, the highest published score for an avatar model, ahead of Google Veo 3.1 (0.714) by 0.126 points. The system supports 175 languages with phoneme-level lip sync, generates videos up to 30 minutes long, and separates identity from appearance so creators can swap outfits and backgrounds without re-filming. For creators who have spent years paying for studio-quality video production, that 15-second capture window changes the math entirely.

Background

AI avatar technology has progressed through three distinct phases. First-generation systems like D-ID animated still photos with basic lip movements. Second-generation tools like Synthesia introduced multi-language support and enterprise-grade avatar studios but required controlled recording environments and lengthy capture sessions. Avatar V represents the third generation: a system where a brief phone recording contains enough identity signal to produce studio-quality output.

HeyGen has positioned itself at the intersection of avatar AI and video production tools. The company previously offered avatar creation through guided studio sessions, similar to competitors. Avatar V collapses that entire setup into 15 seconds of uncontrolled webcam footage, a technical leap that required rethinking how identity information is extracted and preserved across long video sequences.

The launch comes at a time when the AI video generation landscape is consolidating around identity preservation as the primary differentiator. Raw video quality has plateaued across major players; the question now is whether the output looks like the specific person it was meant to represent.

Deep Analysis

Video-Reference Architecture Breaks the Embedding Bottleneck

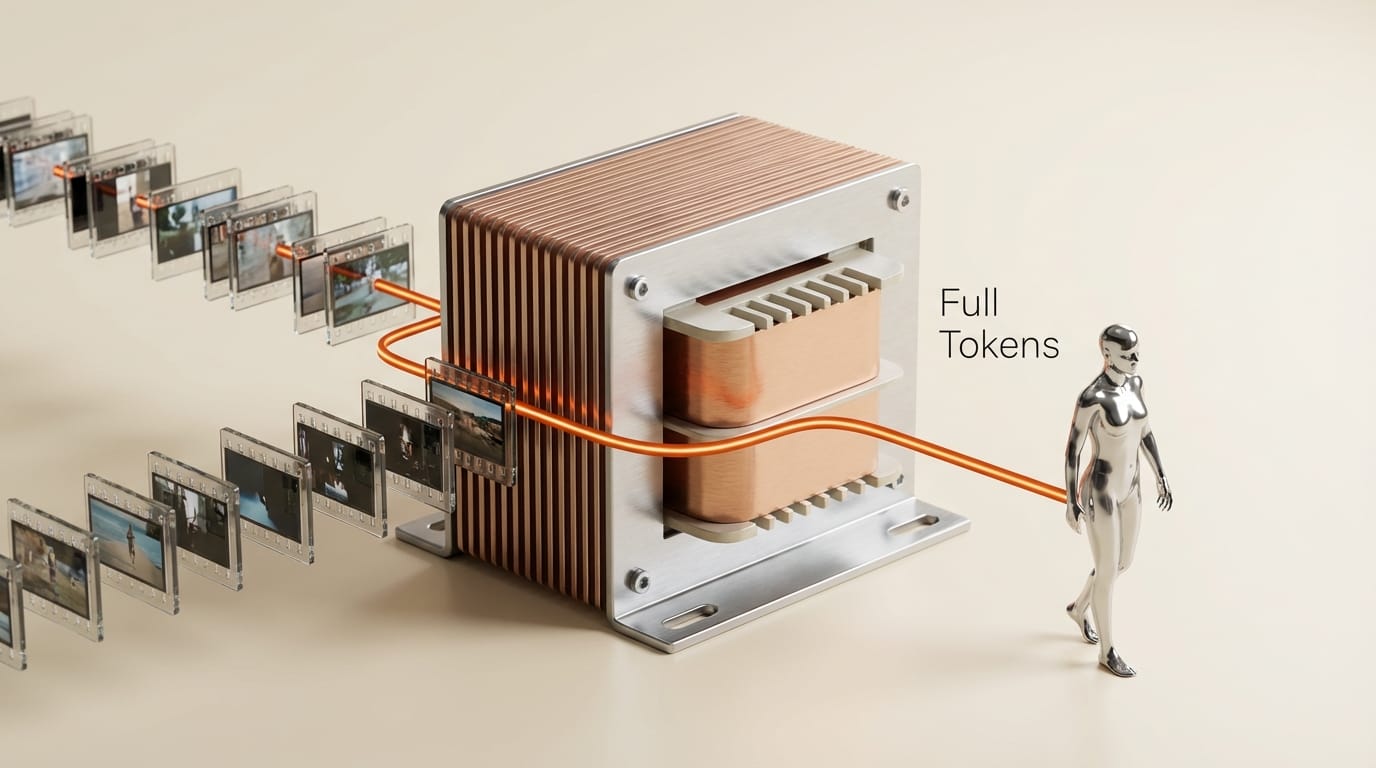

Previous avatar systems compressed a person's identity into a low-dimensional embedding, a fixed-size vector meant to capture everything from lip geometry to skin texture. This approach works for short clips but degrades over longer sequences as the system loses subtle identity signals that the embedding could not encode.

Avatar V takes a fundamentally different approach. According to HeyGen's research page, the model conditions on the full token sequence of the reference video at every transformer layer. The 15-second clip is patchified into a unified token sequence alongside audio and text inputs, then processed through transformer blocks with what HeyGen calls Sparse Reference Self-Attention. This means the model continuously references the original recording rather than working from a compressed summary.

The architecture scales naturally with reference length. A longer or higher-quality input recording produces richer identity context, not a different or larger embedding. This design choice eliminates the ceiling that plagued earlier systems where additional recording time offered diminishing returns after the embedding was saturated.
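To make the contrast with a fixed-size embedding concrete, here is a minimal sketch of attention that conditions on reference tokens directly. It is not HeyGen's implementation: the function name `reference_attention`, the strided subsampling used as a stand-in for "sparse", the token counts, and the absence of learned query/key/value projections are all assumptions made for illustration.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def reference_attention(gen_tokens, ref_tokens, stride=4):
    """Attend from each generated token over the generated tokens plus
    every `stride`-th reference token.

    The strided subset is a hypothetical sparsity pattern, not the
    published mechanism. Tokens serve as queries, keys, and values
    directly; a real transformer layer would apply learned projections.
    """
    sparse_ref = ref_tokens[::stride]
    context = gen_tokens + sparse_ref
    dim = len(gen_tokens[0])
    scale = 1.0 / math.sqrt(dim)
    outputs = []
    for query in gen_tokens:
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in context]
        weights = softmax(scores)
        outputs.append(
            [sum(w * value[d] for w, value in zip(weights, context)) for d in range(dim)]
        )
    return outputs, len(context)


def random_tokens(count, dim, rng):
    """Stand-in for patchified video tokens (random values, illustration only)."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(count)]


rng = random.Random(0)
gen = random_tokens(16, 8, rng)
short_ref = random_tokens(64, 8, rng)    # shorter reference clip
long_ref = random_tokens(128, 8, rng)    # longer reference clip

out_short, ctx_short = reference_attention(gen, short_ref)
out_long, ctx_long = reference_attention(gen, long_ref)
print(ctx_short, ctx_long)                # attended context grows with the reference
print(len(out_short), len(out_short[0]))  # output shape stays the same
```

In this toy version, doubling the reference length enlarges the context each generated token can attend to while the output shape stays fixed, which is the scaling behavior the paragraph above describes.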

Benchmark Breakaway: 0.840 Face Similarity Sets a New Standard

The numbers tell a clear story. Avatar V posts a Face Similarity score of 0.840, compared to Google Veo 3.1 at 0.714. On lip-sync metrics, Avatar V achieves an LSE-C of 8.97, the highest sync confidence reported, and an LSE-D of 6.75, the lowest sync distance and lower even than the ground-truth recordings. In blind human preference tests, Avatar V was chosen between 68.9% and 85.7% of the time in pairwise comparisons.

These are not marginal improvements. The 0.126-point gap between Avatar V and Veo 3.1 on Face Similarity is substantial in a metric space where differences of 0.05 are perceptible to viewers. An LSE-D below that of ground-truth recordings suggests the model produces lip movements that score as more tightly synchronized with the audio than real footage does, likely because the model smooths out the micro-jitters present in real recordings.
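The gap can be stated in absolute or relative terms, and the two are easy to confuse. A quick check using only the two Face Similarity scores quoted above (the variable names are illustrative):

```python
# Face Similarity scores quoted in the article.
avatar_v = 0.840
veo_3_1 = 0.714

absolute_gap = avatar_v - veo_3_1       # difference in score points
relative_gain = absolute_gap / veo_3_1  # improvement relative to Veo 3.1

print(f"absolute gap: {absolute_gap:.3f} points")
print(f"relative gain: {relative_gain:.1%}")
```

The absolute gap is 0.126 points; expressed relative to Veo 3.1's score, that is roughly a 17.6% improvement.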

The five-stage training pipeline behind these results matters here. HeyGen starts with general video generation on internet-scale data, adds audio-driven synthesis, then identity-preserving embedding, followed by distillation to reduce inference cost, and finally human feedback alignment using GRPO and DPO. That final preference-alignment stage appears to be the differentiator: the model is not just technically accurate but perceptually preferred by human evaluators.

15-Second Capture Rewrites Production Economics

The production cost implications are stark. A traditional avatar video setup requires studio rental ($500-2,000/day), lighting equipment, multiple camera angles, and a recording session of 30 minutes to several hours. Even previous AI avatar services required 5-10 minutes of guided recording in controlled conditions.

Avatar V reduces input requirements to 15 seconds on a phone camera. HeyGen recommends stable lighting and a neutral background but does not require them. The voice clone component needs just 10 seconds of separate audio. A creator can generate their avatar during a coffee break.

At $29/month for the Creator plan, a solo content producer can now generate localized video content in 175 languages from a single recording session. The previous cost structure for multilingual video production involved hiring voice talent ($100-500 per language), lip-sync post-production ($50-200 per minute), and additional studio time. Avatar V collapses these line items to a single subscription.
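As a rough illustration of that comparison, the sketch below totals the traditional line items using the rate ranges quoted above. The function name, the five-language, ten-minute scenario, and the assumption that lip-sync work is billed per minute for each language are all hypothetical; studio time is left out, and whether the Creator plan's limits cover this volume is not something the figures here confirm.

```python
# Rate ranges quoted in the article, in USD (low, high).
VOICE_TALENT_PER_LANGUAGE = (100, 500)
LIP_SYNC_PER_MINUTE = (50, 200)
CREATOR_PLAN_PER_MONTH = 29


def traditional_localization_cost(languages, minutes):
    """Return a (low, high) estimate for localizing one video.

    Assumes lip-sync post-production is billed per minute for each
    language, and excludes any additional studio time.
    """
    low = languages * (VOICE_TALENT_PER_LANGUAGE[0] + LIP_SYNC_PER_MINUTE[0] * minutes)
    high = languages * (VOICE_TALENT_PER_LANGUAGE[1] + LIP_SYNC_PER_MINUTE[1] * minutes)
    return low, high


# Hypothetical scenario: one 10-minute video localized into 5 languages.
low, high = traditional_localization_cost(languages=5, minutes=10)
print(f"Traditional estimate: ${low:,} to ${high:,}")
print(f"Creator plan: ${CREATOR_PLAN_PER_MONTH}/month")
```

Under these assumptions the traditional estimate lands between $3,000 and $12,500 for a single video, against a $29 monthly subscription.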

The outfit and background separation feature adds another economic multiplier. One recording session yields unlimited wardrobe and setting combinations. A creator filming a product review, a tutorial, and a social media clip no longer needs three separate recording sessions with three wardrobe changes.

Identity Preservation as the New Competitive Frontier

The avatar AI market has quietly shifted its competitive axis. Two years ago, the differentiator was whether the output looked "real enough." Today, every major player produces photorealistic video. The question is whether it looks like the right person.

This shift matters because creator trust depends on identity fidelity. An avatar that looks generically professional but not specifically like the creator undermines the parasocial connection that drives audience engagement. Viewers who subscribe to a creator expect to see that creator, not an approximation.

HeyGen's approach of modeling both static features (dental structure, skin texture, facial geometry) and dynamic features (talking rhythm, habitual micro-expressions, gestural tendencies) suggests the company understands this distinction. The model, trained on over 10 million data points, captures identity signals that go beyond surface-level appearance into behavioral patterns unique to each individual.

The integration with Seedance 2.0 for cinematic generation hints at where this technology is heading. Avatar V handles the identity layer; Seedance handles the cinematic language. Combined, they suggest a future where creators direct AI-generated video content rather than performing in it.

Impact on Creators

For solo creators, Avatar V removes the single biggest bottleneck in video production: being on camera. Creators who produce educational content, product reviews, or news commentary can now generate weeks of content from a single 15-second session. The 175-language support opens international markets without the production overhead of localization.

For small teams and agencies, the economics are even more compelling. A five-person agency producing client video content no longer needs to coordinate talent schedules with studio availability. Each team member records once; the system handles everything after that. Client-facing videos in multiple languages become a configuration step, not a production milestone.

The creative implications extend beyond cost savings. When the barrier to producing a video drops to 15 seconds plus a text prompt, creators can experiment freely: they can test different scripts, try different tonal approaches, and iterate on content without the sunk-cost anxiety of expensive studio time. That freedom to experiment is where the real creative value lies.

Key Takeaways

- Avatar V achieves 0.840 Face Similarity from a 15-second webcam clip, beating Google Veo 3.1 (0.714) by 0.126 points, or 17.6% in relative terms

- The video-reference architecture conditions on full token sequences rather than compressed embeddings, enabling stable identity over 30-minute videos

- Production setup collapses from hours of studio time to seconds on a phone, starting at $29/month

- 175-language lip sync with phoneme-level accuracy makes multilingual content a configuration step

- Identity preservation, not visual realism, is now the primary competitive differentiator in avatar AI

What to Watch

The competitive response will be telling. Google, which has Veo 3.1's avatar capabilities integrated into Google Vids, will need to close the 0.126-point Face Similarity gap. Synthesia and D-ID, both positioned more toward enterprise markets, face pressure to match Avatar V's capture efficiency without sacrificing their compliance certifications.

Watch for adoption patterns among content creators in the 10,000 to 100,000 subscriber range. This cohort has enough audience to justify video production investment but not enough revenue to maintain studio infrastructure. Avatar V is designed precisely for this market segment. If adoption accelerates here, it validates the broader thesis that AI avatars are not a novelty but a production tool.

The deeper signal is HeyGen's integration with Seedance 2.0 and the broader trend toward composable AI video pipelines. Avatar V handles identity; other models handle cinematography, effects, and editing. The creator who masters this stack will produce content at a scale and speed that traditional production cannot match.

This analysis was produced by Creative AI News.
