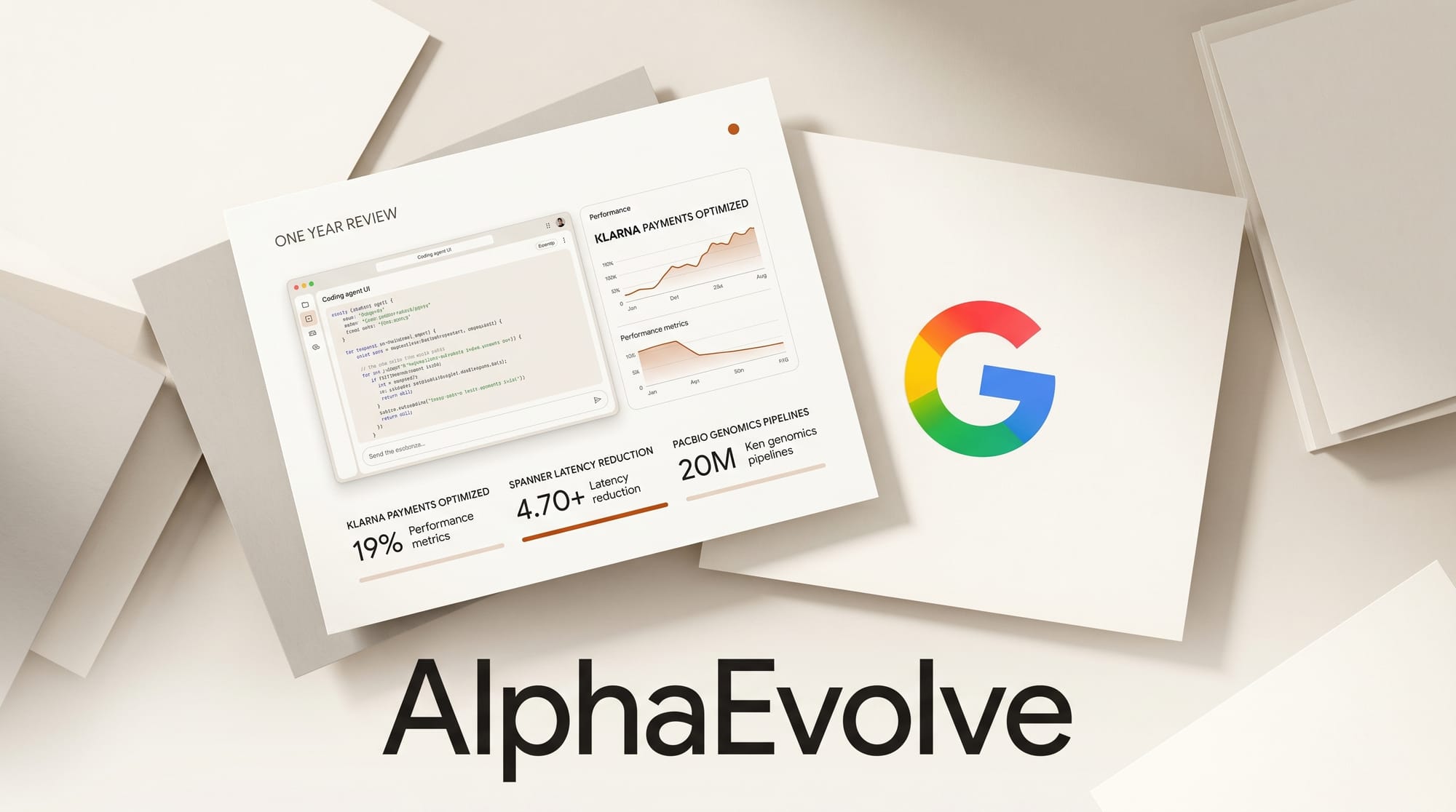

On May 7, 2026, Google DeepMind published a year-in-review of AlphaEvolve, the Gemini-powered coding agent the lab introduced in May 2025. The new post moves past lab demos and names the partners, numbers, and systems where the agent is now in production: a 2x training-speed gain at Klarna, a 20% write-amplification reduction inside Google Spanner, a 30% drop in variant-detection errors at sequencing company PacBio, and 10x lower error on a class of quantum circuits. For developers and creator-tool builders, the interesting question is no longer "does evolutionary code search work?" but "what should I send to it, and how do I get access?"

What Happened

AlphaEvolve was first announced in May 2025 as a research system that uses Gemini to propose candidate algorithms, then evolves them against an automated evaluator. The May 7, 2026 update reframes it as an applied product. DeepMind chief scientist Jeff Dean is quoted in the post: "AlphaEvolve began optimizing the lowest levels of hardware powering our AI stacks." The post's framing is that results of this kind are no longer one-offs.

DeepMind also opened a public examples gallery showing the kinds of problems the system has shipped solutions for: matrix multiplication kernels, scheduling heuristics, compiler passes, materials-science force fields, and physics simulations. The gallery is the closest thing to a how-to-prompt-it spec the lab has released.

The Named Wins

The May 7 post groups results by partner. The numbers below are the lab's own claims, sourced to the linked blog and not independently verified:

| Partner | Domain | AlphaEvolve result |
|---|---|---|
| Klarna | Internal ML training | 2x faster transformer training, with quality preserved |
| Google Spanner | Distributed database | 20% reduction in write amplification |
| Google compiler team | Production compilers | Roughly 9% reduction in compiled-binary storage footprint |
| PacBio (DeepConsensus) | DNA sequencing | 30% reduction in variant-detection errors |
| FM Logistic | Routing | 10.4% routing efficiency gain, ~15,000 km saved per year |
| Schrödinger | Materials science | ~4x speedup on Machine Learned Force Field training and inference |
| Earth AI (Google Research) | Disaster prediction | 5% accuracy gain across 20 disaster categories |
| WPP | Ad-targeting model components | 10% accuracy gain on listed components |
| Substrate | Computational lithography | "Multi-fold" runtime improvement, no specific multiplier in the post |
| Internal grid optimization | Power flow | Feasible-solution rate improved from 14% to 88% |
| Internal quantum effort | Error correction | 10x lower error on a class of quantum circuits |

Two things stand out. First, AlphaEvolve has now optimized parts of Google's own infrastructure (Spanner, the compiler stack, TPU hardware paths) where any percentage improvement compounds across the company. Second, the partner mix is heavier on industrial and scientific work than on creative tooling.

How AlphaEvolve Differs From Today's Coding Copilots

The May 7 post does not include a new architecture diagram, but it confirms the core design: Gemini proposes code, an automated evaluator scores each candidate, and an evolutionary loop keeps improving the survivors. That is structurally different from chat copilots and agent products such as Cursor's programmatic agents or Claude's managed agents with self-learning memory, which target end-to-end developer workflows.
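The propose, score, and evolve cycle described above can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not DeepMind's implementation: the "program" is just a list of floats, `toy_propose` is a random mutation standing in for the Gemini call, and `toy_evaluate`, `evolve`, and every parameter name are hypothetical.

```python
import random
from typing import Callable, List, Tuple

Candidate = List[float]  # assumption: a parameter vector stands in for "a program"


def evolve(
    propose: Callable[[Candidate, random.Random], Candidate],
    evaluate: Callable[[Candidate], float],
    seed_candidate: Candidate,
    generations: int = 50,
    population_size: int = 8,
    children_per_parent: int = 4,
    rng_seed: int = 0,
) -> Tuple[Candidate, float]:
    """Return the best candidate found and its score (higher is better)."""
    rng = random.Random(rng_seed)
    population = [(evaluate(seed_candidate), seed_candidate)]
    for _ in range(generations):
        children = []
        for _, parent in population:
            for _ in range(children_per_parent):
                child = propose(parent, rng)               # "LLM proposes"
                children.append((evaluate(child), child))  # "evaluator scores"
        # Parents compete with children, so the best score never gets worse.
        ranked = sorted(population + children, key=lambda p: p[0], reverse=True)
        population = ranked[:population_size]
    best_score, best = population[0]
    return best, best_score


def toy_propose(parent: Candidate, rng: random.Random) -> Candidate:
    # Hypothetical stand-in for the model call: a small random perturbation.
    return [x + rng.gauss(0.0, 0.1) for x in parent]


def toy_evaluate(candidate: Candidate) -> float:
    # Toy metric: negative squared distance from the point (3, 3).
    return -sum((x - 3.0) ** 2 for x in candidate)


best, score = evolve(toy_propose, toy_evaluate, [0.0, 0.0])
```

In a real setup the expensive parts are the two callables: `propose` would wrap a model call that rewrites code, and `evaluate` would compile and benchmark it. The selection logic in between stays about this small.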

A useful split for builders evaluating which surface to use:

| Surface | Best for | Where it falls short |
|---|---|---|
| Chat copilots (Cursor, Claude Code, Copilot) | Reading a repo, fixing bugs, drafting whole features | Will not run thousands of evaluation passes against a numeric metric |
| Agent frameworks (Claude managed agents, OpenAI Agents SDK) | Multi-step workflows, parallel teams, tool calls | Optimization is implicit; success is judged by task completion, not a number |
| AlphaEvolve-style evolutionary search | Problems with a clean numeric scoring function: throughput, error rate, area, latency | Useless without a scorable evaluator. Requires significant compute per run. |

The TL;DR: AlphaEvolve is the surface to reach for when the bottleneck is a single measurable kernel that needs to get faster, smaller, or more accurate. It is not the surface for shipping a feature.

Why It Matters for Creative AI Builders

Creative-AI tooling has plenty of measurable kernels. A few candidate problems where an AlphaEvolve-style search would have a clean evaluator:

- Inference-time scheduling. Routing requests across model variants to maximize tokens-per-second under a memory budget. Runway's recent Kueue work doubled GPU efficiency by hand-tuning a similar problem.

- Quantization layouts. Choosing per-layer precision and tile sizes to minimize quality loss at a given memory ceiling. The DS4 project covered separately today is hand-tuned for one model on one chip; an evolutionary search could automate that work.

- Batching policies. Image and video generation services have to balance latency for the front of the queue against throughput for the long tail.

- Prompt-cache eviction. Real-time agents like OpenAI's GPT-Realtime-2 have to decide which prefix tokens to keep in cache as conversations grow past 128K tokens. That is a clean optimization target.

- Render passes. Compositing pipelines for AI-generated video assets have measurable cost-per-frame budgets.

The unlock is not "AI writes my code." It is "AI runs the inner loop of your performance work, against your evaluator, while you sleep."
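To make "a clean evaluator" concrete, here is one way the batching-policy candidate from the list above might be scored. It is a minimal sketch under assumptions: a single-server queue simulation, a hypothetical `BatchPolicy` with two knobs, made-up cost constants, and a synthetic arrival trace. A real service would replay recorded traffic instead.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BatchPolicy:
    max_batch: int    # flush once this many requests are waiting (must be >= 1)
    max_wait: float   # flush once the oldest request has waited this long (s)


def simulate_mean_latency(
    policy: BatchPolicy,
    arrivals: List[float],        # sorted arrival times in seconds
    base_cost: float = 0.5,       # assumed fixed cost per batch (s)
    per_item_cost: float = 0.05,  # assumed marginal cost per request (s)
) -> float:
    """Single-server simulation; returns mean request latency in seconds."""
    if not arrivals:
        raise ValueError("arrival trace must not be empty")
    server_free_at = 0.0
    latencies: List[float] = []
    i, n = 0, len(arrivals)
    while i < n:
        first = arrivals[i]
        full_idx = i + policy.max_batch - 1
        full_at = arrivals[full_idx] if full_idx < n else float("inf")
        # Flush when the batch fills or the wait budget expires, whichever
        # comes first, but never before the server is free.
        start = max(server_free_at, min(first + policy.max_wait, full_at))
        j = i
        while j < n and j - i < policy.max_batch and arrivals[j] <= start:
            j += 1
        finish = start + base_cost + per_item_cost * (j - i)
        latencies.extend(finish - a for a in arrivals[i:j])
        server_free_at = finish
        i = j
    return sum(latencies) / len(latencies)


def make_trace(n: int = 500, rate: float = 5.0, seed: int = 0) -> List[float]:
    # Synthetic Poisson-style arrivals; the fixed seed keeps scores repeatable.
    rng = random.Random(seed)
    t, trace = 0.0, []
    for _ in range(n):
        t += rng.expovariate(rate)
        trace.append(t)
    return trace


TRACE = make_trace()


def evaluate(policy: BatchPolicy) -> float:
    # Higher is better, so negate the latency.
    return -simulate_mean_latency(policy, TRACE)
```

The search system only ever sees `evaluate`. Swapping mean latency for a tail percentile or a cost-weighted blend changes what gets optimized without touching the search loop.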

How To Get Access

This is the part the new post is least specific about. DeepMind says AlphaEvolve is "scaling commercial applications" through Google Cloud, but the post does not link a self-serve console, an API, or a price sheet. The named partners look like enterprise engagements rather than self-serve customers.

If you want to try the technique now, three practical paths:

- Run the public examples. The gallery shows the problem types and the kind of evaluator that works. Use it as a template for what makes a good AlphaEvolve target before you ask for access.

- Use the Gemini API to roll your own loop. The published architecture is reproducible at small scale. Send Gemini a prompt with the current best solution, an evaluator output, and a request for a variant. Score, keep, mutate. This is what open-source projects like FunSearch already implement.

- Talk to your Google Cloud rep. The named partners suggest the formal product lives behind a Cloud sales conversation rather than a developer console.
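For the roll-your-own path, the model-facing half of the loop is mostly prompt assembly and reply parsing. The sketch below assumes a hypothetical `llm` callable that takes a prompt string and returns the model's text reply; how that callable talks to Gemini (or any other model) is deliberately left out. The prompt wording and the names `build_prompt`, `extract_code`, and `step` are illustrative, not taken from the post.

```python
import re
from typing import Callable, Optional, Tuple

FENCE = "`" * 3  # built programmatically so this example nests cleanly in markdown


def build_prompt(code: str, score: float, feedback: str) -> str:
    """Assemble the 'current best + evaluator output + ask for a variant' prompt."""
    return (
        "You are improving a Python function.\n"
        f"Current best (score {score:.4f}):\n"
        f"{FENCE}python\n{code}\n{FENCE}\n"
        f"Evaluator feedback: {feedback}\n"
        "Return one improved variant in a single fenced python block."
    )


_CODE_BLOCK = re.compile(FENCE + r"(?:python)?\s*\n(.*?)" + FENCE, re.DOTALL)


def extract_code(reply: str) -> Optional[str]:
    """Pull the first fenced block out of a model reply, or None if absent."""
    match = _CODE_BLOCK.search(reply)
    return match.group(1).strip() if match else None


def step(
    llm: Callable[[str], str],         # hypothetical: prompt in, reply text out
    evaluate: Callable[[str], float],  # your evaluator: source code in, score out
    best_code: str,
    best_score: float,
    feedback: str,
) -> Tuple[str, float]:
    """One score-keep-mutate iteration; keeps the incumbent unless beaten."""
    candidate = extract_code(llm(build_prompt(best_code, best_score, feedback)))
    if candidate is None:
        return best_code, best_score
    score = evaluate(candidate)
    if score > best_score:
        return candidate, score
    return best_code, best_score
```

Calling `step` in a loop gives a simple hill climber. Note that `evaluate` receives model-written source code, so anything that actually executes it should do so in a sandbox with time and memory limits.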

What To Do Next

If you operate a creative-AI service with measurable kernels, the action this week is to write down your top three optimization targets and the evaluator function for each. Even before AlphaEvolve is self-serve, that exercise is what unlocks any evolutionary search system, including the ones you can stand up yourself with the Gemini API. The teams shipping today's biggest performance gains are doing this exercise. The post-AlphaEvolve teams will do it with an automated search agent in the loop.

Frequently Asked Questions

Is AlphaEvolve generally available to developers?

Not yet. The May 7, 2026 update names enterprise partners and references commercial deployment through Google Cloud, but does not link a self-serve console, API endpoint, or pricing page. Treat it as a Cloud-sales product for now.

How is AlphaEvolve different from a chat coding assistant like Claude Code or Cursor?

Chat assistants take a request and return code in seconds. AlphaEvolve runs an evolutionary loop: Gemini proposes candidate code, an automated evaluator scores each candidate against a numeric metric, and the best candidates seed the next round. The right surface for "fix this bug" is a chat assistant. The right surface for "make this kernel 9% smaller" is an evolutionary search.

What numeric improvements does the May 7 post claim?

Headline numbers: 2x faster training at Klarna, 20% write-amplification reduction in Google Spanner, 30% fewer variant-detection errors at PacBio, 10.4% routing gain at FM Logistic, ~4x speedup at Schrödinger, ~9% binary footprint reduction in Google compilers, and a 14%-to-88% jump in feasible-solution rate on an internal grid problem.

Can I reproduce the technique without AlphaEvolve access?

Yes, at smaller scale. The published architecture (LLM proposes, evaluator scores, evolutionary loop) is implemented in the open by projects like Google's FunSearch. Stand up an evaluator first, point any capable LLM API at it, and you have the basic loop. The DeepMind version wins on compute, prompt design, and the evolutionary harness.

What kinds of problems are good AlphaEvolve targets?

Problems with a clean numeric scoring function and a search space larger than a human can hand-tune. Examples from the gallery: matrix-multiplication kernels, scheduling heuristics, compiler passes, materials-science force fields, quantum-error-correction codes. Bad fits: anything where success is judged by qualitative product feel.

Does AlphaEvolve write your tests for you?

No. You bring the evaluator. The whole approach depends on a fast, deterministic, automated way to score every candidate. Building that evaluator is the hard part of using systems in this family, and it is the work that pays off whether or not you ever get AlphaEvolve access.
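As a small illustration of what "fast, deterministic, automated" can look like, the sketch below scores candidate sorting routines by comparison count rather than wall-clock time, with a correctness gate in front of the metric. Everything here is a hypothetical example (the `make_evaluator` name, the `less` callback convention, the test sizes), chosen because counting operations gives the same score on every run.

```python
import math
import random
from typing import Callable, List

SortFn = Callable[[List[int], Callable[[int, int], bool]], List[int]]


def make_evaluator(n_cases: int = 20, size: int = 32, seed: int = 1234):
    # Fixed seed: every candidate is scored on exactly the same inputs.
    rng = random.Random(seed)
    cases = [[rng.randrange(1000) for _ in range(size)] for _ in range(n_cases)]

    def evaluate(sort_fn: SortFn) -> float:
        comparisons = 0

        def less(a: int, b: int) -> bool:
            nonlocal comparisons
            comparisons += 1
            return a < b

        for case in cases:
            try:
                out = sort_fn(list(case), less)
            except Exception:
                return -math.inf       # crashing candidates are rejected
            if out != sorted(case):
                return -math.inf       # wrong answers never earn a score
        return -float(comparisons)     # fewer comparisons is better

    return evaluate


def insertion_sort(xs, less):
    for i in range(1, len(xs)):
        j = i
        while j > 0 and less(xs[j], xs[j - 1]):
            xs[j], xs[j - 1] = xs[j - 1], xs[j]
            j -= 1
    return xs


def merge_sort(xs, less):
    if len(xs) <= 1:
        return xs
    mid = len(xs) // 2
    left, right = merge_sort(xs[:mid], less), merge_sort(xs[mid:], less)
    out, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if less(right[j], left[i]):
            out.append(right[j])
            j += 1
        else:
            out.append(left[i])
            i += 1
    return out + left[i:] + right[j:]


evaluate = make_evaluator()
```

The correctness gate matters as much as the metric: without it, a search loop will happily discover that returning the input unchanged uses zero comparisons.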