Six open-source releases in two weeks just made a full creative pipeline possible without a single subscription

Between March 1 and March 15, 2026, something happened that has never happened before in creative AI: open-source models matched or exceeded commercial tools across every major creative modality simultaneously. Image editing, video editing, video generation, and voice synthesis all received production-grade open-source releases within the same two-week window. For independent creators who spend $100 to $300 per month on AI subscriptions, the math just changed.

Background: The Gap That Kept Closing

Open-source creative AI has been gaining ground steadily since Stable Diffusion launched in 2022 and proved that state-of-the-art image generation could run on consumer hardware. But for years, the open-source ecosystem had critical gaps. You could generate images locally, but editing them precisely required Adobe or Runway. You could experiment with video, but nothing matched the consistency of commercial platforms. Voice synthesis was either robotic or locked behind API pricing.

By early 2026, FLUX.2 from Black Forest Labs had already closed the image generation gap, delivering production-quality output that rivaled Midjourney. But generation is only one piece of a creative workflow. The missing links were editing, video, and voice. March 2026 filled every one of them.

Deep Analysis

Image and Video Editing: FireRed 1.1 and Kiwi-Edit

FireRed-Image-Edit 1.1, released March 3 by Xiaohongshu's FireRed team, is a 28-billion-parameter image editing model that scored 7.94 across five benchmarks, surpassing Alibaba's Qwen-Image-Edit. It handles instruction-based editing with identity-consistent results: swap a background, change clothing, apply makeup, or fuse 10+ elements while keeping faces recognizable. The model is released under the Apache 2.0 license and requires 30GB VRAM with its optimized inference script, or as little as 12GB with NVFP4 quantization.

For video, Kiwi-Edit from NUS ShowLab arrived March 5 as the first unified open-source framework for both instruction-guided and reference-guided video editing. Trained on 477,000 high-quality quadruplets, it scored 3.02 overall on OpenVIE-Bench, the highest among open-source methods. It handles global edits like style transfer (cartoon, sketch, watercolor) and local edits like object removal, replacement, and background swaps at 720p resolution. The entire codebase, dataset, and weights are MIT-licensed.

Together, these two models cover the editing workflows that previously required tools like Adobe Generative Fill ($22.99/month) or Runway's editor ($28/month for Pro). They are not perfect replacements yet. FireRed's full-quality mode needs a 30GB GPU, and Kiwi-Edit caps at 720p. But for creators working on social content, thumbnails, and web assets, the quality is now sufficient.

Video Generation: Helios and LTX-2.3 Rewrite the Rules

Video generation saw two major releases that approach the problem from different angles. Helios, a collaboration between Peking University and ByteDance released March 4, is a 14-billion-parameter autoregressive diffusion model that generates videos up to 60 seconds long at 19.5 FPS on a single NVIDIA H100. It supports text-to-video, image-to-video, and video-to-video natively. Three model variants (Base, Mid, and Distilled) are available under Apache 2.0, and with Group Offloading, the model can run on as little as 6GB of VRAM, putting it within reach of an RTX 3060.

LTX-2.3 from Lightricks, released in March, takes a different approach: native 4K output at up to 50 FPS with synchronized audio generation. This is the first open-source model that generates video and sound together, producing ambient noise, sound effects, and dialogue in sync with the visual output. It also supports portrait 9:16 mode for social media content. Lightricks shipped a desktop application that runs the entire model locally on a 12GB GPU for 1080p generation, or a 24GB GPU for full-quality output. The model is licensed under Apache 2.0.

Compare this to commercial alternatives. Runway's Gen-3 starts at $12/month for 625 credits (roughly 25 five-second clips). The Standard plan generates at 1080p with no audio. At the Pro tier ($28/month), you get 2,250 credits and higher resolution. For a creator producing 20 to 30 video clips per week, monthly costs climb fast. With Helios or LTX-2.3 running locally, generation is unlimited after the hardware investment.
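To make "monthly costs climb fast" concrete, here is a rough sketch of the credit arithmetic using only the figures quoted above. It assumes every clip is five seconds long, that credits scale linearly with clip count, and that a month is about 4.33 weeks; none of these assumptions come from Runway's documentation.

```python
# Figures quoted in the paragraph above; everything else is an assumption.
STARTER_CREDITS = 625   # $12/month tier
STARTER_CLIPS = 25      # "roughly 25 five-second clips"
PRO_CREDITS = 2250      # $28/month tier


def credits_per_clip() -> float:
    """Credits one five-second clip costs, implied by the starter tier."""
    return STARTER_CREDITS / STARTER_CLIPS


def clips_per_month(credits: int) -> int:
    """Whole five-second clips a monthly credit allowance can cover."""
    return int(credits // credits_per_clip())


def monthly_demand(clips_per_week: int, weeks_per_month: float = 4.33) -> float:
    """Approximate clips needed per month for a given weekly output."""
    return clips_per_week * weeks_per_month


if __name__ == "__main__":
    print("Pro tier covers", clips_per_month(PRO_CREDITS), "clips per month")
    for weekly in (20, 30):
        print(weekly, "clips/week needs about", round(monthly_demand(weekly)), "per month")
```

Under these assumptions the Pro allowance covers about 90 clips, while 20 to 30 clips per week works out to roughly 87 to 130 clips per month, so the upper half of that range already exceeds the Pro tier.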

Voice Synthesis: TADA and Fish Audio S2 End the Quality Gap

Voice synthesis has been the domain where commercial tools held the firmest advantage. ElevenLabs built a business on natural-sounding speech that open-source models could not match. Two March releases changed this.

Hume AI's TADA (Text-Acoustic Dual Alignment), released March 10, is a speech-language model that synchronizes text and audio generation in a single stream. The result: zero content hallucinations across 1,000+ test samples from LibriTTS-R. It synthesizes speech five times faster than comparable LLM-based systems and can produce up to 700 seconds of audio within a standard 2,048-token context window. Hume released both a 1-billion-parameter English model and a 3-billion-parameter multilingual variant on Hugging Face.

Fish Audio S2, also released March 10, attacked the expressiveness problem. Trained on over 10 million hours of audio across approximately 50 languages, S2 achieved the lowest word error rate among all evaluated models, including closed-source systems like Qwen3-TTS and MiniMax Speech-02. On the Audio Turing Test, S2 scored 0.515, surpassing Seed-TTS (0.417) by 24%. Its signature feature is word-level emotional control: embed natural-language instructions like [whisper] or [professional broadcast tone] at specific positions in the text. The S2-Pro variant hits sub-150ms latency on NVIDIA H200 hardware.
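As an illustration of what word-level control could look like in practice, the sketch below places bracketed instructions in front of chosen words. The helper `insert_tags` is hypothetical and not part of any Fish Audio tooling; it only assumes the bracket style shown in the examples above, and the exact input format S2 expects may differ.

```python
def insert_tags(text: str, tags: dict[int, str]) -> str:
    """Place a bracketed instruction before the word at each given index.

    Hypothetical helper for illustration only: it builds a tagged string
    in the [instruction] style quoted in the article.
    """
    out = []
    for i, word in enumerate(text.split()):
        if i in tags:
            out.append(f"[{tags[i]}]")
        out.append(word)
    return " ".join(out)


script = insert_tags(
    "Welcome back to the show. Tonight we have a secret to share.",
    {0: "professional broadcast tone", 8: "whisper"},
)

if __name__ == "__main__":
    print(script)
```

The tagged script would then be passed to the synthesis model as ordinary text, with each instruction applying from the position where it appears.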

ElevenLabs' Starter plan costs $5/month for 30,000 credits. Their Pro plan at $99/month delivers 500,000 credits with production-quality output. For creators producing regular voiceover content, podcasts, or video narration, TADA and Fish Audio S2 now offer competitive quality at zero ongoing cost, though Fish Audio's model weights use a research license that requires a commercial agreement for business use.

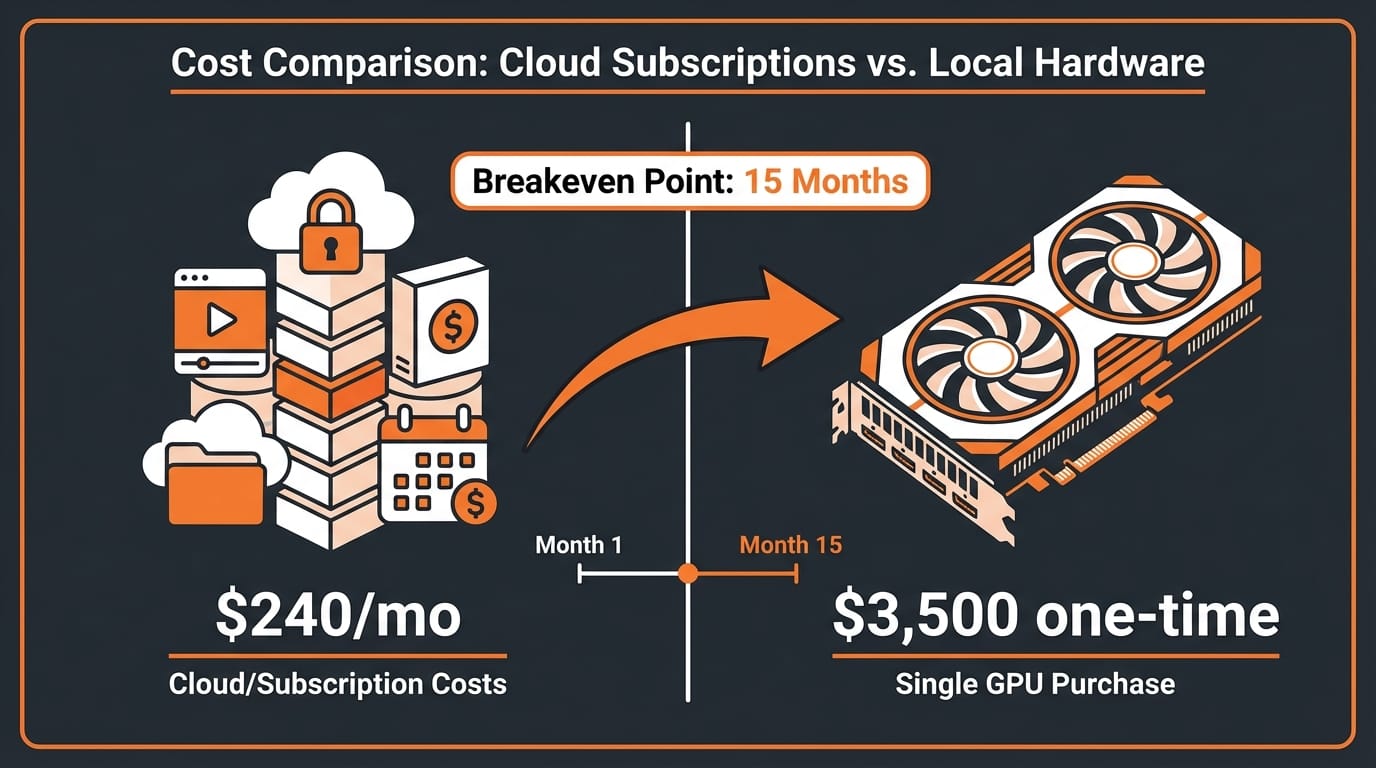

The Total Cost Equation: Hardware vs. Subscriptions

The practical question for creators: what does it actually cost to run these tools locally?

A practical baseline for running all of these tools on one machine is an NVIDIA RTX 4090 with 24GB VRAM. Used prices sit around $1,800 to $2,200 in March 2026. Combined with a suitable CPU, 64GB RAM, and storage, a complete workstation runs approximately $3,000 to $4,000. This configuration handles FireRed 1.1 (quantized), Kiwi-Edit, Helios (with Group Offloading), LTX-2.3 (at 1080p), and TADA comfortably.
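The VRAM figures quoted earlier in this article can be collected into a small lookup to check what fits on a given card. This is only a sketch: it covers the modes for which a number was quoted, leaves out Kiwi-Edit and TADA because no figure was given, and says nothing about running several models at the same time.

```python
# VRAM requirements in GB, as quoted in this article. Models without a
# quoted figure (Kiwi-Edit, TADA) are left out rather than guessed.
VRAM_REQUIREMENTS_GB = {
    ("FireRed 1.1", "full"): 30,
    ("FireRed 1.1", "NVFP4 quantized"): 12,
    ("Helios", "Group Offloading"): 6,
    ("LTX-2.3", "1080p"): 12,
    ("LTX-2.3", "full quality"): 24,
}


def modes_that_fit(gpu_vram_gb: int) -> list[tuple[str, str]]:
    """Return (model, mode) pairs whose quoted requirement fits the GPU."""
    return sorted(
        key for key, needed in VRAM_REQUIREMENTS_GB.items()
        if needed <= gpu_vram_gb
    )


if __name__ == "__main__":
    for gpu in (6, 12, 24):
        print(f"{gpu}GB:", modes_that_fit(gpu))
```

On a 24GB card every listed mode fits except FireRed 1.1 at full quality, which is why the quantized variant appears in the configuration above.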

Now stack up the subscription costs for equivalent commercial capability:

- Image editing: Adobe Creative Cloud ($22.99/month) or Runway ($28/month)

- Video generation: Runway Pro ($28/month) or Pika ($58/month)

- Voice synthesis: ElevenLabs Pro ($99/month)

- Image generation: Midjourney Standard ($30/month)

Total: roughly $150 to $240 per month depending on which tiers you pick (the plans listed above sum to about $180 to $215), or $1,800 to $2,880 per year. A $3,500 local setup pays for itself in 15 to 24 months at those rates and keeps producing after that. The hardware also becomes more useful over time as new open-source models are released, since most are designed to run on consumer GPUs.
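The breakeven range follows from dividing the hardware cost by the monthly subscription spend. The sketch below reproduces it, with the simplifying assumptions that electricity, resale value, and price changes are ignored; it also runs the same calculation on the cheapest and priciest combinations of the plans itemized above.

```python
import math


def breakeven_months(hardware_cost: float, monthly_subscriptions: float) -> int:
    """Whole months until cumulative subscription fees reach the hardware cost."""
    return math.ceil(hardware_cost / monthly_subscriptions)


HARDWARE_COST = 3500  # midpoint of the $3,000 to $4,000 workstation estimate

# Cheapest and priciest picks from the itemized list of plans.
cheapest_stack = 22.99 + 28 + 99 + 30   # Adobe, Runway Pro, ElevenLabs Pro, Midjourney
priciest_stack = 28 + 58 + 99 + 30      # Runway, Pika, ElevenLabs Pro, Midjourney

if __name__ == "__main__":
    for monthly in (150, cheapest_stack, priciest_stack, 240):
        months = breakeven_months(HARDWARE_COST, monthly)
        print(f"${monthly:.2f}/month -> breakeven in {months} months")
```

At $150 and $240 per month the result is 24 and 15 months, matching the range quoted above; the itemized stacks land in between, at 20 and 17 months.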

The tradeoff is real, though. Local setups require technical comfort with Python environments, model loading, and occasional troubleshooting. There is no customer support. Updates require manual model downloads. And for creators who need only occasional AI assistance, subscriptions remain more practical.

Impact on Creators

This wave matters most for three groups. Solo creators and small studios producing daily content (social media, YouTube thumbnails, podcast intros) now have a zero-marginal-cost alternative to subscription tools. The upfront hardware cost is meaningful but recoverable. Creators in developing markets, where $100/month in AI subscriptions represents a significant percentage of income, gain access to professional-grade tools for a one-time hardware investment. And technical creators who integrate AI into custom pipelines, using tools like Modular Diffusers for composable workflows, can now chain open-source models across every modality without API rate limits or per-use costs.

The gap that remains is in convenience and polish. Commercial tools offer integrated interfaces, cloud processing, and consistent updates. Open-source tools require assembly. But the quality gap, which was the primary barrier, has effectively closed across image editing, video generation, video editing, and voice synthesis within a single two-week period.

Key Takeaways

- Six production-grade open-source creative AI models launched between March 1 and 15, 2026, covering image editing (FireRed 1.1), video editing (Kiwi-Edit), video generation (Helios, LTX-2.3), and voice synthesis (TADA, Fish Audio S2)

- Benchmark parity with commercial tools is now real: FireRed 1.1 tops open-source image editing rankings, Fish Audio S2 beats closed-source TTS on word error rate, and TADA achieved zero hallucinations

- Consumer hardware is sufficient: most models run on a 24GB GPU (RTX 4090), with Helios scaling down to 6GB VRAM via Group Offloading

- Cost breakeven is 15 to 24 months comparing a $3,500 local setup against $150 to $240/month in combined subscriptions

- All models use permissive licenses (Apache 2.0 or MIT), except Fish Audio S2's model weights, which require a commercial license for business use

What to Watch

The next test is adoption. Open-source creative AI has historically struggled with the "last mile" of user experience. ComfyUI and similar frontends have improved, but the gap between downloading a model and producing professional output remains wider than clicking "generate" in Midjourney. Watch for integrated desktop applications like the one Lightricks shipped with LTX-2.3. If other teams follow that pattern, bundling models with polished local interfaces, the subscription model for creative AI tools faces genuine disruption.

Also watch the training data question. As techniques like Black Forest Labs' Self-Flow approach to multimodal training mature, the next generation of open-source models may train more efficiently on smaller, curated datasets. That would lower the barrier for specialized creative models tuned to specific workflows, making the open-source ecosystem even more competitive.

March 2026 is not the moment open-source won. It is the moment the tools became good enough that the choice between open-source and commercial is no longer about quality. It is about workflow preference, technical comfort, and how you want to spend your money.