Apple just admitted it cannot build a competitive voice assistant alone. On February 12, 2026, the company announced a multi-year partnership with Google to rebuild Siri from the ground up using Gemini AI. The deal is reportedly worth $1 billion per year and represents the most significant strategic pivot in Apple's AI history. For the owners of roughly 2.2 billion active Apple devices worldwide, this means the voice assistant that has been a punchline for years is about to become something genuinely useful. For creators who live inside the Apple ecosystem, the implications run deeper than a better Siri. This is about turning every Apple device into a context-aware creative assistant that understands what you are working on, across every app, without you having to explain it.

Background: Why Apple Needed Google

Apple has been losing the AI race since ChatGPT launched in late 2022. While Google shipped Gemini, OpenAI reached 700 million weekly ChatGPT users, and Anthropic carved out the enterprise market with Claude, Apple's AI efforts produced Apple Intelligence features that reviewers called "underwhelming" and a Siri that still struggled with basic multi-step requests.

The core problem is data. Apple's privacy-first philosophy, while admirable, left the company with a critical training data gap. You cannot build a state-of-the-art large language model without massive datasets, and Apple's refusal to harvest user data the way Google and Meta do meant its in-house models consistently underperformed. Internal testing reportedly put OpenAI's ChatGPT, Anthropic's Claude, and Google's Gemini through rigorous evaluation. Gemini won.

The choice of Google over OpenAI is itself a signal. Apple already integrates ChatGPT into Siri for complex queries requiring world knowledge. But for the foundational intelligence layer that will power the next generation of Siri, Apple chose Gemini for its multimodal capabilities and contextual understanding. Google's 1.2-trillion-parameter model reportedly exceeded Apple's requirements across the board.

This is not Apple outsourcing its AI. It is Apple doing what it has always done with components: finding the best supplier and wrapping it in Apple's design, privacy framework, and hardware integration. The same way Apple uses TSMC to fabricate its chips rather than building its own fabs, it is now using Google's model expertise while keeping the user experience and data handling entirely within Apple's control.

Deep Analysis

What the $1 Billion Per Year Actually Buys

The partnership gives Apple access to Google's frontier Gemini models as the foundation for what Apple internally calls "Apple Foundation Models version 11." This is not a simple API integration where Siri sends queries to Google's servers. Apple is building custom models derived from Gemini's architecture, training them on Apple-specific tasks, and running them on Apple's own infrastructure.

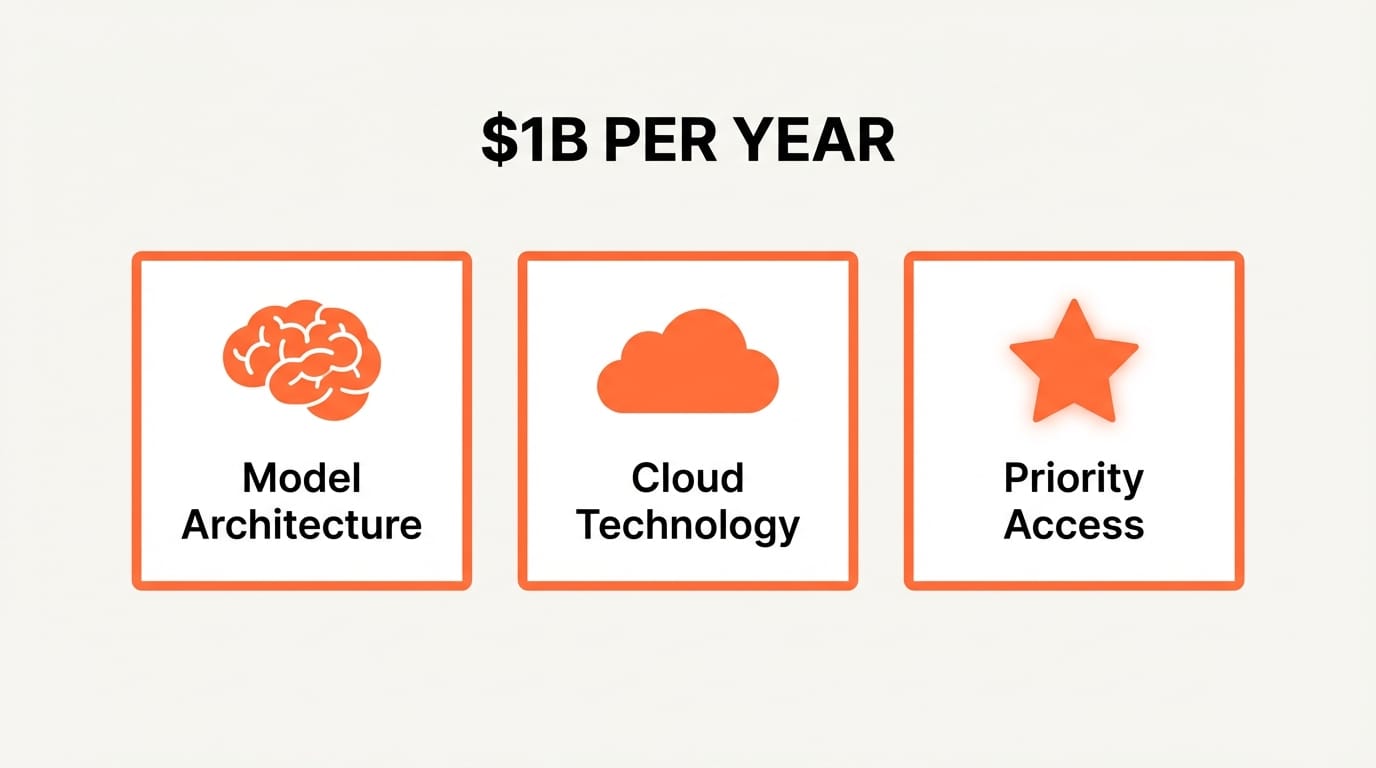

The $1 billion annual price tag buys three things. First, licensing rights to Gemini's model architecture and weights as a training foundation. Second, access to Google's cloud technology and AI research for ongoing model development. Third, a multi-year guarantee that Apple gets priority access to Google's latest model improvements.

For Google, the deal validates Gemini as the foundation model of choice for the world's most valuable company. It also creates a recurring revenue stream and ensures Google's AI technology reaches every iPhone, iPad, and Mac on the planet. The strategic value of being embedded in 2.2 billion devices is enormous, even if Apple's privacy layer means Google never sees the user data.
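To put the reported price in perspective, the arithmetic below spreads the annual fee evenly across the installed base. It is only a back-of-the-envelope sketch: both inputs are the approximate figures quoted in this article, and the even split is an assumption made for illustration, not how the deal is structured.

```python
# Approximate figures quoted in the article; neither is an official number.
ANNUAL_FEE_USD = 1_000_000_000   # reported value of the deal per year
ACTIVE_DEVICES = 2_200_000_000   # roughly 2.2 billion Apple devices


def cost_per_device(annual_fee: float, devices: int) -> float:
    """Annual fee divided evenly across every device (illustrative assumption)."""
    return annual_fee / devices


per_device = cost_per_device(ANNUAL_FEE_USD, ACTIVE_DEVICES)
print(f"${per_device:.2f} per device per year")  # prints "$0.45 per device per year"
```

At well under a dollar per device per year, the fee is small relative to the reach Google gains.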

The Technical Architecture: Privacy Without Compromise

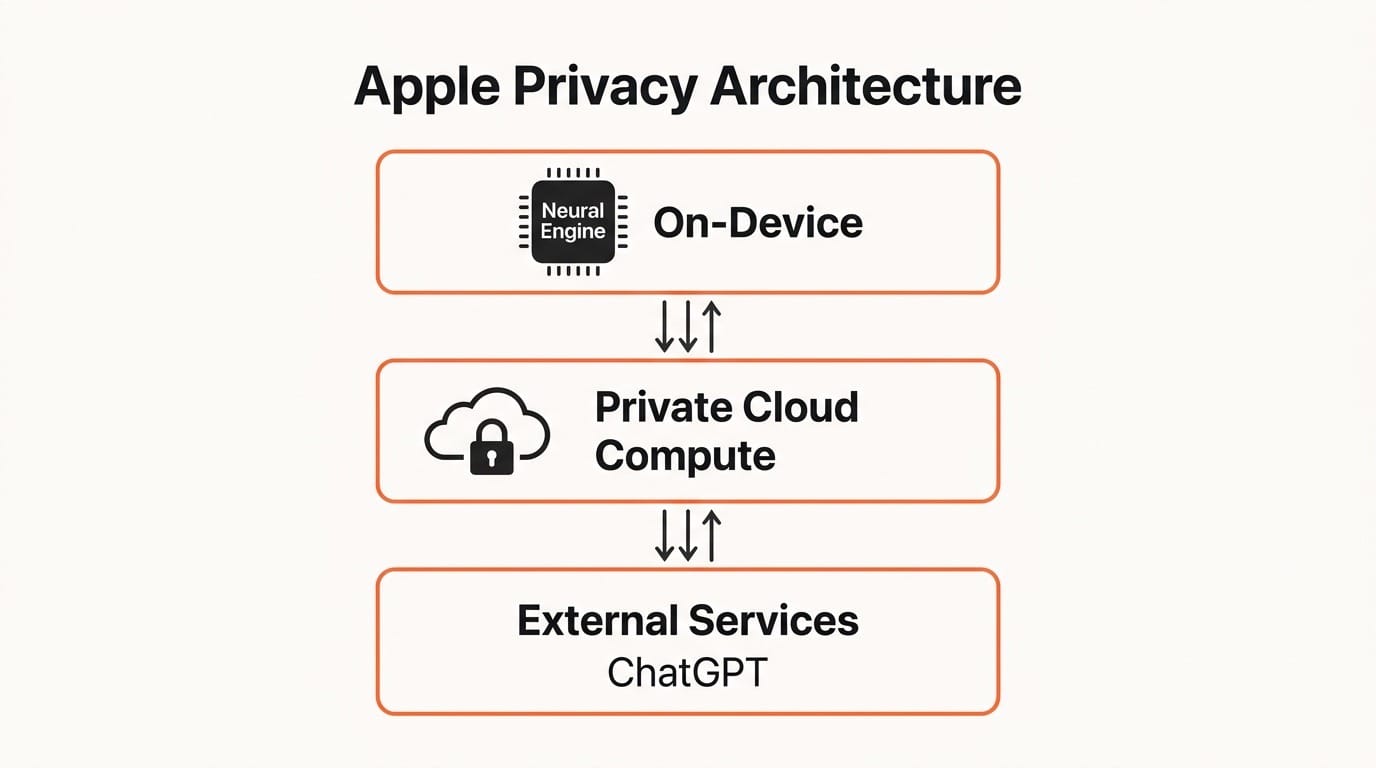

Tim Cook addressed the privacy question directly in a January 29 statement: Apple will not change its privacy rules for this partnership. The technical architecture makes this possible through a three-tier system.

Tier 1: On-device processing. The simplest Siri requests run entirely on the device using Apple's Neural Engine. No data leaves the phone. This handles basic commands, quick lookups, and routine tasks where the on-device model is sufficient.

Tier 2: Private Cloud Compute. More complex requests that exceed the on-device model's capabilities are routed to Apple's Private Cloud Compute (PCC) servers. These servers run the custom Gemini-derived models. The critical detail: data processed through PCC is not stored, not used to train models, and not accessible to Apple employees. The system is designed to minimize the amount of data shared with the cloud in the first place.

Tier 3: External services. For queries requiring real-time world knowledge (weather, sports scores, web searches), Siri can still route to external services including ChatGPT, but only with explicit user permission.
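The three tiers can be read as a routing decision: choose the most private tier that can still serve the request. The sketch below is a hypothetical illustration of that idea, not Apple's implementation. Apple has not published its routing logic, so the `Request` fields, the `complexity` score, the `ON_DEVICE_LIMIT` cutoff, and the fallback used when the user declines external services are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ON_DEVICE = "on-device"
    PRIVATE_CLOUD = "private cloud compute"
    EXTERNAL = "external service"


@dataclass
class Request:
    text: str
    complexity: float = 0.0             # hypothetical score in [0, 1]
    needs_live_data: bool = False       # e.g. weather, sports scores, web search
    user_allows_external: bool = False  # explicit permission required for Tier 3


ON_DEVICE_LIMIT = 0.3  # assumed cutoff, not a published figure


def route(req: Request) -> Tier:
    """Pick the most private tier that can plausibly serve the request."""
    if req.needs_live_data:
        # Tier 3 is reachable only with explicit permission. Falling back to
        # Private Cloud Compute otherwise is an assumption of this sketch.
        return Tier.EXTERNAL if req.user_allows_external else Tier.PRIVATE_CLOUD
    if req.complexity <= ON_DEVICE_LIMIT:
        return Tier.ON_DEVICE
    return Tier.PRIVATE_CLOUD


print(route(Request("set a timer for ten minutes", complexity=0.1)).value)
print(route(Request("summarize this contract", complexity=0.8)).value)
```

The design choice worth noticing is the order of the checks: the external tier is never a default, only an opt-in.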

This architecture means creators working with sensitive client content, unreleased designs, or proprietary material can use the new Siri features without the privacy concerns that come with cloud-first alternatives. Your creative work stays within Apple's boundary.

The Feature Rollout: What Is Coming and When

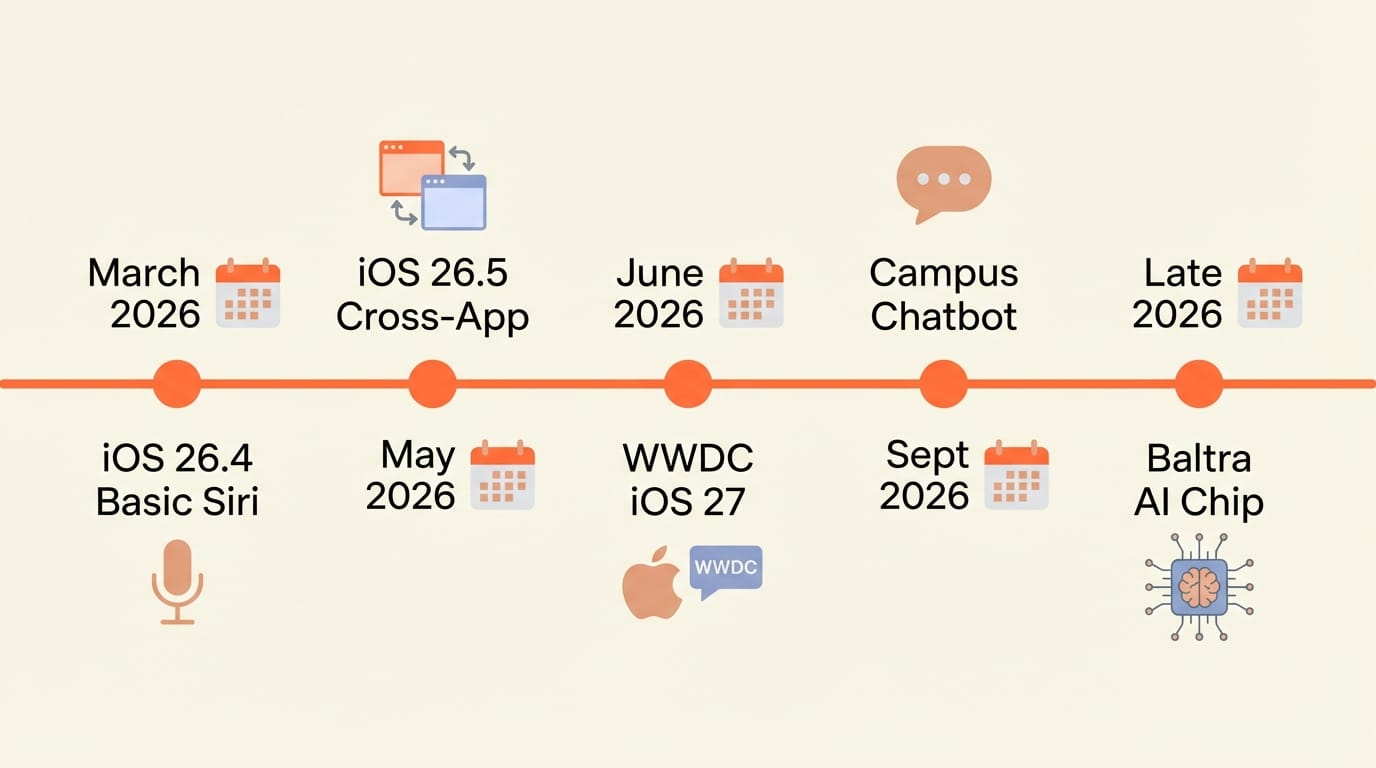

Apple confirmed three core capabilities for iOS 26.4, originally targeted for March 2026. However, the rollout has become more complicated than the initial announcement suggested.

On-Screen Awareness: Siri can interpret what is currently displayed on screen and act on it. If a restaurant appears in Safari, Siri can make a reservation without the user copying the name or address. If a flight confirmation email is open, Siri can add it to the calendar and set departure reminders automatically. This uses the Neural Engine on Apple's latest silicon for real-time screen interpretation.

Personal Context: Siri can retrieve specific information from notes, emails, messages, photos, and files stored on the device. Ask for a driving license number from a saved photo. Find a podcast episode recommended in a message thread. Pull reservation details from a text conversation. The assistant understands your personal data graph without that data ever leaving Apple's ecosystem.

Cross-App Intelligence: Siri can take actions across multiple apps in a single request. "Send Sarah the photos from last weekend's shoot and schedule a follow-up call for Thursday" would require Siri to access Photos, identify the relevant images, open Messages or Mail, attach them, then open Calendar and create an event. No manual app-switching required.
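The Sarah example above can be pictured as one spoken request expanding into an ordered list of app actions. The sketch below hand-writes that decomposition to show the shape of such a plan. The `Step` type and the action names are hypothetical and do not correspond to real Siri or App Intents identifiers, and a real assistant would derive the plan from the request rather than hard-code it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    app: str     # app that would perform the action
    action: str  # hypothetical action name, for illustration only
    detail: str


def plan_share_and_schedule(contact: str, photo_hint: str, day: str) -> list[Step]:
    """Hand-written decomposition of the example request into ordered steps."""
    return [
        Step("Photos", "find_images", photo_hint),
        Step("Messages", "send_attachments", f"to {contact}"),
        Step("Calendar", "create_event", f"follow-up call with {contact} on {day}"),
    ]


plan = plan_share_and_schedule("Sarah", "last weekend's shoot", "Thursday")
for number, step in enumerate(plan, start=1):
    print(f"{number}. {step.app}: {step.action} ({step.detail})")
```

Each step depends on the one before it (the photos must be found before they can be sent), which is why a failure in any single app can stall the whole request.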

Here is the complication. The iOS 26.4 beta launched in February without many of these features. Internal testing revealed quality problems and performance issues, particularly with the machine learning components. Apple is now spreading the rollout across multiple releases. Some features will ship in iOS 26.4, others in iOS 26.5 (expected May), and the most ambitious capabilities may not arrive until iOS 27, which is expected to be previewed at WWDC in June and, if Apple follows its usual schedule, released in September.

The Campos Project: Siri Becomes a Chatbot

Beyond the iOS 26.4 features, Apple is working on an internal project codenamed "Campos" that represents a more fundamental reimagining of Siri. Expected to debut at WWDC 2026 with iOS 27, Campos transforms Siri from a voice-command interface into a full AI chatbot.

The redesigned Siri will support both voice and text input, handle longer conversational interactions, and gain capabilities that directly compete with ChatGPT and Gemini as standalone products. Reported features include web search, content creation, image generation, file analysis, and deeper control of device features and system settings.

This is the endgame. Apple is not just improving Siri's intelligence. It is rebuilding the entire interaction model from task-based voice commands to sustained, context-aware conversations that can span multiple sessions and handle complex multi-step workflows.

Apple's Long-Term Infrastructure Play

The Google partnership is a bridge, not a destination. Apple is simultaneously developing its own AI server chip, codenamed "Baltra," in collaboration with Broadcom. Built on TSMC's N3P process (reportedly the same node OpenAI and Nvidia are using for their AI chips), Baltra is scheduled for mass production in the second half of 2026, with dedicated Apple AI data centers coming online in 2027.

This tells you Apple's strategic thinking. Use Google's models now to close the capability gap immediately. Build custom silicon and infrastructure in parallel. Eventually reduce or eliminate the dependency on Google once Apple's own hardware and models can match the performance. The $1 billion per year to Google is the cost of not falling further behind while the long-term solution comes together.

Impact on Creators: What Changes for You

If You Create Visual Content on Apple Devices

On-screen awareness is the feature that matters most for visual creators. The ability to say "save this color palette" while viewing an image, "add this to my mood board" while browsing Pinterest, or "send this mockup to the client" while reviewing in Figma removes the friction of context-switching that eats hours every week.

For photographers and videographers, asking Siri to "find all the photos from the studio session on Tuesday and create a contact sheet" becomes possible when Siri understands your Photos library, your Calendar (to identify "Tuesday"), and can execute multi-step actions across apps.

Actionable step: Start identifying the repetitive multi-app tasks in your creative workflow. Which actions involve copying information from one app and pasting it into another? Those are the first tasks the new Siri will handle.

If You Manage Content Across Platforms

Creators who publish across multiple platforms spend significant time on distribution logistics. Cross-app intelligence could eventually handle sequences like "take the blog post draft from Notes, create a summary for LinkedIn, pull the hero image from Photos, and schedule both for Thursday morning." Each step currently requires manual app-switching and copy-pasting.

The Campos chatbot interface adds another dimension. Instead of rigid voice commands, you could have a conversation with Siri about your content strategy, ask it to analyze engagement patterns from your email newsletters, and have it draft responses to collaboration requests, all within a sustained dialogue that remembers context from previous conversations.

Actionable step: Document your content distribution workflow as a numbered sequence of steps. When the new Siri capabilities ship, you will be ready to delegate the mechanical parts immediately.
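One way to document such a workflow is as structured data, so the mechanical steps can be separated from the ones that need your judgment. The sketch below is a minimal illustration under that assumption. The `WorkflowStep` fields and the sample steps are hypothetical, loosely based on the LinkedIn example above.

```python
from dataclasses import dataclass


@dataclass
class WorkflowStep:
    description: str
    source_app: str
    target_app: str
    needs_judgment: bool  # True when a human decision is involved


def delegable(steps: list[WorkflowStep]) -> list[int]:
    """Return the 1-based numbers of steps that are purely mechanical."""
    return [n for n, step in enumerate(steps, start=1) if not step.needs_judgment]


workflow = [
    WorkflowStep("Copy the blog post draft", "Notes", "LinkedIn", False),
    WorkflowStep("Write the LinkedIn summary", "Notes", "LinkedIn", True),
    WorkflowStep("Attach the hero image", "Photos", "LinkedIn", False),
    WorkflowStep("Schedule for Thursday morning", "LinkedIn", "LinkedIn", False),
]

print(delegable(workflow))  # prints [1, 3, 4]
```

The numbers it returns are the steps to hand off first. The rest stay with you until you trust the assistant's output.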

If You Work With Client Content

The privacy architecture is the differentiator for professional creators. Agencies, freelancers, and studios handling client work under NDAs need assurance that their AI assistant is not sending proprietary material to external servers for training. Apple's Private Cloud Compute model provides that guarantee in a way that cloud-first competitors currently do not match.

This could make Apple devices the default choice for professional creative work where confidentiality matters. If you can get ChatGPT-level intelligence through Siri without the data privacy tradeoffs, the value proposition shifts significantly toward Apple's ecosystem.

Actionable step: Review your current AI tool usage through a privacy lens. Which tools process client data on external servers? The new Siri may offer equivalent capabilities with stronger privacy guarantees.

Key Takeaways

1. Apple is rebuilding Siri using Google's Gemini AI in a deal reportedly worth $1 billion per year. The custom models derived from Google's 1.2-trillion-parameter Gemini run on Apple's Private Cloud Compute, not Google's servers, preserving Apple's privacy guarantees.

2. Three core capabilities are coming: on-screen awareness, personal context understanding, and cross-app intelligence. The rollout is staggered across iOS 26.4, 26.5, and iOS 27, with the most ambitious features held for iOS 27, which is expected to debut at WWDC in June.

3. Project Campos will transform Siri into a full AI chatbot with iOS 27, supporting text input, longer conversations, content creation, and image generation. This directly competes with ChatGPT and Gemini as standalone products.

4. Apple is simultaneously building custom AI server chips (Baltra) for mass production in late 2026, signaling that the Google partnership is a bridge to eventual AI independence.

5. For creators, the privacy-first architecture makes Apple devices uniquely positioned for professional work involving sensitive client content. The tradeoff is a slower rollout in exchange for AI assistance without data privacy compromises.

What to Watch

March 2026: iOS 26.4 ships. Watch which Siri features actually make the cut versus what gets pushed to later releases. The gap between Apple's promises and the shipping product will tell you how much the Gemini integration has matured.

May 2026: iOS 26.5 should deliver the second wave of Siri capabilities. Cross-app actions and deeper personal context features are expected here. This is when creative workflow automation becomes practical.

June 2026 (WWDC): Project Campos debuts with iOS 27. This is the big reveal. The full chatbot interface, expanded capabilities, and Apple's vision for Siri as a creative assistant will be laid out. Watch for developer APIs that let third-party creative tools integrate with the new Siri.

Late 2026: Baltra chips enter mass production. Because Baltra is a server chip, expect the performance and capability jump to show up in Apple's cloud-side AI processing as its dedicated data centers come online in 2027. This is when the dependency on Google's models can start to decrease.

The Siri overhaul is not just an upgrade. It is Apple's admission that AI assistants are the next major platform battleground, and that winning requires partnering with the best model provider rather than building everything in-house. For creators in the Apple ecosystem, the result should be an AI assistant that understands your work, respects your privacy, and actually helps rather than frustrates. The question is whether Apple can execute the rollout without the delays and quality issues that have plagued its AI efforts so far.

Deep dive by Creative AI News.
