OpenAI signed a military contract that its rival Anthropic refused to accept. The deal triggered the most senior resignation in OpenAI's recent history, an unprecedented government blacklisting of an American AI company, and a legal battle that is still unfolding. For creators who build workflows around these platforms, this is not an abstract policy debate. It is a concrete signal about how AI companies prioritize revenue versus the ethical commitments their users rely on.

Background

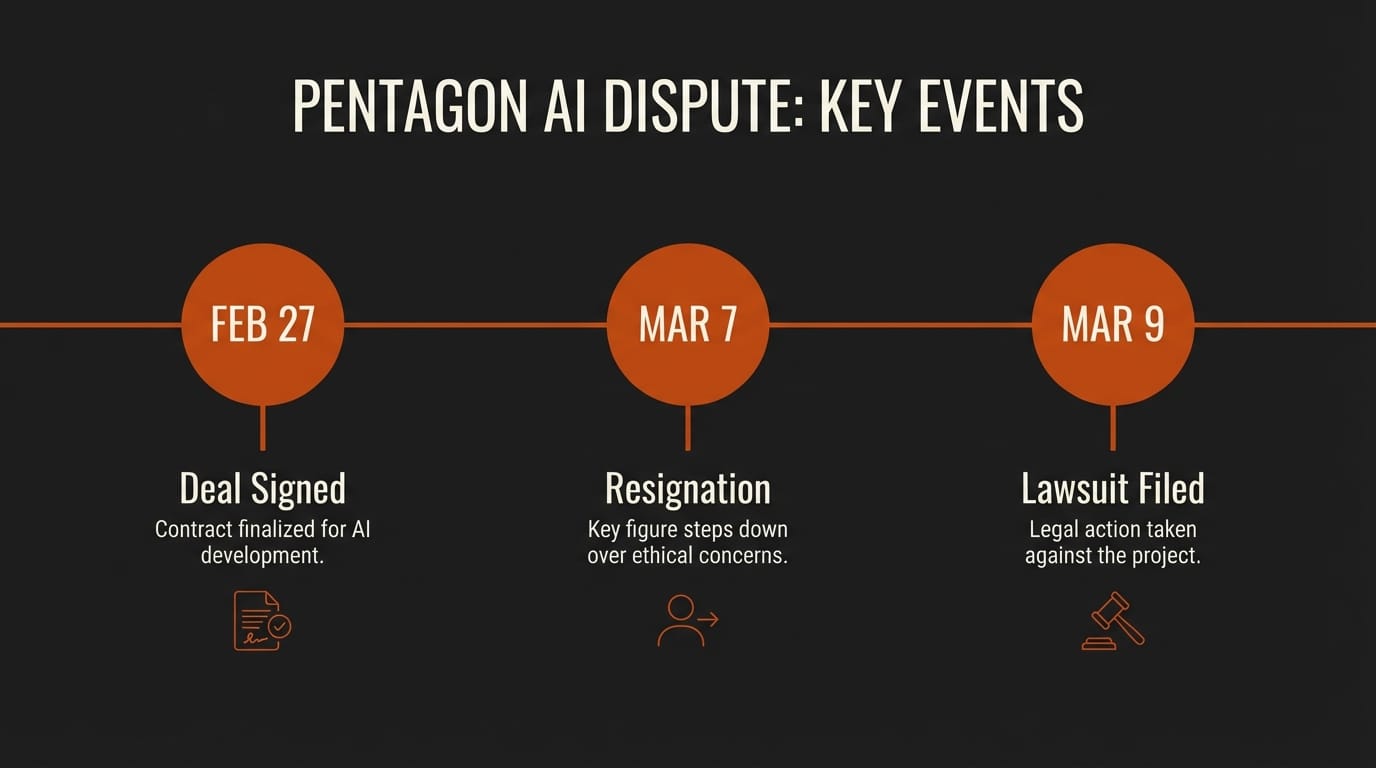

On February 27, 2026, the U.S. Department of Defense asked Anthropic to agree that the Pentagon could use its Claude AI models for "any lawful purpose," including mass domestic surveillance and fully autonomous weapons. Anthropic refused. The company maintained two explicit red lines: no surveillance of American citizens without judicial oversight, and no autonomous weapons without human authorization.

The Trump administration responded by designating Anthropic a "supply chain risk," a label historically reserved for foreign adversaries like Huawei and Kaspersky. Defense contractors were ordered to certify that they do not use Claude in any Pentagon work. Anthropic estimated the designation put hundreds of millions of dollars in 2026 revenue at risk.

Within hours, OpenAI moved to fill the gap. Sam Altman announced a new agreement with the Pentagon on the same day. The speed drew immediate backlash. Altman later admitted the deal "looked opportunistic and sloppy" and said he "shouldn't have rushed" it out on a Friday.

Deep Analysis

The Contract Loopholes

OpenAI's published contract excerpts state three prohibitions: no mass domestic surveillance, no directing of autonomous weapons systems, and no high-stakes automated decisions such as social credit systems. On the surface, these match the red lines Anthropic demanded.

The critical difference is enforcement. Anthropic wanted contractual language that would give it a free-standing right to prohibit certain government uses. OpenAI's contract, according to legal experts who reviewed the published excerpts, does not grant that right. Instead, it states that the Pentagon cannot use OpenAI's technology to break existing laws and policies "as they're stated today."

Brad Carson, former Army undersecretary, said he does not believe the strongest prohibitions are actually in the full contract. Alan Rozenshtein, a former Department of Justice attorney, stated that "there is nothing OpenAI can do to clarify this except release the contract." The full text has not been made public.

The published excerpts include qualifiers like "intentionally" and "consistent with applicable laws," which legal analysts note have historically provided intelligence agencies with significant flexibility. The Electronic Frontier Foundation described the contract language as "weasel words" that would not prevent AI-powered surveillance in practice.

The Resignation and Internal Backlash

On March 7, Caitlin Kalinowski, OpenAI's head of robotics and consumer hardware, resigned over the deal. (We covered the initial resignation in our quick post.) She posted publicly that "surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got." She called it a "governance concern first and foremost," noting that guardrails should have been established before the deal was signed, not after.

Kalinowski is the most senior OpenAI employee to publicly break with the company over an ethical issue. Internally, OpenAI staff were upset as well. CNN reported that employees were "fuming" about the deal, with many expressing respect for Anthropic's decision to stand firm. More than 30 employees from OpenAI and Google DeepMind, including Google chief scientist Jeff Dean, filed an amicus brief warning that the Pentagon's blacklisting of Anthropic threatens the entire American AI industry.

The internal dissent is notable because it reveals a gap between how AI companies market their ethical commitments to users and how they behave when major government revenue is on the table.

Anthropic's Legal Fight

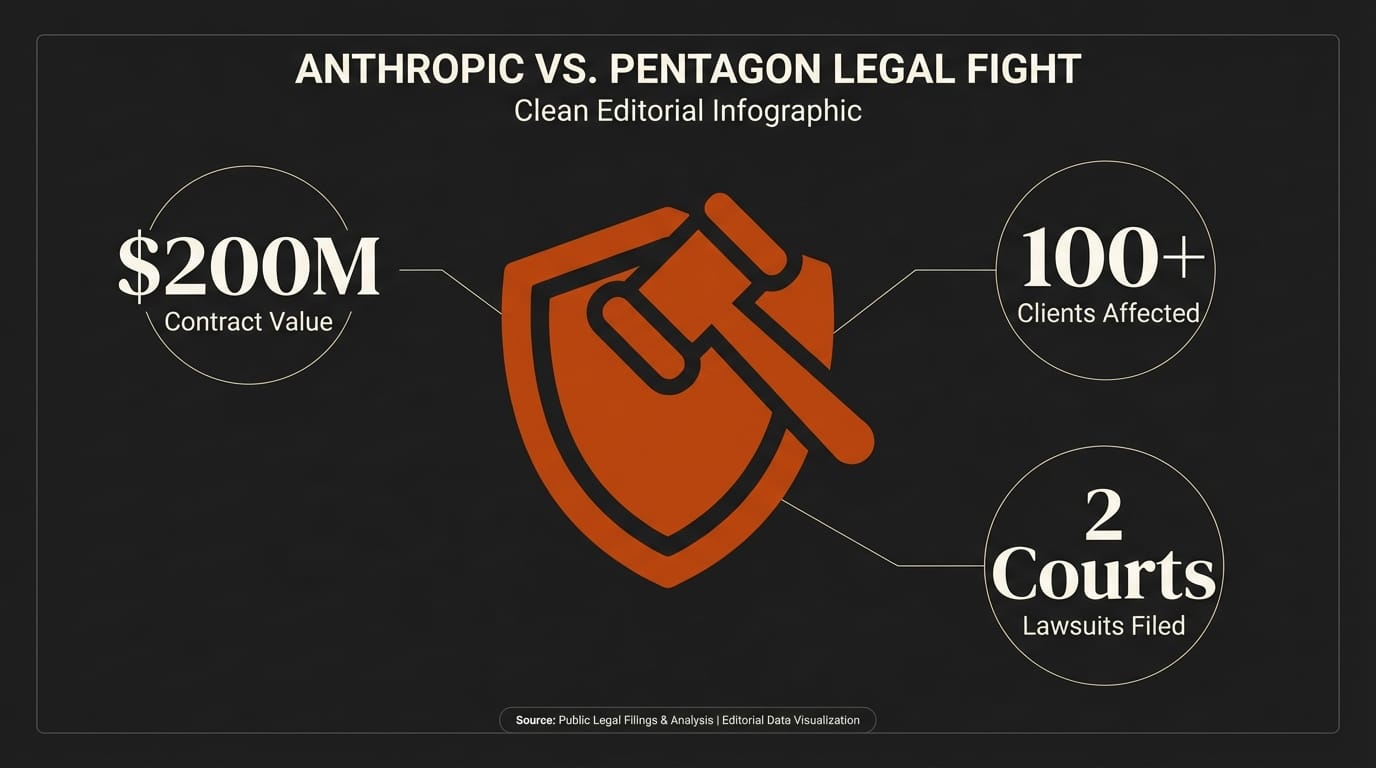

On March 9, Anthropic filed lawsuits in both the U.S. District Court for the Northern District of California and the federal appeals court in Washington, D.C. The company argued that the supply chain risk designation violated its First Amendment rights and exceeded the scope of the underlying statute, which was designed to address foreign adversary contractors.

Anthropic had previously held a $200 million contract with the Department of Defense and was the first AI lab to deploy its technology across the Pentagon's classified networks. The blacklisting effectively reversed that position overnight. Meanwhile, contradictions emerged: Palantir CEO Alex Karp confirmed his company still uses Anthropic's Claude models in military operations, including in Iran, despite the blacklist.

The legal battle is expected to take months, possibly years. Anthropic sought an emergency stay from the appeals court on March 12 to prevent irreversible damage to its business while the case proceeds.

Impact on Creators

This fight matters for anyone who builds creative workflows on top of AI platforms. Platform risk is not just about whether a company's API stays online. It is about whether the company's decision-making reflects values you can predict. When OpenAI moved within hours to sign a deal its competitor refused on ethical grounds, it sent a signal about what the company will prioritize under pressure. If you have built critical workflow components around ChatGPT, DALL-E, or OpenAI's API, you are exposed not only to technical risk but to reputational and ethical alignment risk.

For creators in media, journalism, education, or activism, the surveillance implications are direct. If your AI tools are also deployed in mass surveillance systems, your use of those same tools carries different weight. Your audience and collaborators may have their own views about which platforms they trust.

The practical takeaway is straightforward: diversify your AI stack. No single provider should be a single point of failure for your creative work. Use OpenAI for what it does best, Anthropic for what it does best, and open-source models for tasks where you need full control. Evaluate providers not only on capability and price, but on how they handle pressure from their largest customers.
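One way to picture a diversified stack is a thin fallback layer that tries providers in order of preference. The sketch below is a minimal illustration, not a production client: `Provider`, `generate_with_fallback`, and the two stub functions are hypothetical names invented for this example, and each `generate` callable stands in for whatever real SDK call you would wrap yourself.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Provider:
    """A hypothetical wrapper around one AI provider."""
    name: str                       # label used for logging and routing
    generate: Callable[[str], str]  # takes a prompt, returns text, raises on failure


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the list has failed."""


def generate_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (provider name, generated text)."""
    errors = []
    for provider in providers:
        try:
            return provider.name, provider.generate(prompt)
        except Exception as exc:  # any failure moves on to the next provider
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub callables standing in for real SDK calls (assumptions for illustration).
def unavailable(prompt: str) -> str:
    raise ConnectionError("service unavailable")


def local_stub(prompt: str) -> str:
    return f"[local draft] {prompt}"


providers = [
    Provider("hosted-a", unavailable),    # first choice, simulated outage
    Provider("local-model", local_stub),  # open-source fallback you control
]

name, text = generate_with_fallback("Outline a video script", providers)
print(name, text)
```

Keeping the provider list as plain data means that reordering or dropping a vendor is a one-line change rather than a rewrite of the workflow.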

Key Takeaways

1. OpenAI signed a Pentagon deal within hours of Anthropic's blacklisting, a deal whose full contract text remains unreleased and whose safeguards experts call insufficient.

2. Anthropic's refusal to allow its AI to be used for mass surveillance or autonomous weapons led to an unprecedented supply chain risk designation against an American company.

3. The most senior OpenAI resignation over the deal, internal employee dissent, and a cross-company amicus brief together signal that the AI industry itself is divided on these questions.

4. Creators who depend on AI platforms should evaluate provider ethics alongside technical capability and diversify their tool stack to reduce platform risk.

What to Watch

The Anthropic lawsuit will set precedent for whether the U.S. government can punish AI companies that impose ethical restrictions on military use. If Anthropic wins, it strengthens the position of every AI company that wants to maintain ethical guardrails. If it loses, expect other AI companies to quietly drop their own red lines when government contracts are at stake.

Watch for OpenAI to release more details about its Pentagon contract in the coming weeks as public pressure mounts. And pay attention to which AI companies your creative tools depend on. The next time an AI company faces a similar pressure test, the choice it makes will directly affect the tools you use every day.

Deep dive by Creative AI News.
