OpenAI just raised more money in a single round than most countries spend on defense. The $110 billion deal, backed by Amazon, Nvidia, and SoftBank, values the company at $730 billion and reshapes the competitive landscape for every creator who depends on AI tools. But behind the headline number, the real story is the Amazon partnership, and what it signals about who will control the AI tools you use every day. Here is what the numbers actually mean for your workflow, your costs, and the tools you will build on in 2026 and beyond.

Background: How We Got Here

To understand the scale of this deal, consider the trajectory. In March 2025, OpenAI raised $40 billion at a $300 billion valuation, at the time the largest private funding round in history. Less than a year later, the company has nearly tripled the capital raised and more than doubled its valuation. At $730 billion, OpenAI is now valued above all but six publicly traded companies globally, surpassing Walmart, JPMorgan Chase, and Samsung by market capitalization.

The acceleration reflects both the AI market's explosive growth and the existential competition between three ecosystems. OpenAI dominates consumer AI, with ChatGPT reaching 700 million weekly users and 20 million paying subscribers. Google is aggressively catching up with Gemini 3.1 Pro and 750 million monthly active users (a monthly count, so not directly comparable with ChatGPT's weekly figure). Anthropic has carved out the enterprise niche, valued at approximately $380 billion and growing fast with corporate customers who prioritize safety and reliability.

But the most important context is the infrastructure arms race. Training frontier AI models now costs billions of dollars in compute. Running them at scale costs even more. OpenAI's inference costs alone are projected to hit $14.1 billion in 2026. The company that secures the most GPU capacity and the cheapest power wins the next generation of AI. That is exactly what this deal is designed to do.

Deep Analysis

Breaking Down the $110 Billion: Who Paid What and Why

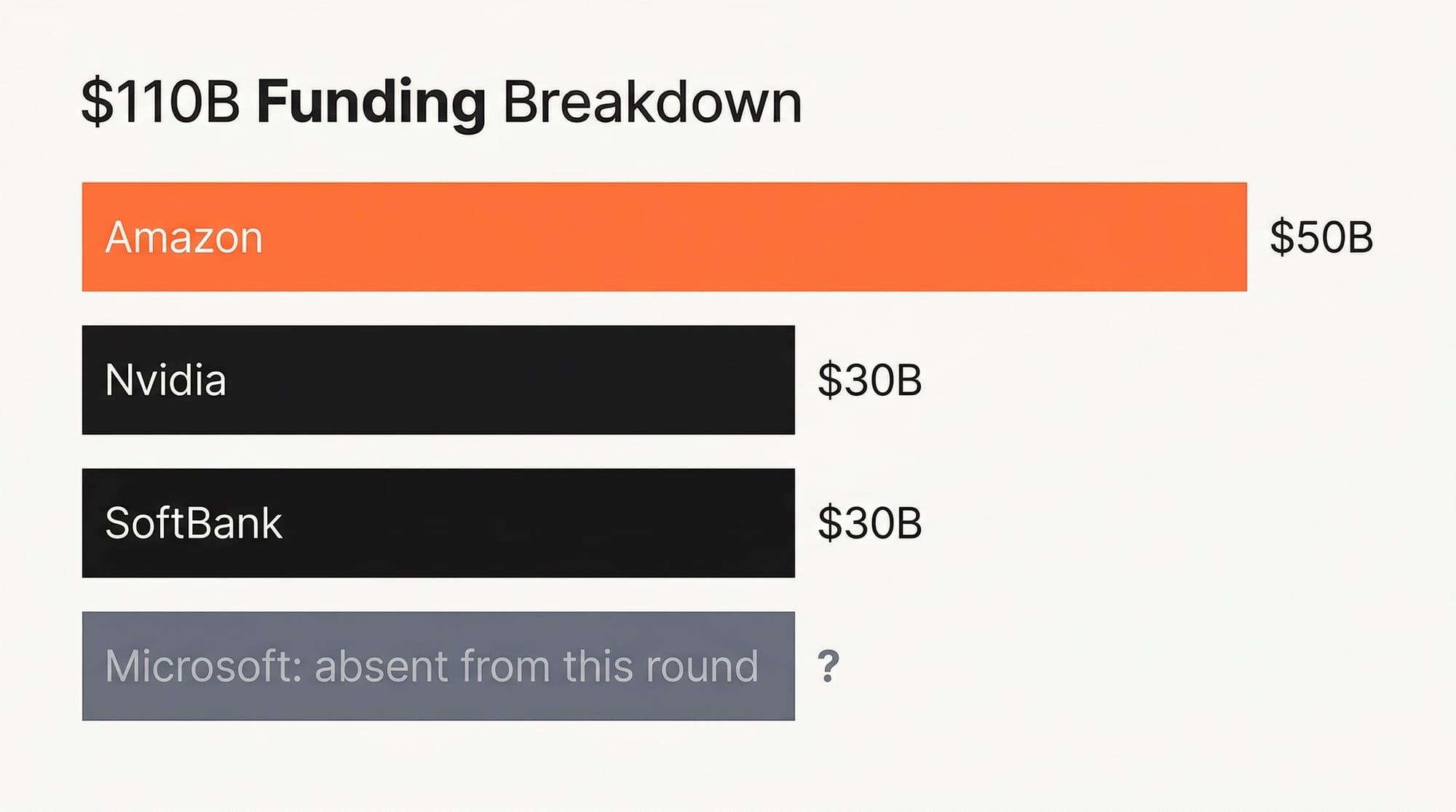

The investor lineup is not random. Each participant brings strategic value far beyond capital.

Amazon ($50 billion, lead investor): Amazon's investment comes in two tranches. The first $15 billion is immediate. The remaining $35 billion is conditional on OpenAI meeting specific milestones, likely related to the Frontier platform launch and revenue targets. Amazon previously invested heavily in Anthropic ($8 billion), making this deal a hedge. Amazon now has deep financial relationships with the two leading AI companies, ensuring that regardless of which model architecture wins, AWS remains the infrastructure layer.

Nvidia ($30 billion): Nvidia's investment is as much about securing a customer as backing a winner. OpenAI is one of the world's largest GPU buyers. The deal locks in 3 gigawatts of dedicated inference capacity and 2 gigawatts of training compute on Nvidia's next-generation Vera Rubin systems. For perspective, 5 gigawatts is roughly the output of five large nuclear reactors, all of it dedicated to running AI models. By investing $30 billion, Nvidia guarantees a massive hardware order while gaining insight into how the world's most advanced models are being built.

SoftBank ($30 billion): SoftBank's Masayoshi Son has bet his entire legacy on AI being the next platform shift. SoftBank previously invested $40 billion in OpenAI's prior round, with half conditional on OpenAI completing its for-profit conversion. SoftBank is also the lead investor in Stargate, the planned $500 billion AI infrastructure joint venture. Son is effectively building the picks-and-shovels layer for the entire AI economy.

Microsoft (absent from this round): The most conspicuous detail is Microsoft's absence. Microsoft has invested over $13 billion in OpenAI previously and built its Copilot product line on OpenAI models. Both companies issued a statement saying their partnership remains unchanged, but the optics are unavoidable. Amazon is now OpenAI's largest single-round investor, and the partnership explicitly makes AWS the exclusive third-party cloud distributor for OpenAI's enterprise product. Microsoft retains an option to participate later, but the power dynamics have visibly shifted.

The Amazon Partnership: What "Exclusive Cloud Distribution" Actually Means

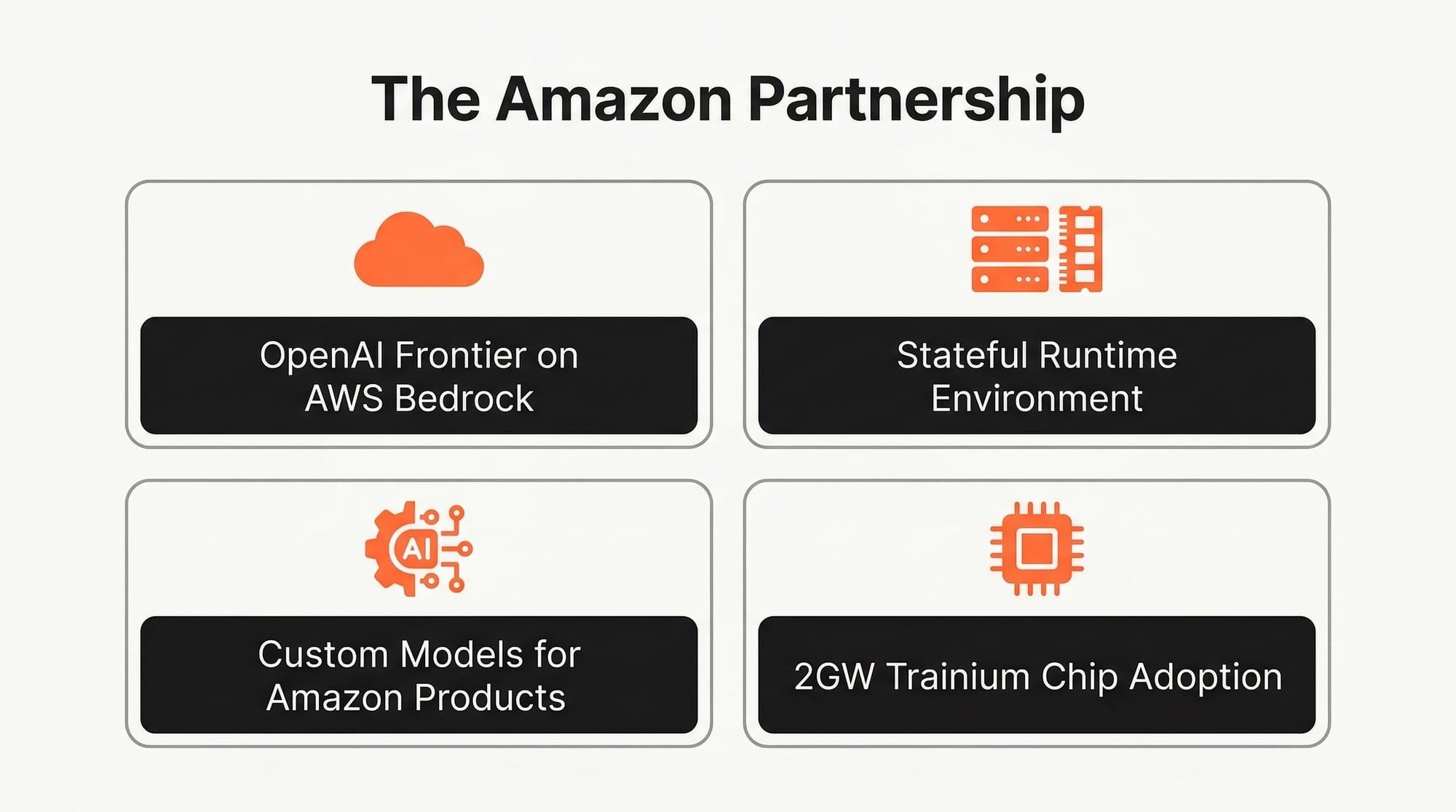

The funding is the headline. The partnership is the story. Amazon and OpenAI announced a multi-year strategic agreement with four key components that will reshape how AI tools reach enterprise customers.

1. OpenAI Frontier on AWS Bedrock: AWS becomes the exclusive third-party cloud provider for OpenAI Frontier, a new platform that lets organizations build, deploy, and manage teams of AI agents. If you are a company that wants to deploy OpenAI's agent capabilities at scale and you are not building directly on OpenAI's own infrastructure, you go through AWS. Period. This is a massive distribution advantage, given that AWS holds approximately 31% of the global cloud market.

2. Stateful Runtime Environment: OpenAI and Amazon are co-building a Stateful Runtime Environment on Bedrock. This is the technical foundation that makes AI agents practical for enterprise use. "Stateful" means the agent maintains context across sessions. It remembers what it did yesterday. It can pick up a project where it left off. It can coordinate across multiple software tools and data sources. For creators building AI-powered workflows, this is the difference between a chatbot and an actual digital coworker.

3. Custom Models for Amazon Products: OpenAI will develop customized models to power Amazon's customer-facing applications. While specifics have not been disclosed, reporting from CNBC indicates this could include integrating OpenAI models into Alexa, Amazon's shopping experience, and AWS enterprise tools. If OpenAI models start powering product recommendations and customer service across Amazon's ecosystem, the reach is staggering: Amazon has 310 million active customer accounts.

4. Trainium Chip Adoption: OpenAI will consume 2 gigawatts of Amazon's custom Trainium chip capacity. This validates Amazon's custom silicon strategy and reduces OpenAI's dependence on Nvidia GPUs for inference. For the broader AI ecosystem, this signals that the hardware monoculture is ending. Custom chips optimized for specific workloads will increasingly compete with Nvidia's general-purpose GPUs, which should drive inference costs down across the board.
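The "stateful" idea in point 2 is easier to see in code. The following is a minimal Python sketch, not the Bedrock Stateful Runtime API, whose interface the announcement does not detail: the `AgentState` and `AgentSession` classes and the JSON file they write are hypothetical. State saved at the end of one session is reloaded at the start of the next, so the agent can resume a plan where it left off.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path
from typing import Dict, List, Optional


@dataclass
class AgentState:
    """Hypothetical memory an agent carries from one session to the next."""
    project: str
    completed_steps: List[str] = field(default_factory=list)
    notes: Dict[str, str] = field(default_factory=dict)


class AgentSession:
    """Illustrative stateful session (hypothetical, not a real Bedrock API).

    On start it reloads any state saved by an earlier session; on close it
    writes state back, so work done "yesterday" is still known "today".
    """

    def __init__(self, store: Path, project: str) -> None:
        self.store = store
        if store.exists():
            # Resume: rebuild the state a previous session persisted.
            self.state = AgentState(**json.loads(store.read_text()))
        else:
            # First session: start with empty memory.
            self.state = AgentState(project=project)

    def record(self, step: str, note: str = "") -> None:
        """Mark a step as done, optionally keeping a note about it."""
        self.state.completed_steps.append(step)
        if note:
            self.state.notes[step] = note

    def next_step(self, plan: List[str]) -> Optional[str]:
        """Return the first planned step not yet completed, or None if all are done."""
        for step in plan:
            if step not in self.state.completed_steps:
                return step
        return None

    def close(self) -> None:
        """Persist state so the next session can pick up from here."""
        self.store.write_text(json.dumps(asdict(self.state)))


if __name__ == "__main__":
    plan = ["research", "draft", "edit"]
    with tempfile.TemporaryDirectory() as tmp:
        store = Path(tmp) / "agent_state.json"

        monday = AgentSession(store, "weekly-newsletter")
        monday.record("research", "three sources shortlisted")
        monday.close()

        tuesday = AgentSession(store, "weekly-newsletter")  # new session, same store
        print(tuesday.next_step(plan))          # draft
        print(tuesday.state.notes["research"])  # three sources shortlisted
```

A stateless chatbot would start every session at `"research"`; the only difference here is that state survives between sessions.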

Revenue vs. Reality: The Profitability Gap Every Creator Should Understand

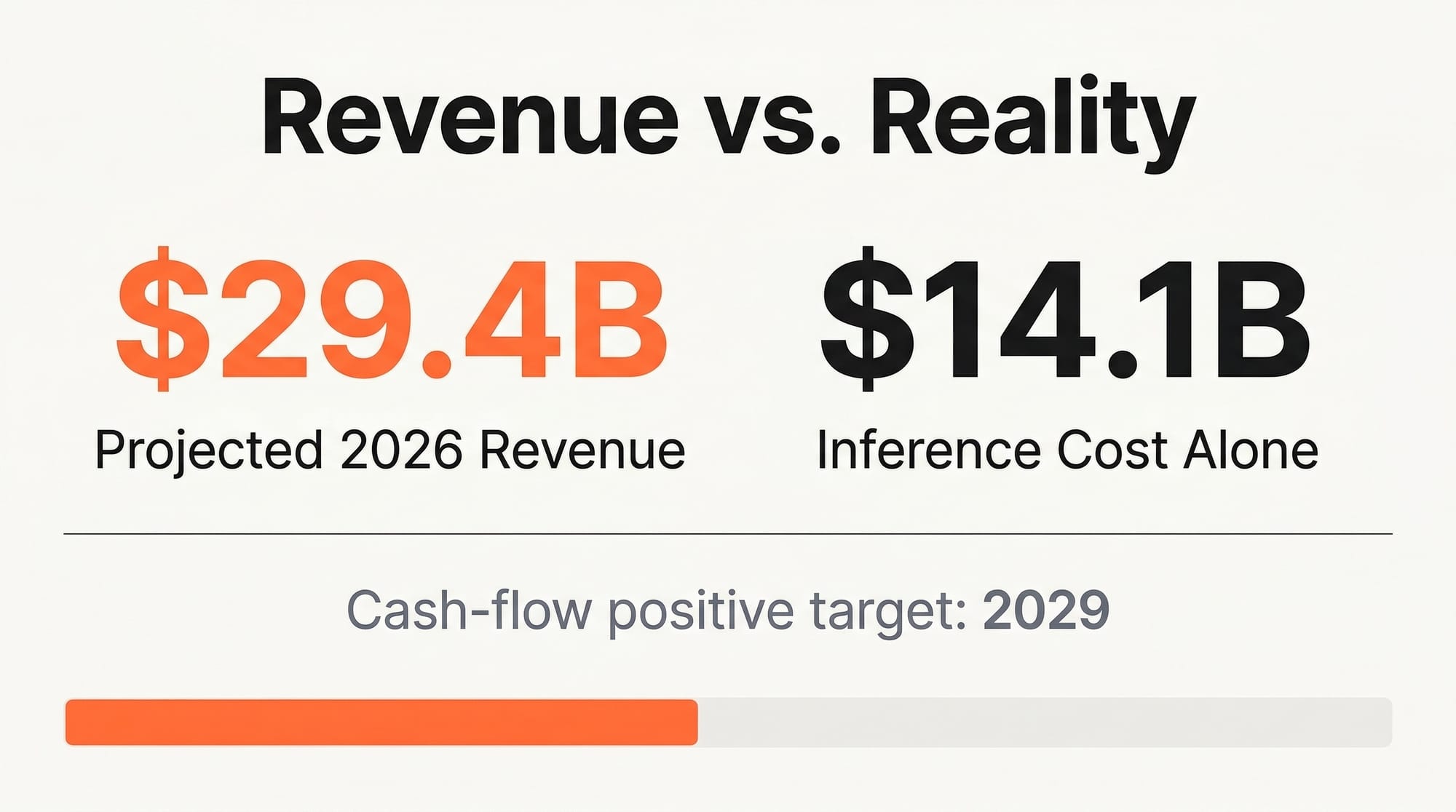

Despite the $730 billion valuation, OpenAI is not profitable. Understanding their financial picture helps you predict where pricing and product strategy will go.

OpenAI projects $29.4 billion in revenue for 2026, up from roughly $20 billion in 2025. That growth rate of approximately 47% year-over-year is impressive but slowing. Revenue comes from three sources: ChatGPT subscriptions ($20/month Plus, $200/month Pro, $8/month Go, and enterprise contracts), API access (pay-per-token for developers), and a nascent advertising business (limited ad testing on free and Go tiers).

On the cost side, inference alone eats $14.1 billion. Research and development, including training the next generation of models (a multibillion-dollar expense in its own right), consumes another large share. Gross margins sit around 40%, well below the 70-80% margins typical of traditional SaaS companies. The company does not expect to be cash-flow positive until 2029.
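Both headline ratios here can be checked from the article's own figures. This short Python sketch recomputes the growth rate from the 2025 and 2026 revenue numbers, then shows what a roughly 40% gross margin means per $100 of revenue next to a typical SaaS business. The 75% SaaS figure is an assumption (the midpoint of the 70-80% range), and the function names are illustrative.

```python
def yoy_growth(previous: float, current: float) -> float:
    """Year-over-year growth as a fraction (0.47 means 47%)."""
    return (current - previous) / previous


def gross_profit(revenue: float, gross_margin: float) -> float:
    """Revenue left after the direct costs of serving customers, at a given gross margin."""
    return revenue * gross_margin


# Revenue figures from the article, in billions of USD.
growth = yoy_growth(previous=20.0, current=29.4)
print(f"2025 -> 2026 revenue growth: {growth:.0%}")  # 47%

# Gross profit per $100 of revenue. 0.75 is the midpoint of the 70-80% SaaS range.
for label, margin in [("OpenAI (~40% margin)", 0.40), ("Typical SaaS (~75% margin)", 0.75)]:
    print(f"{label}: ${gross_profit(100, margin):.0f} per $100 of revenue")
```

At these margins, OpenAI keeps about $40 of every $100 before R&D and other overhead, versus about $75 for a typical software business.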

What does this mean practically? OpenAI is incentivized to grow revenue aggressively, which means more product tiers, more features, and continued API price reductions to capture market share. The $8/month ChatGPT Go tier, the ad-supported free tier, and the enterprise push through AWS are all revenue diversification plays. An IPO targeting up to $1 trillion is reportedly planned for late 2026 or 2027, which will create additional pressure to demonstrate sustainable growth.

The Competitive Landscape After the Deal

This funding creates a three-tier competitive structure in AI:

Tier 1 - The Hyperscalers ($100B+ resources): OpenAI (backed by Amazon, Nvidia, SoftBank), Google DeepMind (backed by Alphabet's $175B+ AI budget), and Microsoft (building its own models alongside OpenAI partnership). These three can afford the compute needed to train and run frontier models.

Tier 2 - The Challengers ($10-50B resources): Anthropic ($380B valuation but smaller resource base), Meta AI (open-source Llama models with Meta's infrastructure), and xAI (Elon Musk's startup with a massive GPU cluster). These compete on architecture innovation and niche excellence.

Tier 3 - The Specialists: Mistral, Cohere, AI21 Labs, and others focusing on specific verticals, languages, or deployment models. Many will be acquired or consolidated.

For creators, this tiered structure is actually beneficial. The giants compete on price and features. The challengers innovate on quality and safety. The specialists build tools optimized for specific creative workflows. More competition at every level means better, cheaper, more diverse AI tools for end users.

Impact on Creators: What Changes for You

If You Build on OpenAI's API

The $110 billion injection means OpenAI can afford to subsidize API pricing to capture market share. Expect continued price reductions throughout 2026. GPT-5.3 Codex pricing is already competitive with Claude and Gemini for most use cases. The AWS Bedrock distribution means you will have more deployment options. If your app is hosted on AWS, latency and integration with OpenAI models should improve once the Frontier platform launches.

Actionable step: If you currently use a single AI provider, start testing your most critical prompts across OpenAI, Claude, and Gemini. Pricing and performance shift quarterly. The creator who builds provider-agnostic workflows will save money and avoid lock-in.
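One way to keep a workflow provider-agnostic is to route every prompt through a single small interface and write a thin adapter per vendor. The Python sketch below assumes that design. `ChatProvider`, `StubProvider`, and `compare` are hypothetical names, and the stubs stand in for adapters that would wrap the OpenAI, Anthropic, and Google SDKs in a real setup.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Protocol


class ChatProvider(Protocol):
    """The only surface the rest of the workflow depends on (hypothetical)."""
    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubProvider:
    """Stand-in adapter. A real one would call a vendor SDK inside complete()."""
    name: str
    reply: Callable[[str], str]

    def complete(self, prompt: str) -> str:
        return self.reply(prompt)


def compare(prompts: List[str], providers: List[ChatProvider],
            score: Callable[[str, str], float]) -> Dict[str, float]:
    """Run the same prompts through every provider; return the mean score per provider."""
    results: Dict[str, float] = {}
    for provider in providers:
        scores = [score(prompt, provider.complete(prompt)) for prompt in prompts]
        results[provider.name] = sum(scores) / len(scores)
    return results


if __name__ == "__main__":
    prompts = ["Write a hook for a video about AI funding", "Summarize this week's AI news"]
    providers = [
        StubProvider("provider_a", lambda p: f"Hook: {p}"),
        StubProvider("provider_b", lambda p: "x" * 500),  # always too long
    ]

    def fits_post(prompt: str, output: str) -> float:
        """Example scoring rule: does the output fit a 280-character social post?"""
        return 1.0 if len(output) <= 280 else 0.0

    print(compare(prompts, providers, fits_post))  # {'provider_a': 1.0, 'provider_b': 0.0}
```

Because `compare` only sees the interface, switching providers when pricing shifts means writing one new adapter, not rewriting the workflow.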

If You Use ChatGPT for Creative Work

The new pricing tiers mean more options. ChatGPT Go at $8/month gives you access to GPT-5.3 capabilities for 40% of the Plus price. The Pro tier at $200/month gives you priority access to the most capable models and higher usage limits. The free tier is fine for occasional use, but it shows ads and has lower rate limits.

Actionable step: Audit your actual ChatGPT usage. If you are on Plus ($20/month) and primarily use it for writing and brainstorming, test whether Go ($8/month) meets your needs. If you run a business on ChatGPT with heavy daily usage, the Pro tier's higher rate limits may save you from hitting caps at critical moments. Track your usage for one week before deciding.
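The one-week audit can be reduced to a simple rule: pick the cheapest plan whose usage limit covers your busiest day. Here is a minimal Python sketch using the article's prices. Real ChatGPT limits vary by model and change over time, so the `daily_cap` values below are made-up placeholders to replace with the limits you actually run into; `Plan` and `cheapest_adequate_plan` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_price: float  # USD, from the article
    daily_cap: int        # HYPOTHETICAL placeholder: replace with limits you observe


def cheapest_adequate_plan(daily_usage: List[int], plans: List[Plan]) -> Optional[Plan]:
    """Cheapest plan whose cap covers the busiest day in the usage log, or None."""
    peak = max(daily_usage)
    adequate = [plan for plan in plans if plan.daily_cap >= peak]
    return min(adequate, key=lambda plan: plan.monthly_price) if adequate else None


PLANS = [
    Plan("Go", 8.0, daily_cap=40),      # caps are illustrative only
    Plan("Plus", 20.0, daily_cap=150),
    Plan("Pro", 200.0, daily_cap=1000),
]

if __name__ == "__main__":
    week = [12, 30, 25, 8, 45, 20, 5]  # messages sent each day during the audit
    choice = cheapest_adequate_plan(week, PLANS)
    print(choice.name if choice else "No plan covers your peak day")  # Plus

    go, plus = PLANS[0], PLANS[1]
    print(f"Go costs {go.monthly_price / plus.monthly_price:.0%} of Plus")  # 40%
    print(f"Switching saves ${(plus.monthly_price - go.monthly_price) * 12:.0f}/year")  # $144
```

Sizing by the peak day rather than the average reflects the article's point: the cost of hitting a cap at a critical moment is what you are insuring against.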

If You Create Video, Audio, or Visual Content

The Frontier platform's agent capabilities will eventually reach creative tools. Imagine deploying an AI agent team where one agent handles research, another writes a first draft, a third generates thumbnail options, and a fourth schedules distribution across platforms. This is the direction OpenAI is heading with the Stateful Runtime Environment. The timeline is unclear, but the infrastructure investment suggests this will be accessible to individual creators, not just enterprises, within 12-18 months.

Actionable step: Start documenting your creative workflow as a series of discrete steps. The creators who can clearly define their process (research, write, edit, design, publish, distribute) will be the first to effectively delegate parts of that process to AI agents when the tools mature.
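Documenting a workflow as discrete steps maps naturally onto a simple data structure: an ordered list of steps, each with an owner and a function that turns the previous step's output into its own. The Python sketch below is a hypothetical illustration of that idea, using the steps named above with placeholder functions where real tools or agents would go. It is not based on any Frontier API. Changing a step's owner from "human" to "agent" is the kind of delegation the article anticipates.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Step:
    name: str
    owner: str                 # "human" or "agent": who does this step today
    run: Callable[[str], str]  # placeholder: previous output in, this step's output out


def run_workflow(steps: List[Step], brief: str) -> Tuple[str, List[str]]:
    """Run steps in order, passing each output to the next; return the result and a log."""
    artifact, log = brief, []
    for step in steps:
        artifact = step.run(artifact)
        log.append(f"{step.name} ({step.owner})")
    return artifact, log


def delegated(steps: List[Step]) -> List[str]:
    """Names of the steps currently handed to an agent."""
    return [step.name for step in steps if step.owner == "agent"]


if __name__ == "__main__":
    # Placeholder step functions just tag the artifact so the flow is visible.
    workflow = [
        Step("research", "agent", lambda x: x + " +notes"),
        Step("write", "human", lambda x: x + " +draft"),
        Step("edit", "human", lambda x: x + " +edits"),
        Step("design", "agent", lambda x: x + " +thumbnail"),
        Step("publish", "human", lambda x: x + " +live"),
        Step("distribute", "agent", lambda x: x + " +scheduled"),
    ]
    result, log = run_workflow(workflow, "brief")
    print(result)               # brief +notes +draft +edits +thumbnail +live +scheduled
    print(delegated(workflow))  # ['research', 'design', 'distribute']
```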

If You Are Evaluating AI Tools for Your Business

The competitive pressure between OpenAI, Google, and Anthropic is the best thing that has happened to AI tool buyers. Prices are dropping, features are accelerating, and each platform is differentiating on specific strengths. OpenAI leads on consumer reach and brand recognition. Claude leads on long-context reliability and coding. Gemini leads on multimodal capabilities and Google Workspace integration.

Actionable step: Do not commit to annual enterprise contracts with any single AI provider right now. The market is moving too fast. Monthly or quarterly commitments give you the flexibility to switch as capabilities and pricing evolve. Lock in only when a provider offers a discount significant enough to justify the reduced flexibility.
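"Significant enough" can be given a number. Assume a prepaid annual term that cannot be cancelled, compared with paying the same list price month to month. The prepaid deal only saves money if you would otherwise stay longer than 12 x (1 - discount) months, and the price itself cancels out. A small Python sketch of that breakeven, with hypothetical function names:

```python
def breakeven_months(discount: float, term_months: int = 12) -> float:
    """Months of use at which a prepaid, discounted term costs the same as paying monthly.

    Prepaid cost:  term_months * price * (1 - discount)
    Monthly cost:  months_used * price
    Setting them equal gives months_used = term_months * (1 - discount).
    """
    if not 0 <= discount < 1:
        raise ValueError("discount must be in [0, 1)")
    return term_months * (1 - discount)


def prepay_is_cheaper(discount: float, expected_months: float, term_months: int = 12) -> bool:
    """True if you expect to stay past the breakeven point before switching providers."""
    return expected_months > breakeven_months(discount, term_months)


if __name__ == "__main__":
    for discount in (0.10, 0.20, 0.40):
        months = breakeven_months(discount)
        print(f"{discount:.0%} discount: worth it only if you stay > {months:.1f} months")
    # A 20% discount breaks even at 9.6 months: if you might switch within about
    # nine months, the flexible monthly plan is cheaper.
    print(prepay_is_cheaper(0.20, expected_months=6))  # False
```

In a market where pricing and performance shift quarterly, a small discount pushes the breakeven close to the full term, which is why flexibility usually wins.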

Key Takeaways

1. OpenAI's $110 billion round is the largest private financing in history. Amazon leads with $50 billion and becomes the exclusive cloud distributor for OpenAI's enterprise agent platform, fundamentally changing AI distribution.

2. The deal secures 5 gigawatts of dedicated compute (3GW Nvidia + 2GW Amazon Trainium), creating an infrastructure moat that smaller competitors cannot easily replicate.

3. Despite $29.4 billion in projected 2026 revenue, OpenAI is not profitable and does not expect to be cash-flow positive until 2029. This means aggressive pricing, new product tiers, and rapid feature releases to grow revenue, all of which benefit creators.

4. The Stateful Runtime Environment on AWS Bedrock is the real product to watch. AI agents that maintain context, coordinate across tools, and handle ongoing workflows are the next major capability shift for creative professionals.

5. Microsoft's absence from this round and Amazon's new role as lead investor signal a power shift. Watch how this affects Copilot pricing, Azure AI features, and whether Microsoft accelerates its own model development in response.

What to Watch in the Next 90 Days

The deal closed on February 27. Here is the timeline to watch:

March-April 2026: Expect OpenAI to announce Frontier platform details and early access programs. The AWS Bedrock integration will likely launch in preview. Watch for API pricing adjustments, potentially a significant cut to undermine competitors and attract enterprise customers to the new platform.

May-June 2026: OpenAI's IPO preparations should become more visible. The company will likely release financial metrics to build investor confidence. This typically means aggressive product launches and revenue acceleration moves, and that competitive pressure is good news for creators.

July-September 2026: The first generation of agent-based creative tools built on Frontier should start appearing. If you want to be an early adopter, start following OpenAI's developer announcements and the AWS Bedrock changelog.

The next 12 months will bring more capable, more affordable AI tools than any previous period in history. The question is not whether AI will transform creative workflows. It already has. The question is which platform wins your loyalty, and whether the winner uses that position to raise prices once the competition thins out. Creators who build provider-agnostic workflows and stay informed about the competitive landscape will be best positioned regardless of which company comes out on top.

Deep dive by Creative AI News.

Subscribe for free to get the weekly digest every Tuesday.