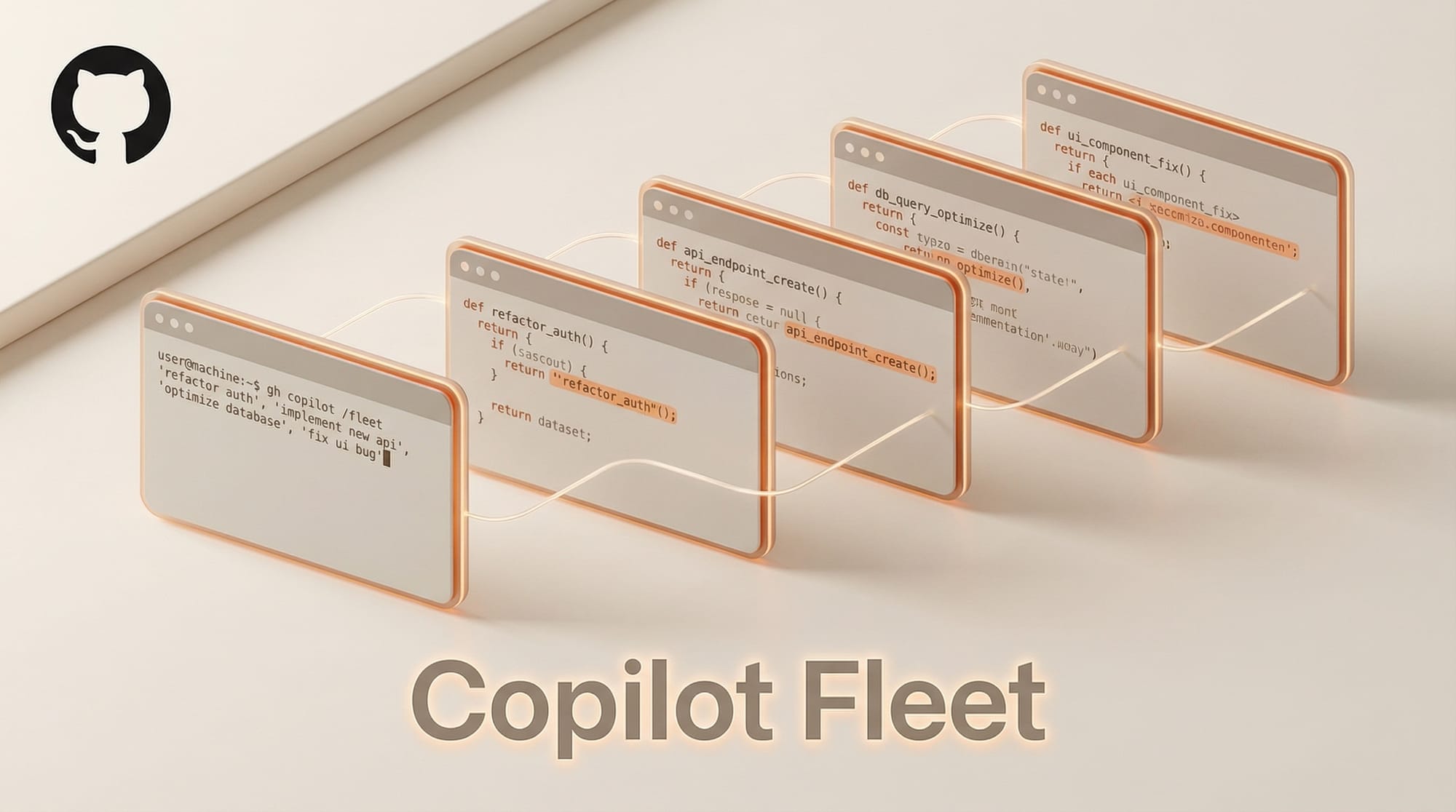

GitHub has launched /fleet, a new command in Copilot CLI that dispatches multiple AI agents to work on different tasks simultaneously. Instead of having a single agent handle requests one at a time, developers can now break an objective into parallel workstreams that run concurrently.

What Happened

The /fleet feature introduces an orchestrator that analyzes a developer's objective and splits it into discrete work items. It identifies which tasks can run in parallel versus which have dependencies, then dispatches independent items as background sub-agents. The orchestrator polls for completion, dispatches subsequent waves when dependencies resolve, and synthesizes final artifacts.
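
The orchestration loop described above can be sketched as dependency-aware scheduling over a task graph. This is a minimal illustration under stated assumptions, not GitHub's implementation. `run_fleet` and `run_item` are hypothetical names, each "sub-agent" is just a function running in a thread pool, and the work items and their dependencies are assumed to be given up front rather than derived from an objective by a model. The sketch also dispatches an item as soon as its own dependencies finish instead of waiting for a whole wave to complete; the announcement does not say which policy /fleet uses.

```python
from concurrent.futures import FIRST_COMPLETED, Future, ThreadPoolExecutor, wait
from typing import Callable


def run_fleet(
    deps: dict[str, set[str]],
    run_item: Callable[[str], str],
    max_workers: int = 4,
) -> dict[str, str]:
    """Run work items concurrently while respecting their dependencies.

    deps maps each (hypothetical) work item to the items it depends on;
    run_item is a hypothetical stand-in for dispatching one sub-agent.
    """
    # Reject dependencies on items that were never declared.
    unknown = {d for ds in deps.values() for d in ds} - set(deps)
    if unknown:
        raise ValueError(f"unknown dependencies: {sorted(unknown)}")

    results: dict[str, str] = {}
    pending = dict(deps)  # items not yet dispatched
    running: dict[Future, str] = {}  # in-flight sub-agent -> its work item
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while pending or running:
            # Dispatch every pending item whose dependencies have all finished.
            ready = [item for item, ds in pending.items() if ds.issubset(results)]
            for item in ready:
                del pending[item]
                running[pool.submit(run_item, item)] = item
            if not running:
                # Items remain, but none of them can ever become ready.
                raise ValueError(f"dependency cycle among: {sorted(pending)}")
            # Poll: block until at least one in-flight sub-agent completes.
            done, _ = wait(running, return_when=FIRST_COMPLETED)
            for fut in done:
                results[running.pop(fut)] = fut.result()
    return results
```

For a scaffolding objective, `run_fleet({"backend": set(), "frontend": set(), "tests": {"backend", "frontend"}}, lambda item: f"{item} done")` would run the backend and frontend items concurrently and dispatch the test item only after both return. Synthesizing the returned results into final artifacts is left to the caller here.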

Agents dispatched by /fleet can use any supported model. If no model is specified, they use Copilot's current default model. Custom agents can pin specific models in their configuration files.
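
The model-selection rules above can be expressed as a small resolution function. This is a sketch, not Copilot's configuration format: `AgentConfig`, `pinned_model`, `resolve_model`, and the model name strings are all hypothetical. The announcement also does not say whether a model pinned in a custom agent's config overrides a model requested at dispatch time; the code assumes it does.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder, not a real model identifier.
COPILOT_DEFAULT = "copilot-default-model"


@dataclass
class AgentConfig:
    name: str
    # Hypothetical field standing in for a model pinned in a custom agent's config file.
    pinned_model: Optional[str] = None


def resolve_model(
    agent: AgentConfig,
    requested: Optional[str] = None,
    default: str = COPILOT_DEFAULT,
) -> str:
    """Pick the model a dispatched agent runs on."""
    # Assumption: a pinned model takes precedence over a per-request choice.
    if agent.pinned_model:
        return agent.pinned_model
    # Otherwise use a model specified for this dispatch, if any.
    if requested:
        return requested
    # Nothing specified: fall back to the current Copilot default.
    return default
```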

Why It Matters

Sequential agent execution has been a bottleneck in AI-assisted development. A task that requires changes across multiple files, writing tests, and updating documentation forces developers to wait while the agent works through each step in order. Parallel dispatch cuts that wait by running independent workstreams concurrently; steps that depend on earlier results still have to run in sequence.
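
A rough worked example of that trade-off: one agent working sequentially takes the sum of all task durations, while unlimited parallel agents take about as long as the longest chain of dependent tasks (the critical path). The sketch assumes hypothetical task names and durations in minutes, an acyclic dependency graph, and no orchestration overhead, model rate limits, or merge conflicts, so real-world gains would be smaller.

```python
from functools import lru_cache


def sequential_time(durations: dict[str, float]) -> float:
    # A single agent handles every task in turn.
    return sum(durations.values())


def parallel_time(durations: dict[str, float], deps: dict[str, set[str]]) -> float:
    # With unlimited agents and no overhead, a task finishes at its own duration
    # plus the latest finish time among its dependencies.
    # Assumes the dependency graph is acyclic.
    @lru_cache(maxsize=None)
    def finish(task: str) -> float:
        return durations[task] + max((finish(d) for d in deps.get(task, set())), default=0.0)

    # Wall-clock time is the latest finish across all tasks (the critical path).
    return max(finish(t) for t in durations)


# Hypothetical durations in minutes; tests depend on the file edits, docs do not.
durations = {"edit_files": 10.0, "write_tests": 6.0, "update_docs": 4.0}
deps = {"write_tests": {"edit_files"}}

print(sequential_time(durations))      # 20.0
print(parallel_time(durations, deps))  # 16.0
```

Only 4 of the 20 minutes are saved in this example, because writing tests still waits on the file edits; parallel dispatch removes waiting only between tasks that are genuinely independent.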

This positions GitHub Copilot against Cursor and Claude Code, both of which have introduced multi-agent capabilities of their own (Claude Code's include a multi-agent review system). The AI coding tools market is converging on parallel execution as a core feature.

Key Details

- /fleet is available in Copilot CLI, not the VS Code extension

- The orchestrator handles dependency resolution automatically

- Each sub-agent runs as a background process with its own context

- Outputs are verified before synthesis into final deliverables

- No maximum agent count was specified in the announcement
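
The verification step in the list above can be sketched as a gate in front of synthesis. Everything here is an assumption for illustration: `SubAgentResult`, `synthesize`, the `[item]` output layout, and the caller-supplied `verify` check are hypothetical, since the announcement does not say how /fleet verifies outputs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SubAgentResult:
    item: str    # work item the sub-agent was assigned
    output: str  # artifact the sub-agent produced


def synthesize(
    results: list[SubAgentResult],
    verify: Callable[[SubAgentResult], bool],
) -> tuple[str, list[str]]:
    """Merge verified outputs into one deliverable; return rejected items separately."""
    accepted: list[SubAgentResult] = []
    rejected: list[str] = []
    for r in results:
        # Verification gate: only outputs that pass the check reach synthesis.
        if verify(r):
            accepted.append(r)
        else:
            rejected.append(r.item)
    merged = "\n\n".join(f"[{r.item}]\n{r.output}" for r in accepted)
    return merged, rejected
```

Returning the rejected items, rather than silently dropping them, gives an orchestrator something to re-dispatch in a later wave.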

GitHub's Applied Science team described /fleet as part of a broader shift toward agent-driven development, where developers describe outcomes and agents handle implementation details.

What to Do Next

Developers using Copilot CLI can try /fleet immediately. The feature works best with objectives that break naturally into independent tasks, such as scaffolding a project with separate frontend, backend, and test components. For creative AI workflows that span multiple file types or pipeline stages, parallel agents can shorten iteration time, as long as those stages do not depend on one another's output.