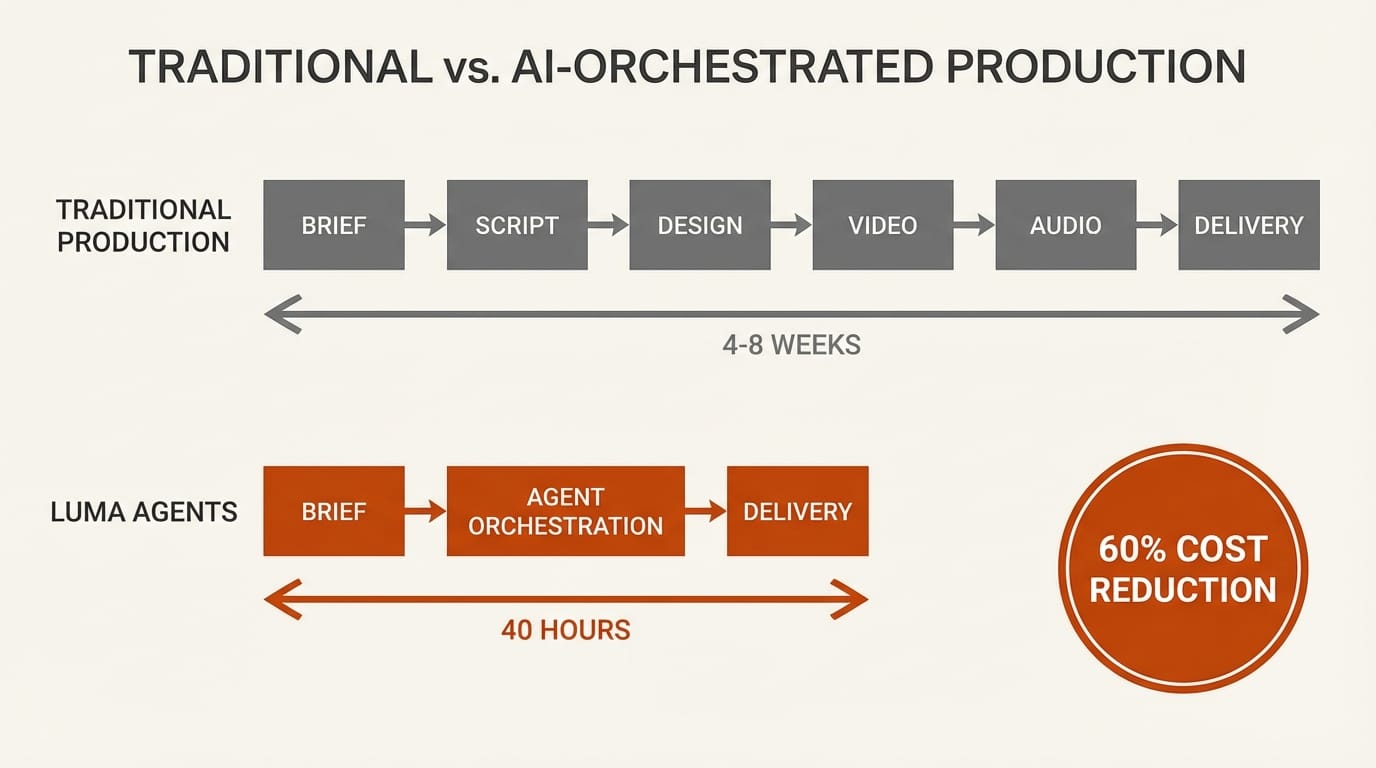

A $15 million, year-long advertising campaign reduced to localized multi-market ads in 40 hours for under $20,000. That is the headline number from Luma's new Creative AI Agents, launched March 5 with enterprise clients already running production workloads. The product is built on Uni-1, the first model in Luma's Unified Intelligence family, and it represents a fundamentally different approach to AI-assisted creative work: instead of one model doing everything, an orchestration layer routes each task to the best available model and manages the entire pipeline from brief to deliverables.

Background

Creative professionals have spent the past two years assembling patchwork AI workflows. Image generation in one tool. Video in another. Copywriting in a third. Each tool operates in isolation, which means manually ensuring visual consistency, tone alignment, and brand compliance across outputs. For agencies managing multi-market campaigns, this fragmentation multiplies with every language and format.

Luma, the San Francisco startup behind the Ray video generation models, raised a $900 million Series C in November 2025 backed by Andreessen Horowitz, AWS, AMD Ventures, Nvidia, and Saudi AI company Humain. That capital funded both Uni-1 and the infrastructure for Project Halo, a 2-gigawatt compute supercluster being built in Saudi Arabia. The agents platform is where that investment meets a product.

The timing aligns with a broader industry shift. Google Flow consolidated its creative AI tools into a single interface. Adobe expanded Firefly across its suite. But these approaches keep the same fundamental model architecture underneath. Luma is betting that orchestrating multiple specialized models will outperform any single model trying to do everything.

Deep Analysis

Uni-1: A New Kind of Creative Model

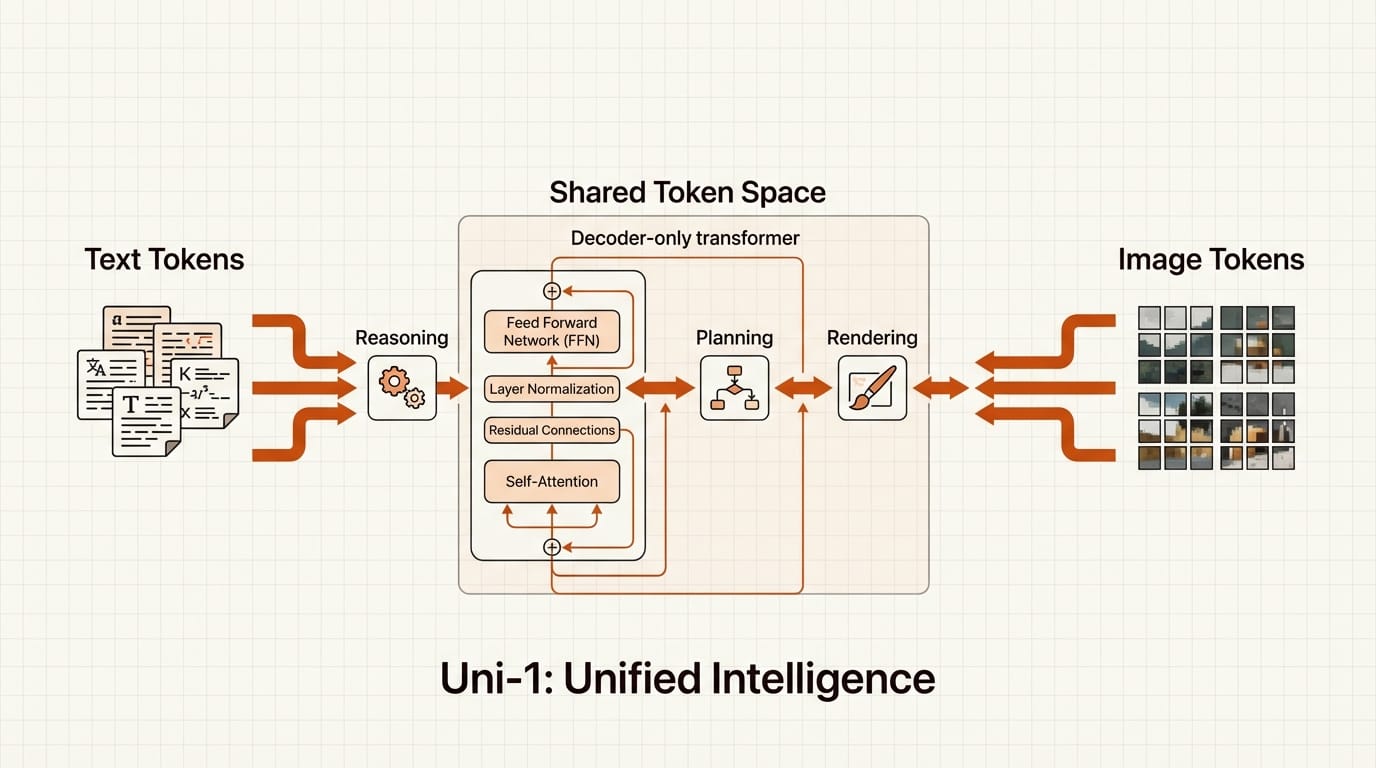

Uni-1 is a decoder-only autoregressive transformer that interleaves language tokens and image tokens in a shared vocabulary. Unlike chained systems where a language model writes a prompt and passes it to a separate image model, Uni-1 reasons and renders within the same forward pass. Text and image patches flow through one continuous sequence, allowing the model to decompose complex instructions, plan compositions, and generate visuals without sequential handoffs between separate systems.
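To make the shared-vocabulary idea concrete, here is a toy sketch of how text tokens and image tokens can sit in one sequence under a causal mask. It is not Luma's implementation: the vocabulary sizes, the id offset scheme, and every function name below are assumptions chosen for illustration.

```python
# Toy illustration only: sizes and ids are made up, not taken from Uni-1.
TEXT_VOCAB_SIZE = 1000      # hypothetical number of text tokens
IMAGE_CODEBOOK_SIZE = 512   # hypothetical number of discrete image codes


def text_id(token_index: int) -> int:
    """Text tokens occupy the low range of the shared vocabulary."""
    assert 0 <= token_index < TEXT_VOCAB_SIZE
    return token_index


def image_id(code_index: int) -> int:
    """Image codes are offset so they never collide with text ids."""
    assert 0 <= code_index < IMAGE_CODEBOOK_SIZE
    return TEXT_VOCAB_SIZE + code_index


def is_image_token(shared_id: int) -> bool:
    """Tell the two modalities apart from the shared id alone."""
    return shared_id >= TEXT_VOCAB_SIZE


def interleave(segments):
    """Flatten ('text' | 'image', [indices]) segments into one id sequence."""
    sequence = []
    for kind, indices in segments:
        to_shared = text_id if kind == "text" else image_id
        sequence.extend(to_shared(i) for i in indices)
    return sequence


def causal_mask(length: int):
    """mask[i][j] is True when position i may attend to position j."""
    return [[j <= i for j in range(length)] for i in range(length)]


segments = [
    ("text", [12, 7, 431]),    # an instruction
    ("image", [5, 300, 44]),   # image patches as discrete codes
    ("text", [9]),             # follow-up text in the same sequence
]
sequence = interleave(segments)
mask = causal_mask(len(sequence))
print(sequence)
```

Because every position attends to all earlier positions regardless of modality, an image token can condition on the instruction text directly, which is the property the article attributes to a single forward pass.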

This architecture produces measurable results. On RISEBench, a benchmark for logic-based image processing, Uni-1 scored 0.51, narrowly beating both Nano Banana 2 and GPT Image 1.5. It also nearly matches Google's Gemini 3 Pro on object recognition tasks. The model supports 76+ art styles, multi-image composition from reference photos, and multilingual prompting. For creative teams, the practical implication is that briefs involving spatial reasoning, multi-element layouts, or brand-specific compositions get handled more reliably than with diffusion-based alternatives.

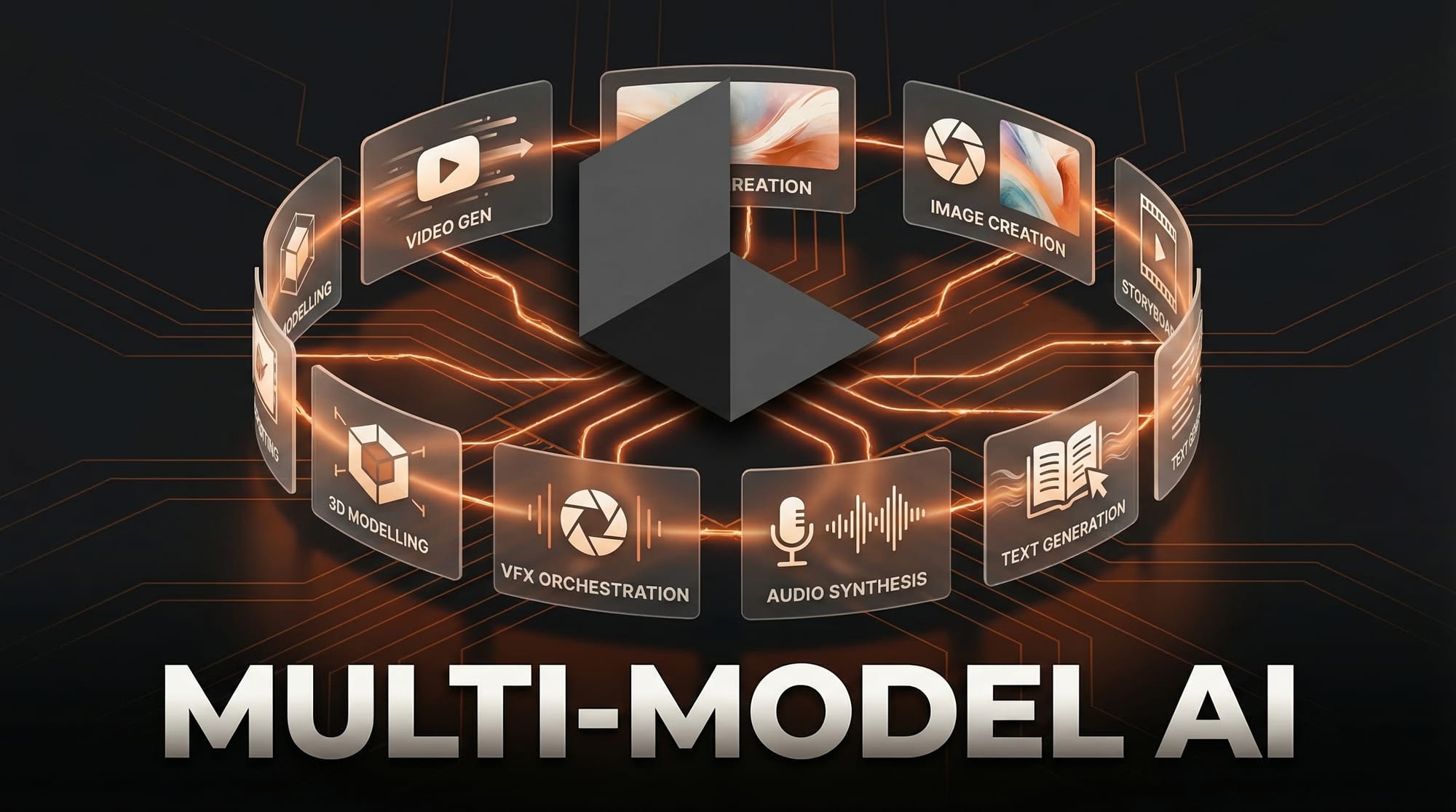

The 8-Model Orchestra

Uni-1 handles reasoning and image generation, but Luma Agents coordinate a roster of eight or more additional models, most of them from other vendors, for the full production pipeline. Ray 3.14, Luma's own video model, handles primary video at native 1080p with 4x generation speed. Google's Veo 3 provides video with native audio. OpenAI's Sora 2 and Kuaishou's Kling 2.6 offer additional video options. ByteDance's Seedream generates storyboards and images. OpenAI's GPT Image 1.5 handles image editing. ElevenLabs synthesizes voice and audio. Google's Nano Banana Pro covers lightweight inference tasks.

The routing is automatic. Feed the agent a creative brief, and it decides which model handles each piece. A campaign that needs product photography, animated explainer video, voiceover, and social copy gets split across models optimized for each format. The agent maintains persistent context throughout, ensuring a consistent visual language and tone across all outputs. It also runs self-critique loops, evaluating outputs against the original brief and regenerating anything that falls short before delivering results.
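The route-then-critique pattern described here can be sketched in a few lines. This is a minimal illustration, not Luma's orchestration code: the routing table keys, the `Result` type, the function names, and the stub generator and critic are all hypothetical, with model roles taken from the roster described in the article.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical routing table. The model roles follow the article's roster;
# the task keys and the table structure are assumptions.
ROUTES = {
    "image": "Uni-1",
    "video": "Ray 3.14",
    "video_with_audio": "Veo 3",
    "storyboard": "Seedream",
    "image_edit": "GPT Image 1.5",
    "voiceover": "ElevenLabs",
}


@dataclass
class Result:
    task: str
    model: str
    output: str
    attempts: int
    accepted: bool


def route(task: str) -> str:
    """Pick the model registered for a task type."""
    if task not in ROUTES:
        raise ValueError(f"no model registered for task {task!r}")
    return ROUTES[task]


def run_with_critique(
    task: str,
    brief: str,
    generate: Callable[[str, str, int], str],
    critique: Callable[[str, str], bool],
    max_attempts: int = 3,
) -> Result:
    """Generate, check against the brief, and regenerate until accepted."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    model = route(task)
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = generate(model, brief, attempt)
        if critique(brief, output):
            return Result(task, model, output, attempt, accepted=True)
    # Out of attempts: return the last draft, flagged as not accepted.
    return Result(task, model, output, max_attempts, accepted=False)


# Stubs standing in for real model calls and a real evaluator.
def fake_generate(model: str, brief: str, attempt: int) -> str:
    return f"{model} draft {attempt} for {brief}"


def fake_critique(brief: str, output: str) -> bool:
    return "draft 2" in output  # pretend only the second draft meets the brief


result = run_with_critique("video", "spring launch", fake_generate, fake_critique)
print(result)
```

Returning the last draft with `accepted=False` rather than raising is a design choice in this sketch: it leaves the decision about an imperfect asset to a human reviewer.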

Developers can tune this through an API parameter that adjusts chain-of-thought depth. Simple tasks get fast, shallow reasoning. Complex multi-market briefs get deeper planning compute. This configurability is what separates an orchestration platform from a chatbot that happens to generate images.

The Enterprise Proof Points

Two of the world's largest advertising networks are already using Luma Agents in production. Publicis Groupe, the world's third-largest ad company, has integrated the agents across strategy, creative development, and production workflows. Serviceplan Group, Europe's largest independent agency network operating in 20+ countries, embedded Luma into what Global CCO Alexander Schill calls their "House of AI ecosystem," directly within creative workflows.

Brands including Adidas, Mazda, and Humain are also active on the platform. The $15 million campaign case study is the most striking data point: a year-long project compressed into 40 hours of agent-assisted production at a fraction of the cost. Even accounting for the human creative direction still required, the reductions in timeline and cost are orders of magnitude, not incremental improvements.
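The "orders of magnitude" framing can be checked with quick arithmetic on the figures quoted in the case study. Two assumptions are baked into this sketch: "under $20,000" is treated as exactly $20,000 (an upper bound, so the real cost ratio is at least this large), and "year-long" is read as calendar hours, which flatters the time comparison because the original campaign was not worked around the clock.

```python
import math

ORIGINAL_COST_USD = 15_000_000
AGENT_COST_USD = 20_000              # "under $20,000", treated as an upper bound
HOURS_PER_CALENDAR_YEAR = 365 * 24   # assumption: "year-long" read as calendar time
AGENT_HOURS = 40


def reduction(before: float, after: float) -> tuple[float, float]:
    """Return (ratio, orders_of_magnitude) for a before/after pair."""
    ratio = before / after
    return ratio, math.log10(ratio)


cost_ratio, cost_orders = reduction(ORIGINAL_COST_USD, AGENT_COST_USD)
time_ratio, time_orders = reduction(HOURS_PER_CALENDAR_YEAR, AGENT_HOURS)

print(f"cost: {cost_ratio:.0f}x lower, about {cost_orders:.1f} orders of magnitude")
print(f"time: {time_ratio:.0f}x shorter, about {time_orders:.1f} orders of magnitude")
```

Under these assumptions the cost falls by a factor of 750 and the elapsed time by a factor of 219, so both reductions land between two and three orders of magnitude.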

Industry data supports the trajectory. The IAB reported in July 2025 that 86% of ad buyers were using or planning to implement generative AI for video ads. AI-generated creative is projected to account for 40% of all advertisements by the end of 2026. Luma is positioning its agents as the infrastructure layer for that shift.

Impact on Creators

The pricing structure determines who can actually use this. At $30 per month for the Plus tier, individual creators get access to the core agent capabilities. The Pro tier at $90 per month offers 4x the usage for freelancers and small teams. Studios and agencies pay $300 per month for Ultra with 15x usage, and enterprise contracts are custom-priced. All tiers include trial credits and offer 20% savings on annual billing.
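A short calculation shows how the tiers compare per unit of usage. This is a sketch under two assumptions that the article does not state outright: the 4x and 15x multiples are measured against the Plus tier, and the 20% annual saving applies to twelve monthly payments. The `Tier` class and its field names are illustrative.

```python
from dataclasses import dataclass

ANNUAL_DISCOUNT_PERCENT = 20  # from the article; assumed to apply to 12 monthly payments


@dataclass(frozen=True)
class Tier:
    name: str
    monthly_usd: int
    usage_multiple: int  # assumption: measured relative to the Plus tier

    @property
    def usd_per_usage_unit(self) -> float:
        """Monthly price divided by the usage multiple."""
        return self.monthly_usd / self.usage_multiple

    @property
    def annual_usd(self) -> float:
        """Twelve months at the discounted annual rate."""
        return self.monthly_usd * 12 * (100 - ANNUAL_DISCOUNT_PERCENT) / 100


TIERS = [Tier("Plus", 30, 1), Tier("Pro", 90, 4), Tier("Ultra", 300, 15)]

for tier in TIERS:
    print(
        f"{tier.name}: ${tier.usd_per_usage_unit:.2f} per usage unit, "
        f"${tier.annual_usd:.0f} per year on annual billing"
    )
```

On these assumptions the effective price per unit of usage drops from $30.00 on Plus to $22.50 on Pro and $20.00 on Ultra, so the higher tiers are a volume discount rather than a different product.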

For freelancers and small studios, the value proposition is clearest on multi-format projects. If a client needs a brand video, matching social assets, and adapted versions for three markets, that workflow currently requires separate tools, manual consistency checks, and hours of context-switching. Luma Agents collapse that into a single brief with persistent context. The agent handles format adaptation, model selection, and quality verification. At $30 to $90 per month, the ROI calculation is straightforward for anyone producing more than a handful of multi-asset projects per month.

For larger agencies, the implications are structural. AI-assisted production is already compressing timelines from weeks to roughly 10 days while cutting costs by up to 60%, according to industry data. Luma Agents push that further by eliminating the integration overhead between different AI tools. When Publicis and Serviceplan adopt a platform at this scale, it signals that multi-model orchestration is moving from experiment to standard operating procedure.

Key Takeaways

1. Luma Agents are the first production-ready AI platform that orchestrates 8+ specialized models from a single creative brief, with major agencies already running client work on it.

2. Uni-1's shared-token architecture for language and image generation narrowly outperforms Nano Banana 2 and GPT Image 1.5 on RISEBench, a logic-based image benchmark, which points to more reliable handling of complex creative briefs.

3. Pricing from $30 to $300 per month makes multi-model orchestration accessible to individual creators, not just enterprise budgets.

4. The $15M-to-$20K case study and the IAB finding that 86% of ad buyers use or plan to use generative AI for video ads suggest AI-orchestrated creative production is approaching an inflection point rather than shifting gradually.

What to Watch

The API-first rollout means third-party integrations will define how most creators interact with Luma Agents. Watch for plugins in existing tools like Figma, Premiere Pro, and project management platforms. If Luma becomes the orchestration layer that other creative tools plug into, it could become infrastructure rather than a destination app.

The competitive response matters too. Google, Adobe, and OpenAI all have the models and the distribution to build competing orchestration layers. Whether Luma's first-mover advantage in multi-model creative agents holds depends on execution speed and the depth of its enterprise relationships. Project Halo's Saudi compute supercluster will also be worth watching. If it delivers the promised scale, Luma's cost advantages on inference could widen the gap with competitors relying on more expensive cloud infrastructure.

Deep dive by Creative AI News.
